How AI Is Transforming Data Engineering in 2026

By 2026, 80% of data quality tools will use AI. Discover how autonomous pipelines, GenAI, and agentic ETL are reshaping the role of the modern data engineer.

Abdul Nazar • May 11, 2026

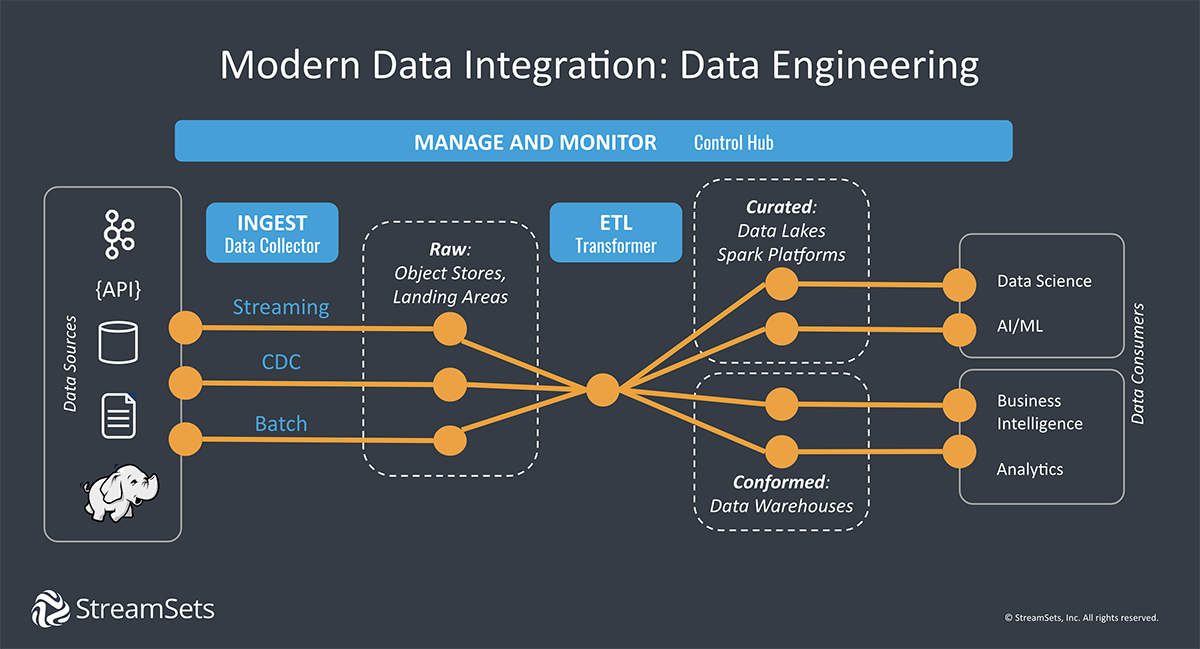

The era of manual, rigid ETL (Extract, Transform, Load) workflows is ending as the industry pivots toward autonomous data engineering. By 2026, 80% of organizations will have deployed data quality solutions that leverage AI and machine learning, fundamentally altering how data flows from source to insight. This shift isn't merely about faster processing; it represents a transition to agentic data pipelines that can observe, reason, and self-correct without human intervention.

How is AI reshaping traditional ETL workflows?

Traditional ETL is evolving from a series of hard-coded instructions into a dynamic, intent-based ecosystem where AI handles the mechanical complexity. While SQL remains the foundation of data analysis, the heavy lifting of extraction and orchestration is increasingly managed by AI agents that understand data semantics rather than just file formats.

In traditional workflows, a change in a source table schema would break downstream pipelines, requiring a data engineer to manually update the code. In 2026, agentic pipelines identify these schema shifts in real time, propose a new mapping based on metadata history, and test the transformation before deploying the fix, often before a human even notices the drift.
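To make the schema-drift step concrete, here is a minimal sketch of the detection half only. It compares an expected schema against an observed one and lists rename candidates for review. The names `SchemaDrift`, `detect_schema_drift`, and `propose_renames` are hypothetical, and the type-matching heuristic is a deliberately simple stand-in for whatever metadata history or model a real agentic pipeline would consult.

```python
from dataclasses import dataclass, field


@dataclass
class SchemaDrift:
    """Differences between an expected and an observed {column: type} schema."""
    added: dict = field(default_factory=dict)    # column -> new type
    removed: dict = field(default_factory=dict)  # column -> old type
    retyped: dict = field(default_factory=dict)  # column -> (old type, new type)

    @property
    def has_drift(self) -> bool:
        return bool(self.added or self.removed or self.retyped)


def detect_schema_drift(expected: dict, observed: dict) -> SchemaDrift:
    """Compare two {column: type} mappings and report what changed."""
    drift = SchemaDrift()
    for column, new_type in observed.items():
        if column not in expected:
            drift.added[column] = new_type
        elif expected[column] != new_type:
            drift.retyped[column] = (expected[column], new_type)
    for column, old_type in expected.items():
        if column not in observed:
            drift.removed[column] = old_type
    return drift


def propose_renames(drift: SchemaDrift) -> dict:
    """Pair each removed column with added columns of the same type.

    This is only a candidate list for review, not a confirmed mapping.
    """
    return {
        old: [new for new, new_type in drift.added.items() if new_type == old_type]
        for old, old_type in drift.removed.items()
    }


# Hypothetical schemas for illustration.
expected = {"order_id": "int", "custid": "int", "amount": "float"}
observed = {"order_id": "int", "clientidentifier": "int", "amount": "str"}

drift = detect_schema_drift(expected, observed)
candidates = propose_renames(drift)
```

Here `candidates` pairs `custid` with `clientidentifier`, while the change to `amount` is reported as a retype rather than silently coerced. Testing and deploying the fix are left out of this sketch.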

What are the core pillars of autonomous data engineering?

The transformation of data engineering spans the entire lifecycle, from the first byte ingested to the final row observed. Modern platforms like Databricks and Snowflake are integrating these capabilities natively into their lakehouse architectures, moving beyond simple automation toward true intelligence.

Intelligent Ingestion and Mapping: AI-driven tools now automatically detect source systems and suggest the most efficient ingestion strategy (batch vs. streaming). Schema mapping, once a tedious manual task, is now handled by LLMs that recognize that "custid" in one system and "clientidentifier" in another refer to the same entity. In a world with thousands of SaaS applications, AI mapping tools reduce the time spent on manual connector configuration by up to 70%.

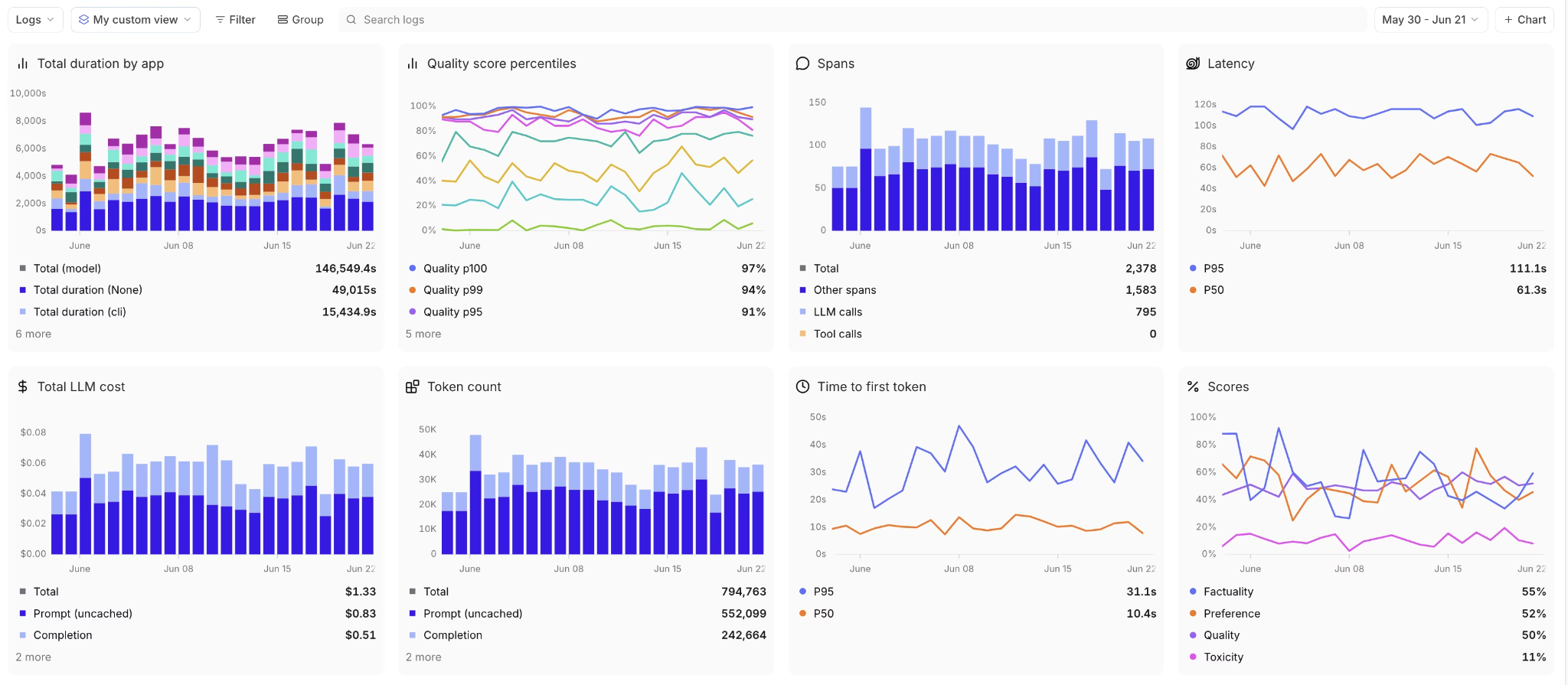

Automated Data Quality and Observability: Data observability has moved from a "nice-to-have" to a tactical necessity. Systems now use ML to establish "normal" data distribution patterns, flagging anomalies—such as a sudden 20% drop in transaction volume—immediately. These tools don't just alert engineers; they perform root-cause analysis by correlating the anomaly with recent upstream code changes or external API downtime.

Dynamic Orchestration: Traditional schedulers (like basic cron jobs) are being replaced by AI orchestrators that optimize resource allocation. These systems predict pipeline runtimes and scale compute clusters up or down based on current data volume and priority. This prevents "runaway spend" by ensuring that heavy transformation jobs run only when resources are cheapest or most available.

Real-Time Metadata Management: Metadata is no longer a static catalog. Active metadata management uses AI to crawl data environments, creating a living graph of data lineage that helps engineers understand the downstream impact of any change. This enables a "shift-left" approach to data quality, where problems are identified at the source before they can pollute downstream analytics.
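The lineage idea in the last pillar can be sketched as a graph walk. The example below assumes lineage is already available as a plain dictionary that maps each dataset to its direct consumers. The dataset names and the `downstream_impact` function are hypothetical, and a real catalog would build this graph by crawling rather than by hand.

```python
from collections import deque


def downstream_impact(lineage: dict, changed: str) -> set:
    """Return every dataset reachable downstream of `changed` (breadth-first)."""
    impacted = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in impacted:
                impacted.add(child)
                queue.append(child)
    return impacted


# Hypothetical lineage graph: dataset -> direct downstream consumers.
lineage = {
    "raw.orders": ["staging.orders"],
    "staging.orders": ["marts.daily_revenue", "marts.customer_ltv"],
    "marts.daily_revenue": ["dashboard.finance"],
    "raw.customers": ["marts.customer_ltv"],
}

impacted = downstream_impact(lineage, "raw.orders")
```

Running this before a change to `raw.orders` lists the staging table, both marts, and the finance dashboard as affected, which is the kind of answer a "shift-left" review needs before anything ships.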

How do traditional and AI-powered pipelines compare?

The gap between legacy ETL and AI-assisted pipelines is best seen through the lens of developer productivity and system resilience. While traditional pipelines were built for predictability, AI-powered pipelines are built for adaptability in high-entropy environments.

| Factor | Traditional ETL (Legacy) | AI-Powered Pipelines (2026) |
|---|---|---|
| Development Cycle | Requires 4-6 weeks for new source integration | Can integrate new sources in <4 hours |
| Code Maintenance | 30-50% of engineer time spent fixing breaks | <10% of time spent on reactive maintenance |
| Data Correction | Manual reprocessing after error detection | Autonomous mid-flight correction and retries |
| User Interface | Drag-and-drop or code-heavy editors | Natural language "Copilot" assistants |
| Resource Efficiency | Static allocation (over-provisioned) | Predictive auto-scaling (just-in-time) |

In practice, a retail organization using traditional ETL might struggle to integrate a new point-of-sale system during a holiday surge, potentially losing 48 hours of sales data. An AI-powered organization, by contrast, leverages agentic pipelines that automatically detect the new API schema, provision a landing zone, and notify the engineer of the successful integration within minutes.

Feature by feature, the same contrast looks like this:

| Feature | Traditional ETL (Legacy) | AI-Powered Pipelines (2026) |
|---|---|---|
| Development | Manual Python/SQL coding | Natural language prompts (GenAI) |
| Schema Changes | Pipelines break; manual repair | Self-healing via intent-based mapping |
| Data Quality | Rule-based (if-then logic) | ML-driven anomaly detection |
| Scaling | Pre-defined cluster sizes | Autonomous auto-scaling based on load |
| Maintenance | Reactive debugging | Proactive root-cause analysis |
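The Data Quality row contrasts fixed rules with learned baselines. As a rough illustration of the second approach, the sketch below flags a value that sits far from its recent history using a z-score. This is a simple statistical stand-in, not a production ML model, and the function name `is_anomalous`, the threshold of 3.0, and the sample volumes are all assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history: list, latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it is more than `threshold` standard deviations
    away from the mean of `history` (needs at least two history points)."""
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # A perfectly flat history: any different value is unusual.
        return latest != baseline
    return abs(latest - baseline) / spread > threshold


# Hypothetical daily transaction counts for the past week.
daily_transactions = [1000, 1020, 980, 1010, 990, 1005, 995]

sudden_drop = is_anomalous(daily_transactions, 800)    # a 20% drop from the mean
normal_day = is_anomalous(daily_transactions, 1015)
```

Unlike a hard-coded rule such as "alert below 900", the baseline here moves with the data, so the same check keeps working as volumes grow. A real observability tool would also account for seasonality and trend, which this sketch ignores.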

Will GenAI and LLMs eliminate the need for data engineers?

Generative AI is not replacing data engineers; it is elevating them from "pipeline plumbers" to data architects and governors. Platforms like IBM's AI assistant allow engineers to build entire pipelines using natural language, significantly reducing the "time to first row." This democratization allows less technical users to perform basic ingestion, but the role of the engineer remains critical for complex logic.

According to industry surveys, data engineers proficient in AI are expected to be in the top 1% of the workforce by 2026. This trend is driven by a massive productivity surge: a 2026 study found that data teams using LLM-assisted development are 3x more productive in delivering complex business logic than teams relying on manual Python scripts.

The role is shifting away from writing boilerplate code and toward:

AI Strategy and Architecture: Designing the "guardrails" within which AI agents operate to ensure security and scalability.

Strategic Quality Control: Designing advanced validation frameworks that verify AI-generated output against business truth.

Human Context Bridging: Translating nuanced business requirements—which AI often fails to capture—into technical specifications for model implementation.

What are the risks and governance concerns?

The move toward autonomous pipelines introduces new challenges that require rigorous oversight. The "black box" nature of some AI-driven transformations can lead to unpredictable data lineage, making it difficult to audit why a specific transformation occurred or how a particular number was calculated.

Governance teams are increasingly focused on sovereign AI and data literacy. As pipelines become more automated, the blast radius of an "automated error" grows with them. If a logic error is introduced by a Generative AI tool, it can propagate through a massive data estate in minutes. This necessitates a "human-in-the-loop" approach for critical financial or personal data pipelines, where AI suggests the change but a certified data steward must approve it.
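One way to picture the human-in-the-loop rule is as a deployment gate. The sketch below is a minimal illustration under assumed names: `ProposedChange`, `can_deploy`, and the `CRITICAL_DOMAINS` labels are hypothetical, and a real system would verify the approver's identity and certification rather than trusting a string.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed classification labels for pipelines that need a human sign-off.
CRITICAL_DOMAINS = {"financial", "personal"}


@dataclass
class ProposedChange:
    """A change suggested by an AI agent, waiting for deployment."""
    pipeline: str
    domain: str
    description: str
    approved_by: Optional[str] = None  # data steward who signed off, if any


def can_deploy(change: ProposedChange) -> bool:
    """Critical-domain changes need an approver; others may deploy directly."""
    if change.domain in CRITICAL_DOMAINS:
        return change.approved_by is not None
    return True


pending = ProposedChange("ledger_sync", "financial", "remap currency column")
approved = ProposedChange("ledger_sync", "financial", "remap currency column",
                          approved_by="steward@example.com")
low_risk = ProposedChange("clickstream_agg", "marketing", "add utm_source column")
```

In this sketch `pending` is blocked, `approved` passes, and `low_risk` deploys without waiting, so the review burden falls only where an error would be most costly.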

Furthermore, Gartner predicts that by 2026, 60% of data engineering failures will be due to "trust gaps"—situations where the business no longer trusts autonomous systems due to a single high-profile hallucination or data leak. Organizations must build robust Trust, Risk, and Security Management (TRiSM) frameworks into their data pipelines to mitigate these threats. This includes automated bias detection in transformations and stringent PII (Personally Identifiable Information) masking that cannot be overridden by an AI agent.
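The requirement that masking cannot be overridden by an agent is largely a design choice: the masking step takes no switch that could turn it off. The sketch below illustrates that idea with hypothetical names (`PII_COLUMNS`, `mask_record`, `write_to_sink`) and a simple email regex. It is not a complete PII detector, and the sink is simulated by returning the record.

```python
import re

# Simple email pattern; a real PII scanner would cover many more formats.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Assumed list of columns that are always treated as PII.
PII_COLUMNS = frozenset({"email", "ssn"})


def mask_record(record: dict) -> dict:
    """Return a copy of `record` with PII columns and inline emails masked."""
    masked = {}
    for key, value in record.items():
        if key in PII_COLUMNS:
            masked[key] = "***"
        elif isinstance(value, str):
            masked[key] = EMAIL_PATTERN.sub("***", value)
        else:
            masked[key] = value
    return masked


def write_to_sink(record: dict, agent_options: dict = None) -> dict:
    """Simulated sink write. Masking is unconditional: nothing in
    `agent_options` is consulted, so an agent cannot disable it."""
    return mask_record(record)


row = {"id": 7, "email": "a@b.com", "note": "contact jane@example.com today"}
written = write_to_sink(row, agent_options={"skip_masking": True})
```

Even with `skip_masking` requested, `written` contains only masked values, because the option is never read on the write path.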

How does AI impact real-time data processing?

The demand for sub-second insights is pushing AI directly into the streaming layer. In 2026, real-time data engineering is characterized by intelligent stream conditioning, where ML models embedded in the pipeline pre-process data as it moves through Kafka or Flink.

Traditional streaming required complex windowing logic and backpressure management. AI-powered stream processors predict congestion before it happens, automatically adjusting the partition strategy or scaling the consumer group. This is particularly vital in industries like High-Frequency Trading (HFT) and cybersecurity, where seconds of delay can result in millions of dollars in losses or data breaches.
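Predicting congestion can be sketched without any streaming library. The example below extrapolates consumer lag linearly from recent samples and derives a consumer count from it. The function names, the per-consumer lag budget, and the sample values are assumptions, and a straight-line forecast is a stand-in for a trained model. The cap at the partition count reflects how Kafka consumer groups work: consumers beyond the number of partitions sit idle.

```python
import math


def forecast_lag(lag_samples: list, steps_ahead: int) -> float:
    """Extrapolate lag using the average change per sample interval."""
    if not lag_samples:
        return 0.0
    if len(lag_samples) < 2:
        return float(lag_samples[-1])
    slope = (lag_samples[-1] - lag_samples[0]) / (len(lag_samples) - 1)
    return max(0.0, lag_samples[-1] + slope * steps_ahead)


def recommended_consumers(current: int, predicted_lag: float,
                          max_lag_per_consumer: int, partitions: int) -> int:
    """Scale up to absorb the predicted lag, never beyond the partition count.

    This sketch only scales up; scale-down policy is out of scope.
    """
    needed = math.ceil(predicted_lag / max_lag_per_consumer)
    return min(max(current, needed), partitions)


# Hypothetical lag readings (messages behind), one per monitoring interval.
lag_samples = [1000, 1500, 2000, 2500]

predicted = forecast_lag(lag_samples, steps_ahead=3)
target = recommended_consumers(current=2, predicted_lag=predicted,
                               max_lag_per_consumer=1000, partitions=12)
```

With lag growing by 500 messages per interval, the forecast three intervals out is 4000, so the sketch recommends growing the group from 2 to 4 consumers before the backlog actually arrives.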

What new job roles are emerging in the AI-data era?

As the "Data Engineer" title becomes broader, specialized sub-roles are beginning to dominate the hiring landscape. The transition toward autonomous systems has created a need for professionals who can oversee the machines that build the pipelines.

Data AI Architect: Responsible for the infrastructure that supports both traditional analytics and Generative AI applications like RAG (Retrieval-Augmented Generation).

Pipeline Auditor: A governance-focused role that ensures AI-generated data flows comply with GDPR, CCPA, and internal ethics policies.

Data Product Manager: A bridge role that manages data as a product, focusing on the "usability" and "trustworthiness" of data for end-users rather than just the underlying code.

LLM Ops Engineer (Data focused): Specialized engineers who focus on the data pipelines needed to fine-tune and serve large language models at scale.

These roles represent the next evolution of the Data Engineering workforce, where technical proficiency is balanced with domain expertise and ethical considerations. The 2030 data professional will be less of a coder and more of a "conductor," managing an orchestra of AI agents to deliver value to the business.

What do the next 5 years look like for data engineering?

By 2030, we expect the concept of "manual ETL" to exist only in legacy maintenance. The industry is moving toward an autonomous data fabric, where data flows are as ubiquitous and self-managing as electricity in a smart grid.

The winner in this new era will be the organization that treats data as a governed product. Investing in AI fluency is no longer optional; it is the primary differentiator for both companies and individual practitioners. The future of data engineering is one where technology handles the how, allowing humans to focus entirely on the why.

Frequently Asked Questions

Can AI-powered ETL tools handle legacy on-premise data?

Yes, modern platforms like Informatica Intelligent Data Management Cloud and AWS Glue are designed for hybrid environments. They use AI agents to bridge the gap between legacy databases and modern cloud warehouses.

How does natural language pipeline development actually work?

Users provide a prompt like "Ingest transaction data from Stripe, join it with Customer records in Snowflake, and flag any duplicate IDs." The NL2SQL engine generates the underlying SQL and orchestration logic, which the engineer then reviews and approves.
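For a sense of what the engineer actually reviews, the sketch below shows SQL of the kind such a prompt might produce, run against an in-memory SQLite database so it can be tested on sample rows. Everything here is illustrative: SQLite stands in for both Stripe and Snowflake, the table and column names are hypothetical, and the query is hand-written rather than the output of any particular NL2SQL engine.

```python
import sqlite3

# Hypothetical SQL for: join transactions with customers, flag duplicate IDs.
GENERATED_SQL = """
SELECT t.transaction_id,
       c.name,
       t.transaction_id IN (
           SELECT transaction_id
           FROM transactions
           GROUP BY transaction_id
           HAVING COUNT(*) > 1
       ) AS is_duplicate
FROM transactions AS t
JOIN customers AS c ON c.customer_id = t.customer_id
ORDER BY t.rowid
"""

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE transactions (transaction_id TEXT, customer_id INTEGER, amount REAL)"
)
conn.execute("CREATE TABLE customers (customer_id INTEGER, name TEXT)")

# Small sample with one deliberately duplicated transaction ID.
conn.executemany(
    "INSERT INTO transactions VALUES (?, ?, ?)",
    [("tx_1", 1, 20.0), ("tx_2", 2, 35.5), ("tx_1", 1, 20.0)],
)
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "Ada"), (2, "Grace")],
)

rows = conn.execute(GENERATED_SQL).fetchall()
conn.close()
```

Running generated SQL against a small fixture with a known duplicate is one practical way to do the review step: both `tx_1` rows come back flagged and `tx_2` does not, which confirms the logic before it touches production data.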

What is the most important skill for a data engineer in 2026?

Beyond SQL and Python, the most valuable skill is AI Prompt Engineering for Data. Knowing how to guide LLMs toward accurate transformations, and how to troubleshoot AI-generated code, is becoming the new industry standard.