Importance of AI Agents in Software Development (2026 Guide)

AI agents are driving 35% productivity gains in 2026. Discover how autonomous orchestration and Model Context Protocol are revolutionizing the modern SDLC.

Arjunan Subramani • May 8, 2026

The fundamental shift in software engineering for 2026 is the transition from generative assistants to autonomous agents. While early tools like GitHub Copilot acted as "autocomplete for code," modern AI agents now function as junior-to-mid-level engineers capable of planning, executing, and verifying complex tasks. According to the 2026 Global Software Industry Outlook, AI agents are driving productivity gains of 30% to 35% across the entire software development lifecycle (SDLC), moving the human role from manual coder to system orchestrator.

The importance of these agents lies in their ability to bridge the "reasoning-to-execution" gap. Unlike static Large Language Models (LLMs), agents possess tool-use capabilities, long-term memory, and self-correction loops. They don't just suggest code; they run compilers, execute test suites, and read documentation to resolve errors independently. This shift is reflected in the market, with the global AI agents market projected to hit $182.97 billion by 2033, growing at a staggering 49.6% CAGR. For development teams, agents are no longer optional "nice-to-haves" but essential components for maintaining competitive velocity.
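The self-correction loop described above can be sketched in a few lines. This is a minimal illustration, not any specific tool's implementation: `propose_fix` is a hypothetical stand-in for a model call, `fake_model` simulates it, and the one-assertion "test suite" is a toy.

```python
from typing import Callable, Optional

def run_tests(source: str) -> Optional[str]:
    """Run candidate code against a tiny test; return an error message or None."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # "compile and run" the candidate
        assert namespace["add"](2, 3) == 5, "add(2, 3) should equal 5"
    except Exception as exc:  # any failure becomes feedback for the next attempt
        return f"{type(exc).__name__}: {exc}"
    return None

def agent_loop(propose_fix: Callable[[str, Optional[str]], str],
               task: str, max_attempts: int = 3) -> Optional[str]:
    """Propose code, verify it, and feed errors back until the tests pass."""
    error: Optional[str] = None
    for _ in range(max_attempts):
        candidate = propose_fix(task, error)
        error = run_tests(candidate)
        if error is None:
            return candidate
    return None  # give up after max_attempts

# Hypothetical stand-in for a model: the first attempt is buggy,
# the second one reacts to the reported error.
def fake_model(task: str, error: Optional[str]) -> str:
    if error is None:
        return "def add(a, b):\n    return a - b"
    return "def add(a, b):\n    return a + b"

result = agent_loop(fake_model, "implement add")
```

The key design point is that the test output, not the human, is what drives the second attempt; a real agent would pass the error text back into the model prompt at the `propose_fix` step.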

How do AI agents differ from traditional coding assistants?

AI agents are distinguished by their autonomy: they can manage multi-step workflows without constant human prompting. Traditional assistants need a human to request and accept each suggestion, whereas an agent can be assigned an entire GitHub issue (for example, "Refactor this API to use FastAPI"), locate the relevant files, modify them, and submit a pull request for review.

In 2026, the performance of these agents is measured by benchmarks like SWE-bench Verified, which tests their ability to solve real-world GitHub issues. As of May 2026, leading models like Claude Mythos Preview have achieved a 93.9% success rate on these tasks. This represents a leap from the simple code-completion era toward a future where agents handle the "grunt work" of development, including dependency updates, legacy code migration, and cross-file refactoring.

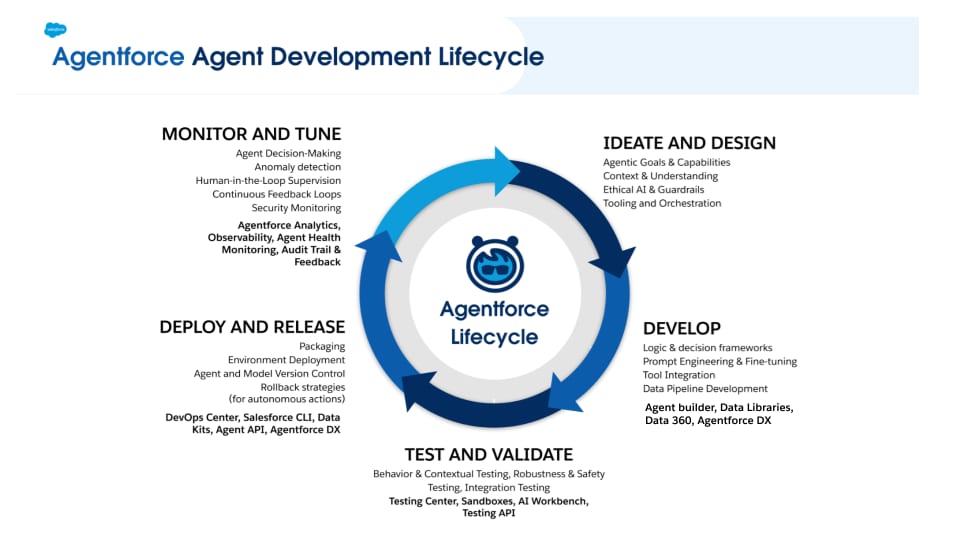

Why is agentic orchestration becoming the new standard?

Orchestration allows specialized agents to work together like a disciplined engineering department, where one agent writes tests while another implements the backend logic and a third checks for security vulnerabilities. This multi-agent paradigm has led to a 1,445% surge in multi-agent system inquiries as organizations realize that single-agent systems often fail on complex, interconnected tasks.
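As a rough sketch of this division of labor, the three roles can be modeled as plain functions that hand a shared work item to one another. Everything here is hypothetical (the `WorkItem` type, the agent functions, and the hard-coded outputs standing in for model calls); it does not reflect the API of any particular orchestration framework.

```python
from dataclasses import dataclass, field
from typing import Callable, List

@dataclass
class WorkItem:
    """Shared state handed from one agent to the next."""
    task: str
    tests: List[str] = field(default_factory=list)
    code: str = ""
    findings: List[str] = field(default_factory=list)

def write_tests(item: WorkItem) -> WorkItem:
    # Stand-in for a test-writing agent.
    item.tests.append("assert slugify('Hello World') == 'hello-world'")
    return item

def implement(item: WorkItem) -> WorkItem:
    # Stand-in for an implementation agent.
    item.code = "def slugify(s):\n    return s.lower().replace(' ', '-')"
    return item

def review_security(item: WorkItem) -> WorkItem:
    # Toy check: flag obviously dangerous calls in the generated code.
    for banned in ("eval(", "exec(", "os.system("):
        if banned in item.code:
            item.findings.append(f"use of {banned.rstrip('(')}")
    return item

def orchestrate(task: str, agents: List[Callable[[WorkItem], WorkItem]]) -> WorkItem:
    """Run each specialized agent in order over the same work item."""
    item = WorkItem(task=task)
    for agent in agents:
        item = agent(item)
    return item

result = orchestrate("add a slugify helper", [write_tests, implement, review_security])
```

A sequential pipeline is the simplest possible topology; graph-based frameworks generalize the `for` loop into conditional edges so that, for instance, a security finding can route the item back to the implementer.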

| Orchestration Layer | Primary Function | Ideal Team Size |
|---|---|---|
| Model Context Protocol (MCP) | Standardizes how agents access files, databases, and local development environments. | Universal standard for all team sizes. |
| LangGraph / CrewAI | Manages stateful, graph-based workflows where agents pass tasks to one another. | Mid-to-large enterprises with complex SDLCs. |
| Agent-to-Agent (A2A) | Direct communication protocols allowing specialized agents to coordinate without a central hub. | Decentralized development teams and open-source projects. |

By 2030, 80% of developers are projected to work with autonomous agents, marking a transition where "vibe coding" (high-level descriptive programming) becomes as valid as manual typing. The value proposition is clear: agents handle the 23-minute sessions of deep technical execution now common in development, freeing humans to focus on high-level architecture and product strategy.

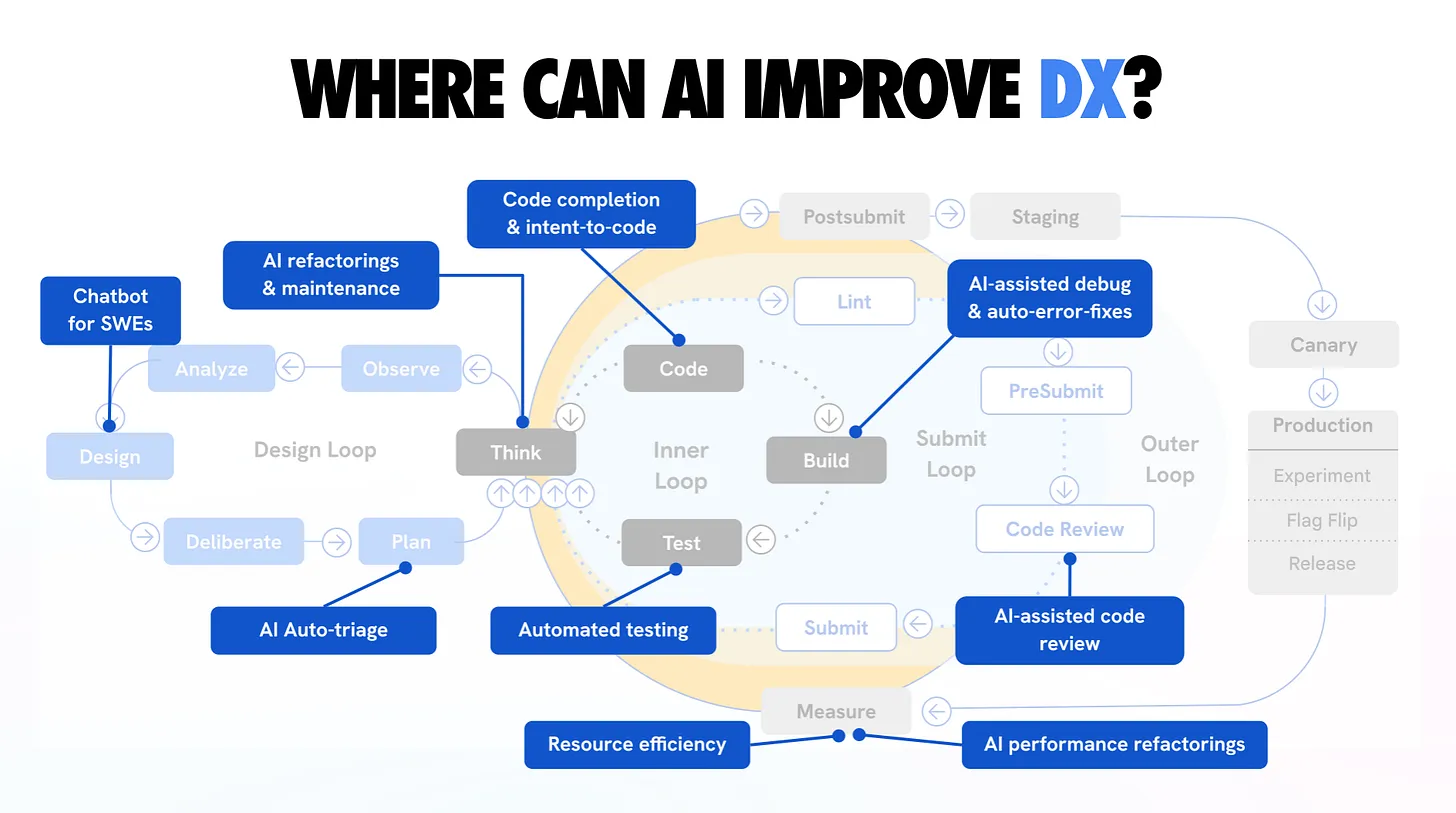

What are the tangible benefits of AI agents in the SDLC?

The most significant impact of AI agents is the compression of the development cycle from days to hours. In 2026, engineering teams are running an average of 12 AI agents per developer to handle specific vertical tasks, achieving a 42% reduction in time-to-market for new features.

Beyond simple speed, these agents drive quantifiable application performance gains. Performance-tuning agents now operate "at the edge" of the SDLC, automatically refactoring inefficient database queries and memory-intensive functions. On average, agent-optimized codebases see a 24% improvement in execution speed compared to manually maintained legacy code.

Automated Bug Resolution: Agents monitor production logs, identify errors, and automatically generate a fix and a regression test. This "active healing" approach reduces Mean Time to Recovery (MTTR) by up to 60%.

Legacy Migration: Tools like Claude Code and GitHub Next agents are capable of reading entire legacy codebases in COBOL or Java 8 and migrating them to modern architectures with human-verified precision.

Continuous Documentation: Documentation is no longer a post-sprint chore. Agents update READMEs, API docs, and architecture diagrams in real-time as the code evolves, ensuring the "source of truth" is never stale.

While the benefits are immense, the risks remain real. AI-generated code currently has 2.74x more vulnerabilities than human-written code if left unmonitored. This has necessitated the rise of "security agents" that act as automated guardians, scanning every agent-written line for CVEs before it enters the production branch.

Beyond Efficiency: The Psychological Impact of Agentic Tools

While productivity numbers capture the executive value, the importance of AI agents in 2026 also extends to developer well-being and job satisfaction. Senior engineers who delegate monotonous tasks like unit test generation and boilerplate configuration to agents see their cognitive load reduced by an estimated 25%.

This shift addresses the "burnout epidemic" in tech by allowing developers to stay in a state of "flow" for longer periods. Instead of context-switching to handle a minor dependency bug, a developer can simply dispatch a secondary agent to resolve it in the background. This creates a dual-track development process: the human iterates on the product's value proposition while the agentic fleet maintains the core technical hygiene.

Strategic Resource Reallocation

The adoption of autonomous agents allows organizations to reallocate resources toward innovation over maintenance. Traditionally, up to 70% of IT budgets are spent on "keeping the lights on." AI agents aim to flip this ratio by automating legacy debt management and infrastructure scaling.

In a 2026 pilot study, engineering teams using agentic orchestration were able to launch new features 42% faster than teams relying solely on standard AI assistants. This speed is not merely about typing faster; it is about the agent’s ability to perform autonomous research, such as evaluating three different third-party libraries for a feature and presenting a recommendation with a proof-of-concept already built.

The Rise of "Agent-Ops"

To support this shift, a new discipline called Agent-Ops has emerged. It focuses on the observability, reliability, and security of the agentic workforce. Companies are now implementing "agent playgrounds" where new models are benchmarked against internal codebases before being given write access to production repositories.
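One way to picture an agent playground is as a gate: the candidate agent runs against an internal benchmark, and write access is granted only above a pass-rate threshold. The sketch below is illustrative only; the `BENCHMARK` tasks, the `candidate_agent` function, and the 0.9 threshold are all assumptions, not figures from any real Agent-Ops product.

```python
from typing import Callable, Dict

# Hypothetical internal benchmark: task name -> checker for the agent's answer.
BENCHMARK: Dict[str, Callable[[str], bool]] = {
    "reverse-string": lambda out: out == "cba",
    "sum-list": lambda out: out == "6",
    "upper-case": lambda out: out == "ABC",
}

def pass_rate(agent: Callable[[str], str]) -> float:
    """Fraction of benchmark tasks the agent answers correctly."""
    passed = sum(1 for task, check in BENCHMARK.items() if check(agent(task)))
    return passed / len(BENCHMARK)

def grant_write_access(agent: Callable[[str], str], threshold: float = 0.9) -> bool:
    """Allow production write access only above the (assumed) threshold."""
    return pass_rate(agent) >= threshold

# Stand-in for a model under evaluation; it gets one task wrong on purpose.
def candidate_agent(task: str) -> str:
    answers = {"reverse-string": "cba", "sum-list": "6", "upper-case": "abc"}
    return answers[task]
```

Here `candidate_agent` solves two of the three tasks, so it stays in the playground under the default threshold.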

As these systems become more sophisticated, the distinction between "software" and "AI" vanishes. We are entering an era where software is no longer a static product but a living system maintained by a collaborative mesh of human intelligence and agentic execution. This evolution ensures that software can scale not just in traffic, but in complexity, without the exponential increase in human headcount that previously limited growth.

How do the top AI agents compare in 2026?

The selection of an AI agent now depends more on operational context than simple prompt capability. Modern benchmarks like SWE-bench Verified provide a standardized look at how these agents resolve real-world GitHub issues autonomously.

| AI Agent | SWE-bench Verified Score | Primary Use Case | Key Differentiator |
|---|---|---|---|
| Claude Code (Opus 4.7) | 88.2% | Complex multi-file refactoring and CLI-heavy workflows. | Native "Agent Teams" feature for spawning sub-agents. |
| GPT-5.5 (Codex) | 84.6% | Enterprise-scale legacy migration and long-term project planning. | Deepest integration with Azure/OpenAI ecosystem. |
| Cursor (Agent Mode) | 81.4% | IDE-native feature development and rapid prototyping. | Zero-latency local context via indexed repositories. |
| OpenCode | 76.9% | Decentralized and privacy-focused open-source projects. | Provider-agnostic engine that runs on local GPUs. |
| Gemini CLI | 74.2% | Research-intensive tasks and massive codebase analysis. | Industry-leading 2M+ token context window. |

How do agents solve the "context window" problem?

Agents solve the context limitation of traditional LLMs by using Model Context Protocol (MCP) and RAG-enhanced memory systems. Instead of a developer having to copy-paste several files into a chat window, an agent can independently "crawl" the repository, identifying only the code blocks relevant to the current task.
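The idea of selecting only the relevant files can be illustrated with a deliberately crude stand-in: ranking files by keyword overlap with the task. Real systems would typically use embeddings or an index instead; the `select_context` function and the in-memory `repo` dictionary below are hypothetical and exist only to show the shape of the retrieval step.

```python
import re
from typing import Dict, List, Set

def tokenize(text: str) -> Set[str]:
    """Lowercase identifier-like tokens; a crude substitute for embeddings."""
    return set(re.findall(r"[a-z_]+", text.lower()))

def select_context(task: str, repo: Dict[str, str], top_k: int = 2) -> List[str]:
    """Return the paths whose contents share the most tokens with the task."""
    query = tokenize(task)
    scored = []
    for path, source in repo.items():
        overlap = len(query & tokenize(source))
        if overlap:  # skip files with nothing in common
            scored.append((overlap, path))
    # Highest overlap first; ties broken alphabetically for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [path for _, path in scored[:top_k]]

# Hypothetical miniature repository held in memory.
repo = {
    "models/user.py": "class User:\n    email: str\n    def validate_email(self): ...",
    "api/routes.py": "def create_user(payload): return User(email=payload['email'])",
    "utils/dates.py": "def parse_date(value): ...",
}
selected = select_context("fix email validation for user signup", repo)
```

Only the two files that mention users and email are selected; the unrelated date utility never enters the model's context, which is the point of retrieval over copy-pasting.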

This deep context awareness allows for multi-file edits, which now characterize 78% of all agentic coding sessions. When a developer asks to "change the database schema," the agent understands that this change requires updates to the ORM models, the migration scripts, the API payloads, and the frontend types simultaneously. This holistic understanding is what transforms a "chatbot" into a "software engineer."
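The schema example above amounts to walking a dependency graph from the changed artifact to everything downstream of it. A minimal sketch, assuming a hand-written `DEPENDENTS` map with hypothetical file names (a real agent would have to infer these edges from imports, code search, or build metadata):

```python
from collections import deque
from typing import Dict, List

# Hypothetical edges: changing a key affects each artifact listed for it.
DEPENDENTS: Dict[str, List[str]] = {
    "db/schema.sql": ["orm/models.py", "migrations/"],
    "orm/models.py": ["api/payloads.py"],
    "api/payloads.py": ["frontend/types.ts"],
    "migrations/": [],
    "frontend/types.ts": [],
}

def affected_by(changed: str, graph: Dict[str, List[str]]) -> List[str]:
    """Breadth-first walk listing every artifact downstream of a change."""
    seen = {changed}
    order: List[str] = []
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for dependent in graph.get(node, []):
            if dependent not in seen:
                seen.add(dependent)
                order.append(dependent)
                queue.append(dependent)
    return order

impact = affected_by("db/schema.sql", DEPENDENTS)
```

With this map, a schema change surfaces the ORM models, migration scripts, API payloads, and frontend types as one edit set, which mirrors the multi-file behavior the paragraph describes.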

What is the future of the "human-agent" collaboration?

The future is one of orchestration over execution. As we move deeper into 2026, 90% of engineers are shifting their focus from writing individual lines of code to designing the agentic workflows that build the software. The human provides the "why" (business requirements, user experience goals) while the agents handle the "how" (implementation details, infrastructure, testing).

This does not mean the end of the software engineer; rather, it elevates the role. A single senior engineer can now manage a "pod" of agents that produce the output of a five-person team. The importance of AI agents lies in this force multiplier effect, allowing small companies to build world-class software at a speed and scale that was previously impossible.

Frequently Asked Questions

Can AI agents completely replace junior developers?

No, but they redefine the role of a junior developer. Instead of writing boilerplate, junior developers in 2026 act as Agent Supervisors, learning to debug agent output and manage documentation. The focus shifts toward understanding system architecture rather than just syntax.

Is agentic AI secure enough for fintech or healthcare apps?

Only when paired with Enterprise Agentic Governance. Leading companies use a "defense in depth" strategy where AI-generated code must pass through three independent security agents and one final human review before deployment.
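A sketch of such a gate, under stated assumptions: the three scanner functions below are toy string checks standing in for independent security agents, and `may_deploy` is a hypothetical policy function, not part of any governance product.

```python
from typing import Callable, List

Scanner = Callable[[str], List[str]]  # each scanner returns a list of findings

def secrets_scanner(code: str) -> List[str]:
    return ["hard-coded credential"] if "PASSWORD =" in code else []

def injection_scanner(code: str) -> List[str]:
    return ["string-built SQL"] if 'execute(f"' in code else []

def unsafe_call_scanner(code: str) -> List[str]:
    return ["use of eval"] if "eval(" in code else []

def may_deploy(code: str, scanners: List[Scanner], human_approved: bool) -> bool:
    """Deploy only if every scanner is clean AND a human has signed off."""
    if len(scanners) < 3:
        raise ValueError("policy requires at least three independent scanners")
    findings = [finding for scan in scanners for finding in scan(code)]
    return not findings and human_approved

SCANNERS = [secrets_scanner, injection_scanner, unsafe_call_scanner]
clean = "def total(xs):\n    return sum(xs)"
risky = "PASSWORD = 'hunter2'\nresult = eval(user_input)"
```

Note that human approval is a separate required input rather than a fourth scanner: clean scans alone never authorize a deployment.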

How do I start using AI agents in my workflow today?

The fastest entry point is through CLI-based agents like Claude Code or IDE-integrated agents like Cursor's Agent Mode. These tools provide immediate access to the "plan-read-write-test" loop directly within your existing codebase.