Architecting AI-Native Data Systems in 2026

Data engineering is the 2026 bottleneck for AI. Learn how to bridge the visibility-understanding gap with AI-native architecture and real-time observability.

Athira Krishnan • May 8, 2026

In 2026, the real challenge for AI isn't the model—it's the data. As a senior data engineer at Experience.com, I've seen that while AI models are getting smarter, the underlying data pipelines are often the weakest link. We've moved past the phase of minor AI experiments and into a period where data engineering is the primary bottleneck for building reliable, autonomous organizations.

The difference between a flashy prototype and a successful product is the engineering rigor applied to the data layer. In 2026, it is no longer enough to just move data from one place to another. We are now building the "nervous system" for companies that rely on real-time information to drive their AI agents.

In this article, I want to share the practical lessons we’ve learned about building systems that actually work in production. We’ll look at why data maturity is the new competitive edge, how modern architecture has evolved, and how to avoid the "visibility gap" that often causes AI projects to fail.

Why is Data Maturity Now More Important Than the AI Itself?

Data maturity is the most important safeguard against operational failure for AI systems. A 2026 Gartner Maturity Report shows that the most successful companies are the ones prioritizing data governance and automated management rather than just buying the latest AI tools.

If your data is messy, your AI will be dangerously wrong. When AI agents make real-time decisions, even a small data error can cascade into a system-wide failure. This helps explain why 80% of CEOs believe that AI will force a total overhaul of how they handle their business operations.

At Experience.com, we’ve found that "good enough" data no longer exists. In the past, a mistake in a data table might just result in a slightly off-center chart on a dashboard. Today, that same mistake could lead to an AI customer support bot promising refunds that don't exist or a logistics agent ordering too much inventory based on corrupted logs. Ensuring data maturity is the only way to protect against these risks.

What Does a Modern AI-Native Data Architecture Look Like?

A modern data pipeline is no longer just a simple "move and store" process; it is a live system that feeds AI agents the context they need to make decisions. The standard ETL (Extract, Transform, Load) model has been replaced by a layered orchestration stack designed for speed and accuracy.

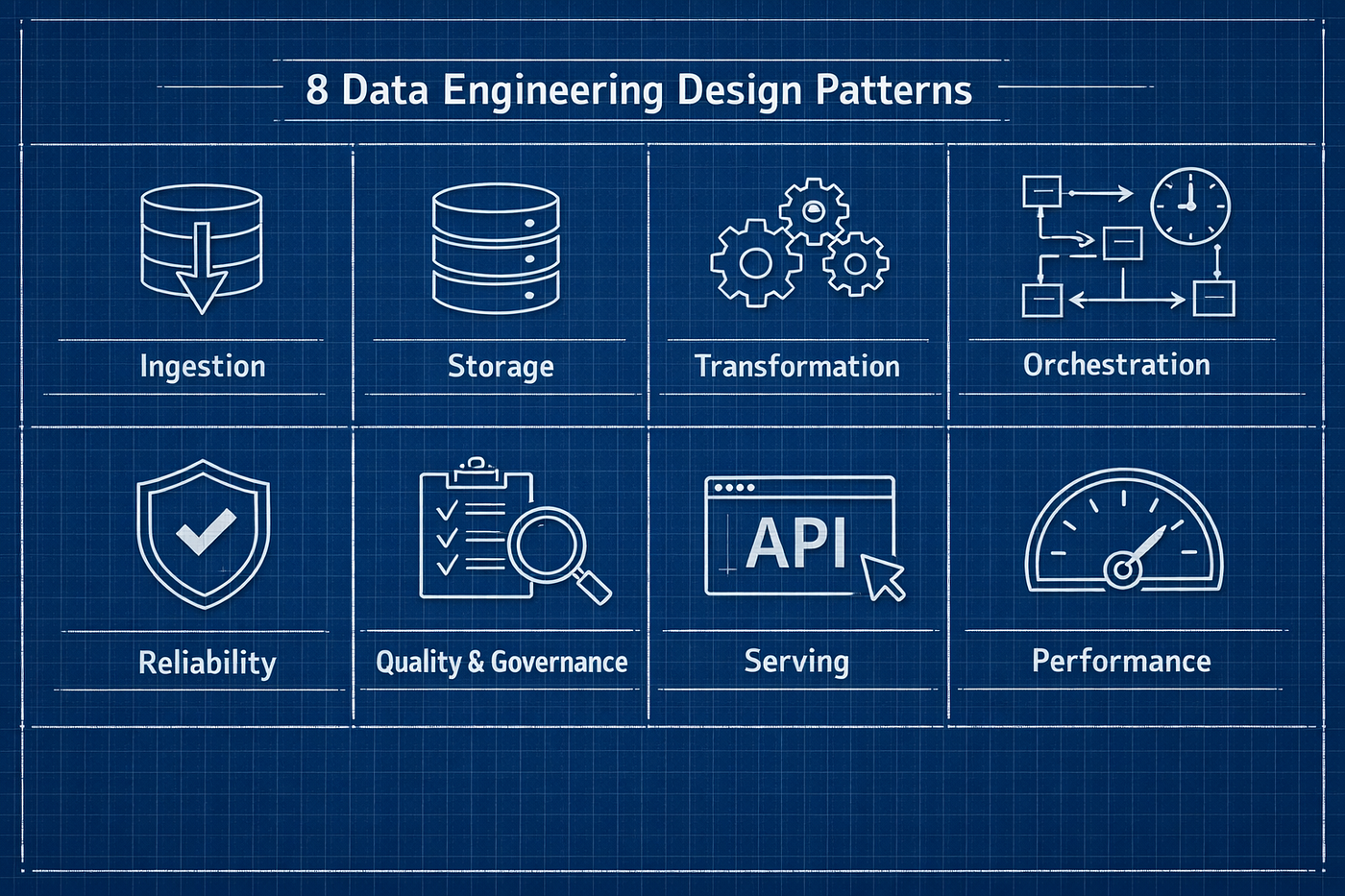

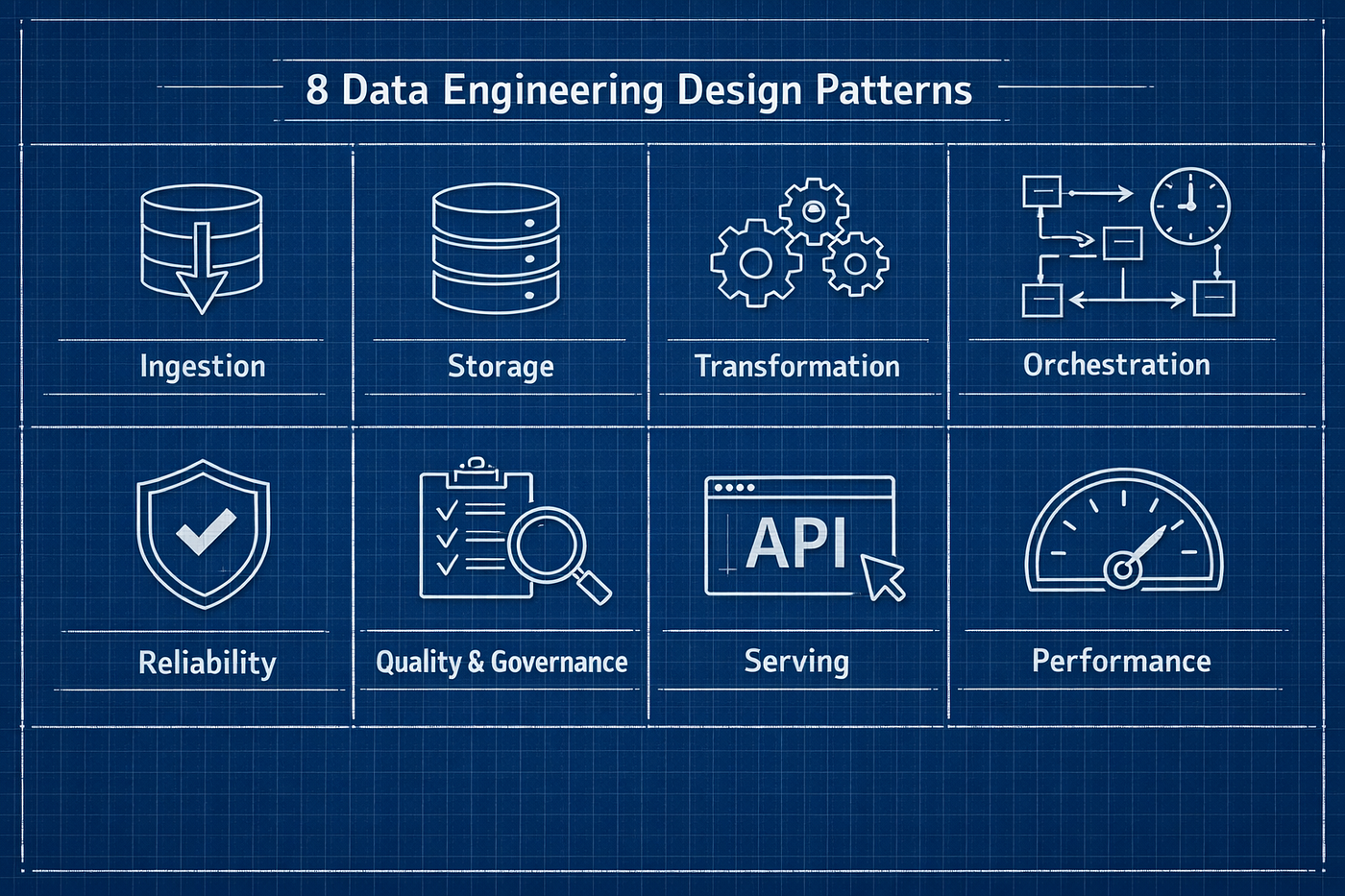

According to research on 2026 pipelines, a reliable architecture now includes:

Real-Time Streams: Data moves instantly via platforms like Kafka so AI agents have the latest context.

The Metadata Layer: We add automated labels and descriptions to data so LLMs can understand the meaning of the columns they are reading.

Feature Stores: These act like high-speed caches, storing the most important information in a format AI can consume in milliseconds.

Unified Data Clouds: Platforms like Snowflake's AI Data Cloud allow us to store structured and unstructured data in one place.

Observability Plane: This is the "brain" that watches for data errors and performance drops in real time.

Investing in the metadata layer has been our biggest win. By helping our AI models understand what the data actually represents, we’ve seen hallucination rates drop by nearly 30%. When the model knows exactly what a column means, it makes far fewer mistakes.
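As a rough illustration of what a metadata layer can hand to a model, here is a minimal sketch that renders column descriptions into a text block for a prompt. The `ColumnMeta` record, the `render_schema_context` helper, and the `refunds` table are hypothetical names chosen for this example, not part of any specific platform.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ColumnMeta:
    """Hypothetical metadata record describing one column."""
    name: str
    dtype: str
    description: str
    unit: Optional[str] = None


def render_schema_context(table: str, columns: List[ColumnMeta]) -> str:
    """Render column metadata as plain text that can be prepended to an LLM prompt."""
    lines = [f"Table: {table}"]
    for col in columns:
        unit = f" (unit: {col.unit})" if col.unit else ""
        lines.append(f"- {col.name} [{col.dtype}]{unit}: {col.description}")
    return "\n".join(lines)


# Illustrative columns with terse names that a model could easily misread.
columns = [
    ColumnMeta("amt", "decimal", "Refund amount approved for the order", "USD"),
    ColumnMeta("ts", "timestamp", "Time the refund was approved, in UTC"),
]
context = render_schema_context("refunds", columns)
print(context)
```

The point of the design is that a terse column name like `amt` never reaches the model without its meaning and unit attached.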

How Do We Bridge the "Visibility-Understanding Gap"?

The "Visibility-Understanding Gap" is the most common reason for AI production failures. It happens when you have plenty of logs and charts, but none of them tell you why your AI agent just gave a wrong answer. Having data is useless if you don't have the context to fix a problem quickly.

A 2026 report from Datadog found that operational complexity is the biggest barrier to scaling AI. One major issue is rate limit errors, where the AI provider cuts you off for sending too many requests. These now cause one-third of all failures.
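One common way to soften rate limit failures is to retry with exponential backoff and jitter. The sketch below assumes a hypothetical `RateLimitError` standing in for whatever exception your provider's client raises on an HTTP 429; the function and parameter names are illustrative.

```python
import random
import time


class RateLimitError(Exception):
    """Stand-in for a provider's rate limit (HTTP 429) error. Hypothetical name."""


def call_with_backoff(fn, max_attempts=5, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Call fn(), retrying on RateLimitError with exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts, so surface the error to the caller
            # Double the ceiling on each retry, capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: wait a random time up to the ceiling to avoid synchronized retries.
            sleep(random.uniform(0, ceiling))


# Simulated provider call that is rate limited twice, then succeeds.
attempts = {"count": 0}


def flaky_call():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimitError("429 Too Many Requests")
    return "ok"


waits = []  # record the waits instead of actually sleeping
result = call_with_backoff(flaky_call, sleep=waits.append)
print(result, attempts["count"], len(waits))
```

Passing `sleep` as a parameter keeps the retry logic testable without real delays.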

In our New York office, we’ve stopped looking just at traditional metrics like CPU usage. Instead, we track token usage, which has doubled in the last year. We now monitor "Prompt Efficiency" to see if our AI is wasting money by re-processing the same instructions over and over again. If your engineering team doesn't have visibility into these AI-specific metrics, you're essentially flying blind.
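The article does not define "Prompt Efficiency" precisely, so the sketch below assumes one plausible definition: the share of input tokens served from a context cache rather than re-processed. The `CallUsage` fields are hypothetical and would need to be mapped to whatever usage data your provider reports.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CallUsage:
    """Token counts for one LLM call (hypothetical field names)."""
    input_tokens: int         # all prompt tokens sent with the call
    cached_input_tokens: int  # prompt tokens served from a context cache
    output_tokens: int


def prompt_efficiency(calls: List[CallUsage]) -> float:
    """Share of input tokens served from cache, from 0.0 (none) to 1.0 (all)."""
    total_input = sum(c.input_tokens for c in calls)
    if total_input == 0:
        return 0.0
    cached = sum(c.cached_input_tokens for c in calls)
    return cached / total_input


calls = [
    CallUsage(input_tokens=1200, cached_input_tokens=900, output_tokens=150),
    CallUsage(input_tokens=800, cached_input_tokens=0, output_tokens=90),
]
print(f"prompt efficiency: {prompt_efficiency(calls):.0%}")  # 900 / 2000 = 45%
```

A low value under this definition suggests the same instructions are being paid for on every call.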

How is AI Improving Modern Data Engineering Workflows?

AI has transformed the daily life of a data engineer by taking over the repetitive "toil" of building and maintaining pipelines. Instead of manually writing thousands of lines of boilerplate code or hunting for specific bugs in massive logs, we now use AI-native tools to automate the technical busywork, allowing us to focus on system design and strategy.

At Experience.com, we’ve integrated AI directly into our workflow to handle the heavy lifting in three main areas:

Automated Schema Mapping: One of the biggest time-wasters in the past was manually mapping columns when moving data from one system to another. Now, modern tools can "read" the data and suggest the correct mappings with high accuracy.

Smart Monitoring and Root Cause Analysis: When a pipeline fails today, AI doesn't just send an alert; it looks through the logs, identifies the specific point of failure (like a changed API response), and suggests a fix.

Natural Language Data Exploration: We can now "talk" to our metadata. If I need to know which tables contain customer billing info, I can ask the system in plain English instead of searching through outdated documentation.

By letting AI handle these tasks, our engineering team has regained roughly 25% of their weekly capacity. We aren't spending less time working; we are spending more time building features that actually matter to our users.
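To make the schema mapping idea concrete, here is a deliberately simple stand-in. It suggests mappings by column-name similarity using Python's standard `difflib` module, not an AI model, so it only illustrates the shape of the workflow (suggest, then let a human confirm). The column names are invented for the example.

```python
import difflib
from typing import Dict, List, Optional


def normalize(name: str) -> str:
    """Lowercase a column name and drop common separators."""
    return name.lower().replace("_", "").replace("-", "").replace(" ", "")


def suggest_mappings(source_cols: List[str], target_cols: List[str],
                     cutoff: float = 0.6) -> Dict[str, Optional[str]]:
    """Suggest a target column for each source column, or None if nothing is close."""
    by_normalized = {normalize(t): t for t in target_cols}
    suggestions: Dict[str, Optional[str]] = {}
    for col in source_cols:
        matches = difflib.get_close_matches(
            normalize(col), list(by_normalized), n=1, cutoff=cutoff
        )
        suggestions[col] = by_normalized[matches[0]] if matches else None
    return suggestions


suggested = suggest_mappings(
    ["cust_id", "EmailAddress", "zzz"],
    ["customer_id", "email_address", "created_at"],
)
for source, target in suggested.items():
    print(f"{source} -> {target}")
```

Columns with no suggestion are returned as `None` so that they are flagged for manual review rather than silently mapped.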

Notes from the Field: 3 Mistakes We Made

Even with AI’s help, 2026 has taught us some painful lessons. Building AI-ready systems is a new discipline, and we’ve had our fair share of "expensive learning opportunities" along the way.

The Recursive Cost Spike: We once deployed an agent that was accidentally designed to retry a failed request by asking another AI to "fix" the request first. The two systems ended up in a loop, burning through hundreds of dollars in API tokens in minutes before a human intervened. We now have "circuit breakers" that automatically kill any process that exceeds its budget.

Ignoring Semantic Drift: Early on, we didn't realize that our AI agents were slowly getting worse at answering questions even though our "standard" code was healthy. The data hadn't changed, but the way the AI interpreted the data had shifted. This taught us that we need to monitor answer quality just as strictly as we monitor uptime.

Over-Prompting: In our excitement, we started sending massive instruction manuals (system prompts) with every single data request. This made our systems slow and incredibly expensive. We learned to "compress" our instructions and use context caching, which cut our latency and costs by almost 20% overnight.
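The budget "circuit breaker" from the first lesson above can be sketched in a few lines. This is a minimal illustration under assumed names (`BudgetCircuitBreaker`, `run_agent_loop`) and made-up costs, not a description of any particular production system.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a process tries to continue after exhausting its budget."""


class BudgetCircuitBreaker:
    """Trips once cumulative spend passes a fixed budget."""

    def __init__(self, budget_usd: float):
        self.budget_usd = budget_usd
        self.spent_usd = 0.0
        self.open = False  # an open breaker blocks further work

    def record(self, cost_usd: float) -> None:
        self.spent_usd += cost_usd
        if self.spent_usd > self.budget_usd:
            self.open = True

    def check(self) -> None:
        if self.open:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.2f} of ${self.budget_usd:.2f} budget"
            )


def run_agent_loop(step, breaker: BudgetCircuitBreaker, max_steps: int = 100) -> int:
    """Run step() until it reports done; stop early if the breaker trips."""
    for i in range(max_steps):
        breaker.check()       # refuse to start another step once over budget
        done, cost = step()   # step returns (finished?, cost of this step in USD)
        breaker.record(cost)
        if done:
            return i + 1
    raise RuntimeError("max_steps reached without finishing")


# A step that never finishes, like two agents retrying each other forever.
breaker = BudgetCircuitBreaker(budget_usd=1.00)
try:
    run_agent_loop(lambda: (False, 0.30), breaker)
    tripped = False
except BudgetExceeded as exc:
    tripped = True
    print("stopped:", exc)
```

The `max_steps` limit is a second, independent guard, so a loop of zero-cost steps still terminates.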

The Cost Engineering Crisis: Managing the AI Bill

It’s impossible to talk about 2026 data engineering without talking about the financial side. As Snowflake's AI Data Predictions suggest, the biggest "silent killer" of AI projects this year is the uncontrolled escalation of token costs.

For many engineers, "FinOps" used to be something that only the infrastructure team cared about. In 2026, every data engineer needs to be a FinOps specialist. When you are running massive RAG (Retrieval-Augmented Generation) pipelines, the cost is tied directly to the efficiency of your retrieval. If your search algorithm is retrieving 10 documents when only 2 are relevant, you are literally throwing money away with every API call.
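A quick back-of-the-envelope calculation shows the scale of that waste. Every number below (tokens per document, call volume, price per million input tokens) is an illustrative assumption, not a figure from any provider's price list.

```python
def retrieval_cost_usd(docs_per_call: int, tokens_per_doc: int, calls: int,
                       usd_per_million_input_tokens: float) -> float:
    """Input-token cost of the retrieved context across a number of calls."""
    tokens = docs_per_call * tokens_per_doc * calls
    return tokens / 1_000_000 * usd_per_million_input_tokens


# Illustrative assumptions only.
TOKENS_PER_DOC = 500
CALLS_PER_DAY = 100_000
PRICE_PER_MILLION = 3.00

cost_10_docs = retrieval_cost_usd(10, TOKENS_PER_DOC, CALLS_PER_DAY, PRICE_PER_MILLION)
cost_2_docs = retrieval_cost_usd(2, TOKENS_PER_DOC, CALLS_PER_DAY, PRICE_PER_MILLION)
wasted = cost_10_docs - cost_2_docs

print(f"10 docs per call: ${cost_10_docs:,.2f} per day")
print(f" 2 docs per call: ${cost_2_docs:,.2f} per day")
print(f"wasted: ${wasted:,.2f} per day ({wasted / cost_10_docs:.0%})")
```

Under these assumptions, 80% of the retrieval spend pays for documents the model did not need.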

We’ve started implementing "Budget Guardrails" directly into our orchestration layer. If a specific tenant's token consumption exceeds a calculated threshold based on their historical utility, the system automatically switches to a smaller, more cost-effective model for non-critical tasks. This kind of dynamic routing is the only way to stay profitable while scaling AI features across millions of users.
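The routing decision itself can be very small. The sketch below assumes the per-tenant threshold has already been computed elsewhere (how it is derived from historical utility is not covered here), and the model names are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutingPolicy:
    """Per-tenant guardrail settings (hypothetical structure)."""
    token_threshold: int                 # token budget for the current window
    primary_model: str = "large-model"   # placeholder model name
    fallback_model: str = "small-model"  # placeholder model name


def choose_model(policy: RoutingPolicy, tokens_used: int, critical: bool) -> str:
    """Pick a model for one request under a per-tenant token guardrail."""
    # Critical tasks always keep the primary model, whatever the spend.
    if critical or tokens_used <= policy.token_threshold:
        return policy.primary_model
    # Over budget and non-critical: downgrade to the cheaper model.
    return policy.fallback_model


policy = RoutingPolicy(token_threshold=1_000_000)
print(choose_model(policy, tokens_used=400_000, critical=False))    # under budget
print(choose_model(policy, tokens_used=1_500_000, critical=False))  # over budget
print(choose_model(policy, tokens_used=1_500_000, critical=True))   # over budget, critical
```

Keeping the decision a pure function of usage and criticality makes it easy to test and to audit after the fact.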

Summary: The Engineering Shift

If I could leave you with one takeaway, it's this: AI is a data engineering problem in a model's clothing. The teams that will win in the next two years aren't the ones with the largest LLMs; they are the ones who can reliably feed those models high-quality, real-time data while maintaining total visibility into how tokens are being spent.

Our role has evolved from being the "custodians of data" to being the "architects of intelligence." It’s no longer enough to ensure the data is there; we have to ensure it’s meaningful, cost-effective, and safe. As we move into the latter half of 2026, our job as engineers is becoming less about "building the pipeline" and more about "governing the intelligence." It's a challenging time to be in data, but it's also the most exciting era we've ever seen. We’re finally at the table, and the stakes have never been higher.

Discussion Questions

Is your team still treating AI as a separate "plugin," or is it integrated into your core data architecture?

What is the biggest operational hurdle you've faced when moving an agent from an MVP into full production?

How are you managing "Token Debt"—the compounding cost of inefficient system prompts and lack of context caching?

Do you believe data observability should be part of the engineering stack, or a separate governance responsibility?

With 80% of organizations now multi-model, how are you ensuring data consistency across different LLM providers?

About the Author: Athira Krishnan is a Senior Data Engineer at Experience.com in New York City. She specializes in building AI-ready data infrastructure and observability systems for cloud-scale analytics. Athira has over 12 years of experience in the data space and has been a lead architect in moving Experience.com toward an AI-first operational model. When she's not debugging vector stores, she can be found mentoring junior engineers in the NYC tech community.

Why is Data Maturity Now More Important Than the AI Itself?

In 2026, the competitive advantage has shifted from who has the best model to who has the most mature data ecosystem. According to a 2026 Gartner Maturity Report, the future belongs not to those who simply buy AI tools, but to those who focus on data governance and automated management to maximize their investments.

We’ve reached a point where "messy logs and CSVs" are no longer just an analytics problem. They are a system failure for AI agents. When you deploy autonomous agents that make real-time decisions, even a minor schema drift can cause a cascading failure. We’re seeing 80% of CEOs admit that AI will force a total overhaul of their operational capabilities. This isn't just about "cleaning data" anymore; it's about building highly resilient, observable, and self-healing pipelines.

What Does a Modern AI-Native Data Architecture Look Like?

The standard ETL pipeline we all grew up with is being replaced by a multi-layered orchestration stack. In the modern model, we aren't just thinking about batch processing; we are thinking about real-time event streaming and feature stores specifically optimized for AI agents.

A typical 2026 architecture usually breaks down into these fundamental layers:

Real-Time Operational Sources: We’ve moved beyond 24-hour syncs. Data flows in via event streaming (Kafka, Pulsar) to serve agents that need immediate context.

The Feature Store (AI-Optimized Layer): This is where we materialize data in formats that agents can consume instantly. Think of it as a low-latency cache for the most important vectors and attributes.

The Unified Data Cloud: Platforms like Snowflake's AI Data Cloud now act as a single source of truth where structured analytics and unstructured model training data coexist.

The Observability Control Plane: This is the "brain" that monitors for data drift, rate limit errors, and model performance in production.

How Do We Bridge the "Visibility-Understanding Gap"?

One of the biggest frustrations in my current role is that we have more data than ever, but often fewer answers during an incident. We call this the Visibility-Understanding Gap. You can have all the metrics and logs in the world, but if your dashboard shows a 400 error and doesn't tell you why an LLM agent just failed, the visibility is useless.

In Datadog's State of AI Engineering 2026 report, operational complexity was identified as the primary barrier to scaling AI. We're finding that rate limit errors now account for nearly a third of all LLM call failures. To fix this, we've had to implement an "intelligence layer" on top of our observability tools: an AI that monitors our AI.

For example, when a monitoring dashboard in our NYC office flags a performance dip in our cloud analytics system, we don't just look at CPU usage. We look at token usage, which has more than doubled year-over-year, and context window pressure. If an agent is re-processing the full prompt every time rather than using cached context, our costs spike and our latency follows.

Real-World Example: Automating Cloud Analytics Monitoring

Let’s get concrete. At Experience.com, we recently overhauled our monitoring for a fleet of customer-facing AI agents. Originally, we used standard APM (Application Performance Monitoring). But we quickly realized that "Healthy" in APM terms didn't mean the agent was actually helping the user.

We built a custom AI Observability Dashboard that tracks:

Semantic Drift: Are the agent's answers moving away from the ground truth over time?

Cost-per-Outcome: Instead of just tracking total token cost, we track how many tokens it took to successfully resolve a customer query.

Agent Scaffolding Latency: 69% of input tokens are now used in system prompts (scaffolding). We monitor how much of this overhead is truly necessary.

By automating this governance, we reduced our "Visibility-Understanding Gap" and cut our incident response time for AI failures by 40%. We stopped guessing why agents were hallucinating and started seeing the data quality issues causing it.
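Cost-per-Outcome is straightforward to compute once each session is labeled as resolved or not. The sketch below assumes a hypothetical session record with `tokens` and `resolved` fields; how a query is judged "resolved" is outside its scope.

```python
from typing import Dict, List, Optional


def tokens_per_resolved_query(sessions: List[Dict]) -> Optional[float]:
    """Total tokens spent across all sessions divided by the number resolved.

    Tokens from unresolved sessions still count toward the total, because
    they were paid for even though they produced no outcome.
    """
    total_tokens = sum(s["tokens"] for s in sessions)
    resolved = sum(1 for s in sessions if s["resolved"])
    if resolved == 0:
        return None  # no successful outcomes, so the ratio is undefined
    return total_tokens / resolved


sessions = [
    {"tokens": 1000, "resolved": True},
    {"tokens": 3000, "resolved": False},
    {"tokens": 2000, "resolved": True},
]
print(tokens_per_resolved_query(sessions))  # 6000 tokens / 2 resolved = 3000.0
```

Tracked over time, a rising value can flag agents that stay "healthy" in APM terms while resolving fewer queries per token.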
