Real-time AI response streaming is the 2026 baseline for UX, yet delivering token-by-token updates at scale requires moving beyond standard request-response cycles. By decoupling slow AI inference from the main web process using Action Cable and Solid Cable, developers can manage thousands of concurrent stateful connections without the operational overhead of Redis.

The transition to Rails 8 and Solid Cable has fundamentally changed how we broadcast these streams. By using a database-backed adapter, teams can now scale real-time features using their existing SQL infrastructure, ensuring the UI remains responsive even when backend AI agents perform high-latency reasoning.

What is Action Cable?

Action Cable is the integrated framework in Ruby on Rails that enables seamless, bi-directional communication over WebSockets. By 2026, it has become the gold standard for delivering reactive user interfaces, allowing server-side Ruby code to push updates to the client the instant they occur without the overhead of traditional HTTP polling.

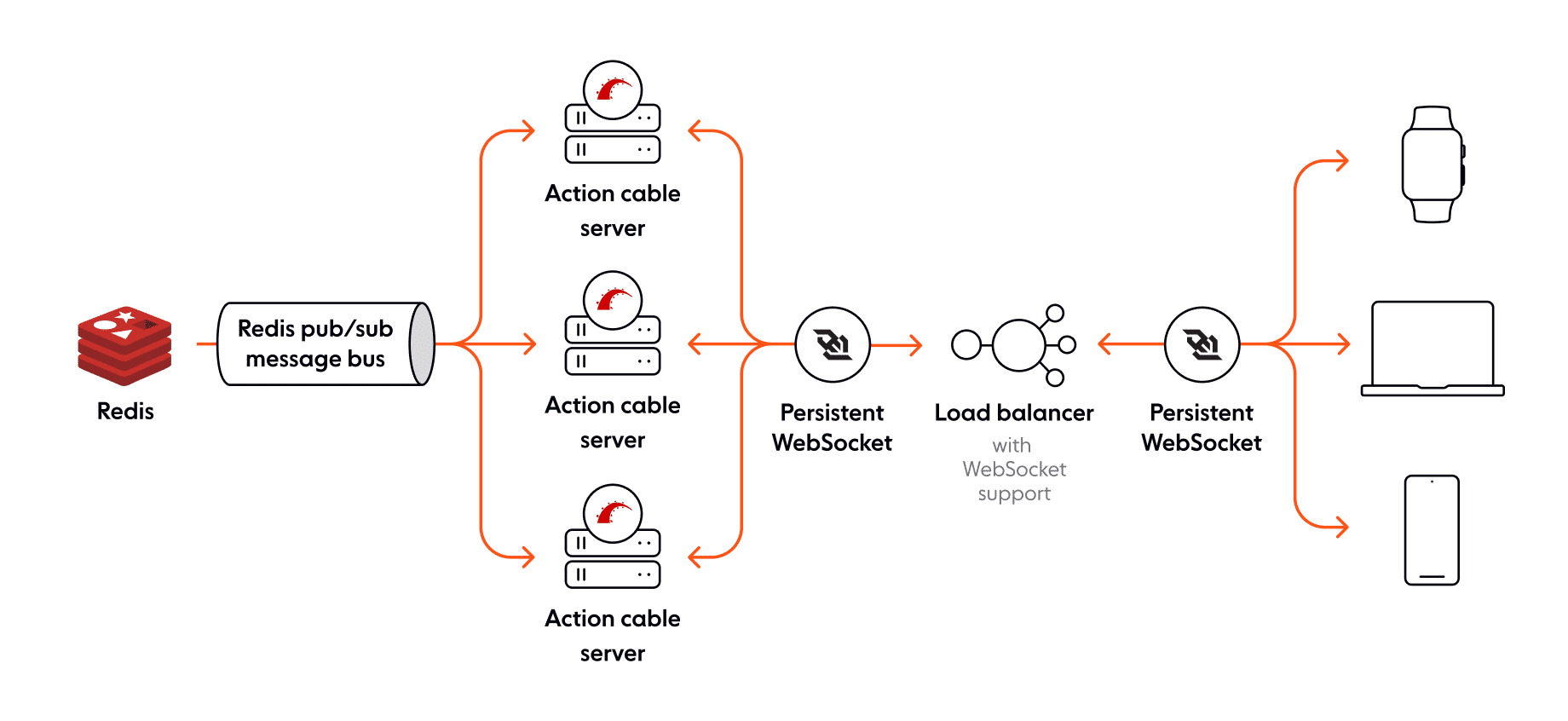

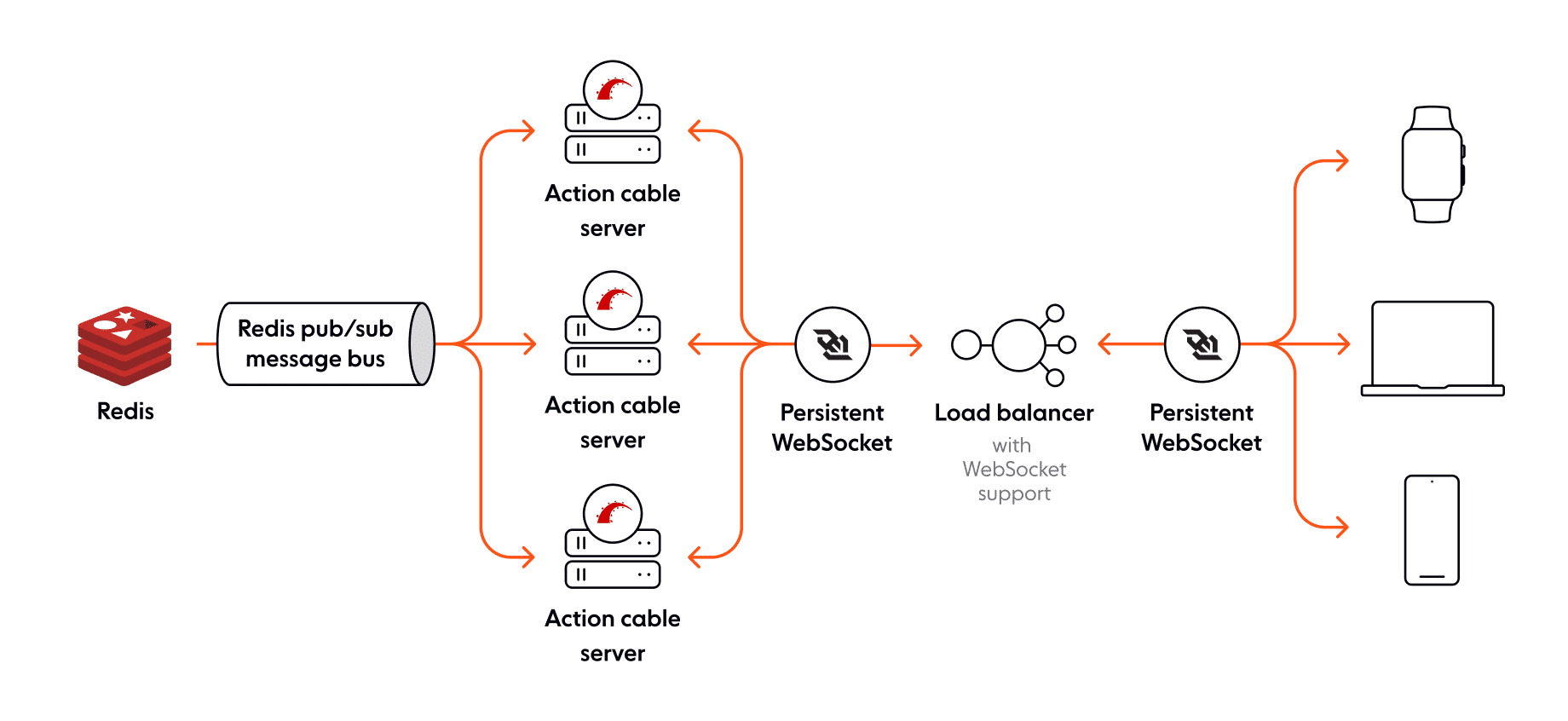

The framework operates through a Pub/Sub (Publish/Subscribe) model, where the server "broadcasts" messages to specific "channels" that users follow. This architecture is what makes real-time AI feasible in Rails; rather than waiting for an LLM to generate a full paragraph, Action Cable allows the server to stream individual words (tokens) to the user's screen as they are generated by background workers.
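To make the broadcast-and-subscribe idea concrete, here is a minimal in-process sketch in plain Ruby. `TinyPubSub` is a hypothetical class invented for this illustration and is not part of Action Cable; the real adapters do the same job across processes and servers.

```ruby
# Illustrative only: a tiny in-process pub/sub. TinyPubSub is a made-up name,
# not an Action Cable class.
class TinyPubSub
  def initialize
    @subscribers = Hash.new { |hash, stream| hash[stream] = [] }
  end

  # Register a callback that runs for every message sent to `stream`.
  def subscribe(stream, &callback)
    @subscribers[stream] << callback
  end

  # Deliver `payload` to every subscriber of `stream` and nobody else.
  def broadcast(stream, payload)
    # fetch skips the default block, so unknown streams create no entry
    @subscribers.fetch(stream, []).each { |callback| callback.call(payload) }
  end
end

bus = TinyPubSub.new
alice_screen = []
bob_screen = []
bus.subscribe("ai_stream_1") { |message| alice_screen << message[:token] }
bus.subscribe("ai_stream_2") { |message| bob_screen << message[:token] }

# Tokens for chat 1 reach only the subscriber of that stream.
["Hello", " ", "world"].each { |token| bus.broadcast("ai_stream_1", { token: token }) }

puts alice_screen.join   # prints "Hello world"
puts bob_screen.length   # prints 0
```

The point of the sketch is the routing: the producer only names a stream, and never needs to know who is listening.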

Core Components of the Cable System

Channels: Consider these the "controllers" of the WebSocket world; they encapsulate the logic for different types of connections (e.g., a `ChatChannel` or `NotificationChannel`).

Streams: The specific data pipes that transmit messages to specific users or groups.

Adapters: The backend transport layer, classically powered by Redis but now frequently running on Solid Cable for database-backed persistence and simplified infrastructure.

Consumer: The client-side JavaScript (typically managed by Stimulus or Turbo) that listens for data on a specific channel.

How do you stream AI responses in Rails?

To stream AI responses, treat the LLM output as a continuous stream of events rather than a single payload. The standard pattern subscribes the client to a channel scoped to one resource and hands the actual LLM request to a background job, so a slow model call never starves the web threads. With Solid Cable as the adapter, the job broadcasts tokens as they arrive, giving the UI an immediate response while the backend handles the heavy inference.

Simple Action Cable Integration

Implementing a stream requires a Channel to manage the connection and a Job to handle the LLM request asynchronously:

    # app/channels/ai_stream_channel.rb
    class AiStreamChannel < ApplicationCable::Channel
      # Subscribe this connection to the stream for a single chat
      def subscribed
        stream_from "ai_stream_#{params[:chat_id]}"
      end
    end

    # app/jobs/ai_response_job.rb
    # Assumes the ruby-openai gem, whose streaming API takes a proc under `stream:`
    class AiResponseJob < ApplicationJob
      def perform(chat_id, prompt)
        client = OpenAI::Client.new(access_token: ENV.fetch("OPENAI_API_KEY"))

        client.chat(
          parameters: {
            model: "gpt-4o",
            messages: [{ role: "user", content: prompt }],
            # Runs once per streamed chunk, so tokens go out as they arrive
            stream: proc do |chunk, _bytesize|
              content = chunk.dig("choices", 0, "delta", "content")
              ActionCable.server.broadcast("ai_stream_#{chat_id}", { token: content }) if content
            end
          }
        )
      end
    end

How do you scale for high concurrency?

Scaling stateful WebSockets is significantly more difficult than scaling stateless HTTP because every connection consumes server memory. In 2026, most Rails developers adopt the Solid Trifecta—Solid Queue, Solid Cache, and Solid Cable—to operate at scale without Redis.

While database-backed adapters simplify infrastructure, high-concurrency apps (8,000+ sessions) should consider AnyCable. This offloads connection management to a high-performance Go-based server while keeping your business logic in Ruby.

| Strategy | Concurrency Limit (approx. concurrent connections) | Best Use Case |
|---|---|---|
| Solid Cable | 500 - 2,000 | Redis-free stacks; minimalist startup apps |
| Redis Adapter | 2,000 - 8,000 | Standard production apps with existing Redis |
| AnyCable | 8,000+ | Enterprise-grade apps with heavy agentic loops |

Thin Channels: Treat channels as simple identification layers; never perform LLM inference inside the channel class.

Asynchronous Broadcasting: Use Sidekiq or Solid Queue to process AI requests asynchronously.

Resource Limits: Use database polling cautiously; benchmarks show Solid Cable uses ~15% more CPU than Redis under load.

The limits above are approximate. In 2026, Heroku and AWS benchmarks indicate that modern routers can handle thousands of connections; the bottleneck is the memory each stateful Ruby process spends holding sockets open. That is why, past roughly 8,000 concurrent sessions, offloading WebSocket handling to AnyCable's dedicated server while keeping business logic in Ruby channels remains the usual move.

Best practices for AI event loops

Effective real-time AI requires carefully scoped channels and deliberate resource management to prevent broadcast "storms." The channel's `unsubscribed` callback is essential for terminating expensive LLM streams when a user closes their browser early.

Parameterize Streams: Always subscribe to specific IDs (e.g., `consumer.subscriptions.create({ channel: "ChatChannel", id: 456 })`) to ensure users only receive relevant data.

Handle Disconnections: Implement a `sequence_id` in your payloads so the client can request missing fragments if the connection drops.

Token Buffering: Group 5-10 tokens into a single broadcast to reduce the overhead of sending hundreds of micro-messages.

Lifecycle Kill-Signals: Use the `unsubscribed` callback to send a cancellation signal to your active background job, saving on API costs and server resources.
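Token buffering and sequence IDs can be sketched together in plain Ruby. `TokenBuffer` and `missing_sequence_ids` are hypothetical names chosen for this example; the block passed to the buffer stands in for a call such as `ActionCable.server.broadcast`, and the gap check would normally run in client-side JavaScript.

```ruby
# Sketch only: groups tokens into batches and numbers each batch.
class TokenBuffer
  def initialize(flush_every: 5, &broadcast)
    @flush_every = flush_every
    @broadcast = broadcast   # stand-in for ActionCable.server.broadcast
    @pending = []
    @sequence_id = 0
  end

  # Queue a token and send a batch once enough have accumulated.
  def push(token)
    @pending << token
    flush if @pending.size >= @flush_every
  end

  # Send whatever is pending; call once more when the LLM stream ends.
  def flush
    return if @pending.empty?

    @sequence_id += 1
    @broadcast.call({ sequence_id: @sequence_id, tokens: @pending.join })
    @pending = []
  end
end

# Given the sequence IDs a client has seen, list the ones it should re-request.
def missing_sequence_ids(received_ids)
  return [] if received_ids.empty?

  (1..received_ids.max).to_a - received_ids
end

sent = []
buffer = TokenBuffer.new(flush_every: 3) { |payload| sent << payload }
%w[a b c d e f g].each { |token| buffer.push(token) }
buffer.flush

p sent.map { |payload| payload[:tokens] }   # prints ["abc", "def", "g"]
p missing_sequence_ids([1, 2, 4, 6])        # prints [3, 5]
```

Seven tokens become three broadcasts instead of seven, and the numbering lets a reconnecting client ask for exactly the batches it missed.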

The 2026 Reality: Balancing Latency and Infrastructure Cost

The decision to stream responses is no longer just a "nice-to-have"—it's a technical requirement for user retention. However, every open WebSocket has a cost. By 2026, the most successful Rails teams are those that selectively upgrade to AnyCable only when their concurrency metrics demand it, starting first with the Redis-free simplicity of Solid Cable for their initial AI features.

The goal is to provide immediate feedback without saturating your server's memory. By combining background processing with surgical WebSocket broadcasts, you can build AI experiences that feel instantaneous, even if the model behind the scenes is still working.

Benchmarking: Solid Cable vs. Redis vs. AnyCable

To make an informed decision for your 2026 infrastructure, you must consider the relationship between connection count and CPU overhead. In field tests conducted by Evil Martians on Rails 8 environments, Solid Cable demonstrated roughly 15% higher CPU usage per 500 connections compared to Redis due to its SQL polling mechanism. However, for most applications, this is offset by the elimination of the Redis maintenance burden.

Solid Cable Performance: Best for applications where simplicity is paramount and concurrency stays below 2,000 users. It excels in minimalist startup stacks using Kamal for deployment.

AnyCable Performance: Essential for "AI Chat" intensive applications where users stay connected for 30+ minutes. AnyCable's Go-based broker reduces memory overhead by nearly 80% compared to pure Ruby Action Cable connections.

Connection Lifecycle Management in Agentic Workflows

When an AI agent is working through a multi-step "thinking" process, the connection lifecycle becomes a liability. If a user closes their browser window while an agent is mid-inference, your background job must detect the disconnection and terminate the expensive LLM stream.

Action Cable will not cancel the job for you. The channel's `unsubscribed` callback (channels have no `on_disconnect` hook) is where you send a "kill signal" to the active Sidekiq job, so that you aren't paying for tokens no user will ever see. A Job Registry lets the channel look up the JID (Job ID) of the AI task tied to a connection and clean it up as soon as the socket closes.
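A minimal sketch of the registry idea follows, in plain Ruby. `JobRegistry` and `stream_with_cancellation` are hypothetical names, and the registry here lives in memory inside one process. In a real deployment the channel and the job usually run in different processes, so the flag would have to live in shared storage such as the database or a cache; that part is assumed, not shown.

```ruby
# Sketch only: an in-memory cancellation registry keyed by job ID.
class JobRegistry
  def initialize
    @mutex = Mutex.new
    @cancelled = {}
  end

  def register(job_id)
    @mutex.synchronize { @cancelled[job_id] = false }
  end

  # What a channel's `unsubscribed` callback would call.
  def cancel(job_id)
    @mutex.synchronize { @cancelled[job_id] = true if @cancelled.key?(job_id) }
  end

  def cancelled?(job_id)
    @mutex.synchronize { @cancelled.fetch(job_id, false) }
  end

  def release(job_id)
    @mutex.synchronize { @cancelled.delete(job_id) }
  end
end

# Stand-in for the job's streaming loop: checks the flag before each chunk
# and stops consuming (and paying for) the stream once it is set.
def stream_with_cancellation(registry, job_id, chunks)
  registry.register(job_id)
  delivered = []
  chunks.each do |chunk|
    break if registry.cancelled?(job_id)

    delivered << chunk
    yield chunk if block_given?
  end
  delivered
ensure
  registry.release(job_id)
end

registry = JobRegistry.new
delivered = stream_with_cancellation(registry, "jid-1", %w[a b c d]) do |chunk|
  registry.cancel("jid-1") if chunk == "b"   # simulate the user leaving mid-stream
end
p delivered   # prints ["a", "b"]
```

Checking the flag between chunks keeps the job cooperative: it stops at the next chunk boundary rather than being killed mid-write.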

Advanced Patterns: SSE and Observability

While WebSockets are powerful, ActionController::Live::SSE is often a more efficient choice for one-way AI streams. It operates over standard HTTP, making it a lower-overhead alternative for "click-and-wait" streaming that doesn't require bi-directional state.
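Part of why SSE is lightweight is its wire format: each event is a few text lines ending in a blank line. The helper below is a plain-Ruby sketch of that framing; `sse_frame` is a hypothetical name. In a Rails controller, `ActionController::Live::SSE#write` produces frames of this kind for you.

```ruby
require "json"

# Sketch only: build one Server-Sent Events frame as a string.
def sse_frame(data, event: nil, id: nil)
  lines = []
  lines << "id: #{id}" if id
  lines << "event: #{event}" if event
  # JSON.generate escapes newlines, so the payload always fits on one data line
  lines << "data: #{JSON.generate(data)}"
  lines.join("\n") + "\n\n"   # the blank line terminates the event
end

print sse_frame({ token: "Hi" }, event: "token", id: 1)
# prints:
# id: 1
# event: token
# data: {"token":"Hi"}
# (followed by a blank line)
```

Because each frame carries an `id`, a browser that reconnects can report the last one it saw, which covers much of what a hand-rolled `sequence_id` does over WebSockets.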

Real-Time Observability: Monitoring AI Broadcasts

In 2026, you cannot effectively scale Action Cable without specific observability metrics focused on AI delivery. Traditional monitoring often misses the "silent failure" of a broadcast—where the background job succeeds, but the message is never delivered because the socket was in a stale state.

Effective monitoring strategies include:

Broadcast Acknowledgment: Implementing a "pingback" from the Stimulus controller so the server knows the user actually received a set of tokens.

Latency Tracking: Measuring the delta between the time a token is generated by the LLM and the time it is broadcast to the user.

Queue Saturation Alerts: Setting up triggers for when the `SolidCable::Message` table (if using Solid Cable) grows too large, indicating that your database isn't keeping up with the volume of real-time events.
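The first two strategies can be sketched as one small bookkeeping object. `BroadcastMonitor` is a hypothetical name, timestamps are plain floats in seconds, and a real setup would forward these numbers to whatever metrics system you already run.

```ruby
# Sketch only: tracks broadcast latency and which batches were never acknowledged.
class BroadcastMonitor
  def initialize
    @latencies_ms = []
    @unacked = {}   # sequence_id => time the batch was broadcast
  end

  # Call when a batch goes out; both timestamps are seconds as floats.
  def record_broadcast(sequence_id, generated_at:, broadcast_at:)
    @latencies_ms << ((broadcast_at - generated_at) * 1000).round(1)
    @unacked[sequence_id] = broadcast_at
  end

  # Call when the client's "pingback" for this batch arrives.
  def acknowledge(sequence_id)
    @unacked.delete(sequence_id)
  end

  # Batches sent more than `timeout` seconds ago with no acknowledgment.
  def stale(now:, timeout:)
    @unacked.select { |_id, sent_at| now - sent_at > timeout }.keys
  end

  def max_latency_ms
    @latencies_ms.max
  end
end

monitor = BroadcastMonitor.new
monitor.record_broadcast(1, generated_at: 10.0, broadcast_at: 10.25)
monitor.record_broadcast(2, generated_at: 11.0, broadcast_at: 11.5)
monitor.acknowledge(1)

p monitor.max_latency_ms                 # prints 500.0
p monitor.stale(now: 20.0, timeout: 5)   # prints [2]
```

A non-empty `stale` list is the "silent failure" signal: the job believes it delivered the batch, but the client never confirmed it.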

Conclusion: The Streaming-First Framework

By 2026, Rails has proved that the "Solid" stack can handle the high demands of the AI era. Decoupling the "deep thinking" into background workers and using Action Cable as a thin delivery pipe allows you to scale to thousands of users without operational bloat. The loading spinner is dead—long live the stream.

Frequently Asked Questions

Is Solid Cable faster than Redis?

In 2026, benchmarks show that Solid Cable is comparable to Redis for most workloads. While it uses database polling, the performance lag is negligible for standard streaming applications, and the reduction in operational complexity (no Redis) is a significant win.

Should I use Action Cable or Turbo Streams for AI?

Turbo Streams actually uses Action Cable under the hood for its "broadcast" functionality. If you want a zero-JS approach, Turbo Streams is excellent. If you need fine-grained control over how tokens are appended to a complex UI component, a custom Action Cable channel with Stimulus is often better.

How do I stop long AI jobs from timing out on WebSockets?

WebSockets themselves don't time out the same way HTTP requests do, but the background job might. Use a heartbeat broadcast within your Sidekiq job to tell the frontend "I'm still thinking" every 5 seconds. This prevents the browser from assuming the connection is dead if the LLM takes a long time to start generating tokens.
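One way to send that heartbeat is a small helper thread that runs beside the blocking LLM call. The sketch below is plain Ruby; `Heartbeat` is a hypothetical class, and the block passed to it stands in for a broadcast such as `ActionCable.server.broadcast(stream, { status: "thinking" })`. The demo uses a 10 ms interval only so that it finishes quickly.

```ruby
# Sketch only: calls the given block every `interval` seconds until stopped.
class Heartbeat
  def initialize(interval:, &emit)
    @interval = interval
    @emit = emit
    @stopped = false
    @mutex = Mutex.new
    @wakeup = ConditionVariable.new
  end

  def start
    @thread = Thread.new do
      @mutex.synchronize do
        until @stopped
          # Sleeps up to @interval seconds, but wakes at once when stop signals
          @wakeup.wait(@mutex, @interval)
          @emit.call unless @stopped
        end
      end
    end
    self
  end

  # Stop the loop and wait for the thread, so no beat fires afterwards.
  def stop
    @mutex.synchronize do
      @stopped = true
      @wakeup.signal
    end
    @thread&.join
  end
end

beats = []
heartbeat = Heartbeat.new(interval: 0.01) { beats << { status: "thinking" } }.start
sleep 0.1   # stands in for a slow LLM call
heartbeat.stop
puts "sent #{beats.size} heartbeat(s) while waiting"
```

In a job, you would start the heartbeat before the LLM request and stop it on the first token, ideally inside an `ensure` so a failed request does not leave the thread running.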