How to Build Hallucination-Free QnA AI Agents (2026 Guide)

Enterprises are replacing "fun" AI with verifiable QnA agents. Learn how grounding and RAG evals eliminate hallucinations to make AI a true work replacement.

Chandan Maruthi • May 13, 2026

The era of "fun" AI experiments is ending as enterprises shift from novelty chat to sovereign agents capable of executing multi-step work. In 2026, the primary barrier to this transition is no longer processing speed or cost, but verifiable reliability. While early generative models suffered from hallucination rates reaching 40% in systematic review tasks, a new class of QnA AI agents, grounded in semantic context and rigorous evaluation frameworks, is finally making AI a viable work replacement for the enterprise.

Why is traditional AI failing the enterprise?

The "hallucination problem" isn't a minor bug; it is a structural byproduct of how large language models (LLMs) predict the next token based on statistical probability rather than factual truth. For a creative writer, this unpredictability is a feature; for a customer service lead or a legal analyst, it is a liability.

Gartner reports in May 2026 that a lack of semantic grounding is causing widespread inaccuracy in AI agents, leading to wasted corporate spending and severe governance vulnerabilities. When an agent "guesses" a refund policy or "invents" a contract clause, the operational risk outweighs the efficiency gain. To move past this, enterprises are adopting Retrieval-Augmented Generation (RAG) as the default architecture to ground outputs in their own private, verifiable data.

How do grounded AI agents guarantee truth?

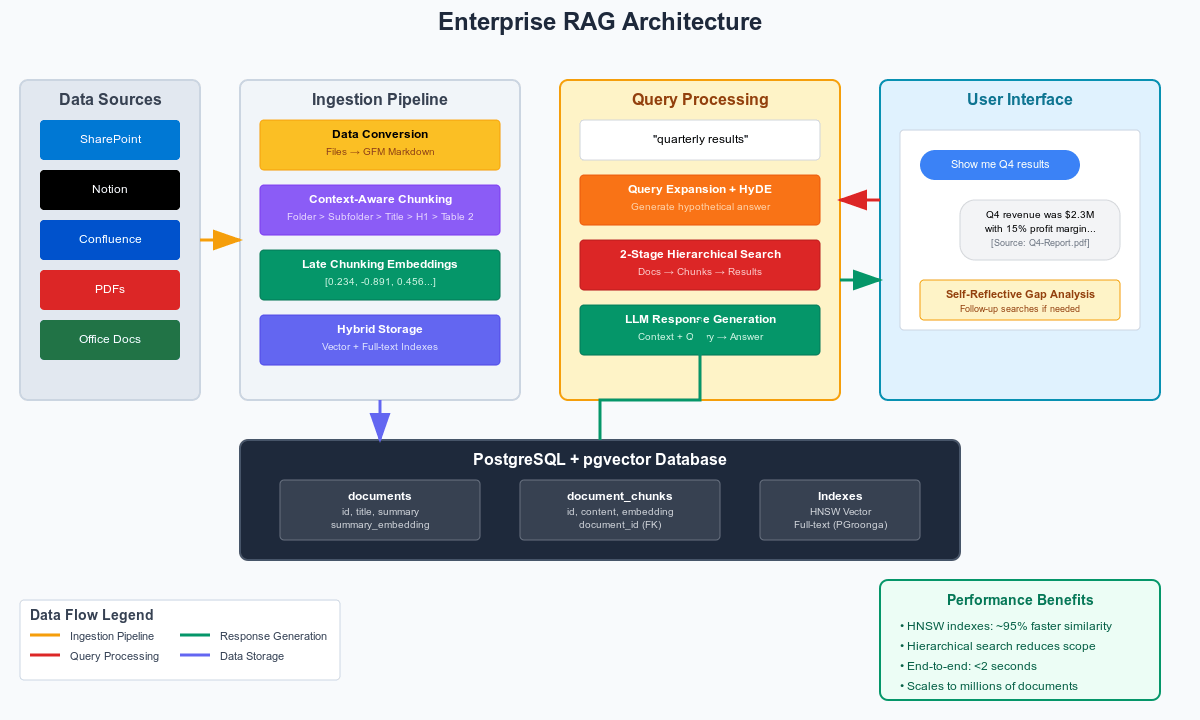

Grounded AI agents operate on a strict "open book" principle: they may not answer from their internal training data alone. Instead, they must retrieve specific "chunks" of verified documentation before generating a response. This lets every claim the agent makes be traced back to a specific source document, transforming the AI from a creative writer into a highly efficient librarian.

Evidence of this shift's impact is already appearing in high-stakes industries. A 2026 study on PubMed found that RAG-enhanced systems for clinical decision support achieved an 89% performance improvement over baseline models. This is the difference between a system you can deploy in a regulated hospital environment and one that remains a laboratory curiosity. By anchoring the LLM's reasoning engine to a verified context layer, companies are finally seeing the "hallucination-free" performance required for production.

To achieve this level of accuracy, grounding requires three distinct technical layers:

Semantic Vector Store: Documents are converted into mathematical representations (embeddings) that map the meaning of the text, allowing the agent to find information even if the user doesn't use the exact keywords.

Strict Prompting (The Guardrail): The LLM is given an explicit instruction: "Use ONLY the following context to answer the question. If the answer is not in the context, say 'I do not know'."

Citation Engine: Every generated sentence is tagged with a reference to the source chunk, allowing human supervisors to click through and verify the original document.
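
The three layers above can be sketched in a few lines. This is a minimal illustration, not a production design: the `CHUNKS` store, the chunk ids, and the helper names are hypothetical, and the bag-of-words `embed` function is only a stand-in for a real embedding model (unlike real embeddings, it matches exact words only).

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base: chunk id -> verified text.
CHUNKS = {
    "refund-policy#1": "The refund window is 14 days from purchase with a valid receipt.",
    "shipping#2": "Standard shipping takes 3 to 5 business days within the country.",
}

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question: str, k: int = 1) -> list[tuple[str, str]]:
    """Layer 1: rank chunks by similarity to the question and keep the top k."""
    q = embed(question)
    ranked = sorted(CHUNKS.items(), key=lambda item: cosine(q, embed(item[1])), reverse=True)
    return ranked[:k]

def build_prompt(question: str, hits: list[tuple[str, str]]) -> str:
    """Layers 2 and 3: the strict instruction plus chunk ids the model can cite."""
    context = "\n".join(f"[{chunk_id}] {text}" for chunk_id, text in hits)
    return (
        "Use ONLY the following context to answer the question. "
        "If the answer is not in the context, say 'I do not know'. "
        "Cite the chunk id in brackets after each sentence.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

question = "What is the refund window?"
hits = retrieve(question)
prompt = build_prompt(question, hits)
```

The resulting `prompt` would then be sent to whichever LLM the agent uses. Keeping the chunk id next to each passage is what makes click-through citations possible later.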

What are the core metrics for verifying AI agents?

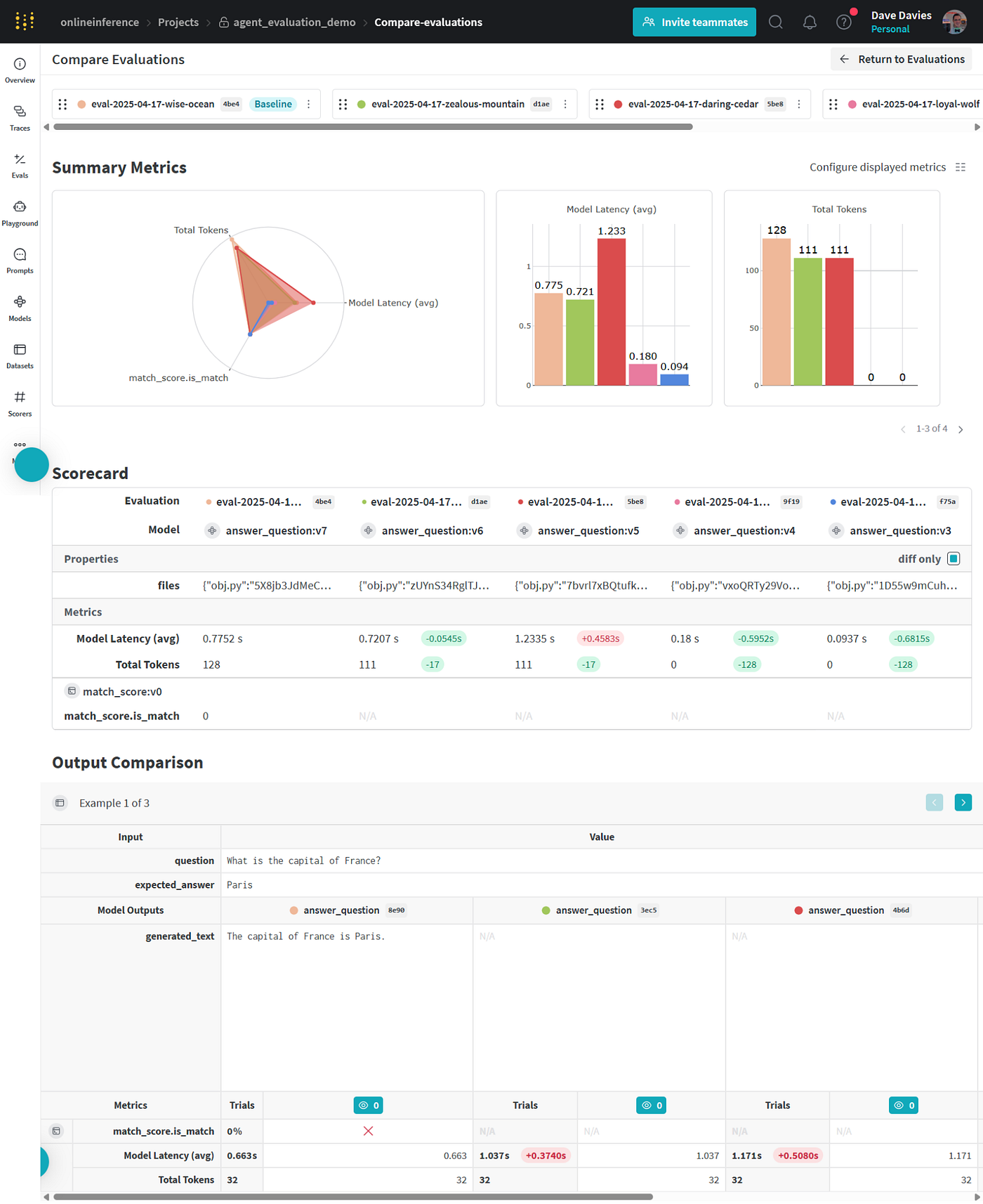

You cannot manage what you cannot measure. Productizing an AI agent requires moving away from "vibe-based" testing, where developers manually check ten prompts, to automated evaluation (evals) using frameworks like Ragas, DeepEval, or Arize Phoenix. These tools use a "Judge LLM" to score the agent's performance on three core RAG metrics:

| Metric Name | What it Measures | Why it Matters |
|---|---|---|
| Faithfulness | Measures how accurately the answer aligns with the retrieved context. | Prevents the agent from "making things up" that aren't in your knowledge base. |
| Context Recall | Checks if the retrieval system actually found the correct document needed to answer. | Ensures the agent has the whole truth, not just a partial or irrelevant fragment. |
| Answer Relevancy | Measures how directly the agent's response addresses the user's actual question. | Stops the agent from providing technically correct but uselessly broad information. |
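
To make the first two metrics concrete, here is a toy sketch that treats each as a fraction of supported claims. It assumes the answer and the reference have already been split into claims, and `is_supported` is a hypothetical word-overlap stand-in for the Judge LLM; evaluation frameworks make that judgment with a model, not with string matching. Answer relevancy is left out because it needs a semantic comparison that a toy check cannot imitate.

```python
import re

def tokens(text: str) -> set[str]:
    """Lowercased word set for a piece of text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def is_supported(claim: str, context: str) -> bool:
    """Hypothetical stand-in for the Judge LLM: a claim counts as supported
    only if every one of its words appears in the context."""
    return tokens(claim) <= tokens(context)

def supported_fraction(claims: list[str], context: str) -> float:
    """Share of claims that the context supports (0.0 for an empty list)."""
    if not claims:
        return 0.0
    return sum(is_supported(c, context) for c in claims) / len(claims)

# Illustrative data, not taken from a real system.
context = "Refunds are issued within 14 days of purchase."
answer_claims = ["Refunds are issued within 14 days", "Refunds are issued in cash"]
reference_claims = ["Refunds are issued within 14 days of purchase"]

# Faithfulness: how many claims in the ANSWER are backed by the retrieved context.
faithfulness = supported_fraction(answer_claims, context)
# Context recall: how many facts in the REFERENCE answer appear in the retrieved context.
context_recall = supported_fraction(reference_claims, context)
```

Here the second answer claim ("in cash") is not in the context, so faithfulness drops to 0.5 while context recall stays at 1.0: retrieval did its job, but generation added something unsupported.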

In 2026, leading AI teams target specific diagnostic benchmarks: a faithfulness score of at least 0.75 and an answer relevancy score of at least 0.8 are generally considered the "production-ready" floor for enterprise agents.
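
A release gate built on those floors could look like the sketch below. The function name and the sample scores are hypothetical, and averaging across test cases is one possible aggregation choice, not a rule from any particular framework.

```python
from statistics import mean

# Floors quoted in the paragraph above.
FLOORS = {"faithfulness": 0.75, "answer_relevancy": 0.8}

def production_ready(results: list[dict[str, float]]) -> tuple[bool, dict[str, float]]:
    """Average each metric across test cases and compare it with its floor."""
    averages = {name: mean(r[name] for r in results) for name in FLOORS}
    passed = all(averages[name] >= floor for name, floor in FLOORS.items())
    return passed, averages

# Hypothetical scores from an eval run (not real measurements).
results = [
    {"faithfulness": 0.9, "answer_relevancy": 0.85},
    {"faithfulness": 0.7, "answer_relevancy": 0.9},
]
ok, averages = production_ready(results)
```

Note that an average can hide a single badly failing case; a stricter gate might also require every individual test case to clear the floor.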

Can AI agents actually replace human work today?

When grounded in truth and verified by evals, AI agents are proving they can handle complex workflows that were previously human-exclusive. JPMorgan now runs over 450 agentic AI use cases in production daily, while Salesforce has reportedly cut $5 million in legal costs through verified contract automation.

The most dramatic example comes from Klarna, which replaced the work equivalent of 853 full-time employees with a single grounded customer service AI agent. This wasn't achieved by simply "hooking up a chatbot," but by building a semantic context layer that guarantees the agent only provides information verified by Klarna’s internal support documentation.

How to build an evaluation stack for production?

Building a reliable QnA agent is an iterative process that requires a diagnostic framework. Experts recommend a three-stage lifecycle for enterprise evaluation:

Exploration (Ragas): Use this framework during initial development to gain component-level metrics on context precision and recall.

Testing (DeepEval): Integrate pytest-style testing into your CI/CD pipeline so that new code deployments do not introduce regressions, meaning changes that make the agent less accurate or more prone to hallucination.

Observability (Arize Phoenix): Deploy this for production monitoring to visualize your embedding space and catch "drift" when user questions begin to fall outside your agent's verified knowledge area.
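
The "drift" signal in the observability stage can be approximated with a simple idea: track how many incoming questions have no close match in the knowledge base. The sketch below is an assumption-laden illustration and does not use any monitoring tool's API. `KNOWLEDGE`, the Jaccard `similarity` function (a stand-in for embedding distance), and the `min_similarity` threshold of 0.2 are all hypothetical.

```python
import re

# Hypothetical verified knowledge base.
KNOWLEDGE = [
    "The refund window is 14 days from purchase.",
    "Standard shipping takes 3 to 5 business days.",
]

def tokens(text: str) -> set[str]:
    """Lowercased word set for a piece of text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def similarity(a: str, b: str) -> float:
    """Toy Jaccard overlap; a real system would compare embedding vectors."""
    ta, tb = tokens(a), tokens(b)
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0

def out_of_scope_rate(questions: list[str], min_similarity: float = 0.2) -> float:
    """Share of questions whose best match in the knowledge base is weak."""
    if not questions:
        return 0.0
    weak = sum(
        max(similarity(q, chunk) for chunk in KNOWLEDGE) < min_similarity
        for q in questions
    )
    return weak / len(questions)

rate = out_of_scope_rate([
    "What is the refund window?",
    "Can I pay with cryptocurrency?",
])
```

A rising out-of-scope rate over time suggests users are asking about topics the verified documentation does not cover, which is a cue to extend the knowledge base before the agent starts answering "I do not know" too often.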

By the end of 2026, over 60% of production AI applications will rely on these RAG-specific evaluation dimensions. For the modern CEO, the question is no longer "Will AI hallucinate?" but "Do we have the evaluation infrastructure to prove it won't?"

How does grounded AI solve for security and governance?

The shift toward verifiable agents is as much about risk mitigation as it is about productivity. In a standard LLM environment, sensitive data used during training can "leak" through or be approximated by the model. Grounded agents solve this by maintaining a separation of powers between the reasoning engine and the data repository.

Attribute-Based Access Control (ABAC) is the standard for 2026 enterprise agents. In this model, the agent first identifies the user's permissions before searching the vector store. If a junior analyst asks a question about executive compensation, the grounding mechanism simply fails to retrieve those documents, meaning the agent cannot even "hallucinate" an answer because the underlying data is invisible to it.
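
A minimal sketch of that pre-retrieval filter follows. The `Chunk` type, the attribute names, and the sample documents are hypothetical; the point is only the ordering, where permissions are applied before any search happens.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Chunk:
    chunk_id: str
    text: str
    required: frozenset  # attributes a user must hold to see this chunk

# Hypothetical documents and attribute names.
STORE = [
    Chunk("handbook#1", "Expense reports are due monthly.", frozenset({"employee"})),
    Chunk("comp#7", "Executive compensation bands for 2026.", frozenset({"employee", "hr-exec"})),
]

def visible_chunks(user_attributes: set) -> list:
    """Filter BEFORE retrieval: chunks the user may not read never reach search."""
    return [c for c in STORE if c.required <= user_attributes]

junior = visible_chunks({"employee"})
executive = visible_chunks({"employee", "hr-exec"})
```

Because the junior analyst's search space never contains `comp#7`, no prompt built for that user can include its text, regardless of how the question is phrased.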

Furthermore, verifiable answers create a permanent audit trail. For industries like finance and healthcare, every automated interaction must be defensible. Because grounded agents provide citations for every claim, compliance teams can treat AI logs exactly like human-managed support logs—tracing every interaction back to an approved corporate policy or clinical guideline. This transparency is the primary reason Gartner predicts AI agents will reshape infrastructure for over 80% of organizations by the end of the year.

Frequently Asked Questions

Does "grounded AI" mean it can't make mistakes?

No. Grounding drastically reduces hallucinations by restricting the AI to specific sources, but errors can still occur if the source data itself is wrong or if the retrieval system pulls the wrong document. This is why "Context Recall" and "Faithfulness" evals are critical: they catch these failures before a user sees them.

Why do I need a "Judge LLM" to evaluate my agent?

Human evaluation is too slow and expensive for the thousands of test cases required for enterprise certification. A "Judge LLM" (typically a larger model like GPT-4o or Claude 3.5 Sonnet) can analyze the logic of your agent's answers at a cost of $0.001 to $0.003 per test case, providing the scale needed for continuous improvement.
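
As a quick sanity check on that arithmetic, the per-case range translates into suite-level cost as follows. The suite size of 5,000 test cases is a hypothetical figure chosen for illustration.

```python
def judge_cost_range(test_cases: int, low: float = 0.001, high: float = 0.003) -> tuple[float, float]:
    """Estimated judge spend in dollars, using the per-case range quoted above."""
    return round(test_cases * low, 2), round(test_cases * high, 2)

# Hypothetical suite of 5,000 test cases: roughly $5 to $15 per full run.
low_cost, high_cost = judge_cost_range(5000)
```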

What is the difference between RAG and a standard chatbot?

A standard chatbot relies on what it learned during its initial training, which is often outdated or generic. A RAG agent uses the LLM as a "reasoning engine" that reads your latest PDFs, SQL databases, and Slack messages at query time before answering, so the response stays current and specific to your business.