Real-Time Data Processing: The 2026 Dataflow Guide

Master real-time data processing with Google Cloud Dataflow. Learn how exactly-once delivery and auto-scaling workers drive performance in 2026 pipelines.

Devesh Balaji Bilapate • May 11, 2026

Data is no longer a static asset reserved for post-mortem analysis. Roughly 30% of all generated data is expected to be real-time by 2026, forcing a shift from scheduled batch windows to continuous event-stream execution. Organizations today face a "latency tax": in use cases such as fraud detection, stock trading, and customer personalization, every second of processing delay can translate directly into lost revenue.

Real-time data processing with Google Cloud Dataflow has emerged as a standard way to meet this challenge, primarily because it abstracts away the complexity of distributed state management and "exactly-once" processing. As a fully managed service, it lets engineers focus on business logic rather than manually tuning worker clusters or managing low-level checkpoints.

What is Real-Time Dataflow Processing?

Real-time data processing refers to the continuous ingestion and transformation of data points within milliseconds to seconds of their generation. Unlike traditional Extract, Transform, Load (ETL) processes that operate on finite blocks of data, the Dataflow approach treats data as an unbounded stream: a collection with no predefined end.

The architecture typically starts with a messaging service such as Google Cloud Pub/Sub, which buffers incoming events. Dataflow then "subscribes" to this stream and applies transformations (such as filtering, joining, or aggregating) before writing the results to a sink such as BigQuery, for analytics, or Bigtable, for low-latency lookups. According to one 2026 estimate, over 340 companies use this specific stack to maintain business agility in high-stakes environments.
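To make the source, transform, sink shape concrete, here is a minimal plain-Python sketch. It is not the Apache Beam API: the generator stages, the event fields user and amount, and the rows list standing in for a BigQuery table are all hypothetical. The point is that each stage consumes the previous one element by element, which is what lets the same shape work on a stream that never ends.

```python
from typing import Callable, Iterable, Iterator

Event = dict  # hypothetical event shape, e.g. {"user": "a", "amount": 12.5}


def source(events: Iterable[Event]) -> Iterator[Event]:
    """Stand-in for a Pub/Sub subscription: yields events one at a time.
    Any iterable works, including an infinite generator."""
    for event in events:
        yield event


def filter_stage(events: Iterator[Event], keep: Callable[[Event], bool]) -> Iterator[Event]:
    """Like a Filter transform: lazily drops events that fail the predicate."""
    return (e for e in events if keep(e))


def map_stage(events: Iterator[Event], fn: Callable[[Event], Event]) -> Iterator[Event]:
    """Like a Map transform: lazily transforms each event independently."""
    return (fn(e) for e in events)


def sink(events: Iterator[Event], table: list) -> None:
    """Stand-in for a BigQuery/Bigtable write: appends each row as it arrives."""
    for row in events:
        table.append(row)


incoming = [
    {"user": "a", "amount": 12.5},
    {"user": "b", "amount": -1.0},  # invalid amount, filtered out
    {"user": "a", "amount": 3.0},
]
rows: list = []
valid = filter_stage(source(incoming), lambda e: e["amount"] > 0)
enriched = map_stage(valid, lambda e: {**e, "amount_cents": round(e["amount"] * 100)})
sink(enriched, rows)
print(rows)
```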

How Dataflow Manages Complex Event Timing

The hardest part of real-time processing isn't the data speed; it’s the event timing. In a distributed system, an event that happens at 10:00 AM (Event Time) might not reach your processing engine until 10:05 AM (Processing Time) due to network lag or mobile connectivity drops.

Dataflow solves this using the Apache Beam model, which focuses on four critical questions:

What is being computed? (Transformations)

Where in event time is it being computed? (Windowing)

When in processing time are the results emitted? (Triggers)

How do refinements relate to previous results? (Accumulation)
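The first two questions can be illustrated without any framework. The sketch below, in plain Python rather than Beam, assigns each element to a fixed window by its event timestamp (Where) and sums values per key and window (What). The 60-second window size and the (key, event_time, value) tuple layout are assumptions for the example. Because window assignment uses event time, the result does not depend on arrival order.

```python
from collections import defaultdict

WINDOW_SECONDS = 60  # assumed fixed-window size


def window_start(event_time: int, size: int = WINDOW_SECONDS) -> int:
    """Where: map an event timestamp (epoch seconds) to the start of its fixed window."""
    return event_time - event_time % size


def sum_per_window(events):
    """What: sum values per (key, window). Events are (key, event_time, value) tuples."""
    totals = defaultdict(int)
    for key, event_time, value in events:
        totals[(key, window_start(event_time))] += value
    return dict(totals)


# Arrival order is deliberately scrambled; event time decides the window.
events = [("sensor-1", 61, 4), ("sensor-2", 30, 1), ("sensor-1", 59, 3), ("sensor-1", 5, 2)]
print(sum_per_window(events))
```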

Using watermarks, Dataflow tracks how far the pipeline has progressed in event time: a watermark is the system's estimate of the point before which all events are expected to have arrived. This lets the system decide when a window is likely complete and, together with allowed lateness and triggers, incorporate "late data" without stalling the entire pipeline, so hourly summaries or daily totals remain accurate despite the chaos of real-world internet connectivity.
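The When and How questions can be modeled in a few lines of plain Python. This is a simplified sketch, not Dataflow's implementation: it assumes a heuristic watermark that trails the largest event time seen by 10 seconds (Dataflow derives watermarks from its sources differently), 60-second windows, 30 seconds of allowed lateness, and accumulating panes, in which each late firing re-emits the updated total. Elements that arrive after a window's lateness horizon are dropped. As a further simplification, a window's first pane is always labeled on_time.

```python
from collections import defaultdict

WINDOW = 60            # fixed window size in seconds (assumed)
WATERMARK_DELAY = 10   # heuristic: watermark trails the max event time seen by 10 s (assumed)
ALLOWED_LATENESS = 30  # late data accepted up to 30 s past the window end (assumed)


def process_arrivals(arrivals):
    """arrivals: (event_time, value) pairs in processing-time (arrival) order.
    Returns (panes, dropped); each pane is (window_start, running_total, kind)."""
    sums = defaultdict(int)        # accumulating per-window state
    fired = set()                  # windows that have emitted their first pane
    panes, dropped = [], []
    watermark = float("-inf")
    for event_time, value in arrivals:
        start = event_time - event_time % WINDOW
        if watermark >= start + WINDOW + ALLOWED_LATENESS:
            dropped.append((event_time, value))   # past allowed lateness: discarded
            continue
        sums[start] += value
        if start in fired:
            panes.append((start, sums[start], "late"))   # refinement of an emitted window
        # Advance the heuristic watermark; it never moves backwards.
        watermark = max(watermark, event_time - WATERMARK_DELAY)
        # Emit the first pane of every window whose end the watermark has passed.
        for s in sorted(sums):
            if s not in fired and s + WINDOW <= watermark:
                fired.add(s)
                panes.append((s, sums[s], "on_time"))
    return panes, dropped


arrivals = [(5, 1), (30, 2), (65, 4), (75, 1), (50, 3), (130, 1), (20, 5)]
panes, dropped = process_arrivals(arrivals)
print(panes)
print(dropped)
```

The element with event time 50 arrives after its window has fired but within allowed lateness, so it produces a refined total; the element with event time 20 arrives too late and is dropped. The window starting at 120 never fires in this run because no later element advances the heuristic watermark.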

Benchmarking Performance: Dataflow vs. Flink and Spark

When choosing a streaming engine, engineers often weigh Dataflow (Apache Beam) against Apache Flink and Apache Spark. While Apache Flink is often cited as the "streaming king" for its raw, millisecond-level latency, Dataflow offers a different value proposition centered on operational simplicity and fully managed horizontal and vertical autoscaling.

| Performance Metric | Google Cloud Dataflow | Apache Flink | Apache Spark (Structured Streaming) |
|---|---|---|---|
| Typical Latency | Low seconds to sub-second | Milliseconds | Micro-batch (low seconds) |
| Scaling Model | Fully managed autoscaling | Manual or Kubernetes-managed | Fixed cluster or Databricks-managed |
| Portability | High (Apache Beam SDK) | Medium (Specific to Flink) | Medium (Specific to Spark) |
| State Management | Native, service-managed | RocksDB incremental checkpoints | Checkpoint-based recovery |

For teams that don't want to manage their own infrastructure, Dataflow's ability to autoscale workers based on signals such as CPU utilization, throughput, and backlog is often a decisive factor. In at least one 2026 comparison of high-throughput engines, Dataflow was praised for handling unpredictable event spikes without manual intervention, which is essential for high-concurrency real-time analytics.
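To build intuition for backlog-driven autoscaling, the following is a hypothetical sizing rule, not Dataflow's actual algorithm, which weighs its signals internally. The per-worker throughput, the two-minute drain target, and the worker limits are invented for illustration.

```python
import math


def target_workers(input_rate: float, backlog: float, per_worker_rate: float,
                   drain_target_s: float, min_workers: int = 1, max_workers: int = 100) -> int:
    """Hypothetical sizing rule (NOT Dataflow's algorithm): run enough workers to keep up
    with the input rate AND clear the current backlog within drain_target_s seconds."""
    required_rate = input_rate + backlog / drain_target_s    # messages per second
    workers = math.ceil(required_rate / per_worker_rate)
    return max(min_workers, min(max_workers, workers))      # clamp to the allowed range


# Assumed: each worker handles 2,500 msgs/s and backlogs should clear within 2 minutes.
for label, rate, backlog in [("steady", 20_000, 1_200_000),
                             ("spike", 50_000, 6_000_000),
                             ("quiet", 5_000, 0)]:
    print(label, target_workers(rate, backlog, per_worker_rate=2_500, drain_target_s=120))
```

Under these assumptions the pool grows from 12 workers to 40 during the spike and shrinks to 2 when traffic is quiet, which is the behavior a managed autoscaler automates.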

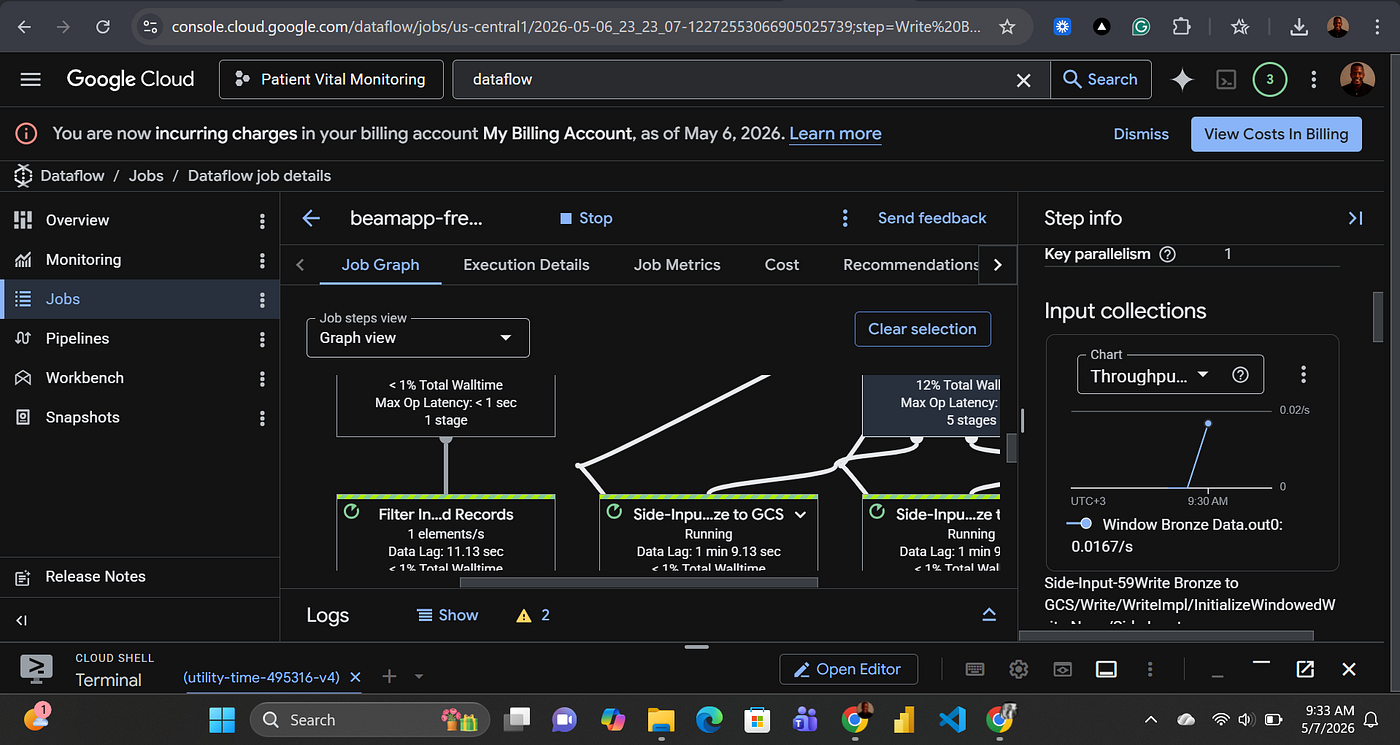

Practical Implementation: Building an IoT Pipeline

A common 2026 use case for Dataflow is processing IoT sensor data in real time. Imagine a fleet of 50,000 delivery vehicles, each transmitting temperature and location data every second.

The pipeline logic follows a predictable pattern:

Ingest: Connect to a Pub/Sub topic where telemetry data arrives.

Window: Group data into 5-minute fixed windows per vehicle ID.

Aggregate: Calculate the average temperature and detect if it exceeds a threshold (e.g., above 40°F for refrigerated goods).

Trigger: If a threshold is breached, immediately emit an "Alert" to a separate topic (via a side output) while continuing the 5-minute calculation.

Sink: Write the final aggregated metrics to BigQuery for long-term trend analysis.
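The steps above can be modeled in plain Python to show how windowed averages and immediate alerts coexist. This is a sketch, not Beam code: the tuple layout (vehicle_id, event_time_s, temp_f) is an assumption, and alerts fire on individual readings above 40°F rather than on the window average. In Beam, the alert stream would typically be a tagged side output (beam.pvalue.TaggedOutput) from the same DoFn, while the main output feeds the windowed average bound for BigQuery.

```python
from collections import defaultdict
from statistics import mean

WINDOW_S = 300        # 5-minute fixed windows
THRESHOLD_F = 40.0    # alert threshold for refrigerated goods


def process(readings):
    """readings: iterable of (vehicle_id, event_time_s, temp_f).
    Returns (averages, alerts): one average per (vehicle, window), plus one alert
    per reading above the threshold, emitted as soon as that reading is seen."""
    by_window = defaultdict(list)
    alerts = []
    for vehicle, ts, temp in readings:
        start = ts - ts % WINDOW_S                      # per-vehicle 5-minute window
        by_window[(vehicle, start)].append(temp)
        if temp > THRESHOLD_F:                          # "side output": immediate alert
            alerts.append({"vehicle": vehicle, "ts": ts, "temp_f": temp})
    averages = [
        {"vehicle": v, "window_start": s, "avg_temp_f": round(mean(temps), 2)}
        for (v, s), temps in sorted(by_window.items())
    ]
    return averages, alerts


readings = [
    ("truck-1", 0, 36.0), ("truck-1", 120, 41.5), ("truck-1", 299, 37.0),
    ("truck-2", 10, 35.0), ("truck-1", 310, 38.0),
]
averages, alerts = process(readings)
print(averages)
print(alerts)
```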

Dataflow's ML integration has significantly improved this process by allowing models to be called directly from within the pipeline, for example through Apache Beam's RunInference transform. A Dataflow job can therefore perform real-time sentiment analysis on social media feeds or predictive maintenance on equipment data without the latency of an external API call.
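The key idea behind in-pipeline inference is to load the model once per worker and reuse it for every element, instead of paying a network round trip per record. The plain-Python class below mirrors the shape of a Beam DoFn (a setup method called once per instance, a process method called per element); Beam's RunInference transform packages this pattern for real models. The linear "model", its weights, and the field names are hypothetical.

```python
class PredictiveMaintenanceFn:
    """Shaped like a Beam DoFn: setup() once per worker instance, process() per element."""

    def setup(self) -> None:
        # A real pipeline would load trained weights here (e.g. from Cloud Storage).
        # These weights are hypothetical placeholders for a tiny linear model.
        self.weights = {"vibration_mm_s": 0.6, "temp_c": 0.02}
        self.bias = -3.0

    def process(self, reading: dict):
        # Local, in-memory scoring: no external API call per element.
        score = self.bias + sum(w * reading[name] for name, w in self.weights.items())
        yield {**reading, "failure_risk": score > 0}


fn = PredictiveMaintenanceFn()
fn.setup()  # the expensive step, done once
readings = [
    {"machine": "m1", "vibration_mm_s": 2.0, "temp_c": 60.0},
    {"machine": "m2", "vibration_mm_s": 6.0, "temp_c": 70.0},
]
results = [out for r in readings for out in fn.process(r)]
print([(r["machine"], r["failure_risk"]) for r in results])
```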

What are the Core Challenges of Real-Time Pipelines?

While Dataflow abstracts infrastructure, engineers must still design for stateful recovery and backpressure. A real-time system is only as strong as its weakest link: if your sink (e.g., a SQL database) cannot keep up with the write volume from Dataflow, the pipeline experiences backpressure and data freshness lags.
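A back-of-the-envelope model shows why a slow sink turns into freshness lag. The sketch assumes a constant input rate, a sink that accepts at most a fixed number of writes per second, and first-in-first-out draining; the rates are made up.

```python
def simulate_backpressure(input_rate: int, sink_rate: int, seconds: int):
    """Per-second model. Returns (second, backlog, approx. freshness lag in seconds)."""
    backlog = 0
    history = []
    for t in range(1, seconds + 1):
        backlog += input_rate                 # new events arrive
        backlog -= min(backlog, sink_rate)    # the sink absorbs what it can
        # With FIFO draining, the newest event waits roughly backlog / sink_rate seconds.
        history.append((t, backlog, backlog / sink_rate))
    return history


print(simulate_backpressure(12_000, 10_000, 60)[-1])  # sink 20% too slow
print(simulate_backpressure(8_000, 10_000, 60)[-1])   # sink has headroom
```

In this model a 20% shortfall at the sink builds a 12-second freshness lag within one minute, and the lag keeps growing until the sink catches up or the input slows.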

Common architectural hurdles include:

Data Skew: If one key (like a high-traffic sensor) receives 90% of the events, the worker processing that key becomes a bottleneck. Dataflow can rebalance work across workers and flags detected hot keys in its logs, but because all elements for a single key are processed together, poor key selection in the code can still cause hot spots.

Side Input Scaling: Using external data (like a lookup table for customer names) inside the pipeline is efficient, but if that side input is too large, it can bloat worker memory and slow down processing.

Checkpoint Overhead: Maintaining "exactly-once" processing requires continuously persisting state and deduplication metadata. While Dataflow handles this internally, very complex stateful transformations can increase per-record latency.
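A common mitigation for data skew is two-stage (salted) aggregation: spread a hot key across several sub-keys, aggregate those partially in parallel, then merge. Beam's combiners expose this as a hot-key fanout option (with_hot_key_fanout on CombinePerKey in the Python SDK); the plain-Python sketch below only illustrates the idea, with a made-up event mix and fanout.

```python
import random
from collections import Counter, defaultdict


def salted_count(keys, fanout: int, rng: random.Random):
    """Two-stage count per key.
    Stage 1 spreads each key over `fanout` shards, so a hot key's events can be counted
    on several workers in parallel. Stage 2 merges at most `fanout` partials per key."""
    partials = Counter()
    for key in keys:
        partials[(key, rng.randrange(fanout))] += 1     # stage 1: partial count per shard
    totals = defaultdict(int)
    for (key, _shard), count in partials.items():
        totals[key] += count                             # stage 2: drop the shard and merge
    return dict(totals), partials


events = ["sensor-hot"] * 900 + ["sensor-a"] * 50 + ["sensor-b"] * 50
totals, partials = salted_count(events, fanout=10, rng=random.Random(42))
hot_shards = {shard: n for (key, shard), n in partials.items() if key == "sensor-hot"}
print(totals)
print("largest hot-key partial:", max(hot_shards.values()))
```

The final totals are unchanged by salting; what changes is that no single partial has to absorb all 900 hot-key events.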

Addressing these requires a shift in mindset from static capacity planning to dynamic monitoring. Google Cloud's integrated observability tools, such as the Dataflow job graph with its per-step metrics, let engineers visualize these bottlenecks in real time and pinpoint which transformation in the graph is falling behind.

Why Cost Optimization Matters in 2026

Streaming pipelines are notoriously expensive because they are always on, unlike batch jobs that may run for only an hour a day. To manage ROI, organizations use Flexible Resource Scheduling (FlexRS) for delay-tolerant batch workloads and Dataflow Prime's right fitting, which tailors worker resources to the memory or CPU needs of specific pipeline steps.

Strategic cost levers include:

Vertical Autoscaling: Automatically adjusting the memory available to workers based on the job's memory footprint, without restarting the pipeline.

Service-Based Shuffle: Moving the "shuffle" operation (shifting data between workers) off local worker disks and into a dedicated service layer (Streaming Engine for streaming jobs, Dataflow Shuffle for batch jobs), which reduces worker disk and IOPS costs.

Local Development: Many teams use local runners (such as Apache Beam's DirectRunner) for development and deploy to Dataflow only for production, significantly reducing pre-production spend.
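The "always-on" cost point is easiest to see with arithmetic. The hourly rate below is a hypothetical placeholder, not a Dataflow price, and the worker counts are invented; only the ratios matter.

```python
RATE_PER_WORKER_HOUR = 0.25  # hypothetical blended $/worker-hour, NOT a real Dataflow price
DAYS = 30


def monthly_cost(avg_workers: float, hours_per_day: float,
                 rate: float = RATE_PER_WORKER_HOUR, days: int = DAYS) -> float:
    """Worker-hours per month multiplied by a flat hourly rate."""
    return avg_workers * hours_per_day * days * rate


batch = monthly_cost(avg_workers=10, hours_per_day=1)             # nightly 1-hour batch job
streaming_peak = monthly_cost(avg_workers=4, hours_per_day=24)    # stream sized for peak load
streaming_auto = monthly_cost(avg_workers=2.5, hours_per_day=24)  # autoscaled average
print(batch, streaming_peak, streaming_auto)
```

Even a small always-on stream costs several times a short nightly batch job in this model, which is why levers that lower the average worker count or per-worker resources matter more for streaming than for batch.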

Effective cost management in 2026 isn't about choosing the cheapest engine; it's about choosing the engine that provides the best price-to-latency ratio. Industry reports suggest that while managed services like Dataflow carry a price premium, the reduction in DevOps headcount can result in a lower Total Cost of Ownership (TCO) than self-managed Spark clusters.

How to Manage Pipeline Evolution and Updates

One of the most overlooked aspects of real-time processing is updating code on the fly. You cannot simply "turn off" a streaming pipeline to update a transformation without risking data loss or a massive backlog.

Dataflow supports in-place updates, which allow a new version of the job to replace the running job and take over its state. This process involves:

Compatibility Checks: Ensuring the new pipeline graph can read the intermediate state (checkpoints) of the previous version.

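Drain Operations: For breaking changes, draining stops the old job from pulling new input and lets it finish processing all currently buffered data before the new job starts; unread messages remain in the Pub/Sub subscription for the new job, so no record is dropped. Note that draining closes open windows early, so some in-flight aggregations may be emitted incomplete.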

Lifecycle Management: CI/CD for Dataflow has matured, and automated blue-green deployments are becoming common for sensitive financial and healthcare pipelines.
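One part of the compatibility check is making sure every stateful step in the running job still has a counterpart in the replacement. The sketch below is a hypothetical pre-flight check in that spirit, not Dataflow's real check, which also covers other aspects such as coder changes. The explicit mapping plays the role of Dataflow's transform name mapping option (--transform_name_mapping in the Python SDK), which tells the service that a step was renamed; the step names are invented.

```python
def unmapped_steps(old_stateful_steps, new_steps, name_mapping=None):
    """Return stateful steps of the running job that have no counterpart in the new job.
    A step matches if the new job has a step with the same name, or with the name
    given for it in name_mapping (old name -> new name)."""
    name_mapping = name_mapping or {}
    return [step for step in old_stateful_steps
            if name_mapping.get(step, step) not in new_steps]


old = ["ReadEvents", "WindowedCounts", "DedupeState"]
new = {"ReadEvents", "WindowedCountsV2", "DedupeState", "WriteToBQ"}

print(unmapped_steps(old, new))                                          # rename not declared
print(unmapped_steps(old, new, {"WindowedCounts": "WindowedCountsV2"}))  # rename declared
```

An empty result means an in-place update is plausible as far as step names go; otherwise, declare the rename or fall back to a drain and relaunch.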

This lifecycle rigor ensures that "real-time" doesn't just mean fast data, but also continuous availability. If your pipeline goes down for a code update, the "real-time" promise is broken. Modern Dataflow implementations treat the pipeline code as a living service, subject to the same uptime requirements as a frontend web server.

Why Companies Are Moving Away from Batch

The transition from batch to real-time isn't just about speed; it's about the granularity of response. Legacy systems that run every 24 hours lose the nuance of intra-day fluctuations. For instance, in fraud detection, a batch process might identify a stolen card 12 hours after the first transaction—long after the funds are gone. A real-time Dataflow pipeline can block the transaction at the point of sale.
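A point-of-sale decision like this usually comes down to a small amount of per-key state. The plain-Python sketch below keeps recent transaction timestamps per card and blocks a transaction when the card exceeds a velocity limit; in Beam this would typically use per-key state in a stateful DoFn. The rule (more than 3 transactions within 60 seconds), the card IDs, and the assumption that each card's events arrive in timestamp order are all hypothetical.

```python
from collections import defaultdict, deque

MAX_TXNS = 3     # hypothetical rule: more than 3 transactions...
WINDOW_S = 60    # ...within 60 seconds on one card is treated as suspicious


class VelocityCheck:
    """Keeps recent transaction timestamps per card, like per-key state in a stateful DoFn."""

    def __init__(self) -> None:
        self.recent = defaultdict(deque)

    def decide(self, card: str, ts: int) -> str:
        q = self.recent[card]
        while q and ts - q[0] >= WINDOW_S:
            q.popleft()            # expire transactions that fell out of the 60 s range
        q.append(ts)
        return "BLOCK" if len(q) > MAX_TXNS else "ALLOW"


check = VelocityCheck()
stream = [("A", 0), ("A", 10), ("B", 25), ("A", 20), ("A", 30), ("A", 100)]
decisions = [check.decide(card, ts) for card, ts in stream]
print(decisions)
```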

Furthermore, real-time analytics provides a foundation for business agility by enabling sub-second query latency for user-facing dashboards. When data is ingested and queried instantly, companies can pivot their digital marketing spend or supply chain logistics based on what is happening right now rather than what happened yesterday.

The Takeaway

Real-time data processing is no longer a luxury for tech giants—it is the baseline expectation for modern data engineering. By moving to a Dataflow-centric architecture, you reduce the operational burden of managing distributed infrastructure while gaining the ability to act on data at the moment it matters most. As ML integration becomes standard in streaming pipelines throughout 2026, the gap between "knowing" and "acting" will continue to disappear.

Frequently Asked Questions

What is the difference between Google Cloud Dataflow and Apache Beam?

Apache Beam is the open-source SDK (unified model) used to write the code for your pipeline. Google Cloud Dataflow is the fully managed service (the runner) that executes that code in the cloud. You write your logic once in Beam and can, in principle, run it on any supported runner, such as Dataflow, Flink, or Spark, although feature support varies between runners.

Can Dataflow handle both batch and streaming in the same pipeline?

Yes, this is one of its primary strengths. The Apache Beam model is designed for unified processing: the same pipeline code can process historical "batch" data from Cloud Storage or real-time "streaming" data from Pub/Sub, and a streaming pipeline can also combine both, for example by enriching a Pub/Sub stream with reference data read from Cloud Storage.
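The "write once" idea can be illustrated in plain Python: one transform function that works unchanged on a finite list (batch) and on an endless generator (stream), using a small lookup table in the way a side input would be used. This is a conceptual sketch, not Beam code; the store IDs, the region table, and the fake unbounded source are hypothetical.

```python
import itertools
from typing import Iterable, Iterator


def enrich(events: Iterable[dict], regions: dict) -> Iterator[dict]:
    """One transform, written once: works on bounded or unbounded input."""
    for e in events:
        yield {**e, "region": regions.get(e["store"], "unknown")}


def pubsub_like_stream() -> Iterator[dict]:
    # Hypothetical unbounded source: it never ends, so callers take only what they need.
    for i in itertools.count():
        yield {"store": "s1" if i % 2 == 0 else "s9", "seq": i}


regions = {"s1": "EMEA"}                     # e.g. reference data read from Cloud Storage
historical = [{"store": "s1", "seq": -1}]    # bounded "batch" input

batch_out = list(enrich(historical, regions))
stream_out = list(itertools.islice(enrich(pubsub_like_stream(), regions), 3))
print(batch_out)
print(stream_out)
```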

How does Dataflow handle "exactly-once" processing?

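Dataflow uses a combination of durable state storage and unique record IDs. If a worker fails during a transformation, uncommitted work is discarded and retried from the last committed state, and record IDs are used to deduplicate, so retries do not create duplicate results within the pipeline, which is a common problem in simpler streaming systems. Duplicate-free results in the final sink also depend on the sink itself: writes should be idempotent or transactional, because a retried write to a non-idempotent sink can still produce duplicates.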
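Whether a retry shows up as duplicates in the final sink depends on how the sink handles replayed writes. The plain-Python sketch below simulates a worker that fails partway through and a retry that replays the records (at-least-once delivery into the sink), then compares an append-only sink with one that writes idempotently by record ID. It is a conceptual model, not Dataflow's internal mechanism; the record IDs and the doubling "transformation" are invented.

```python
def deliver_with_retry(records, write, fail_after: int) -> None:
    """First attempt crashes after `fail_after` writes; the retry replays every record
    from the last commit point (here, the start), as an at-least-once system would."""
    for i, (record_id, value) in enumerate(records):
        if i == fail_after:
            break                          # simulated worker failure
        write(record_id, value * 2)        # the "transformation"
    for record_id, value in records:       # retry
        write(record_id, value * 2)


records = [("r1", 1), ("r2", 2), ("r3", 3)]

append_only = []
deliver_with_retry(records, lambda rid, v: append_only.append((rid, v)), fail_after=2)

idempotent = {}


def upsert(rid, v):
    idempotent[rid] = v                    # keyed by record ID: a replay overwrites


deliver_with_retry(records, upsert, fail_after=2)

print(len(append_only), append_only)       # duplicates from the replay
print(idempotent)                          # one row per record ID
```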