How Embedded AI Works: Architectures and Trends for 2026

Learn how AI works in embedded systems through NPU acceleration, TinyML, and physical AI. Discover why 2026 hardware can be up to 40x more efficient for edge inference.

Gokulaselvan Thamilvanan • May 8, 2026

In 2026, the integration of Artificial Intelligence into embedded systems has transitioned from a specialized research niche to a prerequisite for industrial and consumer automation. Modern embedded AI works by shifting the heavy lifting of neural network inference from high-power cloud servers directly onto localized silicon, such as Microcontrollers (MCUs) and System-on-Chips (SoCs). This transition, often termed "Physical AI" by industry leaders like Arm Holdings, focuses on three primary pillars: dedicated hardware acceleration, model compression techniques like TinyML, and real-time execution environments that bypass the latency of the cloud.

What enables AI to run on resource-constrained hardware?

Embedded AI works through a combination of hardware-level optimization and software-side "thinning" of neural networks to fit within the megabyte-scale memory envelopes typical of edge devices. Unlike cloud AI, which utilizes massive GPU clusters with hundreds of gigabytes of VRAM, embedded systems rely on Neural Processing Units (NPUs), which are 10-40x more efficient than standard CPUs for AI inference tasks.

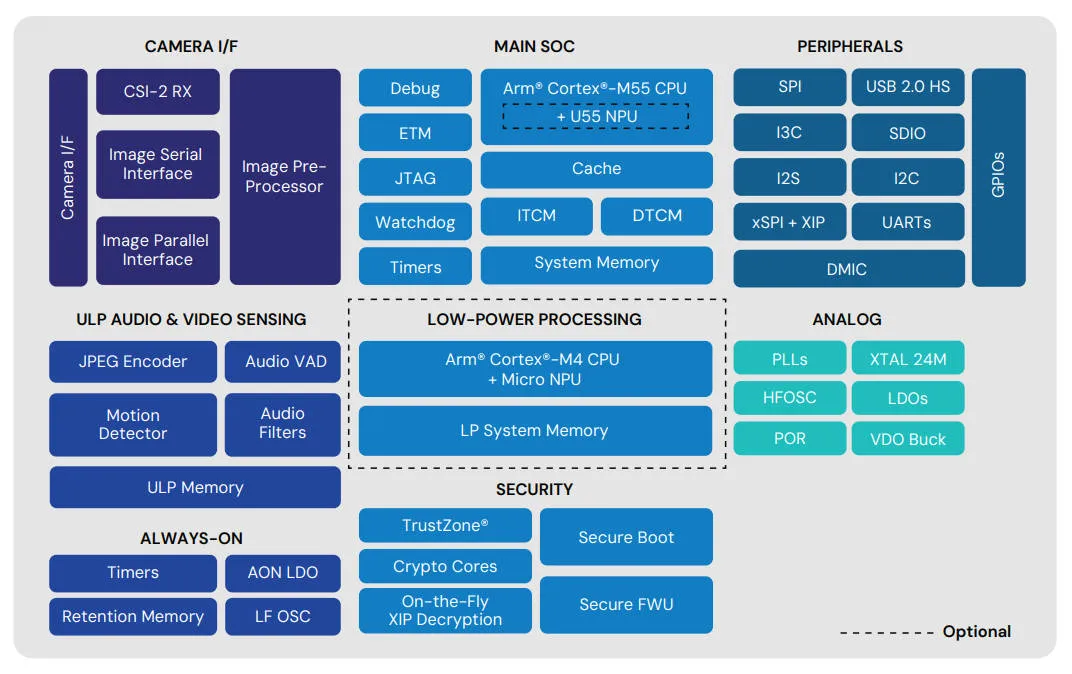

The architecture of an AI-enabled embedded system typically follows a hierarchical processing flow. Sensory data (vision, vibration, or audio) is first pre-processed by a Digital Signal Processor (DSP) or the CPU. Once cleaned, the data is pushed to a dedicated NPU or a "Vector Extension" within the CPU core (such as Arm's Helium technology), which performs the high-volume matrix multiplications (the "math" of AI) with minimal power draw. According to Renesas, this dedicated acceleration delivers an orders-of-magnitude performance uplift over traditional scalar CPU processing, enabling complex tasks like pedestrian detection or keyword spotting to run in sub-1W power envelopes.
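The two-stage flow described above can be sketched in plain Python. This is only an illustration: `preprocess` stands in for the DSP or CPU stage and `dense_layer` for the accelerator stage, and both names, the sample values, and the weights are assumptions made up for this example. A real NPU would run the multiply-accumulate loop in parallel hardware rather than in software.

```python
def preprocess(samples):
    """Stand-in for the DSP/CPU stage: remove the DC offset and scale to [-1, 1]."""
    mean = sum(samples) / len(samples)
    centered = [s - mean for s in samples]
    peak = max(abs(c) for c in centered) or 1.0  # avoid dividing by zero on flat input
    return [c / peak for c in centered]


def dense_layer(inputs, weights, biases):
    """Stand-in for the NPU stage: one fully connected layer followed by ReLU."""
    outputs = []
    for row, bias in zip(weights, biases):
        acc = bias
        for x, w in zip(inputs, row):
            acc += x * w  # one multiply-accumulate (MAC) step
        outputs.append(max(0.0, acc))  # ReLU activation
    return outputs


# Hypothetical raw ADC readings and made-up weights, for illustration only.
raw = [512, 530, 498, 520]
features = preprocess(raw)
scores = dense_layer(
    features,
    weights=[[0.5, -0.25, 0.1, 0.0], [-0.5, 0.25, 0.0, 0.1]],
    biases=[0.0, 0.1],
)
print(scores)
```

The inner loop is the multiply-accumulate pattern that dedicated accelerators are built around.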

How do NPUs differ from traditional DSPs in AI tasks?

The primary difference between a Neural Processing Unit (NPU) and a Digital Signal Processor (DSP) lies in their architectural specialization: NPUs are hard-wired for the specific nonlinear operations and data flows of neural networks, whereas DSPs are flexible mathematical generalists. While a DSP excels at repetitive mathematical tasks and signal filtering, an NPU is significantly more efficient for deep learning inference because it utilizes optimized memory access patterns specifically for weight and activation loading.

| Feature | Digital Signal Processor (DSP) | Neural Processing Unit (NPU) |
|---|---|---|
| Primary Operations | Fast Fourier Transforms (FFT), MAC | Multiply-Accumulate (MAC) for Tensors |
| Data Movement | Linear, high-bandwidth streaming | Non-linear, optimized for model weights |
| Instruction Set | General-purpose signal math | Specialized AI instructions (Convolution/ReLU) |
| Power Intensity | Low, but scales poorly with model size | Ultra-low per inference operation |
| Flexibility | High: usable for audio, motor, and RF | Low: highly specialized for neural networks |

A 2026 comparison by Synopsys noted that while a vector DSP can handle some AI processing in the "< 1 TOPS" (Trillion Operations Per Second) range, modern industrial applications requiring higher throughput are gravitating toward NPU-DSP hybrids. This "best of both worlds" approach uses the DSP for sensor fusion and pre-conditioning, then hands the heavy inference workload to the NPU to maximize battery life and minimize thermal throttling.

Why is TinyML the "brain" of the modern microcontroller?

TinyML (Tiny Machine Learning) is the software discipline that makes embedded AI possible by reducing model size without catastrophic accuracy loss. In 2026, developers no longer try to move large models to the edge; instead, they use techniques like Quantization and Pruning during the training phase to ensure the resulting model consumes only hundreds of kilobytes.

Quantization: This process converts high-precision 32-bit floating-point numbers into lower-precision 8-bit or even 4-bit integers. Moving from 32-bit to 8-bit values cuts weight storage by 75% (87.5% at 4 bits), typically with only a marginal impact on prediction accuracy.

Pruning: Developers remove "dead" neurons or connections in a neural network that do not contribute significantly to the output.

Knowledge Distillation: A smaller "student" model is trained to mimic the behavior of a larger, pre-trained "teacher" model.
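To make the first two techniques concrete, here is a minimal Python sketch. It assumes symmetric per-tensor int8 quantization and simple magnitude-based pruning. Production toolchains typically use more elaborate schemes (per-channel scales, calibration data, retraining after pruning), and the function names and weight values below are made up for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights, smallest magnitudes first."""
    k = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    dropped = set(order[:k])
    return [0.0 if i in dropped else w for i, w in enumerate(weights)]


def memory_saving(bits_before, bits_after):
    """Fraction of weight storage saved by switching bit widths."""
    return 1 - bits_after / bits_before


weights = [0.8, -0.05, 0.3, -1.27, 0.01, 0.6]  # illustrative values only
q, scale = quantize_int8(weights)
print(q)                                  # integer codes stored on the device
print(dequantize(q, scale))               # approximate reconstruction
print(prune_by_magnitude(weights, 0.5))   # half of the weights set to zero
print(memory_saving(32, 8), memory_saving(32, 4))
```

Each reconstructed weight differs from the original by at most half of `scale`, which is why accuracy usually degrades only slightly. Pruned zeros save memory only if the model is stored in a sparse or compressed format.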

By leveraging these strategies, companies like Arm are now deploying distributed agents that can perform "always-on" multimodal experiences—such as recognizing both a voice command and a hand gesture simultaneously—within a single, low-power MCU.

How does physical AI influence industrial automation?

"Physical AI" refers to AI that lives inside moving machines—robots, autonomous vehicles, and factory equipment—that must sense and react to physical environments in real-time. The move toward Arm's AGI CPU architecture in 2026 represents a shift toward "turnkey" AI silicon that allows machines to handle complex navigation and human-interaction tasks locally.

In industrial settings, this technology drives Predictive Maintenance. Rather than sending raw vibration data from a turbine to the cloud (which is bandwidth-intensive), an embedded AI model monitors the data locally. It identifies the unique "signature" of a bearing failure before it happens, only alerting the central system when a fault is imminent. This reduces data transmission by over 90% and ensures the machine can shut down safely even if the factory's network connection is lost.
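The "monitor locally, alert rarely" pattern can be sketched as follows. Note the substitution: instead of a trained neural network, this example uses a simple statistical threshold on the RMS vibration level, and the `baseline_count`, `k`, and 5% noise floor parameters are assumptions chosen for illustration. The point is only the data flow: raw windows stay on the device, and only alert indices leave it.

```python
import math
import statistics


def rms(window):
    """Root-mean-square amplitude of one window of vibration samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


def monitor(windows, baseline_count=5, k=3.0):
    """Return indices of windows whose RMS exceeds the learned baseline limit.

    The first `baseline_count` windows are assumed to be healthy and define
    the baseline. The 5% floor keeps a perfectly steady baseline from
    producing a limit so tight that tiny noise triggers alerts.
    """
    baseline = [rms(w) for w in windows[:baseline_count]]
    mean = statistics.mean(baseline)
    spread = max(statistics.pstdev(baseline), 0.05 * mean)
    limit = mean + k * spread
    return [
        i
        for i, w in enumerate(windows[baseline_count:], start=baseline_count)
        if rms(w) > limit
    ]


# Synthetic data: eight healthy windows, one faulty window, one healthy window.
healthy = [1.0, -1.0, 1.0, -1.0]
faulty = [3.0, -3.0, 3.0, -3.0]
alerts = monitor([healthy] * 8 + [faulty] + [healthy])
print(alerts)  # only these indices would be reported upstream
```

Because the decision is made on the device, the same check can also trigger a safe shutdown when the network is unavailable.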

What are the security implications of on-device AI?

Security in embedded AI has evolved in 2026 to focus on "Model Safeguarding" and "Data Sovereignty." Because inference happens locally, sensitive data—such as high-fidelity audio from a smart home device or medical data from a wearable—never leaves the machine. This provides a natural privacy barrier that cloud-based AI cannot match.

However, the "Edge-first" design also introduces new attack vectors. Attackers might attempt model extraction attacks (sometimes loosely called "Inference Attacks") to reverse-engineer the proprietary models stored in the chip's flash memory. To counter this, newer hardware architectures, such as the IEEE-standardized secure AI infrastructure, incorporate hardware-level encryption and Trusted Execution Environments (TEEs) that isolate the AI weights and biometric data from the rest of the system's firmware.

How do hardware-software co-design principles improve inference?

The efficiency of embedded AI in 2026 is largely a result of hardware-software co-design, where the neural network architecture is developed in tandem with the physical silicon it will inhabit. In the past, engineers would design a model in a high-level framework like PyTorch and then struggle to "squeeze" it onto a chip. Today, automated tools like Arm's Ethos-U compiler analyze the target NPU's specific memory layout and instruction set before the model is even finalized.

This co-design approach allows for "zero-copy" data movement. In traditional systems, data is frequently moved between the CPU cache and the main system RAM, a process that consumes significant energy. In AI-optimized embedded systems, the NPU features local SRAM buffers that keep the model's weights and the incoming sensor data in the same physical vicinity. According to NXP Semiconductors, this spatial proximity reduces the "energy-per-inference" metric by up to 60%, enabling devices to run complex vision models on energy harvested from the sun or ambient vibrations.

Furthermore, this synergy enables Heterogeneous Execution. If a specific layer of a neural network—such as a custom activation function—is not supported by the NPU's hard-wired logic, the system can dynamically offload just that single layer to the CPU or DSP. This ensures that the AI application never "breaks," providing a fallback mechanism that maintains 99.9% uptime for safety-critical systems like autonomous medical injectors or industrial drone controllers.
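A minimal sketch of the per-layer fallback idea, assuming a hypothetical set of NPU-supported operators. The operator names, the `NPU_SUPPORTED_OPS` set, and the `assign_backends` helper are invented for this example and do not reflect any vendor's actual API. Real compilers also group adjacent layers to limit transfers between processors.

```python
# Hypothetical operator set; real NPUs publish their own supported-op lists.
NPU_SUPPORTED_OPS = {"conv2d", "relu", "dense"}


def assign_backends(layers, npu_ops=NPU_SUPPORTED_OPS):
    """Map each (name, op) layer to 'npu' when supported, otherwise to 'cpu'."""
    return [(name, "npu" if op in npu_ops else "cpu") for name, op in layers]


# Made-up model with one custom activation the NPU cannot run.
model = [
    ("conv1", "conv2d"),
    ("act1", "relu"),
    ("act2", "custom_swish"),
    ("fc", "dense"),
]
plan = assign_backends(model)
for name, backend in plan:
    print(f"{name}: {backend}")
```

Only `act2` is routed to the CPU here, and every other layer stays on the accelerator.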

What role does memory hierarchy play in edge AI latency?

Memory bandwidth is often the primary bottleneck for embedded AI, rather than raw compute power. Specifically, the latency between the On-chip Flash and the NPU's internal registers determines whether a device can react in 10 milliseconds or 100 milliseconds. In 2026, many embedded AI chips utilize "In-Memory Computing" (IMC) or specialized "Tightly Coupled Memories" (TCM) to solve this.

TCM allows the processor to access AI model weights with zero wait-states, which is essential for "High-Frequency AI" tasks like motor control in high-speed robotics. By placing the most frequently accessed parts of the neural network in TCM, developers can ensure deterministic execution—meaning the AI always arrives at its conclusion within a guaranteed timeframe. This determinism is why embedded AI is now trusted for tasks that were previously too risky for neural networks, such as managing the balance of bipedal humanoid robots on uneven terrain.
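One way to decide which tensors go into TCM is a greedy ranking by accesses per byte, sketched below. This is a simplified heuristic under assumed numbers, not an optimal placement and not how any particular toolchain works. On real parts, placement is usually expressed through linker sections or compiler attributes, and the tensor names, sizes, and access counts here are hypothetical.

```python
def place_in_tcm(tensors, tcm_bytes):
    """Greedily pick tensors for TCM, highest accesses-per-byte first.

    `tensors` is a list of (name, size_bytes, accesses_per_inference) tuples.
    Returns the names placed in TCM and the number of bytes left free.
    """
    ranked = sorted(tensors, key=lambda t: t[2] / t[1], reverse=True)
    placed, free = [], tcm_bytes
    for name, size, _accesses in ranked:
        if size <= free:
            placed.append(name)
            free -= size
    return placed, free


# Hypothetical model tensors and a 32 KiB TCM budget.
tensors = [
    ("conv1_w", 4096, 64),
    ("conv2_w", 16384, 32),
    ("fc_w", 65536, 1),
    ("bias", 256, 64),
]
placed, free = place_in_tcm(tensors, tcm_bytes=32 * 1024)
print(placed, free)
```

In this example the large, rarely accessed `fc_w` tensor stays in slower memory while the small, frequently accessed tensors get zero-wait-state access.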

The shift toward Unified Memory Architectures in modern SoCs has further bridged the gap. By allowing the CPU, GPU, and NPU to share a single, high-speed pool of LPDDR5X memory, the system avoids the need to duplicate large vision buffers across different memory banks. This not only saves precious silicon area but also significantly lowers the bill of materials (BOM) for manufacturers, making sophisticated AI features accessible in entry-level consumer electronics.

Frequently Asked Questions

Can I upgrade the AI model on an embedded device after it’s sold?

Yes. Modern embedded systems support Over-the-Air (OTA) updates. However, the new model must be re-quantized and re-verified for the specific hardware target to ensure it doesn't exceed the memory or power limits of the original device.
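As a rough illustration of that re-verification step, the sketch below checks an update's integrity and resource footprint before it is accepted. The function name, budget parameters, and sizes are assumptions for this example. A production OTA pipeline would also verify a cryptographic signature and validate accuracy on the target hardware.

```python
import hashlib


def check_update(model_blob, expected_sha256, flash_budget, arena_bytes, ram_budget):
    """Return a list of reasons to reject the update; an empty list means it fits."""
    problems = []
    if hashlib.sha256(model_blob).hexdigest() != expected_sha256:
        problems.append("checksum mismatch")
    if len(model_blob) > flash_budget:
        problems.append("model exceeds flash budget")
    if arena_bytes > ram_budget:
        problems.append("working memory exceeds RAM budget")
    return problems


# Hypothetical 1 KiB model image and device budgets.
blob = bytes(1024)
digest = hashlib.sha256(blob).hexdigest()
print(check_update(blob, digest, flash_budget=2048, arena_bytes=512, ram_budget=1024))
```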

Does embedded AI require an internet connection?

No. One of the core benefits of embedded AI is "Offline Autonomy." Once the model is flashed onto the device, it can perform inference independently of any network, making it ideal for remote industrial sites, agricultural sensors, and deep-sea robotics.

Which is better for AI: an MCU or an MPU?

It depends on the complexity of the task. Microcontrollers (MCUs) are best for low-power, simple tasks like keyword spotting or vibration analysis. Microprocessors (MPUs) with dedicated NPUs are better for vision-heavy tasks like object tracking or autonomous navigation, where higher throughput is required.

Is embedded AI the same as Edge Computing?

They are related but distinct. Edge Computing refers to the broader topology of processing data near the source. Embedded AI (or Edge AI) refers specifically to the deployment of machine learning models within those edge devices.

What is the future of AI in embedded systems beyond 2026?

The horizon for embedded AI looks toward Collaborative Computing and Quantum-Inspired Architectures. Researchers are already exploring quantum-inspired approaches to deploying intelligence on resource-constrained devices, which could allow for even more efficient optimization of complex schedules and routing in logistics robots.

As we move toward the late 2020s, the "ChatGPT moment for physical AI" is expected to mature. We will see systems that not only infer from pre-trained models but also perform "On-device Learning," where a robot or smart tool adapts its behavior to its specific user or environment without needing a firmware update. This leap from "static inference" to "dynamic learning" will represent the next major evolution in how we interact with the machines that populate our daily lives.

By moving intelligence into the silicon itself, embedded AI is making the world safer, more private, and significantly more efficient. Whether it is a wearable that detects a heart arrhythmia in real-time or a factory robot that avoids a collision, the "math in the chip" is fast becoming the heartbeat of modern technology.