The integration of Cloud, DevOps, and MLOps has transitioned from a competitive advantage to a fundamental operational requirement in 2026. As Gartner projects that 80% of organizations will adopt AI-native development platforms by the end of this year, the focus has shifted from mere infrastructure provisioning to autonomous orchestration and "Preemptive Cybersecurity."

For engineering leaders, the challenge is no longer just "moving to the cloud"—it is managing the exponential complexity of multi-agent systems, sovereign infrastructure, and the convergence of traditional software delivery with large-scale model lifecycles.

How is AI-Native Platform Engineering Replacing Traditional DevOps?

In 2026, manual CI/CD pipelines are increasingly viewed as technical debt, replaced by Internal Developer Platforms (IDPs) that use generative AI to manage environment provisioning and security audits. Gartner identifies AI-native development platforms as a top strategic trend, enabling software teams to evolve into smaller, hyper-augmented units that spend 70% less time on repetitive infrastructure tasks.

These platforms do not just automate code deployments; they predict infrastructure failures and suggest optimizations before they impact production. The traditional "you build it, you run it" mantra has shifted toward a model where AI runs the baseline, and humans focus on high-level architectural design and policy-driven governance.

What defines the convergence of MLOps and LLMOps in 2026?

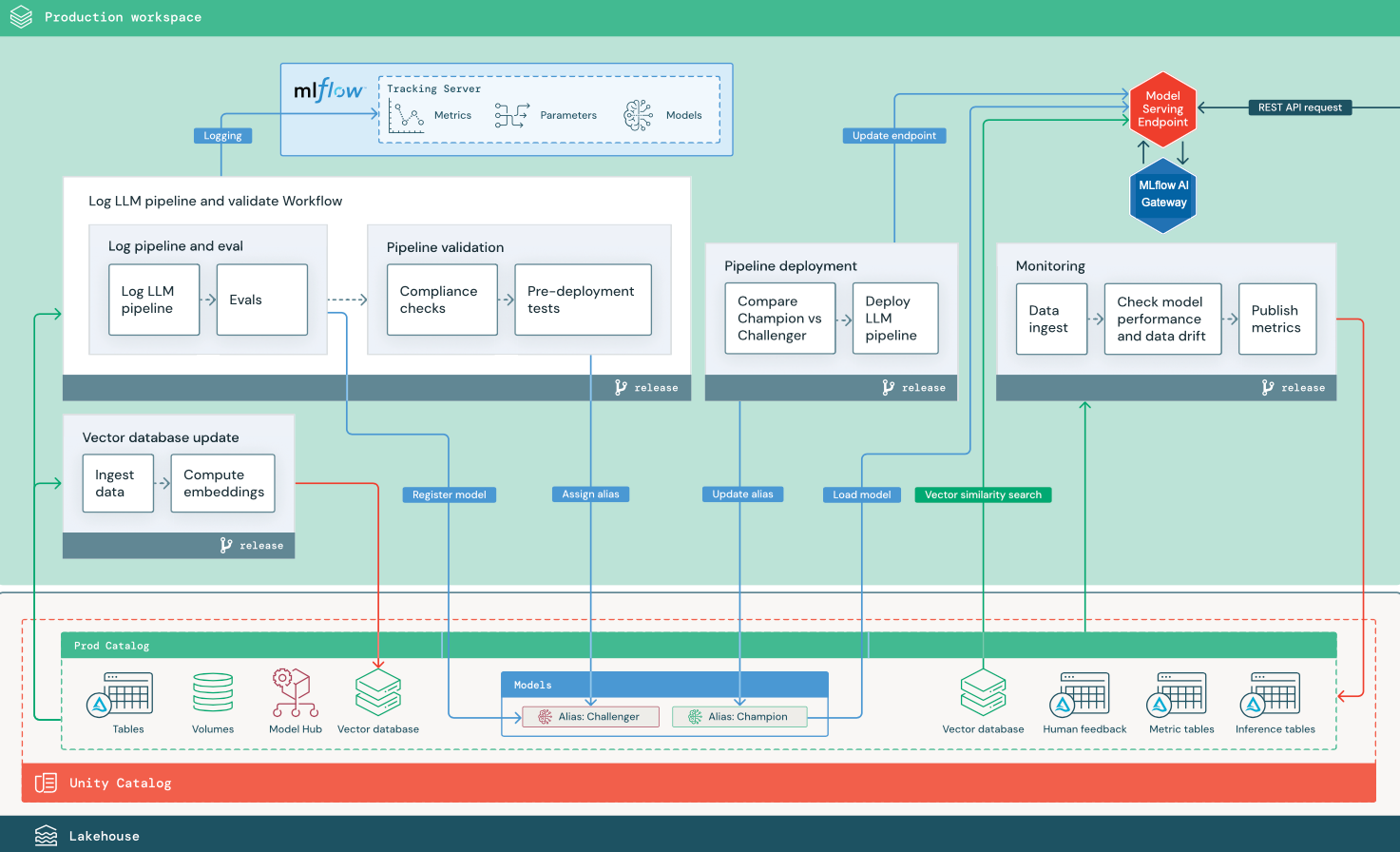

The operational boundary between machine learning and general software engineering has vanished, leading to a unified framework often called AgentOps. While MLOps focused on stable model training and deployment, the rise of agentic architectures has introduced new requirements for long-context reasoning, tool-calling observability, and multi-step execution traces.

Key infrastructure changes in 2026 include:

Vector Layer Integration: Direct integration of vector databases (like Milvus or Weaviate) into the core DevOps data fabric for retrieval-augmented generation (RAG).

Token-Cost Observability: FinOps tools now track "Total Token Cost" (TTC) alongside standard CPU/RAM usage to manage inference spiraling.

Autonomous Guardrails: CI/CD pipelines now include "Reasoning Trace Audits" where models are tested not just for output accuracy, but for the logical steps taken to reach an answer.

MLOps vs. LLMOps: Operational Requirements Comparison

Operational Area | MLOps Focus (Traditional) | 2026 LLM/AgentOps Focus | Key Differentiator |

|---|---|---|---|

Pipeline Trigger | New training data or code drift. | Prompt engineering updates or tool-calling failure. | Real-time behavior adjustment vs. retraining cycles. |

Monitoring | Accuracy, precision, and feature drift. | Hallucination rates, token latency, and reasoning traces. | Qualitative safety metrics over quantitative error rates. |

Infrastructure | GPU clusters for batch training. | High-performance inference endpoints at the edge. | Latency at the point of interaction is the primary KPI. |

Data Loop | Static datasets for supervised learning. | Dynamic context windows and real-time RAG updates. | Integration of live enterprise data into every request. |

Why is Sovereign Cloud the new standard for AI Infrastructure?

A significant shift in 2026 is the rapid rise of Geopatriation, where enterprises move sensitive AI workloads out of global public clouds and into regional or sovereign infrastructure. Gartner forecasts that spending on sovereign cloud infrastructure (IaaS) will reach $80 billion in 2026, representing a 35.6% increase from the previous year.

This trend is driven by two factors: geopolitical risk and data residency laws for AI training. Large organizations now operate on "Distributed Cloud" models where the model's brain resides on a major hyperscaler (like Oracle, a three-year leader in Gartner's Magic Quadrant), but the inference and private data layers are hosted strictly within national borders.

How do DORA metrics evolve in the era of AI-augmented Delivery?

While DORA metrics—Deployment Frequency, Lead Time for Change, Change Failure Rate, and Time to Restore Service—remain the industry standard, they are no longer sufficient on their own. In 2026, high-performing teams use the DX Core 4 framework to balance delivery speed with the quality of AI-generated code.

Elite teams are now tracking "Rework Rate"—how much AI-suggested code is scrapped or heavily edited—to ensure that high deployment frequency isn't masking a decline in actual technical health. The goal has shifted from "How fast can we ship?" to "How sustainable is our shipping speed?" as AI-generated commits threaten to overwhelm manual code reviews.

The Shift to AI-Specialized Compute and Liquid Infrastructure

The standard "general-purpose" cloud instance is becoming a relic of the past as the industry pivots toward AI-optimized infrastructure. In 2026, the convergence of Cloud and DevOps has birthed "Liquid Infrastructure"—software-defined resources that can reconfigure themselves in real-time based on the specific architectural needs of an LLM request.

When a multi-agent system initiates a high-compute reasoning task, the underlying cloud fabric, managed by AI-augmented DevOps agents, automatically scales up specialized accelerators (H100/H200 equivalents or custom ASICs) and optimizes the networking fabric to reduce inter-node latency. This is no longer just "Auto-scaling"; it is Intent-Based Orchestration. The developer simply defines the latency and accuracy targets in a YAML file, and the platform engineering layer negotiates the hardware allocation across diverse cloud regions to find the most cost-effective path.

Why 2026 is the Year of the Custom Silicon Cloud

Major hyperscalers have moved beyond commoditized hardware. Google Cloud (TPU v6), AWS (Trainium3), and Azure (Maia 200) now provide deep vertical integration between their proprietary silicon and their MLOps tooling. For enterprises, Gartner identifies AI-native development platforms as a way to leverage this vertically integrated hardware to reduce training costs by up to 40%.

However, this creates a new "Silicon Lock-in" challenge. DevOps teams are now tasked with building "Hardware Abstraction Layers" so that models can remain portable across different custom chipsets. The emergence of open-source frameworks has become a critical piece of the 2026 DevOps toolkit, allowing engineering teams to compile models for various hardware backends without rewriting their entire pipeline.

FinOps 3.0: Managing the "Token Economy" and Inference Sprawl

The financial management of cloud resources, formerly focused on reserved instances and spot pricing, has evolved into FinOps 3.0, where the primary currency is the "Token per Second" (TPS) and "Cost per Successful Inference." As generative AI features move from pilot to production, companies are discovering that the recursive nature of AI agents can lead to exponential cost surges in a matter of hours.

Strategic leaders in 2026 are implementing "Token Budgets" at the department level. Much like a traditional budget, departments are allocated a specific quota of high-reasoning tokens. When the quota is reached, the system automatically degrades service to faster, cheaper, and smaller models unless a financial override is approved.

The Rise of "Economic Circuit Breakers"

To prevent "AI Runaway"—a scenario where a looping agent consumes thousands of dollars in a single hour—DevOps teams have integrated economic circuit breakers directly into production environments. These systems monitor the Burn Velocity. If the real-time spend exceeds a 300% deviation from the predicted baseline, the Agentic platform enters a "Safety Pause" mode, requiring a human operator to validate the agent's logic before continuing.

This trend is backed by industry shifts toward AI financial transparency, mandating that every LLM response be tagged with exact cost metadata. This granular visibility allows companies to perform true Unit Economics analysis: "Does this $0.50 AI-generated email lead to enough customer lifetime value to justify the cost?"

Preemptive Cybersecurity: When DevOps Meets AI Defense

The biggest threat to cloud security in 2026 isn't just a misconfigured cloud bucket; it's "Prompt Injection at Scale" and "Agent Hijacking." As agents gain tool-calling permissions—the ability to read emails, write to databases, and execute code—they become high-value targets for attackers.

Current DevOps practices have integrated AI Red Teaming as a mandatory step in the deployment pipeline. Before any agent is promoted to production, it must pass an automated "Gauntlet" of adversarial prompts designed to leak system instructions or bypass safety filters. Gartner predicts that by 2028, over 50% of enterprises will use specialized AI security platforms to mitigate these risks.

Implementation of "Identity for Machines" (M2M)

In 2026, we have moved beyond simple API keys. we are now using Dynamic Identity for Agents. Every time an AI agent starts a task, it is issued a short-lived, task-specific cryptographic identity. This identity is restricted to the specific data and tools required for that single interaction. If the agent is compromised during its execution, the attacker finds themselves in a "Sandboxed Identity" with no lateral movement capabilities.

The Human Element: The "Engineering Conductor" Role

Despite the massive increase in automation, the human role in the Cloud, Devops, and MLOps cycle hasn't disappeared—it has shifted. We are seeing the rise of the "Engineering Conductor." Instead of writing lines of code, these senior engineers orchestrate fleets of AI workers, spending their time defining the constraints and guardrails of the system.

By the end of 2026, the distinction between a "Cloud Engineer" and an "ML Engineer" will be historical. There will only be AI Systems Engineers—professionals who can navigate the entire stack from silicon to the agentic user experience.

What is the 2026 Implementation Roadmap for Engineering Leaders?

Modernizing Cloud, DevOps, and MLOps requires a phased approach that moves from manual silos to a unified "Cognitive Fabric."

Phase 1: Foundation (Months 1–3): Audit existing pipelines for "AI readiness." Establish a Unified Data Fabric where MLOps and DevOps teams share the same observability tools and version control for datasets.

Phase 2: Platform Engineering (Months 4–7): Deploy an Internal Developer Platform (IDP) that abstracts the complexity of Kubernetes and GPU scheduling. Move toward GitOps-driven model management, where every model change is a verifiable commit.

Phase 3: Agentic Autonomy (Months 8–12): Implement Preemptive Cybersecurity platforms. By 2028, over 50% of enterprises will use specialized AI security platforms to protect their investments—starting your pilots now is essential for long-term safety.

Frequently Asked Questions

Does MLOps replace DevOps or supplement it?

MLOps is a specialized extension of DevOps. While DevOps provides the infrastructure for applications, MLOps manages the unique lifecycle of the models inside those applications. In 2026, they are managed through a unified Platform Engineering team to avoid tool sprawl.

What is the most significant cost risk in 2026 cloud strategies?

The primary risk is Shadow AI Inference, where developers use unsanctioned third-party APIs that bypass FinOps controls. This can lead to unbudgeted "Token Sprawl" that rivals traditional compute overspending.

How do we handle AI accuracy in automated pipelines?

Accuracy is the "hidden barrier" to value in 2026. Teams must build an AI Accuracy Survival Kit that includes automated hallucination testing and human-in-the-loop (HITL) triggers for critical enterprise actions.

Why is everyone talking about AgentOps?

AgentOps manages autonomous systems that make decisions on behalf of users. Unlike a chatbot that just talks, an agent acts (e.g., booking a flight or updating a database). This requires more sophisticated logging and security than standard MLOps provides.