The Grafana LGTM stack has emerged as the industry standard for open-source observability, offering a unified workflow for logs, metrics, and traces that can reduce telemetry costs by up to 80% compared to legacy solutions. This reduction is driven by "Adaptive Telemetry" and the LGTM (Loki, Grafana, Tempo, Mimir) architecture, which prioritizes efficient indexing of metadata over massive raw data ingestion. In 2026, the stack has evolved from a collection of loosely coupled tools into an AI-integrated ecosystem where correlations between different telemetry signals happen automatically.

What is the Grafana LGTM Stack?

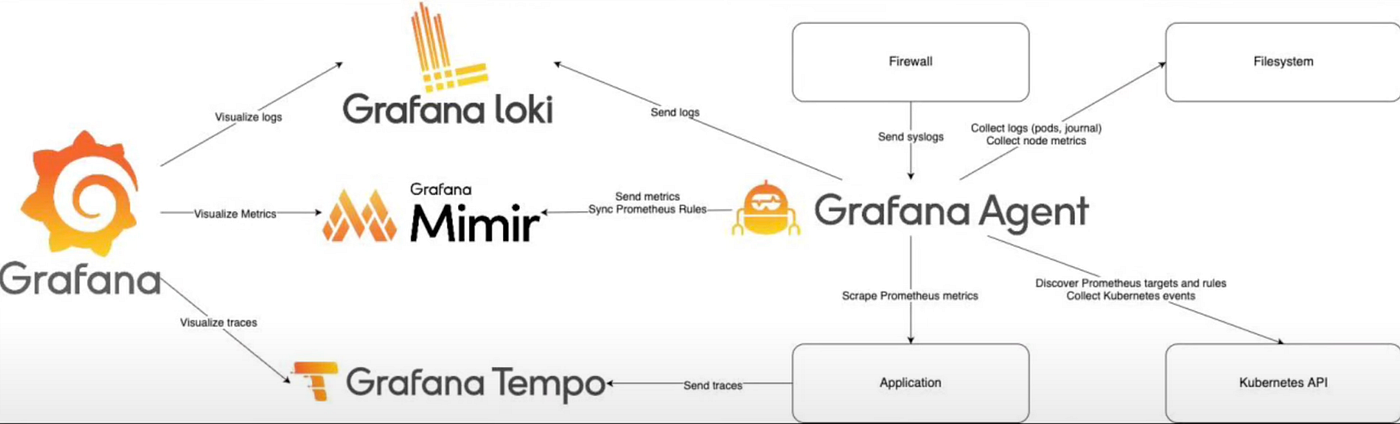

The LGTM stack is a "composable" observability platform that integrates four core open-source projects to cover the three pillars of observability: metrics, logs, and traces. Unlike monolithic vendor platforms, the LGTM stack allows you to swap components or connect to any data source, adhering to the principle of "no vendor lock-in."

The stack is composed of:

Loki: A horizontally scalable, highly available, multi-tenant log aggregation system inspired by Prometheus.

Grafana: The visualization layer that provides a single pane of glass for all data.

Tempo: A high-volume, distributed tracing backend that only requires object storage to operate.

Mimir: The world’s most scalable Prometheus-compatible metrics database, capable of handling billions of series.

In 2025 and 2026 Gartner Magic Quadrant reports, Grafana Labs was recognized as a Leader specifically for this "Completeness of Vision" in building a truly open, composable stack.

Why Choose LGTM Over the ELK Stack?

The primary advantage of the LGTM stack over the traditional ELK (Elasticsearch, Logstash, Kibana) stack is cost-efficiency and operational simplicity. While ELK is powerful for full-text search, it requires massive amounts of RAM and high-performance storage because it indexes every word of every log.

| Feature | Grafana LGTM Stack | ELK Stack |
|---|---|---|
| Indexing Strategy | Indexes metadata (labels) only; results are fetched via grep-like logic. | Full-text indexing of all content, creating high storage overhead. |
| Storage Requirements | Uses low-cost object storage (S3, GCS) for long-term retention. | Requires high-IOPS block storage for the Elasticsearch index. |
| Scaling Mechanism | Horizontally scalable per microservice (Ingester, Querier, etc.). | Shard-based scaling which can lead to "hot spots" in the cluster. |
| Native Signals | Native support for Metrics (Mimir), Logs (Loki), Traces (Tempo). | Strong in Logs; Metrics and Traces are secondary integrations. |

Loki, in particular, was designed to be cost-effective by only indexing metadata. This allows teams to store logs for years at a fraction of the cost of Elasticsearch, which is vital in the 2026 regulatory environment where data retention mandates are increasing.
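To make the label-only indexing idea concrete, here is a minimal sketch in plain Python. It is a toy model, not Loki's implementation: the class `TinyLogStore`, its methods, and the sample log lines are all hypothetical. It shows the two-step pattern described above, where a small label index narrows the search to a few streams and the line content is only scanned at query time.

```python
from collections import defaultdict


class TinyLogStore:
    """Toy model of label-only indexing (hypothetical, not Loki's code)."""

    def __init__(self):
        # The "index": one entry per unique label set, pointing at raw lines.
        self.streams = defaultdict(list)

    def push(self, labels, line):
        # Only the labels are indexed; the line itself is stored as-is.
        self.streams[frozenset(labels.items())].append(line)

    def query(self, selector, contains):
        # Step 1: use the label index to pick candidate streams.
        wanted = set(selector.items())
        matches = []
        for stream_labels, lines in self.streams.items():
            if wanted <= stream_labels:
                # Step 2: brute-force "grep" over the selected streams only.
                matches.extend(l for l in lines if contains in l)
        return matches


store = TinyLogStore()
store.push({"app": "checkout", "namespace": "prod"}, 'level=error msg="payment timeout"')
store.push({"app": "checkout", "namespace": "prod"}, 'level=info msg="order placed"')
store.push({"app": "search", "namespace": "prod"}, 'level=error msg="index unavailable"')

errors = store.query({"app": "checkout"}, "level=error")
print(errors)
```

The index here holds two entries for three log lines, and it would still hold two entries for three million lines with the same labels. That is the property that keeps index size, and therefore storage cost, small.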

How Managed Open Source Changes the Game

Building and maintaining your own LGTM stack on-premise can be daunting. In 2026, many SRE teams are moving toward Managed Open Source Observability. This model provides the benefits of open standards without the "toil cycle" of managing storage backends like Prometheus or tuning JVM pressure in Elasticsearch.

Managed solutions like Grafana Cloud or ObserveNow take the operational burden off DevOps engineers. According to the Grafana 2026 State of Observability Report, 87% of surveyed organizations now prefer managed OSS over self-hosted because it allows them to focus on "Observability as a Service" for their developers rather than "Infrastructure Management."

OpenTelemetry: The Standard for LGTM Integration

The LGTM stack’s dominance in 2026 is largely due to its "OTel-first" philosophy, which treats OpenTelemetry as the primary ingestion protocol. Rather than relying on proprietary agents that create data silos, the LGTM components consume OTel data natively, allowing for a seamless flow from the application code to the visualization layer.

The advantages of an OTel-native LGTM stack include:

Unified Metadata: By using the OpenTelemetry semantic conventions, logs, metrics, and traces share the same resource attributes (e.g., `service.name`, `deployment.environment`). This enables Grafana to correlate an error log in Loki directly to a specific trace in Tempo without custom mapping.

Protocol Consolidation: Teams can use a single OTLP exporter to send all three telemetry types. This reduces the CPU overhead on production nodes that previously had to run multiple forwarders like Filebeat, Telegraf, and Jaeger agents.

Future-Proofing: Since OTel is vendor-neutral, organizations can switch between self-hosted LGTM and managed services like Grafana Cloud without changing a single line of application code.
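The correlation that shared metadata enables can be sketched without any SDK. The example below is an illustration under stated assumptions: `LogRecord`, `Span`, and `spans_for_log` are hypothetical names, and the records are hand-written stand-ins for what Loki and Tempo would store. Only the attribute keys `service.name` and `deployment.environment` come from the text above.

```python
from dataclasses import dataclass

# Resource attributes shared by every signal emitted by one service.
RESOURCE = {"service.name": "checkout", "deployment.environment": "prod"}


@dataclass
class LogRecord:
    resource: dict
    trace_id: str
    body: str


@dataclass
class Span:
    resource: dict
    trace_id: str
    name: str
    duration_ms: float


def spans_for_log(log, spans):
    """Return spans from the same service and trace as the log record."""
    return [
        s for s in spans
        if s.trace_id == log.trace_id
        and s.resource["service.name"] == log.resource["service.name"]
    ]


spans = [
    Span(RESOURCE, "abc123", "POST /pay", 840.0),
    Span(RESOURCE, "def456", "GET /cart", 12.0),
]
error_log = LogRecord(RESOURCE, "abc123", "payment gateway timeout")

related = spans_for_log(error_log, spans)
print([s.name for s in related])
```

Because both record types carry the same keys, the join needs no per-tool field mapping, which is the point of adopting common semantic conventions.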

Performance Tuning: Harnessing Loki's Metadata Power

To get the most out of Loki in a high-volume 2026 environment, SREs must master label management and stream selection. Unlike Elasticsearch, which struggles with "too many indexes," Loki’s performance bottleneck is usually "cardinality explosion"—the creation of too many unique label combinations.

Practical tuning strategies for 10TB+ daily ingestion:

Keep Cardinality Low: Avoid using high-entropy values like `user_id` or `ip_address` as Loki labels. Instead, keep those in the log line and filter for them during query time.

Use Static Labels: Labels should describe the infrastructure (e.g., `cluster`, `namespace`, `app`) rather than the request.

Leverage Recording Rules: For frequently accessed data, use Loki recording rules to pre-calculate metrics from logs. This effectively turns expensive "grep" operations into instant Prometheus-style metric lookups.
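A quick way to see why a label like `user_id` is dangerous is to count the streams it creates. The sketch below uses made-up sample data (three apps, two namespaces, one thousand distinct users) and a hypothetical helper, `count_streams`, which simply counts unique label combinations.

```python
def count_streams(label_sets):
    """Number of distinct streams = number of unique label combinations."""
    return len({frozenset(ls.items()) for ls in label_sets})


# Hypothetical sample: 1000 requests, each from a different user.
samples = [
    {"app": f"app-{i % 3}", "namespace": f"ns-{i % 2}", "user_id": str(i)}
    for i in range(1000)
]

with_user_id = count_streams(samples)
static_only = count_streams(
    [{k: v for k, v in s.items() if k != "user_id"} for s in samples]
)

print(with_user_id, static_only)  # prints "1000 6"
```

Dropping the one high-entropy label takes this sample from 1000 streams to 6. The user ID is still searchable; it just lives in the log line and is matched at query time.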

The Evolution of Unified Alerting

In 2026, the Grafana LGTM stack has replaced separate alerting engines with a Unified Alerting framework. This system allows you to create alerts that combine data from different backends in a single expression—for example, triggering an alert only if "Error Rate (Mimir) > 5%" AND "Database Latency (Tempo) > 200ms."
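The logic of such a combined rule is a plain logical AND over per-backend conditions. The following sketch models only that logic; it is not Grafana's alerting API, and `Condition` and `should_alert` are hypothetical names with hard-coded values standing in for query results.

```python
from dataclasses import dataclass


@dataclass
class Condition:
    name: str
    value: float      # latest value returned by the backend query
    threshold: float

    def firing(self):
        return self.value > self.threshold


def should_alert(conditions):
    """Fire only when every condition is breached (logical AND)."""
    # An empty rule never fires; all([]) alone would return True.
    return bool(conditions) and all(c.firing() for c in conditions)


error_rate = Condition("error_rate_percent", 7.2, 5.0)
db_latency = Condition("db_latency_ms", 150.0, 200.0)

print(should_alert([error_rate, db_latency]))  # prints "False"
```

Here the error rate is above its threshold but latency is not, so no notification is sent. A rule on error rate alone would have paged someone.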

This multidimensional alerting reduces "alert fatigue" by ensuring that notifications are only sent when multiple signals confirm a genuine service degradation. By utilizing Grafana’s native incident management (OnCall), these alerts are automatically routed to the correct team with a pre-populated "Incident Bridge" containing the relevant dashboard links.

According to industry benchmarks, teams using unified multidimensional alerting see a 40% reduction in Mean Time to Acknowledge (MTTA) compared to those using siloed alerting tools for logs and metrics separately. This efficiency is why the LGTM stack has become the de facto choice for enterprise-grade Reliability Engineering.

The Role of AI in Grafana 13

With the release of Grafana 13 in early 2026, AI has become a first-class citizen in the LGTM stack. Instead of manually writing PromQL or LogQL queries, users can now use natural language to "Ask Grafana" about system health.

Key AI features in the current stack include:

Adaptive Telemetry: Automatically identifies metrics that aren't being used in dashboards or alerts and suggests aggregation or removal to save costs.

Incident Contextualization: When an alert triggers, Grafana's AI correlates the logs in Loki with traces in Tempo and metrics in Mimir to provide a "Root Cause Insight" summary instantly.

Automatic Dashboarding: AI agents can now build complex dashboards by analyzing the labels and structures of incoming OpenTelemetry data.
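The core idea behind the unused-metric detection described above can be approximated with a set difference. This is a deliberately naive sketch, not how Adaptive Telemetry works internally: the function `unused_metrics`, the tokenizing regular expression, and the sample queries are all assumptions made for illustration.

```python
import re

# Rough pattern for identifiers in a PromQL-like query string (an assumption,
# not a real parser; it also picks up function and label names).
TOKEN = re.compile(r"[a-zA-Z_:][a-zA-Z0-9_:]*")


def unused_metrics(ingested, queries):
    """Return ingested metric names that no dashboard or alert query mentions."""
    referenced = set()
    for q in queries:
        referenced.update(TOKEN.findall(q))
    return sorted(set(ingested) - referenced)


ingested = ["http_requests_total", "go_gc_duration_seconds", "process_open_fds"]
queries = [
    'sum(rate(http_requests_total{app="checkout"}[5m]))',
    "process_open_fds > 1000",
]

candidates = unused_metrics(ingested, queries)
print(candidates)  # prints "['go_gc_duration_seconds']"
```

A metric that appears in this list is a candidate for aggregation or removal, not an automatic deletion; ad hoc queries and external consumers would not show up in dashboard or alert definitions.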

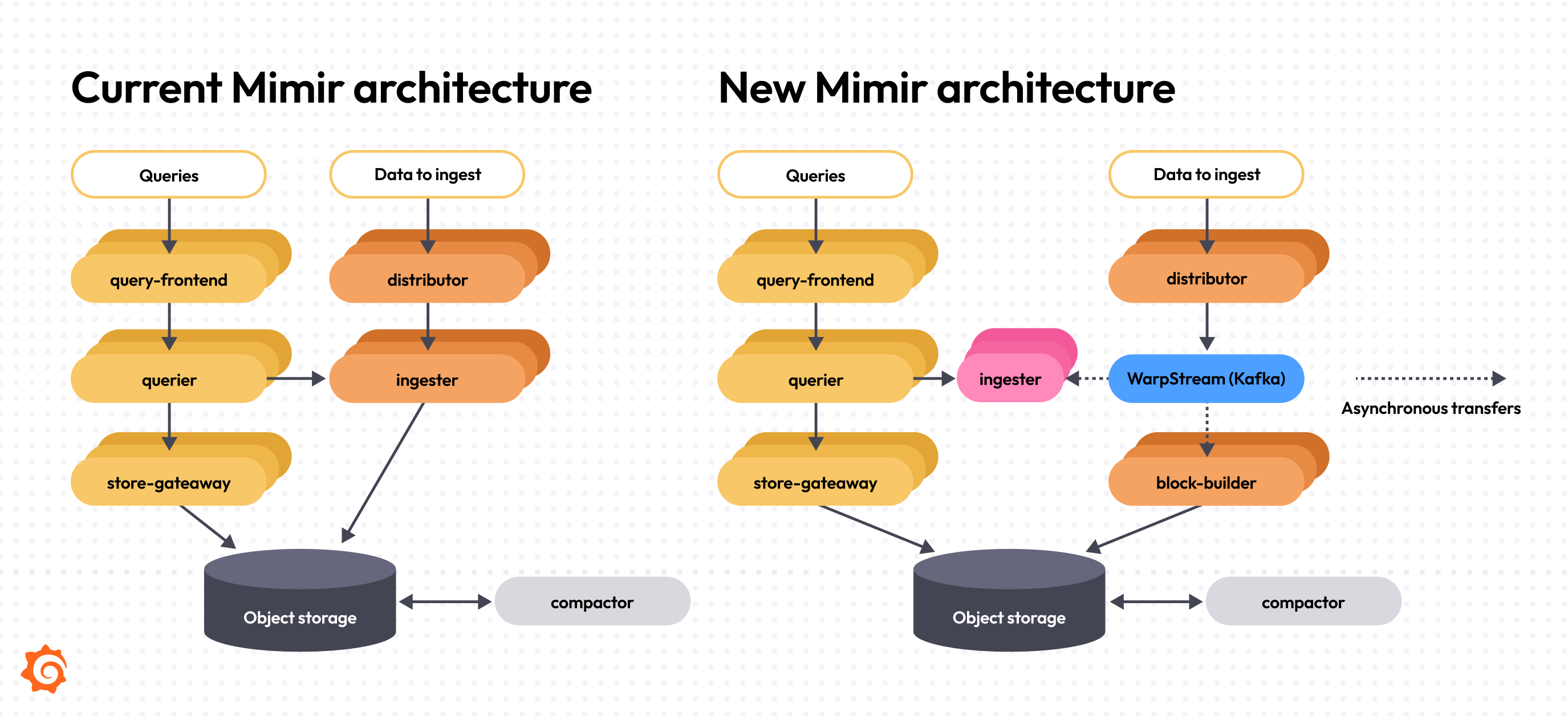

Best Practices for Scaling Mimir and Tempo

Scaling to handle petabytes of data requires a shift in how you think about storage. Both Mimir and Tempo are built to be OpenTelemetry-native, so they work best when signals carry rich metadata that allows for faceted querying. Keep in mind that in Mimir every unique label combination is a separate series, so label cardinality still has to be managed deliberately.

For Mimir: Use "sharding" for high-volume tenants and implement aggressive caching for the queriers. Mimir can easily scale to 1 billion active series in a single cluster by distributing the load across many small, stateless components.

For Tempo: Leverage "Search-on-Index." Instead of searching every trace, use Tempo’s ability to query traces based on attributes found in logs or metrics first. This "exemplar-based" workflow allows you to leap from a spike in a Grafana graph directly to the specific trace that caused it.
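The exemplar-based jump from a graph to a trace comes down to metric samples carrying an optional trace ID. The sketch below illustrates that lookup with hand-written data; `Sample` and `trace_for_spike` are hypothetical names, and a real setup would read exemplars from the metrics backend rather than from a list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Sample:
    timestamp: int                      # seconds since start of window
    latency_ms: float
    exemplar_trace_id: Optional[str]    # trace ID attached to the sample, if any


def trace_for_spike(samples):
    """Pick the exemplar trace ID on the highest-latency sample that has one."""
    with_exemplars = [s for s in samples if s.exemplar_trace_id is not None]
    if not with_exemplars:
        return None
    return max(with_exemplars, key=lambda s: s.latency_ms).exemplar_trace_id


samples = [
    Sample(0, 40.0, None),
    Sample(15, 45.0, "aaa111"),
    Sample(30, 910.0, "bbb222"),
    Sample(45, 50.0, None),
]

spike_trace = trace_for_spike(samples)
print(spike_trace)  # prints "bbb222"
```

The returned ID is what would be handed to the tracing backend for a direct lookup, which avoids searching across all traces in the time window.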

Frequently Asked Questions

Can I use the LGTM stack with OpenTelemetry?

Yes. The LGTM stack is OpenTelemetry-native. You can use the OpenTelemetry Collector to send metrics to Mimir, logs to Loki, and traces to Tempo without needing any proprietary agents.

Is the LGTM stack better for Kubernetes than ELK?

Generally, yes. The label-based indexing of Loki and Mimir mirrors the label-based organization of Kubernetes (namespaces, pods, services), making it more intuitive for K8s monitoring than the full-text search approach of ELK.

How do I reduce costs if my Loki ingestion is too high?

Use Grafana's "Adaptive Telemetry" suite or implement recording rules in Loki to aggregate high-volume logs into metrics. This allows you to keep the analytical value of the logs while discarding the raw data after a short retention period.
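What a log-to-metric recording rule computes can be shown in a few lines. This is a sketch of the aggregation only, assuming a hypothetical `errors_per_minute` helper and made-up log entries; in Loki the equivalent would be expressed as a LogQL rule (for example one built on `count_over_time`) rather than Python.

```python
from collections import Counter


def errors_per_minute(log_entries):
    """Aggregate (timestamp_seconds, line) pairs into error counts per minute."""
    counts = Counter()
    for ts, line in log_entries:
        if "level=error" in line:
            # Bucket each entry by the start of its minute.
            counts[ts // 60 * 60] += 1
    return dict(counts)


logs = [
    (0, "level=error msg=timeout"),
    (20, "level=info msg=ok"),
    (59, "level=error msg=timeout"),
    (61, "level=error msg=refused"),
]

metric = errors_per_minute(logs)
print(metric)  # prints "{0: 2, 60: 1}"
```

Four log lines collapse into two data points. Once the counts are stored as a metric, the raw lines can expire on a short retention schedule while the trend remains queryable.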

Conclusion

The Grafana LGTM stack represents the pinnacle of unified observability in 2026. By moving away from expensive, proprietary data silos and toward a composable, open-source architecture, organizations can achieve deeper visibility with significantly less spend. Whether you choose to host it yourself or use a managed cloud version, the ability to correlate metrics, logs, and traces in a single UI is no longer a luxury—it is a requirement for modern infrastructure.

For engineers who want to "own their own observability strategy," there is currently no more powerful or flexible toolset than the LGTM stack.