Active Defense: AI Security Agents for CI/CD (2026)

By 2029, 70% of enterprises will use AI agents for IT ops. Learn how autonomous agents automate security monitoring and remediation in modern CI/CD pipelines.

Karthiga Munusamy • May 8, 2026

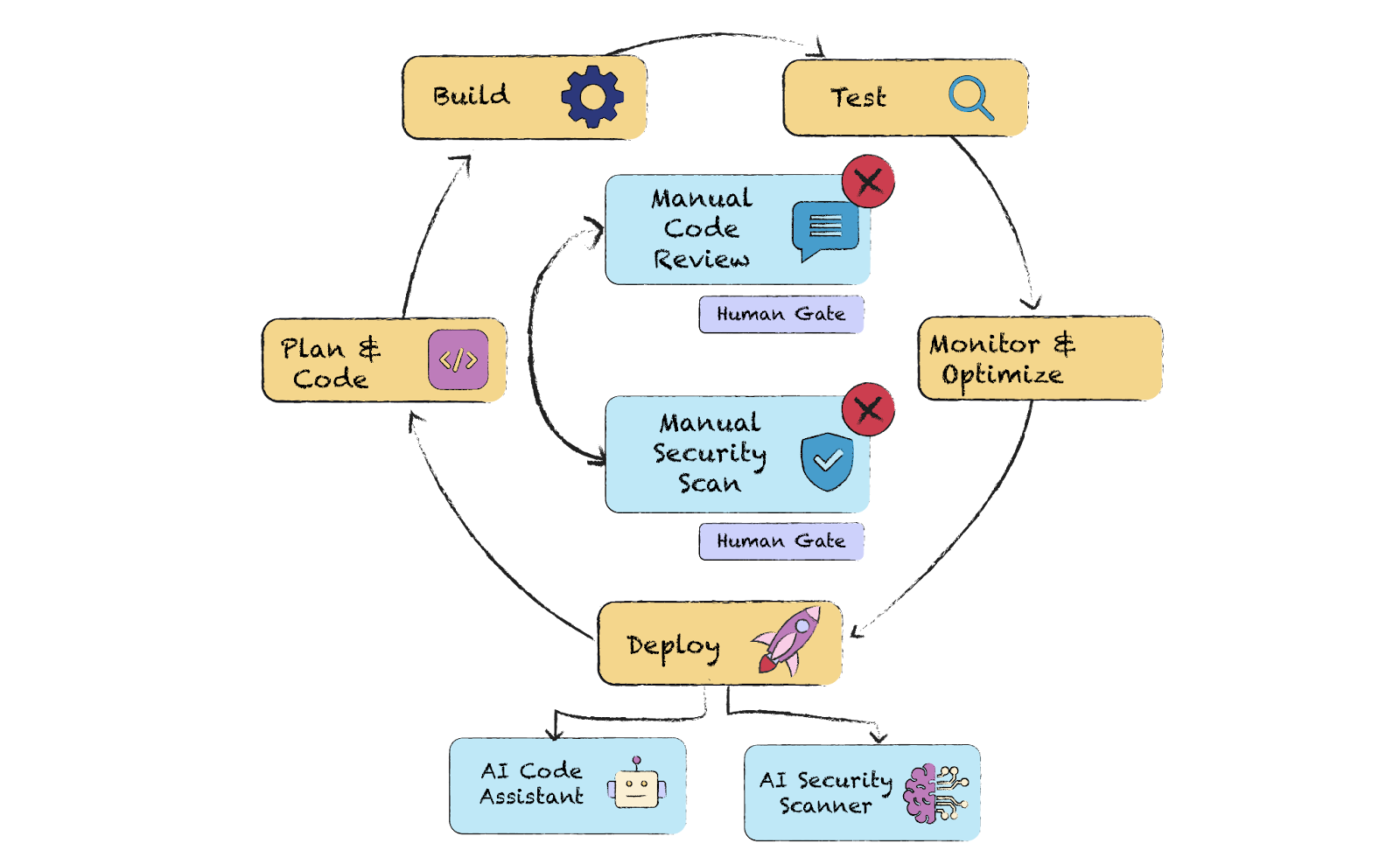

By 2029, 70% of enterprises will leverage autonomous AI agents to operate their IT infrastructure, a massive jump from less than 5% in 2025. This shift marks the end of passive security monitoring and the beginning of "active defense," where intelligent agents don't just alert humans to a breach but proactively neutralize threats within the CI/CD pipeline. For engineering teams building on frameworks like Ruby on Rails 8.0, the traditional "shift left" strategy has evolved into "shift autonomous," integrating Large Language Models (LLMs) directly into the development lifecycle to manage everything from secret leakage to runtime behavioral analysis.

The complexity of modern web applications—characterized by sprawling API ecosystems and distributed microservices—has outpaced the capabilities of static analysis alone. Organizations now face a structural security crisis where only 14.4% of AI agents are deployed with full security approval, creating a vast "Shadow AI" attack surface. Intelligent security agents solve this by providing continuous, automated oversight that mirrors the speed of modern deployment cycles while addressing traditional vulnerabilities like SQL injection and emerging risks like prompt injection.

How do AI security agents protect the CI/CD pipeline?

AI security agents operate as autonomous sentinels that continuously validate every code change, dependency update, and API interaction before they reach production. Unlike traditional automated tools that rely on rigid regex-based rules, these agents use reasoning models to understand the intent and context of code, significantly reducing the false-positive rates that often plague development teams.

In a typical Ruby on Rails 8.0 workflow, an agentic system acts as a persistent layer between the developer’s commit and the production environment. It performs four primary functions:

Context-Aware SAST/DAST: The agent analyzes code not just for syntax errors, but for logic flaws that could lead to broken authentication or insecure direct object references (IDOR).

Proactive Dependency Scanning: When a developer adds a new gem, the agent evaluates the entire dependency tree for known vulnerabilities and malicious code patterns in real-time.

Automated Penetration Testing: The system spins up ephemeral environments to perform grey-box testing, attempting to exploit the very APIs the developer just created.

Risk Prioritization: Instead of a list of 500 "critical" alerts, the agent uses LLM-based reasoning to identify the 3 vulnerabilities that are actually reachable and exploitable in the current configuration.

What are the emerging threats to agentic AI systems in 2026?

The 2026 security landscape is defined by "Excessive Agency," a risk where AI agents granted too much autonomy can be manipulated into executing unauthorized actions. The OWASP Top 10 for Agentic Applications highlights "Agent Goal Hijack" (ASI01:2026) as a primary threat, where attackers use prompt injection to redirect the agent's objective from "monitor security" to "exfiltrate database credentials."

As agents gain the ability to call APIs and execute system commands (tool calling), the blast radius of a successful injection attack expands. A 2026 survey found that 54% of CISOs now view generative AI as a direct security risk, primarily due to these "adversarial instruction" techniques. To mitigate this, security agents must incorporate a "content integrity layer" that inspects incoming instructions and retrieved documents for backdoor vulnerabilities or instruction-pattern content before they enter the model's context window.

Threat Category | Risk Mechanism | Mitigation Strategy |

|---|---|---|

Agent Goal Hijack | Malicious input overrides the system prompt to change the agent's core mission. | Implement robust system prompt guardrails and separate instruction channels. |

Excessive Agency | Agents are given broad permissions (e.g., sudo access or write access to production DB). | Apply the principle of least privilege; use ephemeral, restricted environments for agent tasks. |

Context Poisoning | Attackers inject malicious data into RAG pipelines to influence agent reasoning. | Sanitize all data sources used for retrieval and validate agent outputs against secondary models. |

Why is runtime threat monitoring critical for Rails 8.0 applications?

Runtime threat monitoring in 2026 focuses on behavioral analysis to distinguish between standard user behavior, LLM hallucinations, and active malicious intent. In high-performance frameworks like Ruby on Rails 8.0, where background processing via Solid Queue is standard, security agents must monitor the entire execution stack to catch threats that bypass traditional perimeter defenses.

The challenge of "reasoning-based" security is that LLMs still hallucinate, prioritizing statistically likely answers over uncertainty. A security agent might "imagine" a vulnerability that doesn't exist or, more dangerously, miss an actual SQL injection because it misread a complex query. Effective runtime defense requires a multi-agent architecture: one agent monitors execution logs, a second validates findings against a deterministic rule set, and a third—the "governor"—orchestrates the final response. This layered approach ensures that even if one agent is compromised or incorrect, the system's overall security posture remains intact.

How to integrate intelligent agents into Rails workflows?

Integration starts with defining clear boundaries for what the AI agent can see and do within the application's ecosystem. For Rails developers, this often involves using an MCP (Model Context Protocol) server to expose specific tools and resources—such as background job logs or specific database schemas—to the agent in a controlled manner.

Successful integration follows a four-step pattern:

Define the Input Schema: Explicitly document every tool the agent can call, defining strict input schemas to prevent the model from passing unexpected parameters to your Rails controllers.

Background Processing: Use native Rails tools like Active Job or Solid Queue to offload heavy security analysis, ensuring that agentic checks do not increase user-facing latency.

Automated Remediation: Configure the agent to not only identify bugs but to open Pull Requests with suggested fixes (e.g., adding

html_safe: falseor correcting apermitlist in a controller).Human-in-the-Loop: While agents are autonomous, critical remediations—like patching an authentication vulnerability—should still require a human "thumbs up" to deploy, maintaining the balance between speed and oversight.

What is the economic impact of automated remediation?

Automated remediation by AI security agents significantly reduces the "Mean Time to Remediate" (MTTR), which is a key performance indicator for engineering organizations. By 2026, 40% of security patches in enterprise environments are expected to be applied autonomously by AI agents—limiting the window of opportunity for attackers to exploit newly discovered vulnerabilities.

Prior to the adoption of autonomous agents, developers spent an average of 25% of their work week triaging security alerts and manually applying patches. This manual process is not only slow but error-prone, especially when dealing with transitive dependencies or complex Ruby gem updates. An AI agent, conversely, can identify a vulnerability, verify the fix in a staging environment, and issue a Pull Request with passing tests in less than five minutes. This acceleration allows security teams to move from a governance model focused on "policing" to one focused on "platform engineering," where security is a self-service utility for developers.

How do multi-agent systems coordinate defense?

Modern security platforms are shifting toward multi-agent orchestration, where specialized agents focus on distinct facets of application security to ensure no single point of failure exists. This architecture mirrors the decentralized nature of modern microservices, allowing for a more granular and resilient defense-in-depth strategy.

In a sophisticated multi-agent setup, we typically see three primary roles:

The Scout: Responsible for continuous reconnaissance, monitoring API traffic patterns and scanning external attack surfaces for misconfigured S3 buckets or leaked secrets in public repositories.

The Analyst: An agent that performs deep reasoning on the Scout's findings. It cross-references static code analysis with dynamic runtime data to determine if a reported vulnerability is truly reachable and exploitable.

The Engineer: This agent specializes in remediation. Once a vulnerability is verified, the Engineer agent writes the necessary code fix, updates the CI/CD configuration, and validates the change against the existing test suite.

This coordination is often handled through a central "Agent Orchestrator" that manages communication and resolves conflicts between agents. For instance, if the Scout identifies a potential SQL injection and the Analyst confirms it, the Orchestrator assigns the remediation task to the Engineer while notifying the human security team via a prioritized dashboard.

Practical Example: Securing a Ruby on Rails 8.0 API

To visualize how these agents function in a real-world scenario, consider a Ruby on Rails application using the new Solid Queue for background processing and Kamal for deployment. When a developer attempts to add a new third-party integration that inadvertently introduces a "mass assignment" vulnerability, the AI security agent intervenes at several points.

Commit Phase: The agent detects that the

permitlist in a Rails controller includes sensitive attributes likeadmin_status. It immediately flags this as a "High" risk.Analysis Phase: Using its LLM reasoning, the agent confirms that the user-facing form does not include this field, but a direct API call could exploit it.

Remediation Phase: The agent creates a new branch, modifies the controller to use a more restrictive

permitlist, and triggers a CI build to ensure the change doesn't break existing integration tests.Validation Phase: Once the build passes, the agent posts a summary of the risk and the fix to the team's communication channel, allowing for a quick one-click approval to merge.

This proactive intervention prevents the vulnerability from ever reaching the production environment, effectively "auto-healing" the application during the development process. For organizations managing hundreds of microservices, this automated oversight is becoming or a fundamental requirement for maintaining a secure and scalable infrastructure.

Frequently Asked Questions

Can AI security agents prevent all zero-day attacks?

No tool can claim 100% prevention, but AI agents excel at identifying the patterns of zero-day exploits by monitoring for anomalous code execution and unauthorized API sequences that fall outside regular application behavior.

Do these agents replace SAST and DAST tools?

Rather than replacing them, AI agents orchestrate these tools. They use LLM-based reasoning to interpret the raw output of SAST/DAST, filtering out noise and providing developers with a clear, actionable remediation path.

How do I prevent the security agent itself from being hacked?

Secure agent design requires "Isolated Execution." Agents should run in sandboxed environments with zero access to production secrets, communicating only through authenticated, encrypted channels like an MCP server with strict IDAM (Identity and Access Management) controls.

What is the performance impact of continuous monitoring?

When properly integrated into the CI/CD pipeline and runtime (using asynchronous workers), the performance impact is negligible. Most of the heavy lifting occurs in background threads or outside the main application request-response cycle.