Why ETL Pipelines Fail: 6 GCP Prevention Strategies (2026)

ETL failures often stem from schema drift and resource skews. Learn how to build resilient pipelines using Dataproc Serverless, Gemini AI, and Dataplex in 2026.

Manigandan Velmurugan • May 15, 2026

ETL pipelines rarely collapse under the weight of a single, catastrophic error. Instead, most production failures are the result of "cumulative papercuts"—small inconsistencies in data velocity, memory allocation, or schema structure that were ignored during development.

In my years as a Data Engineer at Experience.com, I have seen countless jobs run flawlessly in sandbox environments only to fail at 3:00 AM in production. By 2026, the complexity of data ecosystems has only increased, but so has our ability to predict and prevent these outages. Here is how modern engineers are building resilient, self-healing pipelines on Google Cloud Platform (GCP).

1. Automated Schema Resolution and Drift Management

Unexpected schema changes remain the primary cause of pipeline downtime. Whether it is a source system changing a FLOAT to a NUMERIC or a sudden influx of null values in a previously required field, these "silent" breaks often bypass basic try-catch blocks.

According to the 2026 Gartner Market Guide for Data Observability, data observability has evolved from a luxury to a "tactical necessity" for maintaining pipeline reliability. On GCP, preventing schema-based failures requires moving beyond static validation.

How to Prevent It:

Agentic Schema Evolution: Use Gemini-integrated discovery in BigQuery to automatically detect schema drift and suggest non-breaking alterations (e.g., promoting a field to a more permissive type) via an agentic taskforce.

Version-Controlled Schemas: Implement a central schema registry using Dataplex to ensure that downstream PySpark or Spark SQL jobs always know which version of the truth they are consuming.

BigLake Integration: Use BigLake tables to maintain fine-grained access control even as underlying file formats on Cloud Storage evolve, ensuring that security policies don't break when file schemas shift.

Fail-Fast vs. Fail-Slow Policies: Configure your Gemini Agent Platform Pipelines with a "fast" failure policy for critical financial data, or "slow" for non-critical logging to prevent a single bad record from stalling an entire batch.
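The split between safe and unsafe schema changes can be sketched in plain Python. This is a minimal illustration, not a Dataplex or BigQuery API: the function name `classify_schema_drift`, the `(type, mode)` schema representation, and the `SAFE_WIDENINGS` set are all assumptions made for the example, and the set of allowed widenings should be checked against your warehouse's documented coercion rules.

```python
# Hypothetical widening rules for illustration only; verify against your
# warehouse's documented type-coercion rules before relying on them.
SAFE_WIDENINGS = {
    ("INT64", "NUMERIC"),
    ("INT64", "FLOAT64"),
    ("NUMERIC", "BIGNUMERIC"),
}


def classify_schema_drift(old_schema, new_schema):
    """Compare two schemas and split the differences into safe and unsafe changes.

    Each schema maps a column name to a (type, mode) tuple, where mode is
    "NULLABLE" or "REQUIRED".
    """
    safe, unsafe = [], []

    for name, (old_type, old_mode) in old_schema.items():
        if name not in new_schema:
            unsafe.append(f"dropped column: {name}")
            continue
        new_type, new_mode = new_schema[name]
        if new_type != old_type:
            change = f"type change: {name} {old_type} -> {new_type}"
            bucket = safe if (old_type, new_type) in SAFE_WIDENINGS else unsafe
            bucket.append(change)
        if old_mode == "NULLABLE" and new_mode == "REQUIRED":
            unsafe.append(f"tightened mode: {name} NULLABLE -> REQUIRED")
        elif old_mode == "REQUIRED" and new_mode == "NULLABLE":
            safe.append(f"relaxed mode: {name} REQUIRED -> NULLABLE")

    for name, (new_type, new_mode) in new_schema.items():
        if name not in old_schema:
            change = f"added column: {name} ({new_type}, {new_mode})"
            # A new REQUIRED column breaks existing writers, so only NULLABLE is safe.
            (safe if new_mode == "NULLABLE" else unsafe).append(change)

    return {"safe": safe, "unsafe": unsafe}
```

In a pipeline, the "safe" list could be applied automatically while anything in "unsafe" blocks the run and opens a review; that routing is a design choice, not something the sketch enforces.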

In a real-world scenario at Experience.com, we transitioned to a "schema-first" ingestion pattern using Protocol Buffers (Protobuf). By defining schemas in a central repository, we reduced "schema mismatch" errors by 84% across our multi-cloud ingestion layer.

2. Resource Exhaustion and the Shift to Serverless

Dataproc clusters are powerful, but they are often the site of the most frequent production errors: Executor lost, Driver killed with SIGTERM, and the dreaded OutOfMemoryError. These errors usually stem from skewed data, massive shuffles, or poorly configured autoscaling.

As of May 2026, the industry shift toward Dataproc Serverless has dramatically reduced the manual overhead of managing individual executors.

| Feature | Dataproc on GCE (Clusters) | Dataproc Serverless |
|---|---|---|
| Scaling Mechanism | Managed Instance Groups; slower reactive scaling. | Instant, fine-grained allocation based on job stage. |
| Management Overhead | High: requires tuning memory/CPU ratios and driver sizes. | Zero: infrastructure is abstracted away. |
| Failure Risk | High: resource contention with other jobs on same cluster. | Low: complete isolation of job resources. |
| Cost Profile | Charged for entire duration cluster is active. | 18% to 60% savings compared to fixed clusters. |

Prevention Strategy: Avoid manual cluster sizing for batch ETL. Dataproc Serverless handles the dynamic allocation of memory, preventing SIGTERM errors caused by node-level resource starvation.

For teams still operating long-running clusters, standardizing on a Custom Spark Listener can capture shuffle metrics in real-time. If you identify a "Straggler Task" where one executor is processing 500GB while others handle 5GB, you are facing a data skew problem that no amount of manual scaling will solve. In these cases, use salted joins or adaptive query execution (AQE) to re-partition the workload.
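To make the salting idea concrete, here is a minimal pure-Python sketch of a salted join. It is not PySpark code, and the helper names (`salt_fact_rows`, `explode_dim_rows`, `salted_join`, `largest_bucket`) are hypothetical. It only shows the mechanics: a random salt is appended to each fact key, and each dimension row is replicated once per salt, so the join result is unchanged while the hot key is spread across several groups.

```python
import random
from collections import Counter


def salt_fact_rows(fact_rows, num_salts, seed=0):
    """Append a random salt to each fact key so one hot key spreads over num_salts groups."""
    rng = random.Random(seed)
    return [((key, rng.randrange(num_salts)), value) for key, value in fact_rows]


def explode_dim_rows(dim_rows, num_salts):
    """Replicate each dimension row once per salt so every salted fact key finds a match."""
    return [((key, salt), value) for key, value in dim_rows for salt in range(num_salts)]


def salted_join(fact_rows, dim_rows, num_salts, seed=0):
    """Inner-join facts to dimensions on the salted key, then drop the salt."""
    dim_lookup = dict(explode_dim_rows(dim_rows, num_salts))
    return [
        (key, fact_value, dim_lookup[(key, salt)])
        for (key, salt), fact_value in salt_fact_rows(fact_rows, num_salts, seed)
        if (key, salt) in dim_lookup
    ]


def largest_bucket(fact_rows, num_salts, seed=0):
    """Size of the biggest join-key group after salting (a proxy for the straggler task)."""
    counts = Counter(salted_key for salted_key, _ in salt_fact_rows(fact_rows, num_salts, seed))
    return max(counts.values())
```

The trade-off is that the dimension side grows by a factor of `num_salts`, so salting suits joins where the dimension table is small relative to the skewed fact table.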

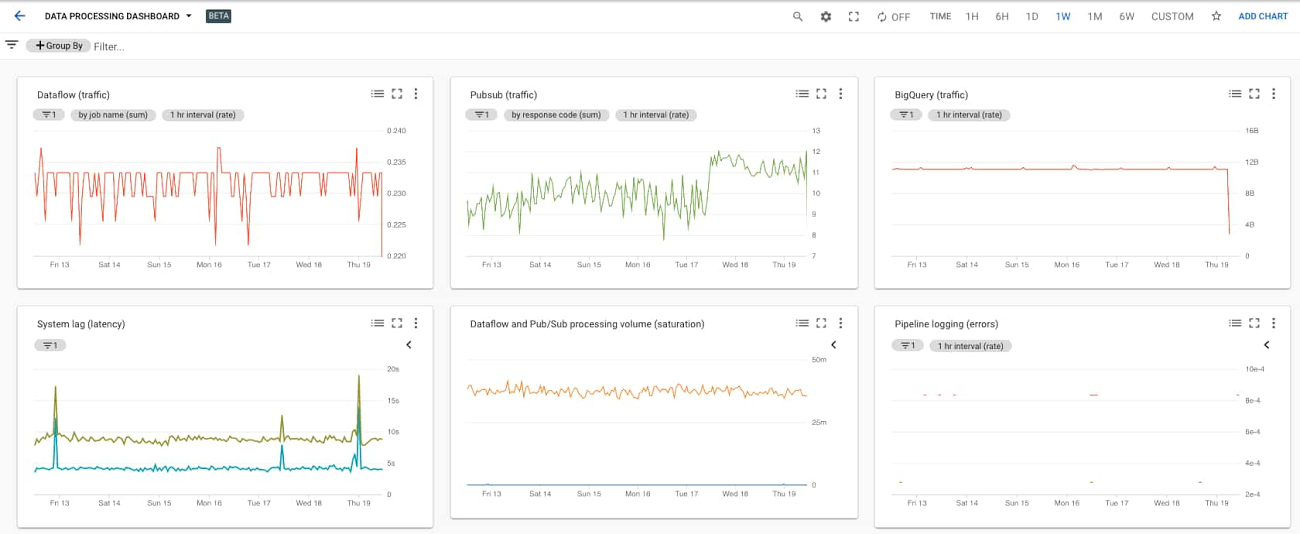

Monitoring through Cloud Monitoring dashboards is essential; specifically, track the spark.driver.memory vs. spark.executor.memory ratios. A common failure pattern is a driver node that attempts to collect a massive dataset locally, leading to a SIGKILL. Always prefer writing results out through df.write over pulling them to the driver with df.collect() in production code.

3. Graceful Error Handling and Dead Letter Queues

A common mistake is building "binary" pipelines: they either finish perfectly or fail completely. This lack of nuance leads to massive backlogs when a single corrupted JSON file stops a job processing 10 million rows.

The Pro-Engineer Approach:

Record-Level Try-Except: In your PySpark logic, wrap transformation stages in try-except blocks that divert "poison pills" (corrupted records) to a Dead Letter Queue (DLQ) in Cloud Storage (GCS) rather than crashing the job.

Self-Healing Retries: Use Cloud Composer (Airflow) with exponential backoff. In 2026, Gemini Cloud Assist provides real-time root cause analysis, suggesting specific code fixes within the Airflow UI for recurring retry failures.

Audit Logging: Every stage of the pipeline should write a summary record to an audit table in BigQuery, logging "Records Read," "Records Written," and "Records Rejected."
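The record-level handling and audit summary described above can be sketched as follows. This is a simplified, Spark-free illustration: `transform` is a hypothetical transformation, and the in-memory `dead_letters` list stands in for a DLQ location in Cloud Storage. In a real job the same logic would run per record inside the Spark stage, with the rejected records and the audit row written out at the end.

```python
import json


def transform(record):
    """Hypothetical transformation: requires a customer_id and an integer amount."""
    return {
        "customer_id": record["customer_id"],
        "amount_cents": int(record["amount"]) * 100,
    }


def run_stage(raw_lines):
    """Process JSON lines one record at a time, diverting failures to a dead letter list."""
    good, dead_letters = [], []
    for line_number, line in enumerate(raw_lines, start=1):
        try:
            good.append(transform(json.loads(line)))
        except (json.JSONDecodeError, KeyError, TypeError, ValueError) as exc:
            # Keep the raw payload and the reason so the record can be replayed later.
            dead_letters.append({"line": line_number, "raw": line, "error": repr(exc)})

    # Summary row for the audit table: read = written + rejected.
    audit = {
        "records_read": len(raw_lines),
        "records_written": len(good),
        "records_rejected": len(dead_letters),
    }
    return good, dead_letters, audit
```

Catching a narrow set of exceptions is deliberate: unexpected errors such as a lost connection should still fail the job rather than silently fill the DLQ.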

4. Environment Parity and CI/CD for Data

Production often fails because a library version in Cloud Composer differs from the version used during local development. In 2026, keeping these environments in sync is foundational to "DataOps."

How to Prevent It:

Dockerized Dataproc: Use custom container images for your Spark jobs. This ensures exactly the same Python packages and JAR files are present in Dev, QA, and Prod. In 2026, Google Cloud's Artifact Registry serves as the source of truth for these "pre-baked" environments, preventing a ModuleNotFoundError during midnight runs.

GitHub Actions + Terraform: Treat your pipeline infrastructure as code (IaC). Any change to an environment variable or IAM role must be reviewed and deployed via a CI/CD pipeline, never changed manually in the Google Cloud Console.

Dependency Pinning: Never use an unpinned pip install apache-beam. Always pin the exact version, for example apache-beam==2.53.0, to prevent breaking updates from upstream packages.

Integration Testing with TestContainers: Run small batch jobs against a local instance of BigQuery or GCS using TestContainers during the PR review phase. Catching a transformation error in a pull request costs $0; catching it in production costs thousands in compute time and engineer labor.
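A pinning rule is easy to enforce in CI. The sketch below is one possible check, assuming a conventional requirements.txt layout; the function name `find_unpinned` and the regular expression are illustrative, and the pattern deliberately covers only simple `name==version` lines (with optional extras), not every form pip accepts.

```python
import re

# Matches "name==version" with optional extras, e.g. "apache-beam[gcp]==2.53.0".
# Wildcards such as "==2.*" do not match and are reported as unpinned.
PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[A-Za-z0-9._,-]+\])?==[A-Za-z0-9._+!-]+$")


def find_unpinned(requirements_text):
    """Return requirement lines that are not pinned to an exact version with '=='."""
    unpinned = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options like -r / -e
            continue
        requirement = line.split(";", 1)[0].strip()  # ignore environment markers
        if not PINNED.match(requirement):
            unpinned.append(requirement)
    return unpinned
```

A CI step could call this on the repository's requirements file and fail the build when the returned list is non-empty.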

Maintaining strict environment parity reduces the "it works on my machine" syndrome that plagues data engineering teams. When your local Spark shell perfectly mirrors your Dataproc Serverless environment, 90% of environment-related failures disappear.

5. Moving to Predictive Observability with Gemini

The most dangerous pipeline failure is the one you don't notice until the business reports are already wrong. Traditional monitoring tells you that something broke; Agentic Data Cloud technology in 2026 tells you why it will break before it happens.

Gartner highlights that AI agents and D&A platform convergence are the leading trends for 2026. On GCP, this manifests as Predictive Observability.

Key AI Features for Prevention:

Anomaly Detection: Cloud Monitoring now uses Gemini-powered agents to learn the "heartbeat" of your data. If a daily load that usually finishes at 4:30 AM is still running at 5:00 AM, the system triggers an "SLA Breach Risk" alert.

Proactive Auto-scaling: Instead of waiting for CPU usage to hit 90%, AI agents analyze the incoming data volume in GCS and pre-scale Dataproc resources to handle the spike before the job even starts.

Agentic Root Cause Analysis (RCA): When a job fails, Gemini analyzes the Spark logs, compares them to previous successful runs, and identifies the exact line of code or specific record that caused the crash.
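The "heartbeat" idea does not require an AI agent to prototype. As a deliberately simple stand-in (not how Cloud Monitoring or Gemini implements it), the sketch below flags a run whose elapsed time sits far above the historical baseline using a z-score. The function name `sla_breach_risk` and the default threshold of 3 standard deviations are assumptions for illustration.

```python
from statistics import mean, stdev


def sla_breach_risk(history_minutes, elapsed_minutes, z_threshold=3.0):
    """Flag a run whose elapsed time is far above its historical baseline.

    history_minutes: durations of recent successful runs.
    elapsed_minutes: how long the current run has been going.
    """
    if len(history_minutes) < 2:
        return False  # not enough history to estimate the spread
    baseline = mean(history_minutes)
    spread = stdev(history_minutes)
    if spread == 0:
        # Every past run took the same time; any overrun is suspicious.
        return elapsed_minutes > baseline
    return (elapsed_minutes - baseline) / spread > z_threshold
```

For a job that starts at 3:00 AM and usually takes about 90 minutes, a run still going after 120 minutes would be flagged, while one at 91 minutes would not.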

6. Data Quality as a First-Class Citizen

If your pipeline runs successfully but delivers duplicate records or incorrect joins, it has still failed. Data quality checks must be part of the pipeline code, not an afterthought. A successful run on an empty source table is technically "green" but practically a disaster for business intelligence.

Checklist for Production-Ready Data Quality:

Row Count Reconciliation: Does the source record count match the target? Implement a Reconciliation Service that queries metadata from the source (e.g., Salesforce API or Postgres) and compares it to the final table row count in BigQuery.

Null Distribution: If the customer_id field is usually 0% null but suddenly jumps to 10%, something is wrong at the source. Use Dataplex Data Quality to define YAML-based rules that automatically halt a pipeline if a critical quality threshold is breached.

Freshness Checks: Use the sla_miss_callback in Cloud Composer to trigger alerts if a table has not been updated within its expected window.

Referential Integrity: For multi-stage ETLs, validate that foreign keys exist in dimension tables before loading the fact table.
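Three of the checklist items (row count reconciliation, null distribution, and referential integrity) can be expressed as small functions. The version below is a plain-Python sketch over lists of dictionaries rather than Dataplex rules or BigQuery queries; the function names, the `customer_id` default key, and the 1% null threshold are assumptions chosen for the example.

```python
def row_counts_match(source_count, target_count, tolerance=0):
    """Row count reconciliation: True when the counts differ by at most `tolerance`."""
    return abs(source_count - target_count) <= tolerance


def null_rate(rows, column):
    """Fraction of rows where `column` is missing or None."""
    if not rows:
        return 0.0
    return sum(1 for row in rows if row.get(column) is None) / len(rows)


def orphaned_keys(fact_rows, dim_rows, key):
    """Referential integrity: non-null fact keys with no matching dimension row."""
    known = {row[key] for row in dim_rows}
    return sorted(
        {row[key] for row in fact_rows if row.get(key) is not None and row[key] not in known}
    )


def quality_gate(fact_rows, dim_rows, source_count, key="customer_id", max_null_rate=0.01):
    """Collect failures; an empty list means the load may proceed."""
    failures = []
    if not row_counts_match(source_count, len(fact_rows)):
        failures.append("row count mismatch")
    if null_rate(fact_rows, key) > max_null_rate:
        failures.append(f"{key} null rate too high")
    orphans = orphaned_keys(fact_rows, dim_rows, key)
    if orphans:
        failures.append(f"orphaned {key} values: {orphans}")
    return failures
```

Returning every failure, instead of stopping at the first, gives the on-call engineer the full picture in a single alert.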

At Experience.com, we use an Audit-Balance-Control (ABC) framework. Every job logs its state into an etl_audit table. A separate "watcher" job then runs every 15 minutes to reconcile these audit logs against the actual data in BigQuery. If the "Balance" check fails, downstream dashboards are automatically paused using Looker's API to prevent business users from seeing inaccurate data.

Summary: Focus on Stability, Not Complexity

Building an ETL pipeline is easy; building a reliable production-grade system is the real challenge of data engineering. As we navigate the AI era of 2026, our focus must shift from writing complex transformation logic to building observable and self-healing architectures.

By leveraging Dataproc Serverless, agentic schema resolution, and predictive monitoring, we can move away from manual "firefighting" and toward a world where pipelines manage themselves. In production, a boring, stable pipeline is always superior to a complex one that requires a 2 AM wake-up call.

Frequently Asked Questions

What is the most common reason for Spark job failures in Dataproc?

Resource exhaustion, specifically memory pressure caused by data skew, remains the top cause. In large datasets, if one partition has 10x more data than the others, the executor processing that partition can crash with an OutOfMemoryError. Dataproc Serverless helps by adjusting resources dynamically, but severe skew still needs to be fixed in the job itself with salted joins or adaptive query execution (AQE).
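A quick way to tell whether a job is skewed is to compare the largest partition with a typical one. The sketch below assumes you already have per-partition sizes (for example, from shuffle metrics); the function names are hypothetical, and the default threshold of 10 simply mirrors the "10x" rule of thumb in the answer above.

```python
from statistics import median


def skew_ratio(partition_sizes):
    """Ratio of the largest partition to the median partition size."""
    if not partition_sizes:
        raise ValueError("partition_sizes must not be empty")
    typical = median(partition_sizes)
    if typical == 0:
        # Mostly empty partitions: any non-empty one counts as extreme skew.
        return float("inf") if max(partition_sizes) > 0 else 1.0
    return max(partition_sizes) / typical


def is_skewed(partition_sizes, threshold=10.0):
    """True when the largest partition is at least `threshold` times the median."""
    return skew_ratio(partition_sizes) >= threshold
```

The median is used instead of the mean because one huge partition inflates the mean and can mask the very skew being measured.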

How does Gemini Cloud Assist help with ETL debugging?

In 2026, Gemini provides real-time RCA within the Cloud Console. It can read through megabytes of Spark executor logs, identify the specific Java exception, and link you directly to the offending line in your PySpark repository on GitHub.

Can I automate schema drift resolution?

Yes, using Dataplex and BigQuery's schema evolution features. While you should rarely allow schemas to change without oversight, you can configure "safe" evolutions (like adding new nullable columns) to occur automatically while flagging "unsafe" changes (like dropping a column) for human approval.