AI in Data Engineering: 2026 Impact and Challenges

AI will require 80% of data engineers to upskill by 2027. Discover where AI outperforms manual cleaning and the structural barriers facing automated pipelines.

Nisamudheen M • May 11, 2026

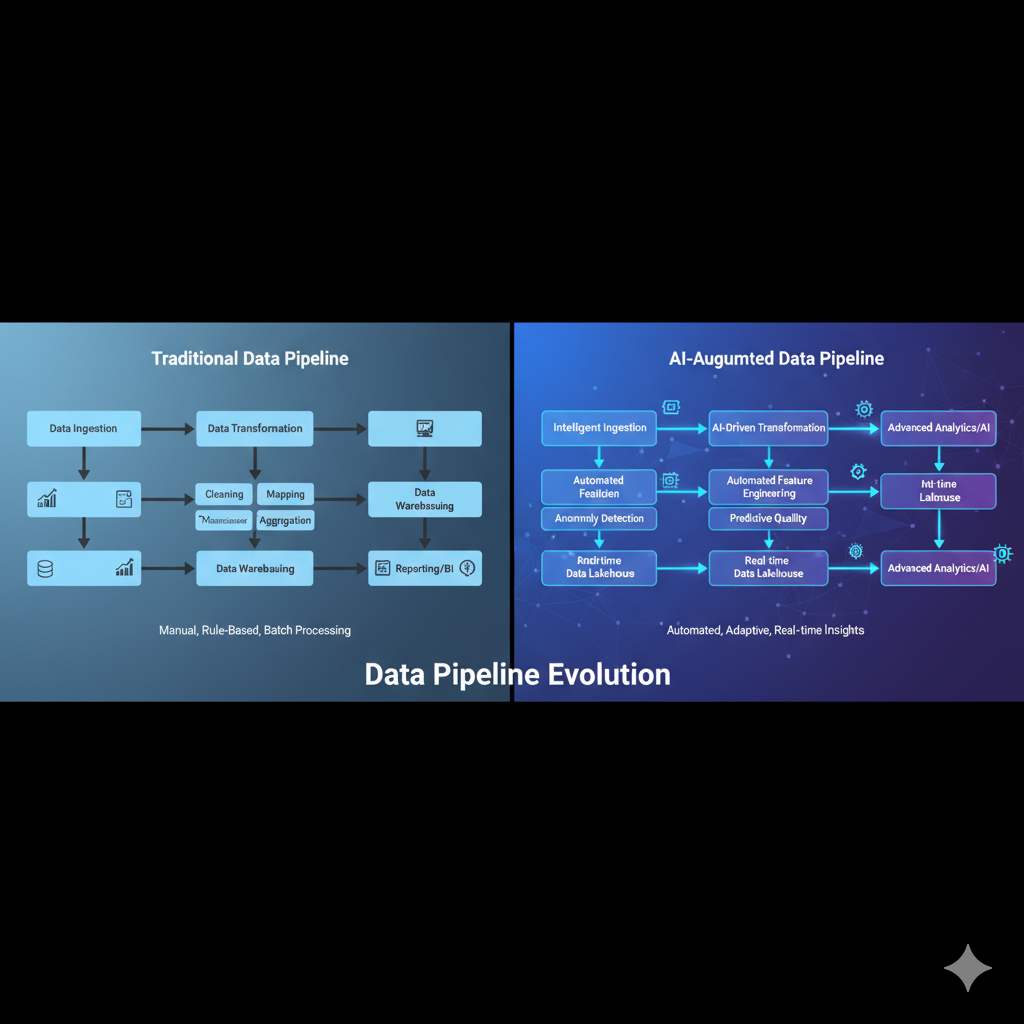

The era of manual, script-heavy data preparation is ending. By 2027, an estimated 80% of the engineering workforce will need to upskill as Generative AI (GenAI) moves from experimental "copilots" to autonomous agents capable of constructing, monitoring, and repairing data pipelines.

While this shift promises to eliminate the "data janitor" role, it introduces a new set of structural friction points. The transition is not a simple swap of human effort for machine logic; it is a total reconfiguration of how data is governed, cleaned, and delivered. In 2026, the success of a data team is measured not by the volume of SQL code they write, but by their ability to orchestrate AI agents that manage the pipeline's complexity.

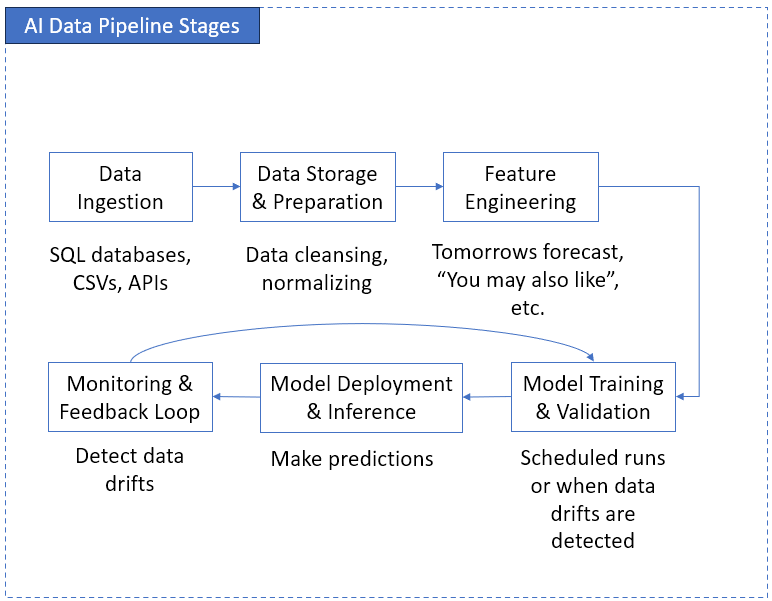

How does AI transform the data engineering workflow?

AI transforms data engineering by shifting the practitioner's focus from writing ETL (Extract, Transform, Load) logic to designing high-level data contracts and overseeing automated orchestration. Instead of manually mapping source-to-target fields, engineers now use Generative AI for automated data lineage and impact analysis.

In 2026, the modern pipeline is "agentic." Autonomous agents handle schema evolution, detect drift in real-time, and suggest optimizations for query performance. This automation is particularly visible in platforms like Snowflake and Databricks, which have integrated AI-driven data quality frameworks to replace static, rule-based monitoring.
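To make the idea of a data contract and schema-evolution detection concrete, here is a minimal sketch in plain Python. It is not the API of Snowflake, Databricks, or any specific framework; the contract format, the `SchemaDiff` class, the `diff_schema` function, and the sample column names are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SchemaDiff:
    added: dict    # columns present in the source but not in the contract
    removed: dict  # columns the contract expects but the source lacks
    retyped: dict  # column -> (contract_type, observed_type)

    @property
    def is_breaking(self) -> bool:
        # New columns are treated as additive; missing or retyped ones are not.
        return bool(self.removed or self.retyped)


def diff_schema(contract: dict, observed: dict) -> SchemaDiff:
    """Compare the contract's column types with what the source now delivers."""
    added = {c: t for c, t in observed.items() if c not in contract}
    removed = {c: t for c, t in contract.items() if c not in observed}
    retyped = {
        c: (contract[c], observed[c])
        for c in contract.keys() & observed.keys()
        if contract[c] != observed[c]
    }
    return SchemaDiff(added, removed, retyped)


# Hypothetical contract and observed source schema, for illustration only.
ORDERS_CONTRACT = {"order_id": "int", "amount": "float", "placed_at": "date"}
OBSERVED_SCHEMA = {
    "order_id": "int",
    "amount": "str",
    "placed_at": "date",
    "channel": "str",
}

diff = diff_schema(ORDERS_CONTRACT, OBSERVED_SCHEMA)
```

In this example `diff.retyped` reports that `amount` changed from `float` to `str`, so `diff.is_breaking` is true, while the new `channel` column is only reported as an addition. An agent could propose a mapping update from a diff like this, with an engineer approving anything marked breaking.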

What are the hardest challenges for AI in data cleaning?

The greatest challenge for AI in data cleaning is the "context gap"—the machine's inability to understand the business intent or tribal knowledge that defines whether a specific data point is "correct." While AI can find numerical outliers, it struggles with semantic nuances that only a domain expert can resolve.

Key hurdles for AI-driven cleaning include:

Semantic Ambiguity: Recognizing that "N/A," "-1," and "999" might all mean different things across different legacy systems within the same company.

Hallucination in Imputation: When AI "fills in" missing data, it may invent highly plausible but factually incorrect values that corrupt downstream analytics.

Operational Reality: A significant 37% gap exists between lab benchmark scores and real-world production performance, largely due to the messy, non-standardized nature of enterprise data.
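The semantic-ambiguity hurdle above can be sketched as a per-source lookup rather than a global rule. The source names (`crm_legacy`, `billing_v1`) and the meanings assigned to each sentinel are hypothetical; in practice this table encodes exactly the tribal knowledge that has to come from domain experts.

```python
from typing import Optional

# Hypothetical per-source sentinel tables. The same token can carry a
# different meaning depending on which legacy system produced it.
SENTINELS = {
    "crm_legacy": {"N/A": "not_applicable", "-1": "unknown"},
    "billing_v1": {"999": "not_collected", "N/A": "unknown"},
}


def normalize(source: str, raw: str) -> tuple[Optional[str], Optional[str]]:
    """Return (value, missing_reason); exactly one of the two is None."""
    token = raw.strip()
    reason = SENTINELS.get(source, {}).get(token)
    if reason is not None:
        return None, reason
    return token, None
```

With this table, `normalize("crm_legacy", "N/A")` and `normalize("billing_v1", "N/A")` yield different missing reasons, while `"999"` from `crm_legacy` passes through as a real value. Keeping the reason alongside the null preserves information that a blanket "replace with NULL" step would discard.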

Where are the insurmountable barriers for AI autonomy?

Structural barriers such as regulatory compliance, data privacy, and the "Black Box" transparency problem prevent AI from achieving full autonomy in data engineering. Regulations like the EU AI Act, adopted in 2024 and phasing in over the following years, require that high-risk AI systems maintain technical documentation and human oversight.

Data engineers must navigate these three primary barriers:

Regulatory Compliance: Data-locality and consent rules under GDPR call for an auditable record of how personal data is processed. Autonomous AI agents will not produce that record unless the pipeline is specifically architected to log their actions in a tamper-resistant way.

Security & Shadow AI: The rapid adoption of AI has led to a tripling of employees using ungoverned tools, creating massive security holes and sensitive data policy violations.

The Explainability Crisis: By 2026, organizations are shifting investments toward Explainable AI (XAI) because black-box automation is no longer acceptable for financial or medical data pipelines.
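One common way to approximate the audit-log requirement mentioned under Regulatory Compliance is a hash-chained log, where each entry commits to the one before it. The sketch below uses only the standard library; the entry layout and the function names are assumptions, and the result is tamper-evident rather than truly immutable, so it would still need write-once storage behind it.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


def _digest(prev_hash: str, event: dict) -> str:
    # Serialize deterministically so the same event always hashes the same way.
    payload = json.dumps(event, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an agent action, chaining it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

If every agent action (for example `{"agent": "transformation", "action": "cast_date", "table": "orders"}`) goes through `append_entry`, a later call to `verify` shows whether the history was altered after the fact.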

Where will AI outperform manual engineering?

AI consistently outperforms manual engineering in high-scale pattern recognition, repetitive boilerplate generation, and multi-dimensional data quality monitoring. In 2026, manual SQL or Python data cleaning is considered inefficient for datasets exceeding 1,000 rows, as AI-powered platforms can now identify and fix in seconds errors that would take a human hours.

| Capability | Manual Engineering (SQL/Python) | AI-Driven Engineering (Agentic) |
|---|---|---|
| Schema Evolution | Requires manual code updates for every change in the source system. | Automatically detects changes and suggests mapping updates or data contracts. |
| Data Imputation | Limited to statistical averages or simple rule-based "if-then" logic. | Uses large language models to infer missing text values based on surrounding context. |
| Drift Detection | Static alerts based on predefined thresholds. | Dynamic, unsupervised learning models that adapt to changing seasonal patterns. |
| Code Generation | Time-intensive; prone to syntax errors and non-optimized JOIN patterns. | Generates production-ready, peer-reviewed SQL and Python from natural language in seconds. |

How is AI reshaping the management of unstructured data?

One of the most profound shifts in 2026 is the ability of AI to integrate unstructured data, such as PDFs, medical scans, and architectural diagrams, directly into structured engineering pipelines. Previously, this data was often discarded or sent to costly manual labeling services. Today, LLM-based parsing agents can extract entities and sentiment with high accuracy in specialized domains, such as legal discovery or medical billing.

This capability is turning "Dark Data" into a competitive asset. Data engineers are now building "semantic layers" that sit between raw unstructured storage and the warehouse. These layers use vector embeddings to store the multi-dimensional meaning of files, allowing analysts to query a video archive using the same SQL syntax they would use for a transaction table.

However, this introduces a new category of technical debt: embedding drift. As the base models used to generate these embeddings are updated or swapped, the entire semantic layer may need to be "re-indexed." Managing this lifecycle is becoming a core competency for senior data engineers at companies like Databricks and Snowflake as they race to provide unified analytics across all file types.
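A simple way to keep embedding drift visible is to store the generating model's version next to every vector and treat any mismatch with the current model as stale. This is a minimal sketch under that assumption; the `EmbeddingRecord` fields, the version strings, and `stale_ids` are hypothetical names, not part of any vendor's vector store.

```python
from dataclasses import dataclass


@dataclass
class EmbeddingRecord:
    doc_id: str
    model_version: str  # which embedding model produced the vector
    vector: list        # the embedding itself


def stale_ids(records: list, current_version: str) -> list:
    """Return the documents whose vectors came from a different model version."""
    return [r.doc_id for r in records if r.model_version != current_version]


# Hypothetical index contents, for illustration only.
INDEX = [
    EmbeddingRecord("contract_001.pdf", "embed-v1", [0.12, 0.98]),
    EmbeddingRecord("scan_417.png", "embed-v2", [0.44, 0.31]),
    EmbeddingRecord("floorplan_9.pdf", "embed-v1", [0.77, 0.05]),
]

to_reindex = stale_ids(INDEX, current_version="embed-v2")
```

Vectors from different models generally live in different spaces and should not be compared with each other, which is why the sketch flags whole records for re-embedding instead of trying to adjust the stored vectors.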

What does the rise of "Agentic" workflows mean for legacy ETL?

The traditional "box-and-arrow" ETL diagram is being replaced by agentic swarms—small, specialized AI agents that negotiate with each other to move and transform data. In a 2026 enterprise environment, an "Ingestion Agent" might detect a new data source, while a "Security Agent" automatically applies PII masking before the "Transformation Agent" ever sees the payload.

This shift solves the long-standing problem of pipeline rigidity. In the past, if a source system changed its date format from MM/DD/YYYY to YYYY-MM-DD, the entire pipeline would break, requiring a manual fix. Agentic pipelines now use "self-healing" logic: the agent recognizes the pattern change, applies the correct casting function, and logs the correction without human intervention.
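The date-format example above can be sketched without any AI at all, which helps show what the "self-healing" step actually has to do: try the expected format, fall back to other known formats, and log the correction. The function name, logger name, and list of known formats are assumptions for illustration.

```python
import logging
from datetime import date, datetime

logger = logging.getLogger("self_healing")

# Formats the pipeline is willing to accept; anything else is a hard failure.
KNOWN_FORMATS = ("%m/%d/%Y", "%Y-%m-%d")


def parse_date(raw: str, expected: str = "%m/%d/%Y") -> date:
    """Parse with the expected format first, then fall back and log the fix."""
    try:
        return datetime.strptime(raw, expected).date()
    except ValueError:
        pass
    for fmt in KNOWN_FORMATS:
        if fmt == expected:
            continue
        try:
            parsed = datetime.strptime(raw, fmt).date()
        except ValueError:
            continue
        # Record the correction so a human can audit it later.
        logger.warning("parsed %r with %s instead of %s", raw, fmt, expected)
        return parsed
    raise ValueError(f"unrecognized date format: {raw!r}")
```

Both `parse_date("03/04/2026")` and `parse_date("2026-03-04")` return March 4, 2026, and the second call leaves a log entry. Note the limit of this approach: a switch from MM/DD/YYYY to DD/MM/YYYY would often parse without error and silently produce wrong dates, so format fallback is not a substitute for validation.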

While this sounds like the end of human engineering, the reality is more complex. These autonomous systems require sophisticated observability frameworks to prevent "feedback loops." If an AI agent incorrectly "fixes" a data point and then uses that faulty logic for the next 10,000 records, the resulting data pollution can be catastrophic. The modern engineer's role has moved from fixing the plumbing to auditing the robotic plumber's decisions.
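One guardrail against this kind of runaway correction is a budget on how much of a batch an agent may alter before a human has to look. The sketch below is an assumption about how such a check could work, not a feature of any particular observability product; `FixBudget` and its thresholds are hypothetical.

```python
class FixBudget:
    """Trips when the share of auto-corrected records exceeds a threshold."""

    def __init__(self, max_fix_ratio: float = 0.05, min_records: int = 100):
        self.max_fix_ratio = max_fix_ratio
        self.min_records = min_records  # avoid tripping on tiny samples
        self.seen = 0
        self.fixed = 0

    def record(self, was_fixed: bool) -> None:
        """Count one processed record and whether the agent modified it."""
        self.seen += 1
        self.fixed += int(was_fixed)

    @property
    def tripped(self) -> bool:
        if self.seen < self.min_records:
            return False
        return self.fixed / self.seen > self.max_fix_ratio
```

A pipeline would call `record` for every row and stop applying automatic fixes once `tripped` becomes true, routing the batch to review instead. The threshold values are a judgment call: a sudden jump in the fix rate is more often a sign that the agent's rule is wrong than that the data got worse.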

Practical Steps: Transitioning to an AI-Native Data Stack

For organizations still operating on legacy SQL-only pipelines, the transition to an AI-native stack must be incremental. The priority is not to replace your team with AI, but to augment your highest-value assets first.

Phase 1: Metadata Enrichment. Use AI to automatically generate descriptions and tags for your existing data catalog. This increases data discoverability as users can find tables using natural language, a trend seen in recent metadata management comparisons.

Phase 2: Automated Quality Guards. Implement AI-driven anomaly detection on critical tables. Unlike static rules (e.g., "column X cannot be null"), AI models can learn that "column X is usually null on weekends," preventing thousands of false-positive alarms.

Phase 3: Agentic Orchestration. Begin delegating non-critical, high-volume ingestion tasks to autonomous agents. This frees your senior engineers to focus on architectural design and compliance with new standards like the EU AI Act.
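The Phase 2 example, a column that is usually null on weekends, can be illustrated with a small statistical baseline in place of a learned model. This sketch swaps in a plain z-score against same-day-type history; the function name, thresholds, and the weekday/weekend split are assumptions, and a production system would likely model seasonality more carefully.

```python
from datetime import date, timedelta
from statistics import mean, pstdev


def is_anomalous(history, day, null_rate, z_threshold=3.0, min_std=0.01):
    """Compare today's null rate with past days of the same type.

    history is a list of (date, null_rate) pairs. Weekends are compared
    only with weekends, and weekdays only with weekdays.
    """
    is_weekend = day.weekday() >= 5
    peers = [rate for d, rate in history if (d.weekday() >= 5) == is_weekend]
    if len(peers) < 2:
        return False  # not enough history for this day type
    baseline = mean(peers)
    spread = max(pstdev(peers), min_std)  # floor avoids division by zero
    return abs(null_rate - baseline) / spread > z_threshold


# Hypothetical four weeks of history starting on a Monday:
# the column is almost always null on weekends and rarely null on weekdays.
START = date(2026, 1, 5)
HISTORY = [
    (START + timedelta(days=i), 0.95 if (START + timedelta(days=i)).weekday() >= 5 else 0.01)
    for i in range(28)
]
```

Against this history, a 96% null rate on a Saturday is treated as normal, while a 95% null rate on a Wednesday is flagged. A static "column X cannot be null" rule would have fired on every weekend in the history.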

By following this phased approach, teams can lower the risk of "automation shock" and ensure that their infrastructure remains auditable. The goal is to reach a state where the data pipeline is not just a pipe, but a dynamic, self-aware system that evolves with the business it serves. Every data engineer should aim to become the architect of their domain, ensuring that as the volume of information grows, the clarity of the resulting insights grows even faster.

How should data engineers prepare for 2027?

Data engineers must pivot from "builders" to "orchestrators." The value proposition of a modern engineer is no longer their ability to write code, but their ability to govern the AI that writes it. This requires a deep understanding of AI observability, data contracts, and ethical AI governance.

The goal is to build a "trust-first" data architecture. As the technology continues to converge—with top AI models separated by as little as 3% in performance—the differentiator for any company will be the quality, security, and reliability of the data feeding those models.

Frequently Asked Questions

Will AI replace data engineers by 2030?

No, but it will replace the current version of the data engineer. The role is evolving into a more strategic position focused on architecture, data product management, and governance. Engineers who only write basic SQL scripts face the highest risk of displacement.

Is AI data cleaning safe for regulated industries?

Only when paired with "Human-in-the-loop" (HITL) workflows. In industries like finance or healthcare, AI-suggested cleaning must be verified by a person or a secondary "LLM-as-a-judge" to meet compliance standards such as the EU AI Act.

Does AI increase the cost of data engineering?

Initially, yes—due to the high cost of tokens and specialized AI talent. However, the long-term ROI is found in a massive reduction in "technical debt" and the ability to process unstructured data (images, audio, PDF) that was previously inaccessible to traditional pipelines.