The global computing landscape reached a definitive pivot point in 2026, where the demand for specialized AI silicon officially decoupled from general-purpose server growth. While central processing units (CPUs) remain the operational backbone of the internet, graphics processing units (GPUs) now capture over 80% of data center capital expenditure, driven by the transition from generative experimentation to agentic AI production.

The hardware battle is no longer just about raw teraflops; it has shifted toward memory bandwidth, interconnect density, and thermal management. For enterprises, the decision between cloud-rented H100s and on-premise Blackwell or Rubin systems has become a complex financial and technical calculation. This shift is reshaping everything from data center design to the global energy grid.

Who Dominates the GPU Market in 2026?

NVIDIA maintains a commanding lead in the AI accelerator market, capturing roughly 75-80% of total revenue in 2026 despite aggressive moves from competitors and custom internal silicon from hyperscalers. The company reported $193.7 billion in data center revenue for fiscal year 2026, a result of its transition from chip manufacturer to full-stack systems provider.

AMD has emerged as the most significant challenger, leveraging its Instinct MI325X and MI400 series to secure a 5-7% share of the data center GPU market. While NVIDIA's CUDA ecosystem remains a massive moat, AMD's focus on high-bandwidth memory (HBM) capacity, from 256 GB of HBM3E on the MI325X to HBM4 on the MI400 series, together with its open-source ROCm software stack, has made it the primary alternative for enterprises seeking to avoid vendor lock-in.

What Is the Most Efficient Architecture for AI in 2026?

In 2026, the focus of GPU architecture has moved from "logic-intensive" designs to "memory-intensive" systems. The NVIDIA Vera Rubin platform has redefined performance benchmarks: a single rack-scale Vera Rubin system moves beyond the Blackwell series with roughly 3.6 exaflops of FP4 inference compute and 260 TB/s of aggregate NVLink bandwidth. The architecture is specifically designed to handle the "HBM crunch," where the bottleneck for large language models (LLMs) is no longer the speed of the processor but the speed at which data can reach it.
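A rough way to see the "HBM crunch" is to bound single-stream (batch size 1) decoding by memory bandwidth: if every generated token must read all model weights from HBM once, tokens per second cannot exceed bandwidth divided by weight size. The sketch below assumes that simplified model; the 70B-parameter FP8 model and the ~8 TB/s bandwidth figure (roughly B200-class HBM3e) are illustrative assumptions, not measured results.

```python
def max_decode_tokens_per_sec(params_billion: float,
                              bytes_per_param: float,
                              hbm_bandwidth_tb_s: float) -> float:
    """Bandwidth-bound ceiling on batch-1 decode speed.

    Simplifying assumption: each token streams every weight from HBM once;
    KV-cache reads, on-chip caching and compute time are ignored.
    """
    weight_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_per_sec = hbm_bandwidth_tb_s * 1e12
    return bandwidth_bytes_per_sec / weight_bytes


if __name__ == "__main__":
    # Hypothetical inputs: 70B parameters in FP8 (1 byte each), ~8 TB/s of HBM.
    ceiling = max_decode_tokens_per_sec(70, 1.0, 8.0)
    print(f"Upper bound: ~{ceiling:.0f} tokens/s per stream")
```

In this model, doubling memory bandwidth doubles the ceiling while extra FLOPs do not move it at all, which is the sense in which decoding is memory-bound.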

AMD has countered this with its own HBM4 implementation, partnering with Samsung to integrate 31 TB of HBM4 memory into its top-tier Instinct racks. This massive memory pool allows for larger models to reside entirely on the GPU, drastically reducing the latency associated with off-chip data movement.

| Metric | NVIDIA Blackwell (B200) | NVIDIA Vera Rubin (R100) | AMD Instinct MI400 |
|---|---|---|---|
| Max Compute (FP8) | 2.25 petaflops per GPU | ~4.5 petaflops per GPU | Competitive with B200 class |
| Memory Standard | HBM3e | HBM4 | HBM4 |
| Primary Interconnect | NVLink 5 (1.8 TB/s per GPU) | NVLink 6 (3.6 TB/s per GPU) | Infinity Fabric |
| Cooling Requirement | Air or liquid; liquid required for rack-scale NVL72 | Full direct-to-chip liquid | Liquid-cooling ready |

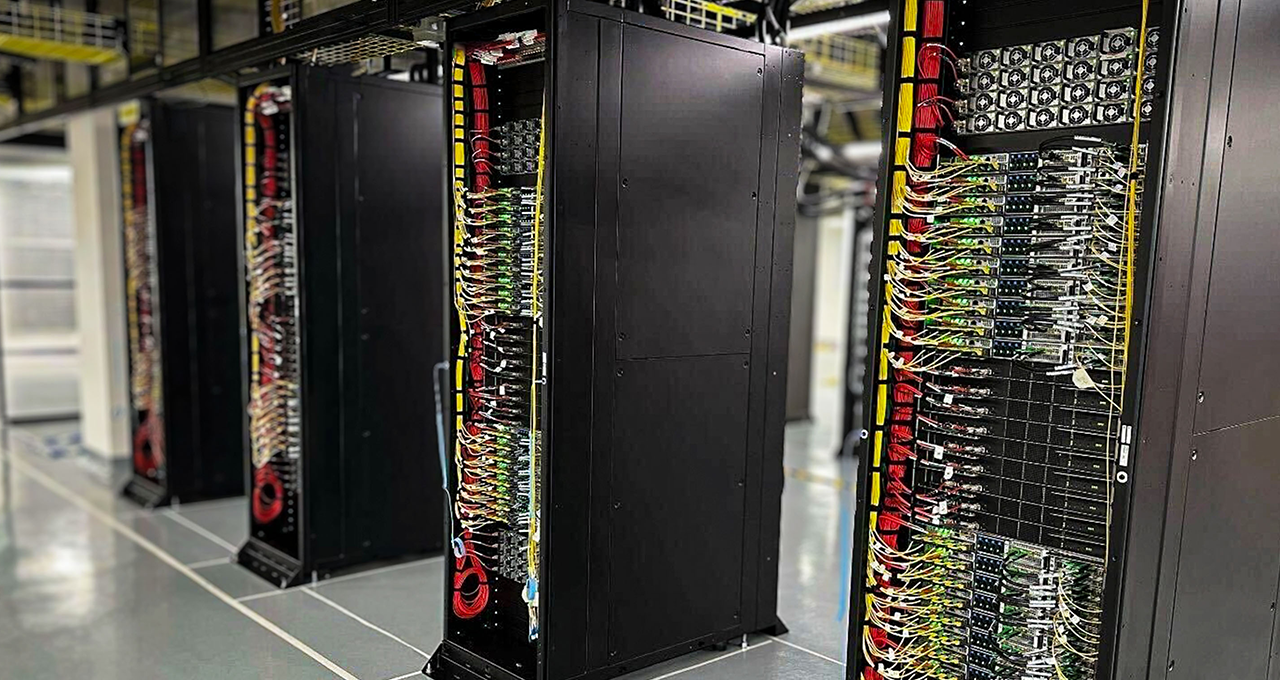

Why Is Liquid Cooling Mandatory in 2026?

The sheer power density of modern AI racks has rendered traditional air cooling obsolete for high-end deployments. In 2026, liquid cooling adoption in AI data centers is expected to reach 47%, as single racks now draw upwards of 120 kW. Direct-to-chip liquid cooling, in which coolant circulates through cold plates mounted directly on each GPU, is the only practical way to prevent thermal throttling on high-end NVIDIA and AMD clusters.
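To put 120 kW in perspective, the basic heat-balance relation Q = m_dot × c_p × ΔT gives the coolant flow a direct-to-chip loop would need. This is a sketch under simplifying assumptions: plain water as the coolant, the liquid loop capturing the entire rack load, and a 10 K temperature rise chosen for illustration; real loops often use glycol mixes and reject part of the heat to air.

```python
# Sensible-heat balance: Q = m_dot * c_p * delta_T
# Assumed coolant properties: plain water (c_p ~ 4186 J/(kg*K), ~1 kg per litre).
WATER_CP_J_PER_KG_K = 4186.0
WATER_DENSITY_KG_PER_L = 1.0


def required_flow_l_per_min(heat_load_kw: float, delta_t_k: float) -> float:
    """Coolant flow (litres/minute) needed to absorb heat_load_kw with a
    temperature rise of delta_t_k between loop inlet and outlet."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_per_s = heat_load_kw * 1000.0 / (WATER_CP_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    # Hypothetical case: the liquid loop removes a full 120 kW rack load
    # with a 10 K coolant temperature rise.
    print(f"~{required_flow_l_per_min(120, 10):.0f} L/min")
```

Halving the allowed temperature rise doubles the required flow, which is why pump and manifold sizing becomes a first-class design constraint at these rack densities.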

This transition isn't just about performance; it's a sustainability requirement. Liquid-cooled systems can dramatically reduce Power Usage Effectiveness (PUE) and Total Cost of Ownership (TCO) by eliminating the massive energy draw of high-speed fans and computer room air conditioning (CRAC) units. Companies like ASUS and Foxconn are now shipping fully integrated, liquid-cooled "AI Pods" that arrive as turnkey supercomputing units.
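PUE is total facility power divided by IT equipment power, so a value of 1.0 would mean zero overhead. The sketch below shows how a lower PUE translates into avoided energy; the 1 MW load and the PUE values of 1.5 (air-cooled) and 1.2 (liquid-cooled) are assumptions for illustration, not figures from a specific facility.

```python
HOURS_PER_YEAR = 8760


def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    return total_facility_kw / it_equipment_kw


def annual_overhead_kwh(it_load_kw: float, pue_value: float) -> float:
    """Yearly energy spent on everything except the IT load (cooling, power
    conversion, lighting), assuming the load and PUE stay constant."""
    return it_load_kw * (pue_value - 1.0) * HOURS_PER_YEAR


if __name__ == "__main__":
    it_kw = 1000.0  # hypothetical 1 MW of GPU servers
    # Assumed PUE values for illustration only: air-cooled vs liquid-cooled hall.
    air = annual_overhead_kwh(it_kw, 1.5)
    liquid = annual_overhead_kwh(it_kw, 1.2)
    print(f"Overhead avoided: {air - liquid:,.0f} kWh/year")
```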

Is Cloud or On-Premise GPU Hosting Better for AI?

For most enterprises in 2026, the financial math favors a "neo-cloud" strategy over traditional hyperscalers or on-premise hardware for training. Specialized GPU cloud providers offer significantly lower rates, with NVIDIA H100 instances starting at $2.00 per hour, compared to approximately $6.88 per hour on major hyperscalers like AWS.

| Provider Type | Typical H100 Price per GPU-Hour (2026) | Best For | Trade-offs |
|---|---|---|---|
| Hyperscalers (AWS/Azure) | $6.00 - $12.00/hr | Massive scale & ecosystem | Highest cost; complex egress fees |
| Specialized (GMI/Spheron) | $2.00 - $3.00/hr | Cost-optimized AI training | Fewer managed high-level services |
| Marketplaces (GPUnex) | $1.49 - $2.50/hr | Spot workloads & startups | Variable availability; raw infra |

However, on-premise dedicated servers are making a comeback for production-scale inference. For a consistent 24/7 workload, bare-metal GPU servers often provide better long-term TCO than cloud rentals, as they eliminate the "virtualization tax" and unpredictable networking costs that can add 20–40% to a cloud bill.
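Whether ownership pays off for a steady 24/7 workload comes down to a break-even calculation: upfront hardware cost divided by the monthly amount saved versus renting. The sketch below uses the H100 rates quoted above ($6.88/hr on a hyperscaler, $2.00/hr on a neo-cloud) and models the 20–40% networking overhead as a multiplier; the $400,000 eight-GPU server price and the $10/hr on-premise operating cost are assumptions, and the model ignores depreciation, staffing, and financing.

```python
HOURS_PER_MONTH = 730  # 8760 hours / 12 months


def monthly_cloud_cost(gpus: int, rate_per_gpu_hr: float,
                       overhead_fraction: float = 0.0) -> float:
    """Cloud bill for 24/7 use; overhead_fraction models extra networking and
    virtualization charges (e.g. 0.2 for +20%)."""
    return gpus * rate_per_gpu_hr * HOURS_PER_MONTH * (1.0 + overhead_fraction)


def break_even_months(capex: float, gpus: int, rate_per_gpu_hr: float,
                      onprem_opex_per_hr: float,
                      overhead_fraction: float = 0.0) -> float:
    """Months of 24/7 operation before owning beats renting.
    Returns infinity if renting is never more expensive per month."""
    cloud = monthly_cloud_cost(gpus, rate_per_gpu_hr, overhead_fraction)
    onprem = onprem_opex_per_hr * HOURS_PER_MONTH
    monthly_savings = cloud - onprem
    if monthly_savings <= 0:
        return float("inf")
    return capex / monthly_savings


if __name__ == "__main__":
    # Hypothetical inputs: $400,000 8-GPU server, $10/hr for power, cooling
    # and hosting, and the low end (+20%) of the cloud overhead range.
    for label, rate in [("hyperscaler", 6.88), ("neo-cloud", 2.00)]:
        months = break_even_months(400_000, 8, rate, 10.0, 0.2)
        print(f"{label}: break-even after ~{months:.1f} months")
```

Under these assumptions, owning pays back in under a year against hyperscaler pricing but needs roughly five years against neo-cloud pricing, so the answer is very sensitive to which cloud rate you compare against.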

The 2026 Forecast: From Training to Inference

The most significant trend for the remainder of 2026 is the pivot of hardware sales from training-centric to inference-centric chips. As the market moves from building models to serving them at world-scale, the industry is prioritizing silicon that offers the highest "tokens per dollar" rather than raw training throughput.

This shift favors architectures like the NVIDIA GB200 NVL4 and specialized inference accelerators integrated into the GPU fabric. By the end of 2026, expect the market to diversify further as custom AI chips, such as Google's TPUs and Meta's MTIA, continue to chip away at the general-purpose GPU market for specific internal workloads, forcing traditional chipmakers to innovate even faster on memory and interconnect speeds.
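"Tokens per dollar" is simply sustained throughput divided by the hourly price. The sketch below compares two hypothetical serving configurations; all throughput and price numbers are placeholders, and the calculation assumes the instance is fully utilized.

```python
def tokens_per_dollar(tokens_per_sec: float, price_per_hr: float) -> float:
    """Tokens generated per dollar of instance time at full utilization."""
    return tokens_per_sec * 3600.0 / price_per_hr


def cost_per_million_tokens(tokens_per_sec: float, price_per_hr: float) -> float:
    """Dollars spent to generate one million tokens."""
    return 1_000_000 / tokens_per_dollar(tokens_per_sec, price_per_hr)


if __name__ == "__main__":
    # Hypothetical configurations: throughput and price are placeholders,
    # to be replaced with measurements from your own model and serving stack.
    configs = {"config A": (3000.0, 2.00), "config B": (4500.0, 3.50)}
    for name, (tps, price) in configs.items():
        print(f"{name}: {tokens_per_dollar(tps, price):,.0f} tokens/$, "
              f"${cost_per_million_tokens(tps, price):.3f} per 1M tokens")
```

In this example the slower but cheaper configuration wins on cost per token, which is exactly the trade-off the metric is meant to expose.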

How Is Agentic AI Changing GPU Requirements?

Agentic AI—systems that can autonomously plan, reason, and execute multi-step tasks—requires a shift from high-throughput batch processing to low-latency, stateful "thinking" cycles. In 2026, the primary metric for GPU performance has evolved from simple training speed to sustained reasoning tokens per second (TPS). Unlike previous generative models that produced one-off outputs, agents maintain continuous loops of feedback, requiring GPUs to hold massive "context windows" in active memory.
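The memory an agent's context consumes is dominated by the attention key/value (KV) cache, which grows linearly with context length and with the number of concurrent sessions. The sketch below uses the standard per-token formula; the model shape is an assumed Llama-3-70B-style grouped-query-attention configuration, and the estimate ignores framework overhead and cache quantization.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2,
                   batch: int = 1) -> int:
    """Memory held by the attention key/value cache.

    The factor of 2 covers one key and one value vector per KV head,
    per layer, per token. bytes_per_value=2 corresponds to FP16/BF16.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * bytes_per_value * context_tokens * batch)


if __name__ == "__main__":
    # Assumed model shape: 80 layers, 8 KV heads, head dimension 128,
    # FP16 cache, 128k-token context, one session.
    size = kv_cache_bytes(80, 8, 128, 128 * 1024)
    print(f"KV cache for one 128k-token session: {size / 2**30:.0f} GiB")
```

At roughly 40 GiB per long-context session before any model weights are loaded, a handful of concurrent agents can exhaust a single GPU's HBM.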

This has led to the rise of multi-GPU fabric systems where the interconnect (NVLink or Infinity Fabric) is more critical than the individual chip. For an agent to solve a complex coding task or manage a customer service pipeline, it may need to bounce data between 8 or 16 GPUs in milliseconds. Interconnects like NVIDIA's NVLink 6, which offers 3.6 TB/s of bidirectional throughput per GPU, are specifically designed to treat a whole rack of GPUs as a single, massive logical processor.
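Per-GPU and rack-level interconnect figures are related by simple multiplication, and the same numbers give a best-case time for moving data between GPUs. The sketch assumes a 72-GPU rack and treats the quoted figures as bidirectional per-GPU bandwidth; the 10 GB payload is hypothetical, and real transfers add latency, protocol overhead, and contention.

```python
def aggregate_bandwidth_tb_s(num_gpus: int, per_gpu_bidir_tb_s: float) -> float:
    """Sum of per-GPU NVLink bandwidth across a rack (bidirectional)."""
    return num_gpus * per_gpu_bidir_tb_s


def ideal_transfer_ms(payload_gb: float, per_gpu_bidir_tb_s: float) -> float:
    """Best-case time to push payload_gb out of one GPU.

    A bidirectional figure counts both directions, so one direction gets
    half of it. Latency, protocol overhead and contention are ignored.
    """
    one_way_bytes_per_sec = per_gpu_bidir_tb_s / 2 * 1e12
    return payload_gb * 1e9 / one_way_bytes_per_sec * 1000.0


if __name__ == "__main__":
    print(f"72 x NVLink 6: {aggregate_bandwidth_tb_s(72, 3.6):.1f} TB/s")
    for gen, bw in [("NVLink 5", 1.8), ("NVLink 6", 3.6)]:
        print(f"{gen}: 10 GB in ~{ideal_transfer_ms(10, bw):.1f} ms")
```

72 × 3.6 TB/s ≈ 259 TB/s, in line with the ~260 TB/s rack figure cited for Vera Rubin earlier in this article.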

What Are the Real-World Costs of Powering AI Clusters?

The energy consumption of an AI-centric data center in 2026 is now a primary bottleneck for global expansion, with high-performance clusters consuming significantly more power than previous-generation CPU farms. A single rack-scale NVIDIA Blackwell system can draw up to 120 kW, so even a mid-sized deployment easily reaches multiple megawatts of demand. This surge is pushing liquid cooling adoption toward the expected 47% of AI data centers, because it manages the thermal density without a matching rise in cooling energy.
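Energy cost scales linearly with rack power, runtime, electricity price, and facility overhead, so a rough lifetime figure is easy to estimate. The electricity price, PUE, and full-utilization assumption below are illustrative placeholders, not quoted rates.

```python
HOURS_PER_YEAR = 8760


def energy_cost_usd(it_load_kw: float, years: float, price_per_kwh: float,
                    pue: float = 1.0, utilization: float = 1.0) -> float:
    """Electricity cost for a load running continuously.

    pue scales IT power up to facility power (cooling, conversion losses);
    utilization is the average fraction of peak draw.
    """
    kwh = it_load_kw * utilization * HOURS_PER_YEAR * years * pue
    return kwh * price_per_kwh


if __name__ == "__main__":
    # Hypothetical inputs: one 120 kW rack at full draw for 3 years,
    # $0.10/kWh, facility PUE of 1.2.
    cost = energy_cost_usd(120, 3, 0.10, pue=1.2)
    print(f"3-year electricity per rack: ${cost:,.0f}")
```

Multiplying by the number of racks shows how quickly a multi-megawatt deployment's power bill grows; local tariffs and measured utilization give a more realistic figure.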

Enterprises are facing a "power wall," where the cost of electricity is beginning to rival the cost of the hardware itself. Over a three-year lifecycle, the Total Cost of Ownership (TCO) for a GPU cluster is now split almost 50/50 between capital expenditure and operating expenditure (power and cooling). This has driven the industry toward more efficient FP4 and FP6 precision formats, which allow models to run with less power without sacrificing substantial accuracy.
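One reason lower precision saves power is that it shrinks the bytes that must be stored and moved per weight. The sketch below computes approximate weight memory for a hypothetical 70B-parameter model; it counts weights only (no KV cache or activations) and ignores the scale-factor metadata that block-scaled FP4/FP6 formats carry.

```python
# Storage bits per weight for each format. Block-scaled 4- and 6-bit formats
# also store small per-block scale factors, which this sketch ignores.
BITS_PER_WEIGHT = {"FP16": 16, "FP8": 8, "FP6": 6, "FP4": 4}


def weight_footprint_gb(params_billion: float, fmt: str) -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes).

    params_billion * 1e9 weights * bits / 8 bytes, divided by 1e9 bytes/GB.
    """
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


if __name__ == "__main__":
    for fmt in BITS_PER_WEIGHT:
        # Hypothetical 70B-parameter model.
        print(f"{fmt}: {weight_footprint_gb(70, fmt):.1f} GB")
```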

How to Choose the Right GPU for Your AI Infrastructure?

Selecting the right hardware in 2026 depends on whether your organization is focused on frontier model training, high-volume inference, or edge-case agentic workloads. While the high-end H200 and Blackwell chips get the headlines, many enterprises are finding better ROI using "right-sized" accelerators like the L40S for video and multi-modal apps, or AMD's MI325X for pure LLM serving.

For Training Large Models: Prioritize raw HBM4 capacity and interconnect speed. NVIDIA’s Rubin or AMD’s Instinct MI400 are the current gold standards for high-density training.

For Massive Inference (serving users): Look for the highest "tokens-per-watt." Specialized inference cards often provide 30-40% better efficiency than general-purpose training GPUs.

For Prototyping and Dev: Leverage "Neo-Cloud" providers where H100 instances are available for as low as $2.00/hr, allowing for rapid testing without the $400,000 upfront cost of a single DGX system.
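The three rules of thumb above can be expressed as a simple ranking step. This is a hypothetical helper, not a vendor tool: the `Accelerator` fields and the placeholder specs are assumptions, and the per-workload criteria follow the list above (memory and interconnect for training, tokens per watt for inference, hourly price for prototyping).

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    hbm_gb: float             # on-package memory capacity
    interconnect_tb_s: float  # per-GPU scale-up bandwidth
    tokens_per_joule: float   # tokens/s per watt, measured on your workload
    price_per_hr: float       # rental or amortized hourly cost


def rank_for(workload: str, candidates: list) -> list:
    """Order candidates by the criterion that matters most for a workload."""
    keys = {
        # Training: most memory first, then fastest interconnect.
        "training": lambda a: (-a.hbm_gb, -a.interconnect_tb_s),
        # Inference: most tokens per watt first.
        "inference": lambda a: -a.tokens_per_joule,
        # Prototyping: cheapest hourly rate first.
        "prototyping": lambda a: a.price_per_hr,
    }
    if workload not in keys:
        raise ValueError(f"unknown workload: {workload!r}")
    return sorted(candidates, key=keys[workload])


if __name__ == "__main__":
    # Placeholder specs for three hypothetical accelerators.
    fleet = [
        Accelerator("gpu-a", 192, 1.8, 2.0, 3.00),
        Accelerator("gpu-b", 288, 3.6, 1.5, 6.00),
        Accelerator("gpu-c", 48, 0.0, 3.0, 1.00),
    ]
    for workload in ("training", "inference", "prototyping"):
        print(workload, [a.name for a in rank_for(workload, fleet)])
```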

Frequently Asked Questions

Can I still use consumer GPUs (RTX 5090) for AI?

While consumer cards like the RTX 5090 remain powerful for local development and small-scale fine-tuning, they lack the memory capacity (32 GB of GDDR7 on the RTX 5090, versus 141 GB of HBM3e on an H200) and the NVLink interconnect required for modern enterprise agents. In 2026, most serious AI development has moved to data-center-grade silicon because of the software moats and multi-GPU scaling features locked out of consumer drivers.

Is AMD actually a viable alternative to NVIDIA in 2026?

Yes. With the maturation of the ROCm software stack and partnerships with major cloud providers, AMD has secured a 5-7% market share by focusing on memory-bound workloads. For open-weight models such as Llama 4, AMD hardware often offers better price-to-performance for inference than NVIDIA equivalents.

What is the lead time for purchasing high-end GPUs in 2026?

Supply chains have stabilized significantly compared to 2024, but high-end liquid-cooled racks still carry a 12-16 week lead time due to the complexity of the cooling infrastructure and HBM4 manufacturing limits. Many companies use hybrid cloud strategies to bridge this gap.