Modern frontend development in 2026 has shifted from static component libraries to intelligent, agentic interfaces that adapt in real time. By leveraging Generative UI (GenUI) and robust streaming protocols, developers are now building applications that don't just respond to user input but anticipate user intent.

According to Forrester's 2026 predictions, roughly 40% of enterprise applications will feature autonomous AI agents by the end of the year, representing a massive leap from the chatbot-centric designs of 2024. This evolution requires a fundamental rethink of the frontend stack, moving toward "vibe coding" environments and server-sent event (SSE) architectures.

What is Generative UI and How Does it Work?

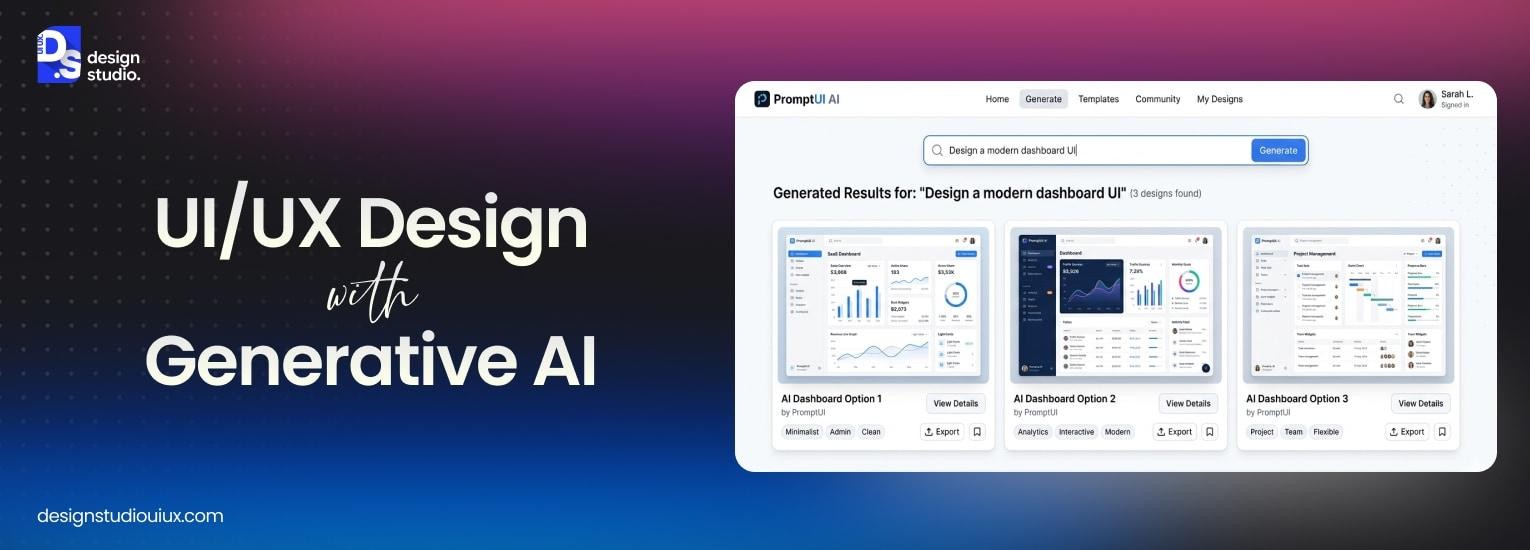

Generative UI is a design pattern where the AI agent creates rich, interactive interfaces dynamically—including forms, charts, and dashboards—rather than returning plain text for the user to parse. Instead of hard-coding every possible state, developers provide the AI with a library of trusted components (using tools like Vercel’s AI SDK) that the model can compose and render on the fly based on the conversation's context.

The stack for GenUI usually includes a coordination layer—like the Model Context Protocol (MCP)—to bridge the gap between backend tools and the frontend. In this environment, the "UI" is no longer a fixed set of pages; it is a fluid execution of components that appear exactly when the user needs to perform a specific action, such as approving a transaction or visualizing a data projection.

Why are Server-Sent Events the Standard for 2026 AI Apps?

Server-Sent Events (SSE) have emerged as the de facto standard for streaming LLM responses because they offer a lightweight, unidirectional way to push token-by-token updates without the overhead of WebSockets. While WebSockets are better for high-frequency bidirectional gaming or trading apps, SSE is more resource-efficient for 2026's AI workloads where the server is doing 90% of the conversational heavy lifting.

| Feature | Server-Sent Events (SSE) | WebSockets (WS) |
|---|---|---|
| Directionality | Unidirectional (Server → Client) | Full Bidirectional (Two-way) |
| Protocol | Standard HTTP/HTTPS | Upgraded WS Protocol |
| Reconnection | Native automatic reconnection support | Manual implementation required |
| Cloud Compatibility | High (Works seamlessly with edge functions) | Moderate (Requires sticky sessions/complex state) |
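
To make the SSE side of this comparison concrete, here is a minimal sketch of the wire format: a server-side encoder and an incremental client-side parser. The names `encodeSseEvent` and `SseParser` are hypothetical, and the parser assumes LF line endings only (the SSE format also permits CR and CRLF). In a browser, the built-in `EventSource` API handles this parsing and the reconnection for you.

```typescript
// One parsed SSE event: the event name plus the joined data lines.
interface SseEvent {
  event: string;
  data: string;
}

// Server side: serialize one event in the SSE wire format.
// Each payload line gets its own "data:" field; a blank line ends the event.
function encodeSseEvent(event: string, data: string): string {
  const dataLines = data.split("\n").map((line) => `data: ${line}`);
  return [`event: ${event}`, ...dataLines, "", ""].join("\n");
}

// Parse one complete event block (the text between two blank lines).
function parseBlock(block: string): SseEvent | null {
  let event = "message"; // default event name when no "event:" field is sent
  const data: string[] = [];
  for (const line of block.split("\n")) {
    if (line.startsWith(":")) continue; // comment or keep-alive line
    const colon = line.indexOf(":");
    const field = colon === -1 ? line : line.slice(0, colon);
    let value = colon === -1 ? "" : line.slice(colon + 1);
    if (value.startsWith(" ")) value = value.slice(1); // strip one leading space
    if (field === "event") event = value;
    else if (field === "data") data.push(value);
  }
  // Blocks without any data field are not dispatched.
  return data.length > 0 ? { event, data: data.join("\n") } : null;
}

// Client side: buffer raw text chunks and emit events as they complete.
class SseParser {
  private buffer = "";

  // Feed a chunk; returns every event that this chunk completed.
  push(chunk: string): SseEvent[] {
    this.buffer += chunk;
    const events: SseEvent[] = [];
    let boundary = this.buffer.indexOf("\n\n");
    while (boundary !== -1) {
      const parsed = parseBlock(this.buffer.slice(0, boundary));
      this.buffer = this.buffer.slice(boundary + 2);
      if (parsed !== null) events.push(parsed);
      boundary = this.buffer.indexOf("\n\n");
    }
    return events;
  }
}
```

Because the parser buffers partial input, an event split across network chunks is only emitted once its terminating blank line arrives.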

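In a React or Svelte project, streaming over SSE lets the client render the first token as soon as it arrives instead of waiting for the complete response. This makes a "Time to First Token" (TTFT) target such as 200ms achievable when the model and network allow it, so the user sees the interface reacting immediately while the full logic and UI components are still being generated in the background.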
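
One way to check a TTFT target is to time the stream on the client. The sketch below is an illustration under assumptions: `measureTtft` and `fakeTokens` are hypothetical names, and the token source is modeled as a generic `AsyncIterable<string>` rather than any specific SDK's stream type.

```typescript
// Result of timing a token stream.
interface StreamTiming {
  ttftMs: number | null; // null when the stream produced no tokens
  totalMs: number;
  text: string;
}

// Consume a token stream, calling onToken as each token arrives, and record
// how long the first token took. `now` is injectable so the helper is testable.
async function measureTtft(
  tokens: AsyncIterable<string>,
  onToken: (token: string) => void,
  now: () => number = Date.now,
): Promise<StreamTiming> {
  const start = now();
  let ttftMs: number | null = null;
  let text = "";
  for await (const token of tokens) {
    if (ttftMs === null) ttftMs = now() - start; // first token only
    text += token;
    onToken(token); // render immediately rather than waiting for the full reply
  }
  return { ttftMs, totalMs: now() - start, text };
}

// Hypothetical stand-in for a model's token stream.
async function* fakeTokens(): AsyncGenerator<string> {
  yield "Hel";
  yield "lo";
}
```

In a real application, `onToken` would append to component state, and the returned `ttftMs` could be reported to whatever analytics pipeline the team already uses.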

How do Vercel and Netlify Compare for AI Hosting?

Hosting an AI frontend in 2026 requires more than just a CDN; it requires a platform that manages durable functions and high-performance edge compute. Vercel has doubled down on being the "AI Cloud," offering purpose-built SDKs that handle the complex orchestration of streaming UI components. Netlify, meanwhile, offers a more flexible compute model with support for background tasks and scheduled functions on their free commercial tier.

Vercel’s advantage lies in its tight integration with the Next.js ecosystem, where "Server Actions" and the AI SDK work in tandem to stream GenUI. Developers can use the AI SDK's useChat hook to wire a streaming response to a local component library. Netlify counters this with broader framework support and more permissive execution limits for long-running AI agents that might need more than a default serverless timeout of around 10 seconds.

How to Build a GenUI Component Bridge

Implementing a bridge between LLM outputs and frontend components is the most critical technical step for a modern AI application. The goal is to move from text-based "Markdown responses" to Structured Schema Responses that the client can map to a local component registry.

Define the Registry: Map specific JSON keys to your UI components (e.g., "VIEW_CHART": StockChart).

Schema Enforcement: Use libraries like Zod or TypeChat to validate that the LLM's output follows the JSON structure required by your components, and reject anything that does not.

The Stream Parser: Use a streaming library, such as the Vercel AI SDK, to parse incoming tokens in real time. If a chunk is tagged as a "UI hint," the parser routes its data to the component renderer rather than the text chat window.
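
The three steps above can be sketched without any framework. Everything named here is hypothetical: the renderers return strings in place of real components, `parseUiHint` is a hand-written guard standing in for a schema library such as Zod, and each chunk is assumed to be either plain text or one complete JSON hint.

```typescript
// A UI hint emitted by the model: a registry key plus props for that component.
interface UiHint {
  type: string;
  props: Record<string, unknown>;
}

// Hypothetical stand-ins for real UI components: each renders props to a string.
type Renderer = (props: Record<string, unknown>) => string;

// Step 1: the registry maps JSON keys to trusted components.
const registry: Record<string, Renderer> = {
  VIEW_CHART: (props) => `<StockChart symbol="${String(props["symbol"])}" />`,
  VIEW_FORM: (props) => `<ApprovalForm id="${String(props["id"])}" />`,
};

// Step 2: schema enforcement. Returns null unless the chunk is a JSON object
// with a registered `type` and an object-valued `props`.
function parseUiHint(raw: string): UiHint | null {
  let value: unknown;
  try {
    value = JSON.parse(raw);
  } catch {
    return null; // not JSON, so the caller treats it as plain text
  }
  if (typeof value !== "object" || value === null) return null;
  const candidate = value as { type?: unknown; props?: unknown };
  if (typeof candidate.type !== "string") return null;
  // Own-property check so inherited keys like "toString" are never matched.
  if (!Object.prototype.hasOwnProperty.call(registry, candidate.type)) return null;
  if (typeof candidate.props !== "object" || candidate.props === null) return null;
  return { type: candidate.type, props: candidate.props as Record<string, unknown> };
}

// Step 3: route each chunk. Valid hints go to the component pane; everything
// else is appended to the chat transcript as text.
function routeChunks(chunks: string[]): { chat: string; components: string[] } {
  let chat = "";
  const components: string[] = [];
  for (const chunk of chunks) {
    const hint = parseUiHint(chunk);
    const render = hint === null ? undefined : registry[hint.type];
    if (hint !== null && render !== undefined) components.push(render(hint.props));
    else chat += chunk;
  }
  return { chat, components };
}
```

A production parser would also need to handle hints that arrive split across several chunks, which this sketch deliberately leaves out.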

By treating the AI agent as a "headless CMS" that emits component blueprints, you decouple the design from the logic. This allows your design team to update the look and feel of a dashboard in the component library without needing to retrain or even re-prompt the underlying language model.

The Emergence of Vibe Coding and Prototyping

As we move through 2026, the traditional distinction between "designer" and "developer" is blurring thanks to the rise of vibe coding. This approach focuses on high-level intent rather than low-level syntax. Instead of writing every div and span, developers describe the "vibe"—the behavior, aesthetics, and user journey—and the agentic frontend engine handles the boilerplate.

This shift is significantly reducing the time-to-market for new features. According to reports on the state of development in 2026, teams using vibe-driven AI tools are deploying 3.4x faster than those sticking to manual documentation-driven processes. However, this speed necessitates a "governance-first" approach where every AI-generated component is validated against a central design system to prevent visual drift.

Performance Metrics That Matter: Beyond Page Load

In the AI-first era, traditional metrics like Largest Contentful Paint (LCP) are taking a backseat to Interaction Readiness (IR) and Context Hydration Time. Since much of the UI is generated on-demand, users care less about how fast the full page loads and more about how quickly the agent can provide a relevant interactive widget.

Standardizing on Model Context Protocol (MCP) is proving critical for performance. By providing a unified way for frontend agents to access data, MCP reduces the round-trip latency often associated with multi-agent orchestration. For developers, this means the frontend can "pre-fetch" context before an LLM even finishes its reasoning step, allowing for transitions that feel instantaneous rather than laggy.
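
The pre-fetch idea can be illustrated with plain promises. This is a sketch under assumptions, not MCP client code: `fetchContext` and `callModel` are hypothetical callbacks standing in for a context lookup and a model request, and `answerWithPrefetch` is an invented name.

```typescript
// Hypothetical context lookup, standing in for a request to an MCP server.
type ContextFetcher = (resource: string) => Promise<string>;
// Hypothetical model call that resolves once reasoning has finished.
type ModelCall = (prompt: string) => Promise<string>;

async function answerWithPrefetch(
  prompt: string,
  resources: string[],
  fetchContext: ContextFetcher,
  callModel: ModelCall,
): Promise<{ answer: string; context: Record<string, string> }> {
  // Start every context request right away, without awaiting any of them.
  const pending = resources.map((name) =>
    fetchContext(name).then((value) => [name, value] as const),
  );
  // The model call is started next, so it runs concurrently with the fetches.
  const modelPromise = callModel(prompt);
  // Wait for both; total latency is roughly the slower of the two paths.
  const [answer, entries] = await Promise.all([modelPromise, Promise.all(pending)]);
  const context: Record<string, string> = {};
  for (const [name, value] of entries) context[name] = value;
  return { answer, context };
}
```

The design choice is simply to avoid awaiting the context before calling the model: by the time the answer resolves, the data the next widget needs is usually already in hand.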

How to Secure Agentic Interfaces Against Prompt Injection

As AI agents gain the ability to execute frontend tools autonomously, they introduce a new attack surface known as Indirect Prompt Injection. In this scenario, an agent might read a malicious email or webpage that contains hidden instructions, leading it to perform unauthorized actions like exfiltrating user data through a Generative UI component.

A 2026 report on agentic security highlights that approximately 1 in 8 security breaches now originate from autonomous agentic systems. To mitigate these risks, developers are shifting toward a "Zero Trust" architecture for frontend tools, where the UI layer acts as a strict validator rather than a blind executor.

Validate and Sanitize Input: Every JSON payload emitted by the LLM must be validated against a JSON Schema template before it reaches the component registry.

Controlled Execution Environment: Render generative elements inside sandboxed iframes so they cannot access the host document's cookies or local storage. Web components with Shadow DOM scope styles and markup, but they are not a security boundary on their own.
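
Both mitigations can be sketched in a few lines. The names `PropTemplate`, `validateProps`, and `sandboxedFrameAttributes` are hypothetical, and the template format is a simplified stand-in for a full JSON Schema validator. The one platform fact relied on is that an iframe whose `sandbox` attribute omits `allow-same-origin` is given an opaque origin.

```typescript
// Hypothetical prop template: allowed prop names and their primitive types.
type PropTemplate = Record<string, "string" | "number" | "boolean">;

// Strict validation: every templated key must be present with the right type,
// and no extra keys are allowed. Unknown keys are rejected, not ignored.
function validateProps(props: Record<string, unknown>, template: PropTemplate): boolean {
  const expected = Object.keys(template);
  if (Object.keys(props).length !== expected.length) return false;
  return expected.every(
    (key) =>
      Object.prototype.hasOwnProperty.call(props, key) &&
      typeof props[key] === template[key],
  );
}

// Attributes for an iframe that hosts generated markup. Because the sandbox
// value omits allow-same-origin, the frame cannot read the host page's
// cookies or localStorage.
function sandboxedFrameAttributes(generatedHtml: string): Record<string, string> {
  return {
    sandbox: "allow-scripts",
    srcdoc: generatedHtml,
  };
}

// In a browser, the attributes would be applied to a real element, e.g.:
//   const frame = document.createElement("iframe");
//   for (const [name, value] of Object.entries(attrs)) frame.setAttribute(name, value);
```

Returning attributes rather than an HTML string leaves escaping to the DOM, which avoids hand-rolled string concatenation around untrusted markup.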

Why Human-in-the-Loop is the Essential UX Pattern

To counter the unpredictable nature of AI-generated interfaces, the Human-in-the-Loop (HITL) pattern has become mandatory for high-stakes enterprise applications. According to 2026 UX design trends, the most effective interfaces are those that allow the agent to propose a UI state but require an explicit user "checkpoint" for final execution.

In practice, this means your Generative UI components should include a "Review & Commit" state. For example, if an agent generates a complex financial chart or a multi-step form, the interface should remain in a read-only "Draft" mode until the user clicks a verification button. This pattern not only prevents hallucinations from causing data corruption but also builds the necessary trust for users to interact with increasingly complex autonomous systems.
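
A "Review & Commit" state can be modeled as a small state machine. The sketch below is one possible shape, with hypothetical type and function names: the agent may revise a draft as often as it likes, but only an explicit user action moves it to a state where side effects are allowed.

```typescript
// A generated component is a draft until a user decides its fate.
type ReviewState =
  | { status: "draft"; payload: string }
  | { status: "committed"; payload: string; approvedBy: string }
  | { status: "rejected"; payload: string };

type ReviewAction =
  | { kind: "agentRevise"; payload: string }
  | { kind: "userApprove"; userId: string }
  | { kind: "userReject" };

// Pure reducer, usable with React's useReducer or any store.
function reviewReducer(state: ReviewState, action: ReviewAction): ReviewState {
  // Committed and rejected are terminal: nothing can change them afterwards.
  if (state.status !== "draft") return state;
  switch (action.kind) {
    case "agentRevise":
      return { status: "draft", payload: action.payload };
    case "userApprove":
      return { status: "committed", payload: state.payload, approvedBy: action.userId };
    case "userReject":
      return { status: "rejected", payload: state.payload };
  }
}

// Side effects run only for committed state; drafts stay read-only.
function execute(state: ReviewState, run: (payload: string) => void): boolean {
  if (state.status !== "committed") return false;
  run(state.payload);
  return true;
}
```

Because the agent has no action that produces the committed state, a hallucinated or injected instruction can at most change what the user is shown, not what gets executed.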

Summary: Building the 2026 Frontend

The modern AI frontend is less about visual design and more about orchestrating streaming events. To implement these ideas in 2026:

Adopt a Streaming-First Architecture: Use SSE to send both text tokens and UI component descriptors from the agent to the client.

Modularise your UI: Build a library of "blind" components that can be hydrated with data at runtime by an AI generator.

Implement Guardrails: Set rules that restrict the agent to approved actions and tools, and use MCP or similar protocols to enforce those limits so the agent stays safe and predictable.

By moving away from static layouts and embracing dynamic generation, frontend engineers are no longer just building screens—they are building the cognitive interface for machine intelligence.