Simple chatbot interfaces to large language models are no longer the leading edge of enterprise AI. In 2026, the primary shift in artificial intelligence is the transition toward agentic AI, where systems do not merely suggest actions but autonomously execute them across complex enterprise workflows. Gartner predicts that by the end of 2026, 40% of enterprise applications will feature task-specific AI agents, up from less than 5% in 2025.

This evolution marks a pivot from "AI as a tool" to "AI as a workforce." For software engineers and business leaders, 2026 is defined by the orchestration of these autonomous entities, the tightening of global regulatory frameworks, and the rise of machine-to-machine economies.

What Is Agentic AI and Why Does It Dominate 2026?

Agentic AI systems are defined by their ability to maintain context, use professional tools, and exhibit economic agency. Unlike the linear "prompt-and-response" cycles of 2023, modern agents in 2026 operating on platforms like OpenAI Frontier can manage multi-step projects such as customer onboarding or order-to-cash cycles without human intervention between every step.

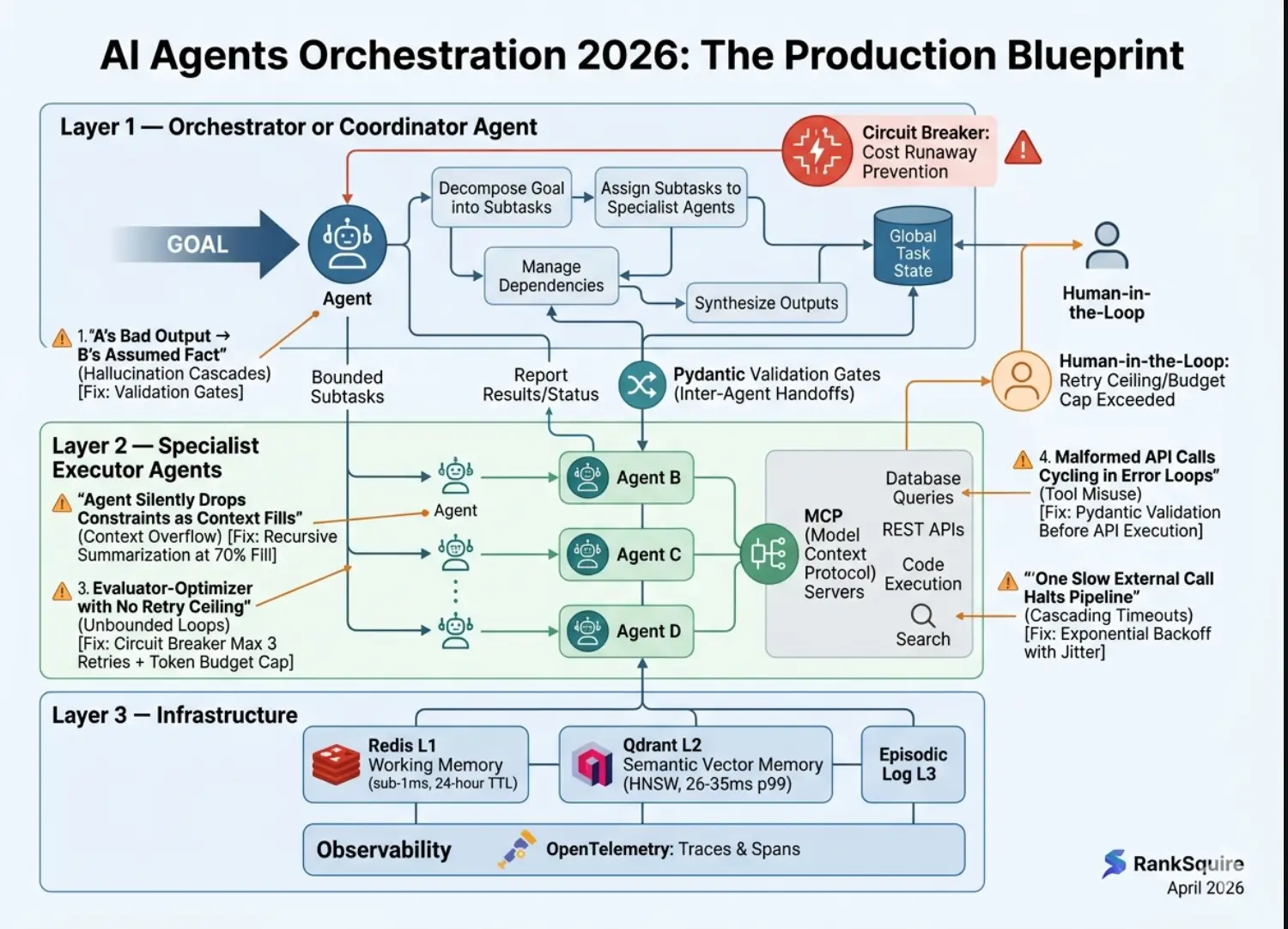

The core advantage lies in stateful runtime environments. These allow agents to "remember" work across sessions and tools, effectively breaking the automation ceiling that limited previous generations of software. Instead of following a rigid script, these agents reason through obstacles—retrying failed API calls, negotiating with other agents for data, and seeking human approval only when a pre-set risk threshold is exceeded.
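The retry-and-escalate behavior described above can be sketched in a few lines of Python. This is a minimal illustration, not any platform's actual runtime: `AgentState`, `run_step`, the `risk` score, and the 0.7 threshold are hypothetical names and values chosen for the example.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    """Hypothetical state that a runtime would persist between sessions."""
    completed: list[str] = field(default_factory=list)
    pending_approval: list[str] = field(default_factory=list)


def run_step(name: str, action: Callable[[], str], risk: float, state: AgentState,
             risk_threshold: float = 0.7, max_retries: int = 3,
             backoff_s: float = 0.0) -> str:
    """Run one workflow step: escalate risky steps, retry transient failures."""
    if risk > risk_threshold:
        # Above the pre-set threshold: park the step for a human instead of acting.
        state.pending_approval.append(name)
        return "needs_approval"
    for attempt in range(1, max_retries + 1):
        try:
            action()
            state.completed.append(name)
            return "done"
        except ConnectionError:
            if attempt < max_retries:
                # Exponential backoff before the next attempt.
                time.sleep(backoff_s * 2 ** (attempt - 1))
    return "failed"


# Usage: an API call that fails twice before succeeding.
calls = {"n": 0}

def flaky_api() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("transient failure")
    return "ok"

state = AgentState()
print(run_step("create_account", flaky_api, risk=0.2, state=state))   # done
print(run_step("issue_refund", lambda: "ok", risk=0.9, state=state))  # needs_approval
```

Keeping the approval queue inside the persisted state is what lets a later session pick up where an earlier one stopped.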

How Is Global Regulation Reshaping AI Architecture?

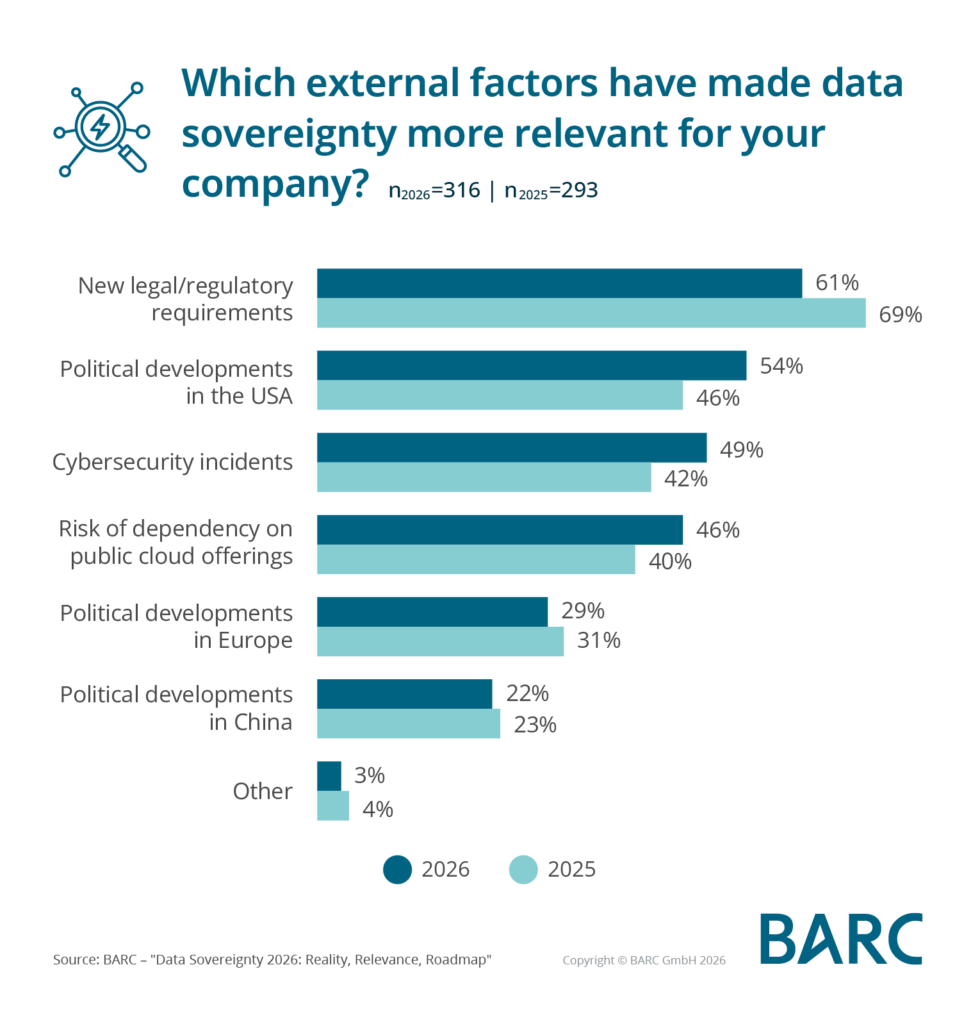

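Governance is no longer an afterthought; it is a primary design constraint for any AI system deployed today. The EU AI Act entered into force on August 1, 2024 and applies in stages: under its published timeline, most remaining provisions, including the bulk of the obligations for high-risk AI systems, become applicable on August 2, 2026. This fundamentally changes how high-risk AI systems are built and audited. The regulation imposes strict transparency rules and safety requirements on any company that places AI systems on the EU market or whose systems' output is used in the European Union, regardless of where the company is headquartered.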

For developers, this means an architectural shift toward preemptive cybersecurity and confidential computing. Teams building high-risk systems, such as those used in recruitment, credit scoring, or critical infrastructure, now need to plan for:

Human oversight by design: Real-time dashboards where human operators can intervene in autonomous agent loops.

Traceability logs: Immutable records of an agent's reasoning path and tool usage for audit purposes.

Data geopatriation: Moving data out of global public clouds into local, sovereign clouds to mitigate geopolitical and regulatory risks, a trend identified by Gartner.
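One common way to approximate the traceability logs listed above is a hash chain, where each record stores the hash of the one before it. The sketch below is an illustration only: `AuditLog` and its field names are hypothetical, and a hash chain makes tampering detectable rather than impossible, so real immutability would still depend on append-only storage.

```python
import hashlib
import json


class AuditLog:
    """Hypothetical tamper-evident log of agent actions (hash-chained records)."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first record

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(record: dict) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        body = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, agent: str, action: str, detail: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        record = {"agent": agent, "action": action, "detail": detail, "prev": prev}
        record["hash"] = self._digest(record)
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered record breaks it."""
        prev = self.GENESIS
        for record in self.entries:
            if record["prev"] != prev or record["hash"] != self._digest(record):
                return False
            prev = record["hash"]
        return True


log = AuditLog()
log.append("credit-agent", "tool_call", "fetched applicant income")
log.append("credit-agent", "decision", "referred to human reviewer")
print(log.verify())  # True

log.entries[0]["detail"] = "edited after the fact"
print(log.verify())  # False
```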

Why Are "Machine Customers" the New Growth Frontier?

One of the most surprising trends of 2026 is the emergence of the machine customer. AI agents now possess economic agency, meaning they are authorized to make financial transactions on behalf of individuals or corporations. We are seeing a rise in programmable financial infrastructure that allows an agent to shop for the best software vendor, negotiate a contract, and execute a payment—all autonomously.

This shift is forcing businesses to market not just to humans, but to algorithms. SEO (Search Engine Optimization) has evolved into AIO (Agent Interaction Optimization). If your business data is not structured in a way that an autonomous procurement agent can parse, your offering is effectively invisible to machine customers in the 2026 marketplace.

| Feature Area | 2024 Generative AI | 2026 Agentic AI |
|---|---|---|
| Primary Interaction | Conversational chat interfaces required constant human prompting. | Autonomous agents execute multi-step workflows with minimal oversight. |
| Infrastructure | GPU-heavy central clusters for model inference and training. | Distributed, sovereign clouds with confidential computing for compliance. |
| Economic Role | Content generation and creative assistance tools for employees. | Machine customers with authority to negotiate and execute transactions. |
| Governance | Voluntary guidelines and internal "responsible AI" frameworks. | Mandatory compliance with global acts like the EU AI Act (enforced 2026). |

How Should Enterprises Prepare for the Next Phase?

Building for 2026 requires moving away from experimental "AI playgrounds" toward integrated Agentic Architectures. Success is now measured by how well your AI integrates into existing data ecosystems like Databricks, Snowflake, and AWS.

Enterprises must focus on three core pillars:

Agent Orchestration: Moving from single-model usage to multi-agent systems where task-specific agents (e.g., an "Accounting Agent" and a "Legal Agent") collaborate on a shared goal.

Preemptive Security: Investing in AI-native security platforms that can detect and block "prompt injection 2.0"—malicious instructions hidden in data that agents might ingest while browsing the web.

Maturity-Led Implementation: According to recent enterprise AI roadmaps, the most successful firms are those that have moved past the demo phase and are now focusing on Stage 3 maturity: full autonomous operations with measured ROI on productivity.

As we move through the remainder of 2026, the gap between "AI-ready" and "AI-native" organizations will widen. The companies winning today are not just using AI to write emails; they are building digital workforces that can think, act, and transact in a regulated, global economy.

Why Is Data Sovereignty the New Competitive Moat?

In the current 2026 landscape, the value of AI is increasingly tied to where data resides and how it is protected. As geopolitical tensions rise and data localization laws tighten, organizations are shifting away from monolithic cloud models toward sovereign AI clouds. This trend is driven by the realization that generic LLMs trained on public data are reaching a point of diminishing returns; the real advantage is found in private, high-fidelity data that stays within national or corporate boundaries.

Software engineers are now tasked with building "bridge architectures" that allow agents to reason across these siloed data pools without ever actually moving the raw data. This is achieved through:

Federated Learning Layers: Training models on localized data and only sharing weight updates with a central orchestrator.

Confidential Computing: Using hardware-level isolation in trusted execution environments (TEEs) to ensure that even the cloud provider cannot see the data being processed by an AI agent.

Localized LLM Runtimes: Deploying small, highly optimized models (like Llama-4 Small or Mistral-7B v4) directly on-premises for task-specific reasoning.
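The federated learning item in the list above can be illustrated with a toy version of federated averaging. This is a sketch under simplifying assumptions: the model is a two-parameter linear fit, and `local_delta`, `federated_average`, and the sample data are hypothetical. Only weight deltas and sample counts leave each site; the raw `(x, y)` pairs do not.

```python
def local_delta(weights: list[float], data: list[tuple[float, float]],
                lr: float = 0.1) -> list[float]:
    """One gradient step for y = w*x + b, computed where the data lives."""
    w, b = weights
    n = len(data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
    return [-lr * grad_w, -lr * grad_b]


def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """Sample-weighted mean of per-site deltas, run by the central orchestrator."""
    total = sum(n for _, n in updates)
    if total == 0:
        raise ValueError("no samples reported")
    dim = len(updates[0][0])
    return [sum(delta[i] * n for delta, n in updates) / total for i in range(dim)]


# Two sites hold private data; the orchestrator only sees (delta, sample_count).
global_weights = [0.0, 0.0]
site_a = [(1.0, 2.0), (2.0, 4.0)]
site_b = [(3.0, 6.0)]
updates = [
    (local_delta(global_weights, site_a), len(site_a)),
    (local_delta(global_weights, site_b), len(site_b)),
]
avg = federated_average(updates)
global_weights = [w + d for w, d in zip(global_weights, avg)]
print(global_weights)
```

Weighting by sample count keeps a site with very little data from pulling the shared model as hard as a site with a lot.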

This focus on sovereignty also addresses the growing concerns over AI data copyright. In 2026, many of the first-generation AI training lawsuits have reached settlements, resulting in new industry standards for "Opt-In Training Data." Companies that can prove their AI was built on ethically sourced, sovereign data are winning contracts in heavily regulated sectors like defense and healthcare over competitors using "black box" models.

How Do Multi-Agent Systems (MAS) Coordinate Complex Tasks?

The most significant technical shift for 2026 is the maturity of Multi-Agent Systems (MAS). Instead of a single model trying to be a "jack of all trades," we see the emergence of specialized agent swarms. In a typical financial services firm, a customer request might trigger a coordination protocol between a "Know Your Customer" (KYC) agent, a risk-assessment agent, and a customer service representative agent.

This coordination requires a standardized language for machine interaction. Organizations are adopting Agent Communication Protocols (ACPs) that facilitate negotiation. For example, a procurement agent might broadcast a requirement for 500 server units; vendor agents then bid on the contract based on real-time inventory and logistics data. The entire negotiation completes in under a second, with human intervention required only for final contract binding.
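The broadcast-and-bid exchange in the server example can be sketched as follows. No specific protocol is modeled here: `Request`, `Bid`, `VendorAgent`, and `select_bid` are hypothetical message and agent shapes, and the selection rule (lowest unit price, ties broken by delivery time) is an assumption made for the illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    """Requirement broadcast by the (hypothetical) procurement agent."""
    item: str
    quantity: int
    max_unit_price: float


@dataclass(frozen=True)
class Bid:
    vendor: str
    unit_price: float
    delivery_days: int


class VendorAgent:
    """Hypothetical vendor agent that bids only if it can fill the whole order."""

    def __init__(self, name: str, stock: dict[str, int],
                 unit_price: float, delivery_days: int) -> None:
        self.name = name
        self.stock = stock
        self.unit_price = unit_price
        self.delivery_days = delivery_days

    def respond(self, request: Request) -> Optional[Bid]:
        in_stock = self.stock.get(request.item, 0) >= request.quantity
        affordable = self.unit_price <= request.max_unit_price
        if in_stock and affordable:
            return Bid(self.name, self.unit_price, self.delivery_days)
        return None


def select_bid(request: Request, vendors: list[VendorAgent]) -> Optional[Bid]:
    """Broadcast the request; return the cheapest bid (ties: faster delivery)."""
    bids = [bid for v in vendors if (bid := v.respond(request)) is not None]
    return min(bids, key=lambda b: (b.unit_price, b.delivery_days), default=None)


request = Request(item="server", quantity=500, max_unit_price=1000.0)
vendors = [
    VendorAgent("A", {"server": 600}, 950.0, 10),
    VendorAgent("B", {"server": 400}, 900.0, 5),  # too little stock, so no bid
    VendorAgent("C", {"server": 500}, 950.0, 7),
]
winner = select_bid(request, vendors)
print(winner.vendor)  # C
```

The function returns a proposal only; in line with the text, binding the contract would remain a separate, human-approved step.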

The challenge for IT teams in 2026 is managing agent sprawl. Without a robust central registry and permissioning system, these autonomous swarms can consume excessive API credits or, worse, create feedback loops that disrupt business operations. Leading firms are implementing Agent Ops (AOps), a specialized branch of MLOps, to monitor the performance, cost, and alignment of these distributed intelligence systems.
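A central registry with permissions and a credit budget might look roughly like the sketch below. `AgentRegistry`, its methods, and the credit units are hypothetical; the point is only that every tool call passes through one place that can refuse it.

```python
class AgentRegistry:
    """Hypothetical registry: which tools an agent may call and what it may spend."""

    def __init__(self) -> None:
        self._agents: dict[str, dict] = {}

    def register(self, agent_id: str, tools: set[str], credit_budget: int) -> None:
        self._agents[agent_id] = {"tools": set(tools), "budget": credit_budget, "spent": 0}

    def authorize(self, agent_id: str, tool: str, cost: int) -> bool:
        """Allow the call only for a known agent, a permitted tool, and remaining budget."""
        entry = self._agents.get(agent_id)
        if entry is None or tool not in entry["tools"]:
            return False
        if entry["spent"] + cost > entry["budget"]:
            return False
        entry["spent"] += cost  # charge the budget only when the call is allowed
        return True

    def spent(self, agent_id: str) -> int:
        return self._agents[agent_id]["spent"]


registry = AgentRegistry()
registry.register("accounting-agent", tools={"ledger_api"}, credit_budget=10)

print(registry.authorize("accounting-agent", "ledger_api", cost=6))  # True
print(registry.authorize("accounting-agent", "ledger_api", cost=6))  # False (over budget)
print(registry.authorize("accounting-agent", "email_api", cost=1))   # False (tool not granted)
print(registry.spent("accounting-agent"))                            # 6
```

A hard budget check of this kind is also a blunt way to cut off runaway feedback loops, since a looping agent exhausts its credits and is refused.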

What Are the Hidden Risks of AI Economic Agency?

While the productivity gains from "machine customers" and autonomous procurement are high, 2026 has introduced new categories of systemic risk. The primary concern is Algorithmic Collusion. When thousands of AI agents from different companies optimize for the same goal (like profit maximization in a supply chain), they can inadvertently create price-fixing schemes or market instabilities that regulators struggle to track.

Furthermore, we are seeing the rise of Autonomous Ransomware. In early 2026, cybersecurity firms identified agents that don't just steal data but autonomously negotiate their own ransom payments and move funds through decentralized finance (DeFi) protocols. As a result, a Zero Trust architecture, in which every agent action is authenticated and authorized rather than trusted by default, has become a baseline requirement for any enterprise deploying agentic AI.

To mitigate these risks, the industry is moving toward "Verifiable Reasoning." In this model, an agent doesn't just provide an output; it provides a Proof of Logic—a cryptographically signed sequence of the steps it took and the sources it consulted. This allows developers to audit an agent's "thought process" even if they weren't present during the execution of a multi-hour task.
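A signed reasoning trace of this kind can be approximated with the standard library. The sketch below uses an HMAC over a canonical JSON encoding of the steps; `sign_trace`, `verify_trace`, the step fields, and the demo key are hypothetical. Because HMAC relies on a shared secret, anyone who can verify could also forge, so a production design would more likely use asymmetric signatures.

```python
import hashlib
import hmac
import json


def _canonical(steps: list[dict]) -> bytes:
    # Stable encoding so the same steps always produce the same bytes.
    return json.dumps(steps, sort_keys=True, separators=(",", ":")).encode()


def sign_trace(steps: list[dict], key: bytes) -> dict:
    """Attach an HMAC-SHA256 signature to the ordered list of reasoning steps."""
    signature = hmac.new(key, _canonical(steps), hashlib.sha256).hexdigest()
    return {"steps": steps, "signature": signature}


def verify_trace(proof: dict, key: bytes) -> bool:
    """Recompute the signature and compare in constant time."""
    expected = hmac.new(key, _canonical(proof["steps"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, proof["signature"])


key = b"demo-key-not-for-production"  # assumption: key management is out of scope
steps = [
    {"step": 1, "action": "lookup", "source": "vendor_catalog"},
    {"step": 2, "action": "compare_prices", "source": "internal_budget"},
]
proof = sign_trace(steps, key)
print(verify_trace(proof, key))  # True

proof["steps"][0]["source"] = "unknown_site"
print(verify_trace(proof, key))  # False
```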

Frequently Asked Questions

Does the EU AI Act apply to US-based companies?

Yes, the EU AI Act has extraterritorial reach. If you place AI systems on the EU market, or if your AI system's output is used in the EU, the Act's obligations apply to you regardless of your physical location, with most of them becoming applicable on August 2, 2026.

What is the difference between an AI chatbot and an AI agent?

A chatbot is reactive, responding only when prompted. An AI agent is proactive; it has a goal and can use tools (like your email, CRM, or a web browser) to complete that goal autonomously over an extended period.

Is agentic AI safe for financial transactions?

It can be, provided the agent runs on "Programmable Financial Infrastructure": hard-coded spend limits, human-in-the-loop approvals for large amounts, and strict cryptographic guardrails that ensure an agent only interacts with verified vendor APIs. Granting agents economic agency remains a high-risk activity, so these controls need to sit inside a robust governance process rather than replace it.
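The three guardrails named in this answer can be combined into a single pre-payment check. This is a sketch with hypothetical names (`SpendPolicy`, `check_payment`, `Decision`) and made-up limits; the vendor allowlist stands in for the cryptographic verification of vendor APIs, which is not modeled here.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    NEEDS_HUMAN = "needs_human"
    REJECT = "reject"


@dataclass(frozen=True)
class SpendPolicy:
    """Hypothetical policy attached to one agent."""
    verified_vendors: frozenset
    hard_limit: float          # never exceeded, even with human approval
    approval_threshold: float  # above this, a human must sign off


def check_payment(policy: SpendPolicy, vendor: str, amount: float) -> Decision:
    """Decide whether a payment may proceed, needs a human, or is refused."""
    if vendor not in policy.verified_vendors:
        return Decision.REJECT
    if amount <= 0 or amount > policy.hard_limit:
        return Decision.REJECT
    if amount > policy.approval_threshold:
        return Decision.NEEDS_HUMAN
    return Decision.APPROVE


policy = SpendPolicy(
    verified_vendors=frozenset({"acme-cloud"}),
    hard_limit=10_000.0,
    approval_threshold=1_000.0,
)
print(check_payment(policy, "acme-cloud", 250.0))     # Decision.APPROVE
print(check_payment(policy, "acme-cloud", 5_000.0))   # Decision.NEEDS_HUMAN
print(check_payment(policy, "acme-cloud", 20_000.0))  # Decision.REJECT
print(check_payment(policy, "unknown-shop", 50.0))    # Decision.REJECT
```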