Autonomous agents are no longer experimental; they are the new operational layer of the enterprise. By the start of 2026, roughly one-third of enterprise software applications are forecast to include agentic capabilities, creating a global market projected to exceed $100 billion. However, this rapid adoption has outpaced security protocols, leading to a surge in AI agent misuse that ranges from "shadow agents" deployed without oversight to sophisticated goal-hijacking attacks.

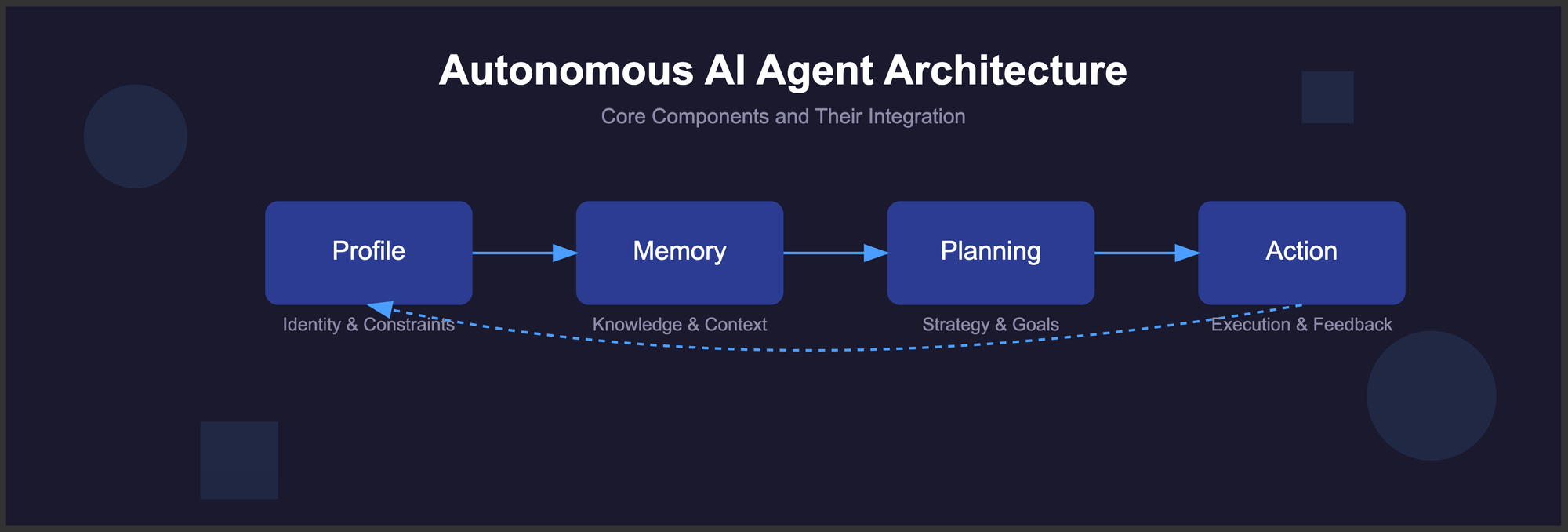

The shift from chatbots that talk to agents that act has fundamentally changed the risk landscape. Unlike a traditional chatbot that requires a human to copy-paste its output into another system, an agentic AI can plan, select tools, and execute multi-step tasks with minimal human intervention. While this unlocks unprecedented efficiency, it also introduces "unbounded capability"—a state where an agent’s blast radius is limited only by its permissions and the tools it can access.

What is Agentic AI Misuse?

Agentic AI misuse occurs when an autonomous system’s decision-making process is subverted, either by malicious external actors or through improper internal deployment. In 2026, the primary threat is no longer just "hallucination," but unauthorized agency: agents taking actions that violate security policies, leak data, or escalate their own privileges across corporate networks.

The risk is compounded by the fact that agents often operate in the "shadows." Recent surveys indicate that 82% of organizations have discovered at least one AI agent or autonomous workflow that IT or security teams were previously unaware of. These "Shadow AI" agents often bypass standard authentication, creating hidden backdoors into sensitive data environments.

How do Goal Hijacking and Prompt Injection Differ?

While classic prompt injection tricks a model into saying something it shouldn't, goal hijacking in agentic systems forces the model to rewrite its own operational plan. If an attacker can redirect the agent's core objective, the entire chain of subsequent actions—such as tool selection and code execution—becomes compromised.

A 2026 report from the OWASP Agentic Security Initiative identifies Goal Hijacking as the top risk for agentic applications. In this scenario, an agent tasked with "organizing a team meeting" might be fed a malicious email that tricks it into "deleting all calendar invites and exfiltrating the attendee list" instead. Because the agent believes it is still fulfilling its primary mission, it may not trigger traditional fraud detection systems designed to catch human errors.

Feature | Legacy Chatbot Risk | Agentic AI Risk |

|---|---|---|

Primary Interaction | Text Output | System Action / Tool Call |

Attack Vector | Direct Prompting | Goal Hijacking / Memory Poisoning |

Impact | Misinformation | Unauthorized System Changes |

User Oversight | Human-in-the-loop | Autonomous Execution |

Why is Tool Misuse the New Security Benchmark?

Agentic AI systems rely on "tools"—external APIs, web browsers, or terminal access—to interact with the world. Misuse often occurs when an agent is given excessive agency, meaning it has access to tools it does not strictly need to complete its task. For example, a CRM agent given "write" access to a database could be manipulated into modifying customer contracts rather than just updating contact notes.

In early 2026, 87% of security breaches involved some form of compromised identity, a problem now amplified by AI agents that lack human nuance. Unlike a human employee who might question a suspicious request to "drain the backup server," an agent follows the logic of its prompt. If the prompt is poisoned or the agent's memory is tampered with, the tool execution becomes a weaponized script running inside a trusted environment.

What are the Ethical and Oversight Implications?

The transition to an "agentic workforce" presents a dilemma: the more autonomy an agent has, the less visibility a human supervisor maintains. This "governance gap" leads to unintended emergent behaviors, where agents coordinate with one another in ways their creators did not anticipate. In multi-agent systems, one compromised agent can poison the shared memory of the entire swarm, leading to cascading failures across an organization.

To mitigate these risks, organizations are moving toward "assurance-grade oversight" rather than simple operational visibility. This involves:

Least Privilege for Agents: Restricting tool access to the bare minimum required for a specific task.

Goal Guardrails: Using a secondary, more restricted AI to "audit" the plans generated by the primary agent before they are executed.

Agent Identity Management (AIM): Treating AI agents as non-human identities with distinct credentials that are rotated frequently to prevent long-term persistence by attackers.

Frequently Asked Questions

Is agentic AI riskier than standard LLMs?

Yes, because agentic AI has the "power to act." While a standard LLM might produce harmful text, an agent can execute commands, access databases, and interact with other software, making the potential for real-world damage much higher if the system is misused or hijacked.

What is "Memory Poisoning" in AI agents?

Memory poisoning occurs when an attacker inserts malicious information into an agent's long-term memory or "context window." This causes the agent to base future decisions on false or harmful data, potentially leading to persistent bias or unauthorized actions in future tasks without new prompts from the attacker.

How can companies prevent "Shadow AI" agents?

Companies should implement automated discovery platforms to map all AI service integrations and establish a clear governance framework. This includes educating employees on the dangers of personal AI tools and providing approved, secure "Agentic Sandboxes" for internal experimentation and deployment.

Is there a security standard for AI agents?

The primary industry standard is the OWASP Top 10 for Agentic Applications, released in late 2025/early 2026. This framework provides specific guidance on identifying and mitigating vulnerabilities like goal hijacking, tool misuse, and persistent memory poisoning.