Software engineering is transitioning from a manual implementation craft to a high-level orchestration discipline. Gartner predicts that by 2026, 40% of enterprise applications will feature task-specific AI agents, up from less than 5% in late 2024. This shift marks the "agentic era," in which developers no longer use AI merely as a completion tool but as a collaborative partner capable of reasoning through Jira tickets, debugging complex CI/CD failures, and maintaining documentation autonomously.

The rise of agentic software development is not merely an incremental improvement in IDE autocomplete. It is a fundamental reorganization of the developer lifecycle. In earlier phases of AI adoption, tools like GitHub Copilot functioned as "co-pilots," providing snippets of code based on immediate context. Today, autonomous agents are being integrated into the very fabric of the repository, capable of multi-step reasoning and independent tool use to solve problems that previously required hours of human context-switching.

How are AI agents different from standard AI assistants?

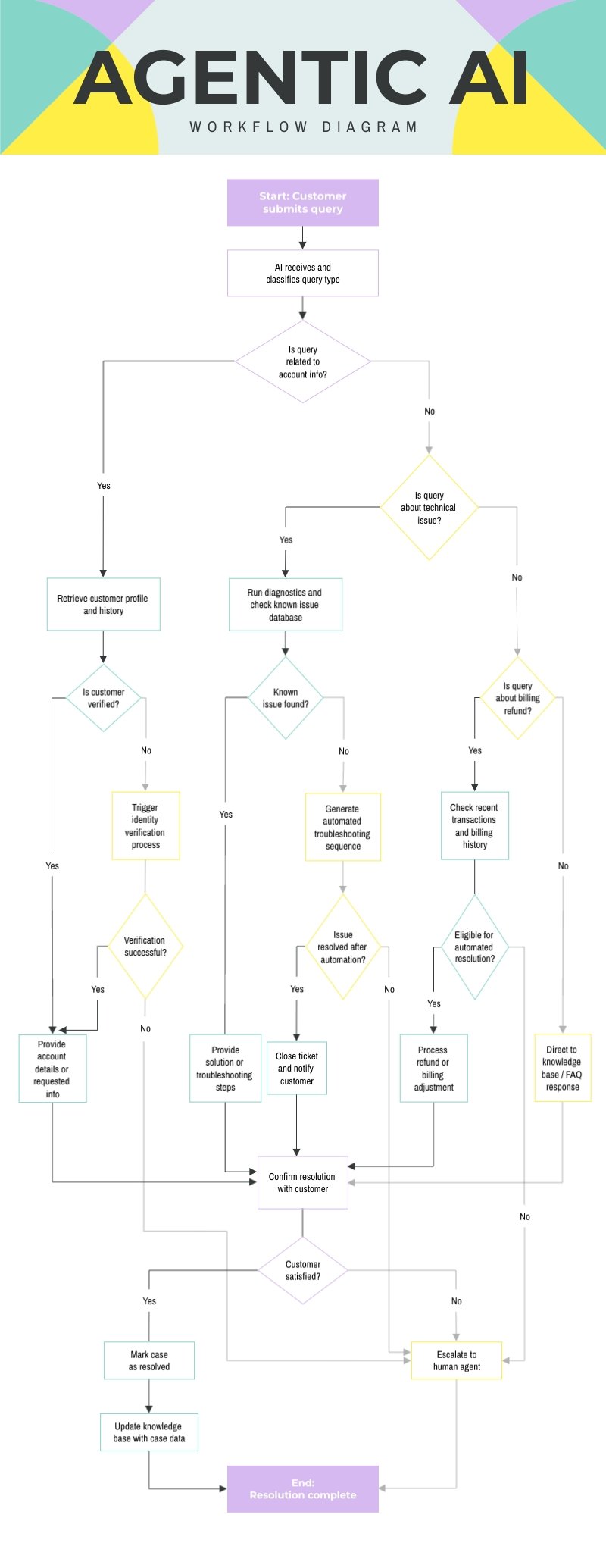

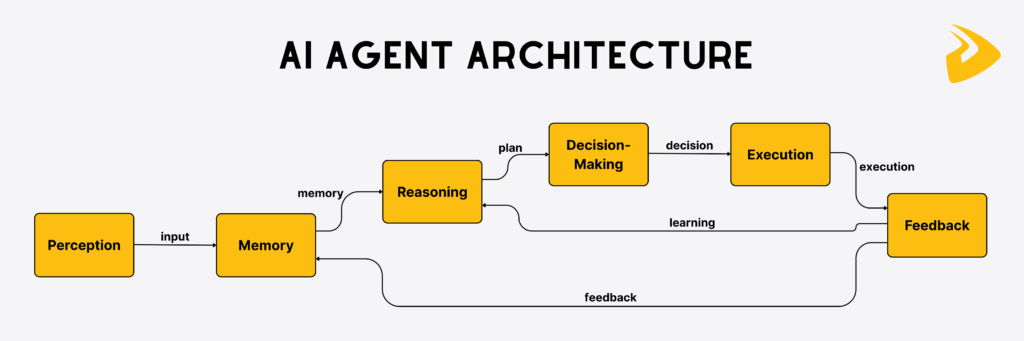

AI agents differ from standard AI assistants in their ability to maintain state, use external tools independently, and decompose complex goals into sequential tasks without constant human prompting. While a standard LLM might generate a function when asked, an agentic system can identify a bug in a codebase, write a failing test to reproduce it, modify the source code to fix it, and verify the solution by running the entire test suite.
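To make the reproduce, fix, and verify loop concrete, here is a minimal, self-contained Python sketch. It assumes a toy in-memory repository whose files and tests are Python source strings, and a list of candidate patches that, in a real agent, an LLM would generate; `Repo`, `fix_bug_agent`, and the log messages are hypothetical names for illustration, not any framework's API.

```python
from dataclasses import dataclass, field


@dataclass
class Repo:
    """Toy repository: source files plus a test suite, both held as Python source strings."""
    files: dict[str, str]
    tests: list[str] = field(default_factory=list)

    def run_tests(self) -> bool:
        """Load every source file, then run every test; True only if the whole suite passes."""
        namespace: dict = {}
        try:
            for source in self.files.values():
                exec(source, namespace)
            for test in self.tests:
                exec(test, dict(namespace))
        except Exception:  # syntax errors, crashes, and failed asserts all count as a red suite
            return False
        return True


def fix_bug_agent(repo: Repo, repro_test: str, candidate_patches: list[tuple[str, str]]) -> list[str]:
    """Reproduce a bug with a failing test, then try patches until the full suite passes."""
    log: list[str] = []
    repo.tests.append(repro_test)  # step 1: add a test that should fail on the buggy code
    if repo.run_tests():
        log.append("could not reproduce")
        return log
    log.append("reproduced")
    for filename, new_source in candidate_patches:  # step 2: apply a candidate fix
        previous = repo.files[filename]
        repo.files[filename] = new_source
        if repo.run_tests():  # step 3: verify against the entire suite, not just the new test
            log.append(f"fixed {filename}")
            return log
        repo.files[filename] = previous  # roll back a patch that broke something
        log.append("patch failed, rolled back")
    log.append("gave up")
    return log


if __name__ == "__main__":
    repo = Repo(
        files={"mathutil.py": "def average(xs):\n    return sum(xs) / len(xs)\n"},
        tests=["assert average([2, 4]) == 3"],
    )
    patches = [
        ("mathutil.py", "def average(xs):\n    return 0\n"),  # fixes the bug but breaks the old test
        ("mathutil.py", "def average(xs):\n    return sum(xs) / len(xs) if xs else 0\n"),
    ]
    print(fix_bug_agent(repo, "assert average([]) == 0", patches))
    # → ['reproduced', 'patch failed, rolled back', 'fixed mathutil.py']
```

The first patch makes the new test pass but fails the existing one, which is why verification runs the whole suite rather than only the reproduction test.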

The core of this capability lies in the orchestration layer. Frameworks such as LangGraph and CrewAI allow developers to define "agentic workflows" where different agents take on specialized roles. A "Researcher" agent might scan documentation, a "Coder" agent implement the logic, and a "Reviewer" agent validate the output against the project's security standards. This division of labor mirrors a traditional engineering team but operates at machine speed.
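The division of labor can be sketched as a pipeline of role functions that share one context object. This is plain Python, not the CrewAI or LangGraph API; the role bodies are stubs standing in for LLM calls, and `BANNED_PATTERNS` is a hypothetical stand-in for a project's security standards.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Context:
    """Shared state handed from one role to the next."""
    task: str
    notes: list[str] = field(default_factory=list)
    code: str = ""
    review_comments: list[str] = field(default_factory=list)
    approved: bool = False


# Hypothetical project security rule: substrings the Reviewer refuses to approve.
BANNED_PATTERNS = ["eval(", ".format(", "% ("]


def researcher(ctx: Context) -> None:
    # Stub for "scan documentation": a real agent would search docs and summarize findings.
    ctx.notes.append(f"For '{ctx.task}': use parameterized queries, never string formatting.")


def coder(ctx: Context) -> None:
    # Stub for an LLM call that turns the research notes into code.
    ctx.code = 'cur.execute("SELECT * FROM users WHERE id = ?", (user_id,))'


def reviewer(ctx: Context) -> None:
    # Validate the Coder's output against the security rules.
    ctx.review_comments = [f"banned pattern: {p}" for p in BANNED_PATTERNS if p in ctx.code]
    ctx.approved = not ctx.review_comments


def run_pipeline(task: str, roles: list[Callable[[Context], None]]) -> Context:
    """Run each specialist in order over the same context."""
    ctx = Context(task=task)
    for role in roles:
        role(ctx)
    return ctx


if __name__ == "__main__":
    result = run_pipeline("fetch a user by id", [researcher, coder, reviewer])
    print(result.approved)  # → True
```

Passing a single mutable context keeps the hand-offs explicit; orchestration frameworks add message passing, retries, and memory on top of this basic shape.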

Why is the "Creative Director" model replacing the "Code Producer"?

The identity of the software engineer is shifting from a producer of syntax to an orchestrator of automated logic. As GitHub’s 2026 data suggests, developers are increasingly acting as "creative directors" of code. In this model, the engineer defines the technical requirements, sets the architectural guardrails, and verifies the final output, while the agents handle the repetitive, detail-oriented execution of implementation and testing.

This shift resolves a long-standing tension in software development: the "toil" vs. "innovation" gap. Engineers historically spend up to 30% of their time on non-productive tasks like manual triage, documentation updates, and chasing dependency vulnerabilities. Agentic workflows in GitHub Actions now automate these repository-level tasks by interpreting intent from Markdown descriptions and executing changes directly within the CI/CD pipeline.

What are the leading frameworks for agentic orchestration?

Selecting an orchestration framework is now one of the most consequential decisions for a technical lead. The choice generally balances the need for granular control versus the speed of implementation.

| Feature | LangGraph | CrewAI | Claude Code |
|---|---|---|---|
| Orchestration Model | State Machine (Nodes + Edges) | Role-Based Teams (Specialists) | Direct Terminal/Repo Operation |
| Best For | Complex, branching logic with high state persistence | Collaborative pipelines and fast shipping | Direct codebase refactoring and debugging |
| Primary Strength | Built-in checkpointing for "human-in-the-loop" approval | High-level abstraction of agent communication | Deep integration with Anthropic’s coding-specific models |

LangGraph has become a common choice for "control-heavy" applications. It models workflows as explicit state machines, allowing developers to set "interrupts" where a human must approve an agent's decision before it proceeds. CrewAI, by contrast, focuses on "team-based" coordination, where agents are assigned specific personas like "QA Lead" or "Backend Developer," making it ideal for automating existing business processes.
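The interrupt idea can be illustrated with a tiny state machine that pauses before a designated node until a human sets an approval flag. This is a hypothetical sketch of the concept in plain Python; it does not reproduce LangGraph's actual classes or checkpointing API, and `ApprovalGraph`, the node names, and the `approved:<node>` flag convention are invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

State = dict


@dataclass
class ApprovalGraph:
    nodes: dict[str, Callable[[State], State]]
    edges: dict[str, Optional[str]]  # node -> next node; None means "end"
    interrupt_before: set[str] = field(default_factory=set)

    def run(self, state: State, start: str) -> tuple[State, Optional[str]]:
        """Run from `start`; return (state, paused_at), where paused_at is None once finished."""
        current: Optional[str] = start
        while current is not None:
            if current in self.interrupt_before and not state.get(f"approved:{current}"):
                return state, current  # checkpoint: hand control back until a human approves
            state = self.nodes[current](state)
            current = self.edges[current]
        return state, None


def plan(state: State) -> State:
    return {**state, "plan": "upgrade vulnerable dependency"}


def apply_change(state: State) -> State:
    return {**state, "applied": True}


def run_tests(state: State) -> State:
    return {**state, "tests_passed": state.get("applied", False)}


graph = ApprovalGraph(
    nodes={"plan": plan, "apply_change": apply_change, "run_tests": run_tests},
    edges={"plan": "apply_change", "apply_change": "run_tests", "run_tests": None},
    interrupt_before={"apply_change"},
)

if __name__ == "__main__":
    state, paused_at = graph.run({}, "plan")
    print(paused_at)  # → apply_change
    state = {**state, "approved:apply_change": True}  # the human approves
    state, paused_at = graph.run(state, paused_at)
    print(paused_at, state["tests_passed"])  # → None True
```

Because the paused state is returned rather than held in memory, it could be persisted and resumed later, which is the essence of checkpoint-based human-in-the-loop approval.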

How do agentic workflows improve security and code quality?

Agentic AI improves security by shifting "left" to the very moment code is authored, rather than waiting for a post-deployment scan. Contemporary agentic CI systems can automatically monitor pull requests for "secret leakage" or vulnerable patterns, but they go a step further than traditional linters: they can suggest and apply the actual remediation code.
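As a rough illustration of PR-time secret detection with a suggested remediation, the sketch below scans only the lines a unified diff adds. The two regular expressions are illustrative assumptions and far from exhaustive (production scanners ship much larger, tuned rule sets), and rewriting the literal as an `os.environ` lookup is just one possible remediation.

```python
import re

# Illustrative rules only; real scanners use far larger, tuned rule sets.
CREDENTIAL_RE = re.compile(r"""(?i)\b(api_key|secret|password|token)\s*=\s*["'][^"']+["']""")
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_credential": CREDENTIAL_RE,
}


def suggest_fix(line: str) -> str:
    """Propose loading a hard-coded credential from an environment variable instead."""
    match = CREDENTIAL_RE.search(line)
    if match is None:
        return "rotate this key and load it from a secrets manager"
    name = match.group(1)
    return line[: match.start()] + f'{name} = os.environ["{name.upper()}"]' + line[match.end():]


def scan_diff(diff: str) -> list[dict]:
    """Flag secrets on lines a pull request adds (unified diff format)."""
    findings = []
    for lineno, line in enumerate(diff.splitlines(), start=1):
        if not line.startswith("+") or line.startswith("+++"):
            continue  # skip context lines, removed lines, and file headers
        added = line[1:]
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(added):
                findings.append({"diff_line": lineno, "rule": rule, "suggestion": suggest_fix(added)})
    return findings


if __name__ == "__main__":
    diff = "\n".join([
        "--- a/config.py",
        "+++ b/config.py",
        "@@ -1,1 +1,3 @@",
        " import os",
        '+api_key = "sk-test-123"',
        "+DEBUG = True",
    ])
    for finding in scan_diff(diff):
        print(finding)
```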

By 2026, Gartner anticipates that autonomous AI software engineering will be synonymous with "preemptive cybersecurity." Instead of just detecting a risk, agents can autonomously propose library upgrades, rewrite insecure SQL queries, and verify that the fix doesn't break existing functionality. This can cut the "mean time to remediate" from days to minutes, because the agent already has context on the entire codebase and its dependencies.
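Rewriting an insecure SQL query typically means replacing string interpolation with a parameterized query, then checking that normal lookups still behave the same. The sketch below uses Python's built-in `sqlite3` module with an in-memory database; the table, the function names, and the injection payload are hypothetical examples of what an agent's proposed fix and its regression check might look like.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Before: user input is spliced into the SQL text, so quotes in `name` change the query.
    return conn.execute(f"SELECT id, name FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # After: a parameterized query; sqlite3 binds `name` as a value, never as SQL.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


def verify_fix(conn: sqlite3.Connection) -> bool:
    """Regression check: same results for normal input, no data leak for an injection payload."""
    payload = "x' OR '1'='1"
    preserves_behaviour = find_user_safe(conn, "alice") == find_user_unsafe(conn, "alice")
    blocks_injection = find_user_safe(conn, payload) == []
    return preserves_behaviour and blocks_injection


if __name__ == "__main__":
    conn = make_db()
    print(len(find_user_unsafe(conn, "x' OR '1'='1")))  # → 2 (every row leaks)
    print(verify_fix(conn))  # → True
```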

What are the risks of autonomous agentic development?

While the productivity gains are measurable, the delegation of authority to AI agents introduces "agentic risk." This includes the possibility of an agent propagating a subtle logic error across a distributed system or, in extreme cases, an agent accidentally deleting critical infrastructure if its permissions are too broad.

To mitigate these risks, modern engineering teams are implementing "Agentic Guardrails":

Human-in-the-loop (HITL): Requiring explicit manual approval for any agent-initiated code merge or deployment.

Sandboxed Execution: Running coding agents in isolated environments (like ephemeral Docker containers) where they can test code without access to production data.

Audit Logs: Maintaining a complete trail of the "reasoning steps" an agent took to reach a conclusion, allowing engineers to debug the agent's logic just like they would debug a human's PR.
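The three guardrails can be combined in a single wrapper that every agent action passes through. In this minimal sketch, assuming a hypothetical action vocabulary (`ALLOWED_ACTIONS`, `REQUIRES_APPROVAL`) and an `approve` callback that asks a human, merges and deployments are gated, unknown actions are blocked, and every attempt is written to an audit log together with the agent's stated reasoning. Actual sandboxed execution is out of scope here and is only marked by a comment.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical policy for a coding agent.
ALLOWED_ACTIONS = {"read_file", "run_tests", "open_pr", "merge_pr", "deploy"}
REQUIRES_APPROVAL = {"merge_pr", "deploy"}


@dataclass
class GuardedExecutor:
    approve: Callable[[str, dict], bool]  # asks a human reviewer; True means "go ahead"
    audit_log: list[dict] = field(default_factory=list)

    def execute(self, action: str, args: dict, reasoning: str) -> str:
        entry = {"ts": time.time(), "action": action, "args": args, "reasoning": reasoning}
        if action not in ALLOWED_ACTIONS:
            entry["outcome"] = "blocked: not allowlisted"
        elif action in REQUIRES_APPROVAL and not self.approve(action, args):
            entry["outcome"] = "blocked: human rejected"
        else:
            # A real system would dispatch to a tool running in an ephemeral sandbox here.
            entry["outcome"] = "executed"
        self.audit_log.append(entry)  # every attempt is logged, including blocked ones
        return entry["outcome"]

    def export_log(self) -> str:
        return json.dumps(self.audit_log, indent=2)


if __name__ == "__main__":
    executor = GuardedExecutor(approve=lambda action, args: False)  # the reviewer says no
    print(executor.execute("run_tests", {}, "verify the fix"))  # → executed
    print(executor.execute("merge_pr", {"pr": 42}, "all tests pass"))  # → blocked: human rejected
    print(executor.execute("drop_table", {"table": "users"}, "cleanup"))  # → blocked: not allowlisted
```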

How do AI agents handle real-world debugging workflows?

The true test of an agentic system is its performance on "zero-day" production bugs where the root cause is unknown. In a typical manual workflow, a developer must traverse logs, correlate timestamps with deployment events, and manually inspect the delta of current versus previous code. An agentic debugging pipeline, however, automates this discovery phase by treating the repository as a queryable knowledge base.

A concrete example of this in action is the integration of agents into Sentry or Datadog. When an exception occurs, a "Triage Agent" can automatically fetch the stack trace, identify the specific commit that introduced the breaking change, and analyze the associated Jira ticket to understand the developer's original intent. By the time a human engineer is paged, the agent has already drafted a "Pull Request for Fix" that includes a new unit test demonstrating the regression and the proposed code change.
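The "identify the commit" step can be approximated by correlating the error's first-seen timestamp with deployment events, then ranking that deploy's commits by how many files they share with the stack trace. This is a hypothetical heuristic in plain Python; it does not call the Sentry or Datadog APIs, and the data shapes (`Deploy`, a commit-to-files mapping) and sample values are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Deploy:
    at: datetime
    commits: list[str]  # SHAs shipped in this deploy


def suspect_commits(
    error_first_seen: datetime,
    stack_files: set[str],
    deploys: list[Deploy],
    changed_files: dict[str, set[str]],  # commit SHA -> files it touched
) -> list[str]:
    """Rank commits from the last deploy before the error by overlap with stack-trace files."""
    earlier = [d for d in deploys if d.at <= error_first_seen]
    if not earlier:
        return []
    latest = max(earlier, key=lambda d: d.at)
    scored = [(len(changed_files.get(sha, set()) & stack_files), sha) for sha in latest.commits]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable sort: ties keep deploy order
    return [sha for overlap, sha in scored if overlap > 0]


if __name__ == "__main__":
    deploys = [
        Deploy(datetime(2025, 1, 1, 9), ["a1"]),
        Deploy(datetime(2025, 1, 2, 9), ["b1", "b2", "b3"]),
    ]
    changed = {
        "a1": {"api/auth.py"},
        "b1": {"README.md"},
        "b2": {"billing/invoice.py", "billing/tax.py"},
        "b3": {"billing/tax.py"},
    }
    stack = {"billing/tax.py", "billing/invoice.py"}
    print(suspect_commits(datetime(2025, 1, 2, 10), stack, deploys, changed))  # → ['b2', 'b3']
```

The ranked list is only a starting point; an agent would still read the diffs of the top suspects before drafting a fix.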

This "Agent-First Debugging" reduces the Mean Time to Resolution (MTTR) significantly. Instead of starting from scratch, the human engineer acts as a validator. They review the agent's logic, check the test results in a sandboxed environment, and click "Merge." This shift reduces cognitive load and allows senior engineers to focus on architectural stability rather than recurring firefighting.

What is the infrastructure cost of running agentic dev teams?

Scaling an agent-driven development team introduces a new category of "AI Operational Expense" (AIOPEX). Unlike traditional SaaS seats for IDEs, agentic costs are primarily driven by token consumption and the "reasoning density" required for complex tasks. Developers must now choose between "Low-Reasoning" models for repetitive tasks and "High-Reasoning" models (like GPT-4o or Claude 3.5 Sonnet) for architectural changes.
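One way to act on this choice is a router that picks a model tier per task. The task categories and thresholds below are hypothetical assumptions, and the escalate-on-failure rule is one possible policy rather than an established practice; the tiers are labels, not specific model names.

```python
from enum import Enum


class Tier(Enum):
    LOW = "low-reasoning"  # cheaper, faster model for repetitive work
    HIGH = "high-reasoning"  # more capable, pricier model for complex changes


# Hypothetical task categories and thresholds.
HIGH_REASONING_TASKS = {"architecture_change", "cross_module_refactor", "root_cause_analysis"}
MAX_FILES_FOR_LOW_TIER = 5


def route(task_type: str, files_touched: int, previous_failures: int = 0) -> Tier:
    """Pick a model tier; escalate when the task is architectural, broad, or already failed."""
    if task_type in HIGH_REASONING_TASKS:
        return Tier.HIGH
    if files_touched > MAX_FILES_FOR_LOW_TIER or previous_failures > 0:
        return Tier.HIGH
    return Tier.LOW


if __name__ == "__main__":
    print(route("update_readme", files_touched=1))  # → Tier.LOW
    print(route("update_readme", files_touched=1, previous_failures=1))  # → Tier.HIGH
    print(route("architecture_change", files_touched=2))  # → Tier.HIGH
```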

Token Economy: A single agentic loop to fix a bug might involve 10-15 calls to an LLM, consuming upwards of 50,000 tokens as the agent "rethinks" its strategy after failing a test.

Compute Overhead: Running ephemeral environments (Docker containers) for agents to execute and test code in real-time adds to the cloud bill, requiring a robust "Agent Sandbox" infrastructure.

Context Window Strategy: Managing the context window is the primary technical challenge. Agents need access to the entire codebase, but passing millions of lines of code into every prompt is financially unsustainable. Engineering teams are solving this with retrieval-augmented generation (RAG), typically backed by a vector database, to provide "Just-in-Time" context to the agent.
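Just-in-time context can be illustrated with a tiny retriever that ranks code chunks against the task description and packs the best ones into a token budget. To stay self-contained, this sketch swaps in bag-of-words cosine similarity for learned embeddings and a vector database, and approximates tokens by whitespace-separated words; `build_context` and the chunk IDs are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercased identifier-like terms; a crude stand-in for an embedding model."""
    return re.findall(r"[a-z_][a-z0-9_]*", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_context(query: str, chunks: dict[str, str], token_budget: int) -> list[str]:
    """Return IDs of the most relevant chunks that fit within `token_budget`."""
    q = Counter(tokenize(query))
    scores = {cid: cosine(q, Counter(tokenize(text))) for cid, text in chunks.items()}
    selected, used = [], 0
    for cid in sorted(scores, key=scores.get, reverse=True):
        if scores[cid] == 0.0:
            break  # the remaining chunks share no terms with the query
        cost = len(chunks[cid].split())  # rough token estimate: whitespace-separated words
        if used + cost <= token_budget:
            selected.append(cid)
            used += cost
    return selected


if __name__ == "__main__":
    chunks = {
        "billing/tax.py#calc": "def calculate_tax(invoice): return invoice.total * tax_rate(invoice.region)",
        "billing/tax.py#rate": "def tax_rate(region): return RATES.get(region, 0.0)",
        "auth/login.py#login": "def login(user, password): return check_password(user, password)",
    }
    print(build_context("calculate_tax returns wrong tax for region", chunks, token_budget=20))
```

Only the two billing chunks are selected; the unrelated login code never enters the prompt, which is the cost saving the paragraph describes.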

Despite these costs, ROI is often calculated in "human hours saved." If an agent costing $5 in API credits can perform a documentation audit that would take a senior engineer four hours (costing ~$400 in salary and overhead), the economic case for agentic adoption becomes compelling for enterprise organizations.
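The arithmetic behind these figures is easy to parameterize. In the sketch below, the per-million-token price is a placeholder assumption, not any provider's actual pricing; the 50,000-token loop, the $5 agent cost, and the four-hour, ~$400 human cost come from the text above, which together imply a loaded rate of about $100 per hour.

```python
def loop_cost_usd(total_tokens: int, usd_per_million_tokens: float) -> float:
    """API cost of one agentic loop, given its total token consumption."""
    return total_tokens * usd_per_million_tokens / 1_000_000


def roi(agent_cost_usd: float, human_hours: float, loaded_hourly_rate_usd: float) -> dict:
    """Compare an agent's API spend with the loaded cost of the human time it replaces."""
    human_cost = human_hours * loaded_hourly_rate_usd
    return {
        "human_cost": human_cost,
        "savings": human_cost - agent_cost_usd,
        "cost_ratio": human_cost / agent_cost_usd,
    }


if __name__ == "__main__":
    PLACEHOLDER_PRICE = 10.0  # hypothetical blended $ per 1M tokens; check your provider's pricing
    per_loop = loop_cost_usd(50_000, PLACEHOLDER_PRICE)
    print(per_loop)  # → 0.5
    print(int(5 // per_loop))  # loops a $5 budget covers at this price → 10
    print(roi(5, 4, 100))  # → {'human_cost': 400, 'savings': 395, 'cost_ratio': 80.0}
```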

How do you build your first agentic coding pipeline?

Starting with AI agents doesn't require a total overhaul of your existing stack. The most effective approach is "incremental automation"—identifying a high-friction, low-creativity task and delegating it to a specialized agent.

Define the Scope: Choose a narrow task, such as "Updating README.md" or "Converting JS files to TypeScript."

Select your Orchestrator: For most frontend teams, the Vercel AI SDK or Claude Code provides a low-barrier entry point. For backend or complex logic, LangGraph offers the necessary state management.

Establish Guardrails: Never give an agent write-access to your `main` branch. Configure your CI/CD to require a human reviewer for any PR tagged with `identity: ai-agent`.

Monitor the Logic: Use tracing tools like LangSmith to visualize the agent's reasoning steps. This allows you to spot "hallucination loops" where an agent gets stuck trying the same failing fix repeatedly.
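A basic guard against hallucination loops is to fingerprint each attempted fix and stop the agent when the same patch fails repeatedly. This sketch is an assumption about how such a check could work and is independent of LangSmith, which the steps above mention for visualizing traces; `LoopDetector` and its `max_repeats` threshold are hypothetical.

```python
import hashlib
from collections import Counter


class LoopDetector:
    """Flags an agent that keeps resubmitting the same failing patch."""

    def __init__(self, max_repeats: int = 2):
        self.max_repeats = max_repeats
        self.failures: Counter = Counter()

    @staticmethod
    def fingerprint(patch: str) -> str:
        # Ignore whitespace-only differences so cosmetic variations count as the same attempt.
        lines = [line.strip() for line in patch.strip().splitlines() if line.strip()]
        return hashlib.sha256("\n".join(lines).encode()).hexdigest()

    def record_failure(self, patch: str) -> bool:
        """Record a failed attempt; return True if the agent appears stuck in a loop."""
        fp = self.fingerprint(patch)
        self.failures[fp] += 1
        return self.failures[fp] >= self.max_repeats


if __name__ == "__main__":
    detector = LoopDetector(max_repeats=2)
    print(detector.record_failure("x = 1\n"))  # → False
    print(detector.record_failure("  x = 1  \n\n"))  # → True (same patch, different whitespace)
```

When `record_failure` returns True, the pipeline could halt and page a human instead of spending more tokens on the same idea.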

As your comfort level grows, you can move from "Single-Agent" tasks to "Multi-Agent" orchestrations, where a "Product Manager Agent" writes the spec, a "Developer Agent" writes the code, and a "Security Agent" audits the result before it ever reaches a human's desk.

The road ahead: From Copilot to Autonomous Repository

The future of software development is not found in a better text editor, but in a more intelligent repository. We are moving toward a state where the repository is "alive"—active agents are constantly pruning dead code, optimizing database queries, and keeping documentation in sync with API changes.

For the developer, this means a higher floor but also a higher ceiling. The requirement to understand low-level syntax may decrease, but the demand for system design, security intuition, and orchestration skills will soar. The engineers who thrive in 2026 will be those who can treat AI agents not as black boxes, but as a diverse team of digital specialists capable of scaling their individual impact by an order of magnitude.

Frequently Asked Questions

Can AI agents replace human software engineers?

AI agents cannot replace human engineers because software development is about solving human problems, not just writing code. While agents handle implementation, humans are required for high-level architectural decisions, business requirement gathering, and ethical oversight. The role is evolving from "builder" to "architect and verifier."

Which programming language is best for building AI agents?

Python remains the dominant language for building AI agents due to its massive ecosystem of libraries like LangChain and CrewAI. However, TypeScript is rapidly growing in popularity for agentic workflows, especially for developers building web-integrated agents using frameworks like the Vercel AI SDK.

What is the difference between "Agentic AI" and "Generative AI"?

Generative AI produces content (text, code, images) based on a prompt. Agentic AI uses generative models as a "brain" but adds the ability to use tools, maintain memory, and act autonomously to achieve a goal. Generative AI is the engine; Agentic AI is the self-driving car built around that engine.