How AI Accelerates Data Pipeline Development (2026 Guide)

AI data foundations drive 40% faster ETL cycles. Learn how agentic systems, autonomous schemas, and knowledge engineering are redefining the 2026 data stack.

SatheeshKumar M • May 8, 2026

By 2026, the global data engineering market is projected to reach $105.4 billion, with growth driven largely by the integration of artificial intelligence into the core ETL/ELT lifecycle. Data engineers are no longer just "plumbers" of digital information; they are now orchestrating agentic systems that automate the most tedious parts of pipeline development, from schema mapping to autonomous error recovery.

The shift toward AI-augmented data engineering isn't just about speed—it's about survival in an era where data volumes have outpaced manual human intervention. Organizations that successfully implement AI-driven data foundations are investing up to four times more in governance and quality than those seeing poor AI outcomes. This gap defines the new competitive landscape of 2026.

How does generative AI reduce ETL development time?

Generative AI reduces the development cycle by automating the generation of complex SQL transformations and handling the nuance of semantic mapping between disparate data sources. In 2026, enterprises are seeing up to 40% faster operator response times when pipelines are managed by autonomous operations.

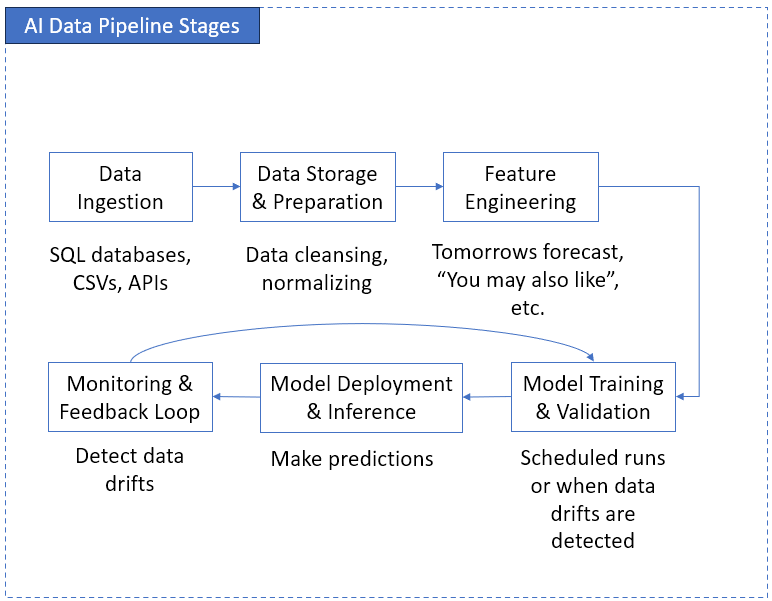

The primary bottleneck in traditional data engineering has always been the "last mile" of transformation—writing the specific logic that cleans, joins, and prepares data for business intelligence. Modern tools like Snowflake Cortex and Databricks Mosaic AI now provide SQL-native AI functions that can interpret unstructured data or suggest optimal joins based on historical query patterns. This has effectively shifted the role of the data engineer from writing code to auditing the output of specialized AI agents.
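As a rough illustration of the column-mapping step described above, the sketch below pairs source columns with target columns by plain string similarity. It is only a stand-in: the column names are hypothetical, and `difflib` from the Python standard library substitutes for whatever model a real platform would use, so it captures spelling similarity rather than true semantics.

```python
from difflib import get_close_matches

# Hypothetical column names, invented for this example.
SOURCE_COLUMNS = ["cust_id", "order_dt", "total_amt", "zzz"]
TARGET_COLUMNS = ["customer_id", "order_date", "total_amount"]


def propose_mapping(source, target, cutoff=0.6):
    """Suggest the closest target column for each source column, or None."""
    mapping = {}
    for column in source:
        matches = get_close_matches(column, target, n=1, cutoff=cutoff)
        mapping[column] = matches[0] if matches else None
    return mapping


mapping = propose_mapping(SOURCE_COLUMNS, TARGET_COLUMNS)
for src, dst in mapping.items():
    print(f"{src} -> {dst or 'needs human review'}")
```

Columns with no candidate above the cutoff come back as `None`, which matches the auditing role described here: the engineer reviews proposals and resolves the leftovers by hand.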

What are the key architectural shifts in 2026?

The architecture of data pipelines is moving away from rigid, scheduled batches toward autonomous, agentic systems that reason across data sources and make real-time adjustments. The autonomous data platform market is expected to hit $15.23 billion by 2033, reflecting a foundational shift in how we build for reliability and scale.

| Feature | Traditional Pipelines (2020-2024) | AI-Augmented Pipelines (2026) |
|---|---|---|
| Schema Handling | Manual definition and rigid updates | Autonomous schema evolution and inferencing |
| Error Handling | Static retry logic; manual intervention | Agentic self-healing and root cause analysis |
| Optimization | Manual indexing and partition tuning | Dynamic performance tuning via predictive ML |
| Data Quality | Rule-based checks (Great Expectations) | Generative anomaly detection and synthetic patching |

In this new model, specialized AI agents collaborate under central coordination. For example, an ingestion agent might detect a change in a source API's JSON structure, while a secondary governance agent automatically updates the catalog and applies PII masking—all before a human engineer even opens a ticket.
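The ingestion and governance example above can be sketched in a few lines. This is a minimal illustration, not how any particular platform implements it: the field names, the `PII_FIELDS` set, and the fixed `"***"` placeholder are all assumptions, and only top-level JSON fields are compared.

```python
from typing import Any

# Hypothetical field names treated as PII for this illustration.
PII_FIELDS = {"email", "phone"}


def infer_schema(record: dict[str, Any]) -> dict[str, str]:
    """Map each top-level field to the name of its Python type."""
    return {key: type(value).__name__ for key, value in record.items()}


def diff_schemas(old: dict[str, str], new: dict[str, str]) -> dict[str, list[str]]:
    """Report fields that were added, removed, or changed type."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "retyped": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


def mask_pii(record: dict[str, Any]) -> dict[str, Any]:
    """Replace values of known PII fields with a fixed placeholder."""
    return {k: ("***" if k in PII_FIELDS else v) for k, v in record.items()}


# The "ingestion agent" step: compare the known schema with a new payload.
known = infer_schema({"id": 1, "name": "Ada"})
incoming = {"id": "1", "name": "Ada", "email": "ada@example.com"}
drift = diff_schemas(known, infer_schema(incoming))

# The "governance agent" step: mask PII before the record moves downstream.
masked = mask_pii(incoming)
print(drift)
print(masked)
```

Here the new `email` field shows up as added and `id` as retyped (integer to string), so a catalog update and masking can be triggered from the same diff.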

Why is knowledge engineering replacing traditional coding?

Knowledge engineering has emerged as the critical skill for 2026, prioritizing the ability to design data semantics over the ability to write raw Python or Scala. Organizations are realizing that while AI can write the transformation code, it cannot define the business meaning of the data it is processing.

Data engineers in Los Angeles and other tech hubs are increasingly focused on building "AI-ready" foundations. This involves creating the semantic layers and knowledge graphs that allow generative AI to interpret enterprise data accurately. Without this human-led semantic grounding, AI-generated pipelines risk creating "hallucinated" data products that look correct but fail to reflect business reality.
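To make the idea of semantic grounding concrete, here is a minimal sketch of a semantic layer as a plain dictionary of agreed metric definitions. The metric names, SQL expressions, and table name are hypothetical; a production semantic layer or knowledge graph would carry far more context.

```python
# Hypothetical metric definitions; names and expressions are illustrative.
SEMANTIC_LAYER = {
    "net_revenue": {
        "expression": "SUM(amount) - SUM(refund_amount)",
        "table": "orders",
        "description": "Gross sales minus refunds, in USD.",
    },
    "active_customers": {
        "expression": "COUNT(DISTINCT customer_id)",
        "table": "orders",
        "description": "Customers with at least one order in the period.",
    },
}


def unknown_metrics(requested):
    """Return requested metric names that have no agreed definition."""
    return [name for name in requested if name not in SEMANTIC_LAYER]


def render_query(metric):
    """Build SQL from the agreed definition rather than letting a model guess."""
    definition = SEMANTIC_LAYER[metric]
    return f"SELECT {definition['expression']} AS {metric} FROM {definition['table']}"


missing = unknown_metrics(["net_revenue", "churn_rate"])
query = render_query("net_revenue")
print(missing)
print(query)
```

A request for an undefined metric such as `churn_rate` is flagged instead of being answered with an invented formula, which is the failure mode the "hallucinated" data product warning refers to.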

How do Snowflake, Databricks, and Fivetran compare in 2026?

The "big three" of the data stack have pivoted their entire product roadmaps toward AI integration, but they serve distinct roles in the modern pipeline development lifecycle.

Snowflake (Snowflake Cortex): Focuses on "AI-native SQL," allowing engineers to call LLMs directly within their data warehouse. It’s the leader for teams that want to keep AI workloads close to their governed data.

Databricks (Mosaic AI): Remains the preferred choice for large-scale AI and ML workloads, often proving 15–30% more cost-effective for heavy compute tasks due to its optimized Lakehouse architecture.

Fivetran: Has evolved from a simple "mover" of data into an intelligent automation platform that uses AI to handle the long tail of SaaS connectors, reducing the need for custom-built ingestion scripts.

What are the risks of AI-automated pipelines?

The primary risk is a lack of confidence in the final output; as of early 2026, only 39% of technology leaders are confident that their current AI investments will yield a positive financial impact. This skepticism often stems from "black box" logic—when an AI agent optimizes a pipeline for cost but inadvertently causes data drift.

To mitigate this, the 2026 data stack integrates an "audit layer" by design. Observability tools like Monte Carlo have become inseparable from the transformation layer, providing the real-time guardrails necessary to ensure that as development accelerates, quality does not degrade.
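A guardrail of this kind can be as simple as comparing each batch against a baseline. The sketch below is a generic illustration and does not use any vendor's API; the `BatchStats` fields and both thresholds are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class BatchStats:
    row_count: int
    null_count: int  # nulls in one monitored column

    @property
    def null_rate(self) -> float:
        # Treat an empty batch as fully null so it cannot pass silently.
        return self.null_count / self.row_count if self.row_count else 1.0


def audit(baseline: BatchStats, current: BatchStats,
          max_row_drop: float = 0.2, max_null_increase: float = 0.05) -> list[str]:
    """Return human-readable violations; an empty list means the batch passes."""
    violations = []
    if current.row_count < baseline.row_count * (1 - max_row_drop):
        violations.append("row count dropped more than allowed")
    if current.null_rate - baseline.null_rate > max_null_increase:
        violations.append("null rate rose more than allowed")
    return violations


baseline = BatchStats(row_count=1000, null_count=10)
healthy = audit(baseline, BatchStats(row_count=980, null_count=12))
degraded = audit(baseline, BatchStats(row_count=700, null_count=140))
print(healthy)
print(degraded)
```

Running a check like this after every automated change gives a concrete signal when an agent's cost optimization has altered the data it was supposed to leave intact.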

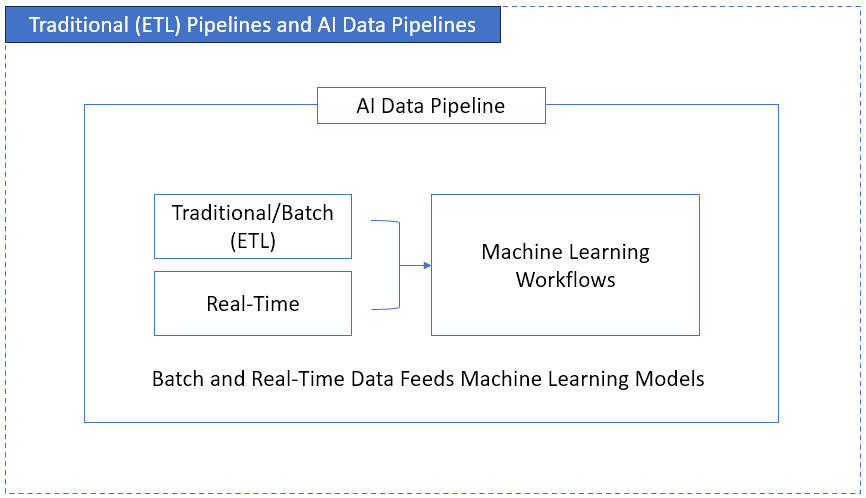

How is MLOps merging with traditional data engineering?

The boundaries between data engineering and machine learning operations (MLOps) are dissolving as pipelines increasingly serve real-time inference models rather than just static dashboards. By mid-2026, the demand for ML-integrated data pipelines has forced a reconciliation between the speed of model delivery and the stability of data governance.

Traditionally, data engineers focused on moving data, and data scientists focused on the models. This "walled garden" approach led to significant latency. AI-orchestration tools now allow for "feature stores" that are automatically populated and maintained by autonomous pipelines. When a data engineer updates a source column, the AI-driven MLOps layer automatically assesses whether model retraining is necessary, closing the loop between data quality and model performance.
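One common way to decide whether a changed column warrants retraining is to measure how far its distribution has moved. The sketch below uses the population stability index (PSI) as that measure. PSI is a swapped-in technique chosen for illustration, not something the tools above are confirmed to use, and the 0.2 threshold is a widely quoted rule of thumb rather than a fixed standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 5) -> float:
    """Population stability index between two samples of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant feature

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp out-of-range values into the first or last bin.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_retraining(expected, actual, threshold=0.2):
    """Flag retraining when the feature has drifted past the threshold."""
    return psi(expected, actual) > threshold


training = [float(i) for i in range(100)]
shifted = [v + 50 for v in training]
print(needs_retraining(training, training))
print(needs_retraining(training, shifted))
```

An identical sample scores a PSI of zero, while the shifted sample empties the lower bins and pushes the score well past the threshold.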

What role does AI play in FinOps for data pipelines?

Data cloud costs have historically been the most volatile line item in tech budgets, but AI is now providing the predictive guardrails known as "AI-driven FinOps." High-performing data teams in 2026 use ML models to forecast compute demand and automatically throttle low-priority jobs during peak pricing periods.
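The throttling policy described above can be sketched as a simple scheduling rule. In this minimal example the forecast price is taken as an input rather than produced by a model, and the job names, priority scale, and both thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    priority: int  # higher means more important


def plan_hour(jobs: list[Job], forecast_price: float,
              peak_price: float = 3.0, min_priority_at_peak: int = 5):
    """Split jobs into those to run now and those to defer for this hour."""
    if forecast_price < peak_price:
        return list(jobs), []
    run = [j for j in jobs if j.priority >= min_priority_at_peak]
    defer = [j for j in jobs if j.priority < min_priority_at_peak]
    return run, defer


jobs = [Job("billing_rollup", 9), Job("log_backfill", 2), Job("ml_features", 6)]
off_peak_run, off_peak_defer = plan_hour(jobs, forecast_price=1.5)
peak_run, peak_defer = plan_hour(jobs, forecast_price=4.0)
print([j.name for j in peak_run], [j.name for j in peak_defer])
```

Keeping the policy separate from the forecast means the forecasting model can be replaced without touching the scheduling rule.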

Predictive performance tuning is no longer a manual task. Modern Snowflake and Databricks implementations leverage AI agents that analyze query history to suggest—or even implement—the most cost-effective clustering keys and warehouse sizing. This autonomous optimization is reducing cloud spend by an average of 15% for enterprise-scale pipelines, effectively funding the AI tools themselves.

Why is the "Human-in-the-Loop" model still essential?

Despite the rise of agentic systems, the "human-in-the-loop" (HITL) model remains the only defense against the cascading logic errors that occur when AI interprets complex business rules incorrectly. Enterprise data is often messy, legacy-bound, and filled with "tribal knowledge" that LLMs cannot extract from raw tables alone.

The 2026 data professional acts more like a judge than a court reporter. They review "draft" architectures proposed by AI, verify that compliance masking (like GDPR or CCPA) is applied at the root, and tune the prompts that govern automated schema changes. AI provides the velocity, but humans provide the vector—ensuring that the speed of delivery never compromises the integrity of the data.
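A human-in-the-loop gate for automated schema changes might look like the following sketch. The change kinds and the routing rule are assumptions made for illustration: only additive changes that do not touch PII are applied automatically, and everything else waits for a reviewer.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPLY = "auto_apply"
    NEEDS_REVIEW = "needs_review"


@dataclass
class SchemaChange:
    kind: str  # "add_column", "drop_column", or "change_type"
    column: str
    touches_pii: bool = False


def route(change: SchemaChange) -> Decision:
    """Only additive, non-PII changes skip human review in this sketch."""
    if change.kind == "add_column" and not change.touches_pii:
        return Decision.AUTO_APPLY
    return Decision.NEEDS_REVIEW


proposals = [
    SchemaChange("add_column", "loyalty_tier"),
    SchemaChange("add_column", "email", touches_pii=True),
    SchemaChange("drop_column", "legacy_code"),
]
decisions = [route(p) for p in proposals]
for proposal, decision in zip(proposals, decisions):
    print(proposal.column, decision.value)
```

The default is review, so a change kind the rule does not recognize is never applied silently.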

Frequently Asked Questions

Can AI completely replace the role of a Data Engineer?

No, AI is shifting the role from manual scriptwriting to architectural oversight and knowledge engineering. While AI handles the execution of ETL tasks, humans are still required to define business logic, ensure data ethics, and manage the semantic layers that AI depends on.

Is AI-augmented data engineering more expensive?

While initial licensing for AI-native platforms like Snowflake Cortex or Databricks involves higher token-based costs, the reduction in operational overhead is significant. Leading enterprises report 20–25% lower operational overhead by automating repetitive maintenance and tuning tasks.

What is the best tool for a small team to start with AI pipelines?

For smaller teams, managed services like Fivetran and low-code platforms provide the fastest time-to-value. These tools allow lean teams to leverage pre-built AI automation for ingestion and basic transformations without needing a dedicated team of MLOps engineers.

The acceleration of data pipeline development in 2026 is ultimately about closing the gap between data collection and business action. By offloading the mechanical nuances of ETL to intelligent agents, data engineers can finally focus on what matters: delivering the high-quality, governed data that fuels the modern enterprise.