Building a Self-Hosted AI Incident Response & RCA Stack

Learn to build an open-source AIOps stack using Prometheus, Loki, and Local LLMs. Reduce incident toil without the SaaS price tag or cloud token bills.

Shivram Natarajan • May 8, 2026

Incidents are expensive, but commercial AIOps tools, with their opaque pricing and per-token cloud bills, can be even worse. For DevOps engineers and SREs at small-to-mid-sized companies, the "AI revolution" often feels like a choice between manual toil and vendor lock-in. However, as we move through 2026, local Large Language Models (LLMs) and open-source observability have matured to a tipping point. You can now build a credible, AI-assisted incident response stack for the cost of a single virtual machine.

This guide is about pragmatism. We aren't here to "revolutionize" your workflow with hype; we're here to cut the first 15 minutes of context-gathering during a 3 AM PagerDuty call. By leveraging self-hosted tools like Prometheus, Loki, Ollama, and n8n, you can build a pipeline that detects anomalies, summarizes chaotic alert storms, and proposes root cause hypotheses—all without sending sensitive log data to a third-party API or burning your cloud budget.

Our core principles are simple:

Open-Source first: Every tool must be FOSS or have a usable self-hosted community edition.

No SaaS lock-in: Everything runs on your infrastructure (VM, homelab, or K8s).

Hard-capped costs: Priority goes to local LLMs (Ollama, vLLM) to keep token costs at zero.

Minimal hardware: Designed to run on a single machine with 32GB RAM and a consumer GPU (or even CPU-only).

The Architecture at a Glance

A functional AI incident stack follows a linear flow from raw data to actionable insight. The "AI" isn't a replacement for the stack; it's the intelligent glue that sits between your alerting and your notification channel.

    [ Sources ]      [ Storage Layer ]          [ Logic & AI Layer ]      [ Notification ]
    -----------      -----------------          --------------------      ----------------
    Apps/K8s  -----> Prometheus (Metrics) ----> Alertmanager   ----.      [ Mattermost ]
    Logs      -----> Loki (Logs)          ----> n8n / Python   ----+----> [ Rocket.Chat ]
    Traces    -----> Tempo (Traces)       ----> Ollama (LLM)   ----'      [ Slack Hook ]
                                          ----> pgvector (RAG)

In this architecture, raw telemetry flows into standard open-source storage backends. When an alert triggers, an orchestration layer (like n8n or a Python webhook) intercepts it, fetches relevant context from the logs and metrics, and asks a local LLM to interpret the mess before pinging your on-call engineer.

Layer 1: The Observability Foundation

You cannot analyze what you do not measure. For a self-hosted stack, resource efficiency is as important as feature depth.

| Tool Category | Recommended (Lean Stack) | Heavy/Unified Alternative | License Note |
|---|---|---|---|
| Metrics | VictoriaMetrics | Prometheus / Mimir | VictoriaMetrics: Apache 2.0 |
| Logs | Grafana Loki | OpenSearch / Quickwit | Loki: AGPL-3.0 |
| Traces | Grafana Tempo | Jaeger / SigNoz | Tempo: AGPL-3.0 |
| Console | Grafana | SigNoz | Grafana: AGPL-3.0 |

The Opinionated Recommendation: If you are running on a single VM, use VictoriaMetrics for metrics and Loki for logs. VictoriaMetrics typically offers better compression and lower CPU overhead than stock Prometheus, while Loki indexes only labels rather than the full log text, which makes it significantly lighter on disk than Elasticsearch or OpenSearch. If you want a "single pane of glass" that handles all three with a modern UI, look at SigNoz (MIT License), which provides an integrated experience similar to Datadog but is fully self-hostable.

Layer 2: Alerting & Incident Detection

The LLM is only as good as the context it receives. Traditional Alertmanager alerts are often too brief ("Service down"). To feed an AI pipeline, we need tools that can gather "evidence" before the human arrives.

Keep (Open-Source AIOps): Directly relevant to this guide, Keep (MIT License) acts as a unified alert management platform. It allows you to connect multiple providers and map alerts to workflows.

Robusta: If you are running on Kubernetes, Robusta (MIT License) is essential. It intercepts Prometheus alerts and automatically attaches pod logs, graph snapshots, or even kubectl output to the alert notification.

Grafana OnCall: For those moving away from PagerDuty, the open-source version of Grafana OnCall (AGPL-3.0) provides the on-call rotations and scheduling required to ensure the right person gets the LLM's summary.

Layer 3: Running LLMs Locally

This is the core cost-saving section. Running a local LLM means you can summarize 10,000 log lines without worrying about a $50 OpenAI bill.

Recommended Inference Engines

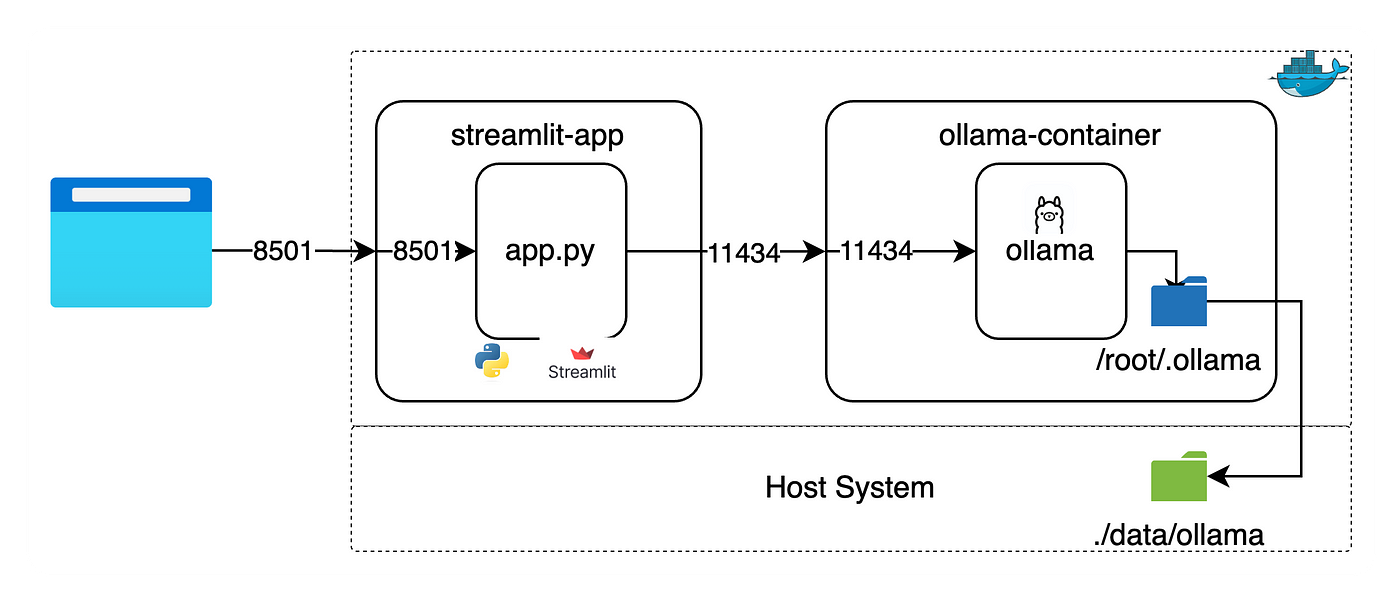

Ollama: The gold standard for ease of use. It handles model downloads, serves pre-quantized models, manages GPU offload, and exposes a simple REST API. Best for 90% of use cases.

vLLM: If you are processing a high volume of concurrent alerts, vLLM offers significantly higher throughput via PagedAttention.

LocalAI: A drop-in OpenAI-compatible API that can wrap multiple backends.
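As a rough illustration of what "a simple REST API" means in practice, the sketch below sends a single prompt to a local Ollama instance using only the Python standard library. The `/api/generate` endpoint and port 11434 are Ollama defaults; the model tag `llama3.1:8b`, the temperature setting, and the function names are assumptions made for this example.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_generate_request(prompt: str, model: str = "llama3.1:8b") -> dict:
    """Build the JSON body for a single, non-streaming completion."""
    return {
        "model": model,  # assumption: this tag has already been pulled
        "prompt": prompt,
        "stream": False,  # one JSON object back instead of a stream of chunks
        "options": {"temperature": 0.2},  # assumption: keep summaries conservative
    }


def summarize(prompt: str, timeout_s: float = 120.0) -> str:
    """POST the prompt to a running Ollama instance and return the generated text."""
    body = json.dumps(build_generate_request(prompt)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=timeout_s) as resp:
        return json.loads(resp.read())["response"]
```

Because vLLM and LocalAI expose OpenAI-compatible endpoints instead, swapping engines later mostly means changing the URL and the request shape in these two functions.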

Model Selection & Hardware Needs

| Model | Min Hardware | Strength |
|---|---|---|
| Llama 3.1 8B | 8GB VRAM / 16GB RAM | Fast, great for alert summarization. |
| Mistral-7B-v0.3 | 8GB VRAM / 12GB RAM | Excellent logic; good for "if-this-then-that" triage. |
| Qwen 2.5 32B | 24GB VRAM / 32GB RAM | Heavy hitter for complex RCA and code analysis. |
| Phi-3.5 Mini | CPU-only (4GB RAM) | Surprisingly capable for simple log classification. |

Cost Note: Running Llama 3.1 8B on CPU is free but ~10x slower than GPU. It’s fine for asynchronous postmortem drafting, but too slow for live incident triage.

Layer 4: The LLM Incident Pipeline

The magic happens when you "glue" your alerts to your LLM. Don't just send the alert text; send a Context Bundle.
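One way to make the Context Bundle concrete is a small data class that collects the evidence and renders it into a prompt. This is only a sketch: the class name, field names, and the 50-line log cap are assumptions for illustration, not part of any tool mentioned here.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """Everything the LLM gets to see about one alert (hypothetical structure)."""
    alert: str
    metrics: list[str] = field(default_factory=list)
    logs: list[str] = field(default_factory=list)
    max_log_lines: int = 50  # assumption: cap evidence to protect the context window

    def to_prompt(self) -> str:
        lines = ["Evidence:", f"- Alert: {self.alert}"]
        lines += [f"- Metrics: {m}" for m in self.metrics]
        # Keep only the most recent log lines so the prompt stays bounded.
        lines += [f'- Log: "{entry}"' for entry in self.logs[-self.max_log_lines:]]
        lines.append("Task: Provide a 2-sentence summary and 3 investigative next steps.")
        return "\n".join(lines)
```

Keeping the bundle as structured data until the last moment makes it easy to log exactly what the model was shown, which helps when a summary later turns out to be wrong.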

The Alert Summarization Flow

When an alert triggers, your orchestration layer (n8n or Python) should:

1. Identify the service and time window (T-minus 5 minutes).
2. Query Loki for error or warn level logs for that service.
3. Query VictoriaMetrics for related metrics (CPU, Memory, 5xx rates).
4. Send a prompt to Ollama.
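The first two steps above can be sketched as a function that builds the query parameters for Loki's `/loki/api/v1/query_range` endpoint. The `service` label name and the regex used to match error and warn lines are assumptions; adjust both to match how your logs are actually labeled.

```python
from datetime import datetime, timedelta, timezone


def loki_query_params(service: str, alert_time: datetime,
                      window_minutes: int = 5, limit: int = 50) -> dict:
    """Build query_range parameters for the window leading up to the alert."""
    start = alert_time - timedelta(minutes=window_minutes)
    # LogQL: select the service's streams, then keep lines mentioning error or warn.
    logql = f'{{service="{service}"}} |~ "(?i)(error|warn)"'
    return {
        "query": logql,
        # Loki accepts Unix epoch timestamps in nanoseconds.
        "start": str(int(start.timestamp()) * 1_000_000_000),
        "end": str(int(alert_time.timestamp()) * 1_000_000_000),
        "limit": str(limit),
        "direction": "backward",  # newest lines first
    }


if __name__ == "__main__":
    fired_at = datetime(2026, 1, 1, 3, 0, tzinfo=timezone.utc)
    print(loki_query_params("auth-service", fired_at))
```

The returned dictionary can be URL-encoded and sent as a GET request; a similar helper for the metrics backend would build a PromQL query over the same window.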

Prompt Snippet Example:

System: You are a Senior SRE. Summarize the following incident evidence.

Evidence:

- Alert: High Error Rate on 'auth-service'

- Metrics: 5xx errors spiked from 0.1% to 15% at 03:00 UTC.

- Logs: [log line: "Connection refused: database:5432"] [log line: "Max pool size reached"]

Task: Provide a 2-sentence summary and 3 investigative next steps.

Root Cause Analysis (RCA) Assistance

For deeper RCA, the LLM needs recent change context. By feeding your CI/CD deployment logs (e.g., "auth-service version 1.2.4 deployed at 02:55 UTC"), the LLM can make the connection: "The incident started 5 minutes after version 1.2.4 was deployed; the logs suggest a DB connection leak introduced in this version."
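A minimal sketch of that correlation step: given a list of recent deployments, keep the ones that landed shortly before the incident and turn them into evidence lines for the prompt. The tuple layout, the 30-minute lookback, and the function name are assumptions; in practice the deploy list would come from your CI/CD system.

```python
from datetime import datetime, timedelta, timezone


def recent_deploys(deploys: list[tuple[str, str, datetime]],
                   incident_start: datetime,
                   lookback: timedelta = timedelta(minutes=30)) -> list[str]:
    """Return evidence lines for deploys that landed shortly before the incident."""
    lines = []
    for service, version, deployed_at in deploys:
        gap = incident_start - deployed_at
        # Ignore deploys after the incident began or too far in the past.
        if timedelta(0) <= gap <= lookback:
            minutes = int(gap.total_seconds() // 60)
            lines.append(
                f"- Deploy: {service} version {version} deployed at "
                f"{deployed_at:%H:%M} UTC ({minutes} min before incident start)"
            )
    return lines


if __name__ == "__main__":
    incident = datetime(2026, 1, 1, 3, 0, tzinfo=timezone.utc)
    history = [("auth-service", "1.2.4", datetime(2026, 1, 1, 2, 55, tzinfo=timezone.utc))]
    print("\n".join(recent_deploys(history, incident)))
```

Stating the time gap explicitly in the evidence saves the model from doing timestamp arithmetic, which smaller models often get wrong.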

Layer 5: RAG for Incident Context

Vector databases allow the LLM to search your private knowledge—runbooks, past postmortems, and Slack history—without needing to retrain the model.

Storage: Use pgvector (Postgres extension). Since you likely already have Postgres for tools like Grafana or n8n, pgvector is the lowest-overhead way to add vector search.

Workflow:

1. Convert your Markdown runbooks into "embeddings" using a local model like nomic-embed-text via Ollama.
2. During an incident, embed the alert text and find the top-3 most similar runbook sections.
3. Inject those sections into the prompt: "Based on the internal runbook, for 'Database Connection Errors', you should first check the HAProxy status."
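To show what "top-3 most similar" means in step 2, here is a small in-memory version of the ranking using cosine similarity. The three-dimensional vectors and section titles are toy values invented for illustration; real embeddings have hundreds of dimensions, and in production pgvector would do this ranking inside Postgres using its cosine-distance operator (`<=>`).

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float],
          sections: list[tuple[str, list[float]]], k: int = 3) -> list[str]:
    """Return the titles of the k sections most similar to the query vector."""
    ranked = sorted(sections,
                    key=lambda s: cosine_similarity(query_vec, s[1]),
                    reverse=True)
    return [title for title, _ in ranked[:k]]


if __name__ == "__main__":
    # Toy embeddings, made up for this example.
    runbook = [
        ("Database Connection Errors", [0.9, 0.1, 0.0]),
        ("Disk Full", [0.0, 1.0, 0.0]),
        ("TLS Expiry", [0.0, 0.0, 1.0]),
        ("Pool Exhaustion", [0.8, 0.2, 0.1]),
    ]
    print(top_k([1.0, 0.1, 0.0], runbook))
```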

Layer 6: Glue Code & Orchestration

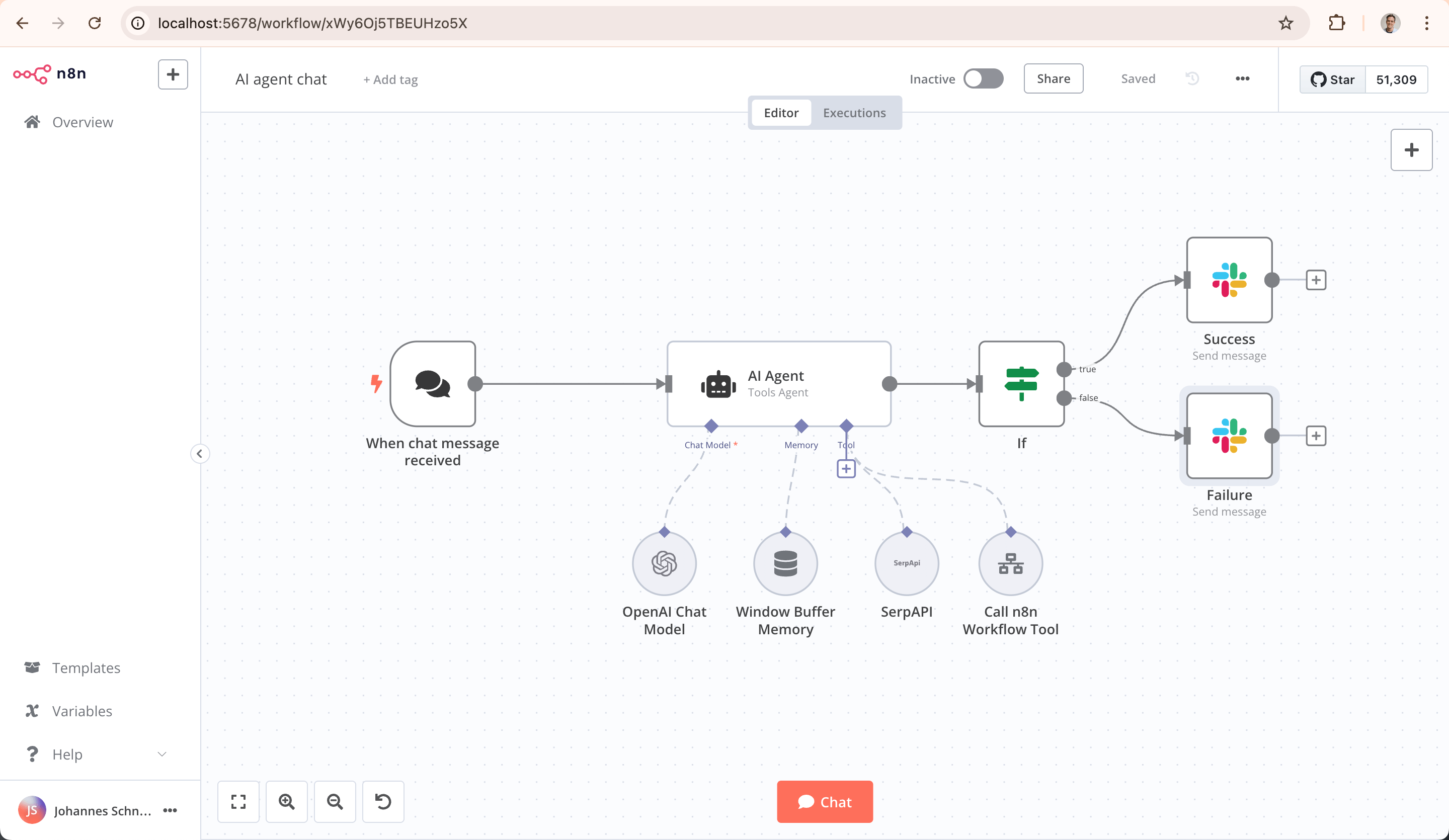

Avoid over-engineering. While LangChain is popular, a 100-line Python FastAPI service or an n8n (Fair-code license) workflow is often easier to debug.

Example n8n Flow:

Webhook: Receives Alertmanager JSON.

HTTP Request: Fetches last 50 error logs from Loki API.

Ollama Node: Processes the logs + alert with a "Summarize" prompt.

Mattermost Node: Posts the summary, a link to the Grafana dashboard, and the relevant runbook link to the #incidents channel.
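If you take the Python route instead of n8n, the webhook step boils down to pulling the useful fields out of the Alertmanager payload. The sketch below follows the standard Alertmanager webhook format (`alerts`, `labels`, `annotations`, `startsAt`); the presence of a `service` label and a `summary` annotation is an assumption about how your alert rules are written.

```python
def extract_incidents(payload: dict) -> list[dict]:
    """Pull the fields the pipeline needs from an Alertmanager webhook body."""
    incidents = []
    for alert in payload.get("alerts", []):
        if alert.get("status") != "firing":
            continue  # skip "resolved" notifications
        labels = alert.get("labels", {})
        annotations = alert.get("annotations", {})
        incidents.append({
            "alertname": labels.get("alertname", "unknown"),
            "service": labels.get("service", "unknown"),  # assumption: rules set this label
            "summary": annotations.get("summary", ""),
            "started_at": alert.get("startsAt"),
        })
    return incidents
```

Each returned dictionary gives the later steps what they need: the service name for the Loki query and the start time for the lookback window.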

Layer 7: ChatOps & Notifications

To truly reduce toil, the engineer should be able to interact with the system from their chat app. Use Mattermost or Rocket.Chat for a fully self-hosted experience. By setting up a Slash Command (e.g., /incident-bot ask "Why is the auth-service failing?"), you can trigger a Python script that gathers more context and returns the LLM's latest hypothesis.
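A sketch of the handler behind such a slash command is shown below. It assumes the Mattermost convention of receiving the command arguments in a `text` form field and replying with a JSON object containing `response_type` and `text`; the `ask` subcommand and the placeholder answer are hypothetical, and a real handler would call the context-gathering and LLM steps at the marked line.

```python
import shlex


def handle_slash_command(form: dict) -> dict:
    """Turn the form fields of a slash-command POST into a JSON-serializable reply."""
    try:
        args = shlex.split(form.get("text", ""))
    except ValueError:  # e.g. unbalanced quotes in the user's input
        args = []
    if len(args) >= 2 and args[0] == "ask":
        question = " ".join(args[1:])
        # Placeholder: gather context and query the LLM here.
        answer = f"Gathering context for: {question}"
        return {"response_type": "in_channel", "text": answer}
    # Only the caller sees usage hints, so the channel stays quiet.
    return {"response_type": "ephemeral", "text": 'Usage: /incident-bot ask "<question>"'}
```

Because LLM calls can take longer than a chat server is willing to wait for a reply, it may be safer to acknowledge immediately and post the actual hypothesis through an incoming webhook once it is ready.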

Putting It All Together: A Reference Deployment

For a small team, we recommend a single "Management VM" with the following specs:

Specs: 8 vCPU, 32GB RAM, 100GB NVMe.

Optional: NVIDIA RTX 3060 (12GB VRAM) for faster inference.

Monthly Cost: ~$30–$50 on providers like Hetzner or OVH for the CPU-only configuration; the optional GPU is not included in this figure.

Estimated Savings: A comparable SaaS stack (Datadog + PagerDuty + OpenAI API) for a small production environment can easily exceed $1,500/month. By self-hosting, you pay only for the compute.

Pitfalls & Honest Limitations

The Hallucination Problem: Local LLMs will occasionally make up log flags or non-existent metrics. Always ensure the bot includes links to the raw data and clearly labels "Hypotheses" vs "Facts."
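One cheap guard, offered as a sketch and not a complete answer to hallucination, is to check that anything the model puts in double quotes actually appears in the evidence it was given. The function name and the quoting convention are assumptions; the check only catches fabricated verbatim strings, not wrong reasoning.

```python
import re


def unsupported_quotes(llm_text: str, evidence: list[str]) -> list[str]:
    """Return quoted strings in the LLM output that appear nowhere in the evidence."""
    quoted = re.findall(r'"([^"]+)"', llm_text)
    return [q for q in quoted if not any(q in line for line in evidence)]


if __name__ == "__main__":
    evidence = ["Connection refused: database:5432", "Max pool size reached"]
    summary = 'The log "Max pool size reached" and the flag "--pool-timeout=0" suggest a leak.'
    print(unsupported_quotes(summary, evidence))
```

Anything the function returns can be flagged in the chat message or moved under the "Hypotheses" label before the summary is posted.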

Security & Privacy: If you decide to use a hosted fallback like Groq or Gemini, you must redact PII (Personally Identifiable Information) first. Use an open-source tool like Microsoft Presidio to mask emails or IP addresses before they leave your network.

Maintenance Burden: Remember that you are now the SRE for your on-call system. If the Management VM goes down during an incident, you are blind. Ensure your alerting for the incident stack itself is heartbeated to a separate, dead-simple monitoring tool (like a basic Uptime Kuma instance).

Closing Thoughts

Building a self-hosted AI stack isn't about replacing the engineer; it's about amplifying them. The value prop is simple: reducing the cognitive load during high-pressure moments. Start small. You don't need a 70B parameter model or a complex RAG pipeline on day one.

Pro-tip: Don't build all of this at once. Start with Prometheus + Loki + Ollama + a 50-line Python script that summarizes alerts. Build the "summarization" muscle first, then move into RAG and automated RCA. The goal is to spend less time digging through logs and more time actually fixing the problem.

Frequently Asked Questions

Can I run this without a GPU?

Yes, using GGUF-quantized models in Ollama, you can run 8B parameter models on a modern CPU at acceptable speeds (2–5 tokens per second). This is enough for non-urgent analysis, but you may find it frustrating for interactive "ChatOps" during a live outage.
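To put those speeds in perspective, the arithmetic below estimates how long a summary takes to generate. The 150-token summary length is an assumption, and the estimate ignores the time spent processing the prompt, which adds further delay on CPU.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to generate the output alone, ignoring prompt processing."""
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    for tps in (2, 5):
        # Assumption: a short incident summary is roughly 150 tokens.
        print(f"{tps} tok/s -> {generation_seconds(150, tps):.0f} s")
```

At 2 to 5 tokens per second, a 150-token summary takes between 30 and 75 seconds, which is tolerable for a postmortem draft but slow for a back-and-forth chat.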

How do I keep the LLM from seeing sensitive data?

The best approach is to keep everything on a private VPC with no public ingress. Since you are using a local LLM (Ollama), the data never leaves your server. For logs, you can implement a "filtering" step in your Python glue code to strip out common PII patterns before sending the text to the LLM.
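A minimal version of that filtering step might look like the sketch below, which masks email addresses and IPv4 addresses with regular expressions. The patterns and placeholder tokens are assumptions and deliberately simple: they will miss other kinds of PII, and the IPv4 pattern also matches four-part version strings.

```python
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def redact(line: str) -> str:
    """Mask emails and IPv4 addresses in a single log line."""
    line = EMAIL_RE.sub("<EMAIL>", line)
    return IPV4_RE.sub("<IP>", line)


if __name__ == "__main__":
    print(redact("login failed for bob@example.com from 10.0.0.12"))
```

Running every log line through `redact` before it enters the prompt keeps the rule in one place, so it also protects you if a hosted fallback is added later.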

What is the best model for SRE tasks?

As of mid-2026, Qwen 2.5 32B and Llama 3.3 70B (quantized to 4-bit) are the gold standards for reasoning. However, for 90% of alerting tasks like "summarize these 50 logs," a smaller 8B Llama 3.1 model is faster and perfectly adequate.

Is n8n really powerful enough for this?

Absolutely. n8n's "AI Agent" and "LangChain" nodes allow you to build complex logic without writing much code. It is excellent for visualising the flow of data from an alert to a chat message, making it easier for the whole team to understand how the AI is "thinking."

Shivram Natarajan is a Senior DevOps SMA at Experience.com. Based in Los Angeles, he focuses on building resilient, cost-effective infrastructure for high-growth platforms.