Cloud & DevOps 2026: The Rise of AI Platform Engineering

By 2026, 80% of top IT teams will integrate AI agents into DevOps. Discover how platform engineering, geopatriation, and AI assistants are reshaping the cloud.

Vinitha Ganesan • May 8, 2026

The era of traditional DevOps has given way to a period of AI-driven platform infrastructure, where manual intervention in delivery pipelines is increasingly viewed as technical debt. By 2026, the primary challenge for enterprises has shifted from "how do we ship faster" to "how do we govern the autonomous systems that are shipping for us." Cloud and DevOps are no longer separate departments but a unified capability focused on building self-healing, preemptive environments that align with both global geopolitical shifts and local regulatory demands.

How has Platform Engineering evolved in the age of AI?

The 2026 State of DevOps Report indicates that the rise of AI infra assistants has redefined the role of the platform engineer from a script-writer to a governance architect. In this landscape, platform engineering is the discipline of designing and maintaining internal developer platforms (IDPs) that automate not just the deployment, but the entire lifecycle management of an application using machine learning models.

According to a 2026 Red Hat analysis, over 80% of high-performing IT organizations have now integrated AI agents into their platform engineering strategies. These agents don't just alert users to failures; they predict outages before they occur by analyzing cluster telemetry in real time. This shift advances the original goal of platform engineering, reducing the "cognitive load" on developers, by abstracting away the complexities of Kubernetes and multi-cloud connectivity through conversational and intent-based interfaces.
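As a rough illustration of predicting a failure from telemetry, the sketch below fits a straight line to recent usage samples and extrapolates when a resource would reach its limit. It is a deliberately simple stand-in for the machine learning models such agents would use; the `Sample` type, the function name, and the sample values are hypothetical and not taken from any real product.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Sample:
    minute: float    # minutes since the start of the observation window
    used_pct: float  # resource usage (disk, memory, ...) in percent


def minutes_until_exhaustion(
    samples: Sequence[Sample], limit_pct: float = 100.0
) -> Optional[float]:
    """Least-squares line through the samples, extrapolated to limit_pct.

    Returns the minutes remaining after the last sample, or None when
    there are too few samples or usage is flat or falling.
    """
    n = len(samples)
    if n < 2:
        return None
    mean_t = sum(s.minute for s in samples) / n
    mean_u = sum(s.used_pct for s in samples) / n
    var_t = sum((s.minute - mean_t) ** 2 for s in samples)
    if var_t == 0:
        return None  # all samples share one timestamp; no trend to fit
    cov = sum((s.minute - mean_t) * (s.used_pct - mean_u) for s in samples)
    slope = cov / var_t
    if slope <= 0:
        return None  # usage is not growing, so no exhaustion is predicted
    intercept = mean_u - slope * mean_t
    hit_time = (limit_pct - intercept) / slope
    return max(0.0, hit_time - samples[-1].minute)


# Hypothetical disk usage growing 2 points every 10 minutes.
history = [Sample(0, 70), Sample(10, 72), Sample(20, 74), Sample(30, 76)]
remaining = minutes_until_exhaustion(history)
```

A real agent would weigh many signals at once and account for seasonality; the point here is only that a forecast, rather than a threshold alert, gives the platform time to act before the limit is reached.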

What is "Geopatriation" and why does it matter in 2026?

One of the most disruptive trends identified by Gartner for 2026 is the concept of "geopatriation"—the movement of data and applications out of global public clouds and into local, sovereign, or private data centers. This is a direct response to increasing geopolitical tensions and stricter data sovereignty laws that require national data to stay within specific borders.

For DevOps teams, geopatriation introduces a major complexity hurdle: the need for "write once, deploy anywhere" capability across radically different infrastructure providers. This has fueled the adoption of sovereign cloud frameworks where the DevOps pipeline must automatically detect the destination region’s compliance requirements and adjust the workload configuration, encryption keys, and residency flags without human intervention. The goal is a "compliance-as-code" standard that is enforceable regardless of whether the target is AWS, a local provider, or an on-premises sovereign stack.
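To make the idea of a pipeline that adjusts a workload to its destination region more concrete, here is a minimal sketch. The region names, key aliases, and the `RegionPolicy` and `Workload` types are all hypothetical; a real pipeline would load such policies from a reviewed, version-controlled source rather than an in-code table.

```python
from dataclasses import dataclass, replace
from typing import Dict, Tuple


@dataclass(frozen=True)
class RegionPolicy:
    residency_zone: str
    kms_key_alias: str
    allow_cross_border_replication: bool


# Hypothetical policy table keyed by deployment region.
POLICIES: Dict[str, RegionPolicy] = {
    "eu-central": RegionPolicy("EU", "alias/eu-sovereign", False),
    "eu-west": RegionPolicy("EU", "alias/eu-sovereign", False),
    "us-east": RegionPolicy("US", "alias/us-default", True),
}


@dataclass(frozen=True)
class Workload:
    name: str
    region: str
    residency_zone: str = ""
    kms_key_alias: str = ""
    replicate_to: Tuple[str, ...] = ()


def apply_policy(workload: Workload) -> Workload:
    """Return a copy of the workload adjusted to its region's policy."""
    policy = POLICIES.get(workload.region)
    if policy is None:
        # Fail closed: an unknown region must never deploy with defaults.
        raise ValueError(f"no compliance policy for region {workload.region!r}")
    replicas = workload.replicate_to
    if not policy.allow_cross_border_replication:
        # Keep only replica targets inside the same residency zone.
        replicas = tuple(
            r for r in replicas
            if r in POLICIES
            and POLICIES[r].residency_zone == policy.residency_zone
        )
    return replace(
        workload,
        residency_zone=policy.residency_zone,
        kms_key_alias=policy.kms_key_alias,
        replicate_to=replicas,
    )


billing = Workload("billing", "eu-central", replicate_to=("us-east", "eu-west"))
adjusted = apply_policy(billing)
```

In this example the replica in `us-east` is dropped because the EU policy forbids cross-border replication, while the one in `eu-west` is kept.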

Should you choose Serverless or Containers for 2026 workloads?

The decision between serverless and containerized environments now rests on a utilization crossover point rather than a pure architectural preference. As of May 2026, data suggests that serverless (Functions as a Service) remains the superior economic choice for workloads running at less than 35% sustained utilization.

| Decision Factor | Serverless (FaaS) | Managed Containers (K8s/ECS) | Hybrid Strategy (Serverless Containers) |
|---|---|---|---|
| Utilization Threshold | Best for <35% sustained load; excels at spiky, unpredictable traffic patterns. | Most economical for >40% steady-state utilization with consistent traffic. | Ideal for baseline steady-state load with serverless bursts for demand peaks. |
| Operational Burden | Minimal; the cloud provider manages the execution environment and runtime patching. | Moderate/High; teams must manage pod scaling, cluster versions, and resource limits. | Balanced; leverages container portability with serverless-style auto-scaling logic. |
| Cold Start Impact | Significant; 200ms–800ms latencies are common for non-provisioned concurrency. | Zero-to-low; pods remain warm, though initial scaling of new nodes can take minutes. | Medium; 2026 optimizations have reduced "container-start" to under 500ms. |
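
The utilization crossover can be worked out with simple arithmetic. The sketch below assumes a simplified cost model: serverless cost scales linearly with utilization, while a container deployment costs the same whether busy or idle. The dollar figures are invented purely for illustration and are not real provider prices.

```python
def break_even_utilization(
    container_monthly_cost: float, serverless_cost_at_full_load: float
) -> float:
    """Utilization (0..1) at which both options cost the same.

    Assumes serverless cost = utilization * cost at 100% load, and a
    flat container cost regardless of utilization.
    """
    if serverless_cost_at_full_load <= 0:
        raise ValueError("serverless_cost_at_full_load must be positive")
    return container_monthly_cost / serverless_cost_at_full_load


def cheaper_option(
    utilization: float,
    container_monthly_cost: float,
    serverless_cost_at_full_load: float,
) -> str:
    """Return 'serverless' or 'containers' for a sustained utilization."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    serverless_cost = utilization * serverless_cost_at_full_load
    if serverless_cost < container_monthly_cost:
        return "serverless"
    return "containers"


# Hypothetical prices: $300/month for an always-on container service,
# $800/month if the same work ran on functions at 100% utilization.
crossover = break_even_utilization(300.0, 800.0)   # 0.375
low_load = cheaper_option(0.30, 300.0, 800.0)      # "serverless"
high_load = cheaper_option(0.45, 300.0, 800.0)     # "containers"
```

With these made-up numbers the break-even lands at 37.5%, inside the 35% to 40% band in the table. Real pricing adds request fees, data transfer, and reserved-capacity discounts, so the crossover for a given workload should be computed from actual bills.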

The "serverless containers" market (e.g., AWS Fargate, Google Cloud Run) is projected to reach $4.29 billion by the end of 2026, representing a CAGR of nearly 30%. This growth suggests that many enterprises are moving toward a middle-ground architecture that provides the portability of Docker with the "no-ops" scaling of Lambda.

How is Preemptive Cybersecurity changing the CI/CD pipeline?

The old paradigm of "detect and respond" has been replaced by preemptive cybersecurity, a trend Gartner highlights as critical for organizations managing AI-driven systems. In a 2026 DevOps environment, security is no longer just a "gate" at the end of the pipeline; it is a continuous, AI-led scanning process that looks for "weak signals" in the codebase, supply chain, and runtime cluster.

Preemptive security tools use large language models (LLMs) to perform predictive threat modeling. Instead of waiting for a known CVE to be published, these systems analyze the logic flows in new pull requests to identify potential zero-day vulnerabilities or backdoors. This move toward "secure-by-design" infrastructure means that by the time a developer submits code, an AI has already simulated millions of attack scenarios against that specific logic and either approved it or provided a remediation plan.

The Rise of the AI Infrastructure Assistant

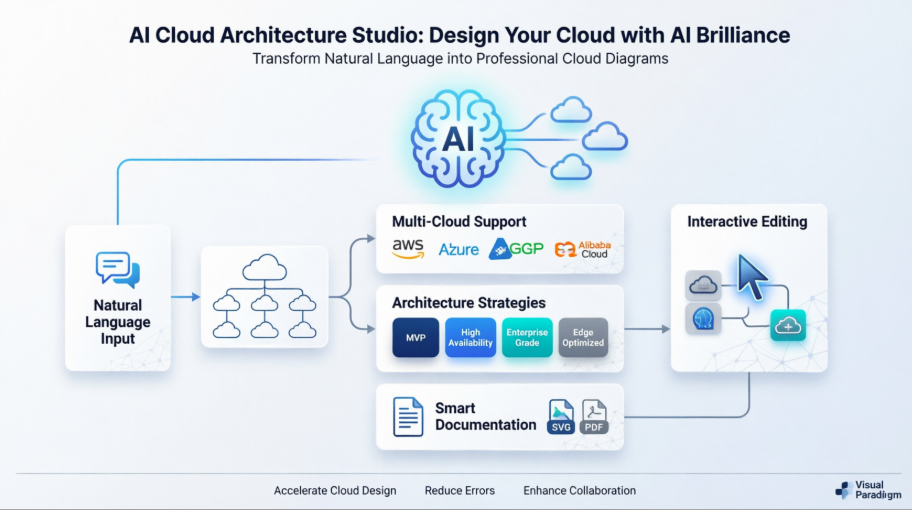

Infrastructure-as-Code (IaC) is undergoing its most significant transformation since the launch of Terraform. Over late 2025 and early 2026, conversational infrastructure assistants became standard in enterprise DevOps toolchains. These tools allow engineers to describe desired states in natural language (for example, "Deploy a PCI-compliant backend cluster in Frankfurt with automatic failover to London"), after which the assistant generates, tests, and validates the HCL or YAML files.

These assistants, such as those integrated into Puppet and Red Hat OpenShift, are now capable of managing "Day 2" operations. They monitor cost fluctuations across providers and can initiate "spot instance scavenging" or "resource right-sizing" without human triggers. The financial impact is substantial: early adopters have reported up to a 22% reduction in monthly cloud waste by allowing AI to manage the fine-grained tuning of cluster resources that humans typically overlook.
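Resource right-sizing, one of the "Day 2" tasks mentioned above, can be sketched as a percentile calculation. The version below recommends a CPU request from observed usage plus headroom. The function names, the 95th-percentile choice, the 20% headroom, and the sample data are assumptions made for illustration, not the method of any particular assistant.

```python
import math
from typing import Sequence


def percentile(values: Sequence[int], pct: int) -> int:
    """Nearest-rank percentile of a non-empty sequence (pct in 1..100)."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def recommend_cpu_request(
    usage_millicores: Sequence[int],
    headroom_pct: int = 20,
    min_request: int = 50,
) -> int:
    """Recommend a CPU request: p95 of usage plus headroom, with a floor."""
    p95 = percentile(usage_millicores, 95)
    target = math.ceil(p95 * (100 + headroom_pct) / 100)
    return max(min_request, target)


# Hypothetical samples: steady 100m usage with one brief spike to 400m.
usage = [100] * 19 + [400]
current_request = 500
recommended = recommend_cpu_request(usage)
saved_millicores = current_request - recommended
```

Using a high percentile instead of the maximum keeps a single spike from inflating the request, at the cost of occasional throttling during such spikes. That trade-off is a policy decision a team would set for the assistant rather than leave implicit.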

Why is "Compliance-as-Code" the new gold standard?

Regulatory environments in 2026 have become so fragmented that manual compliance checks are effectively impossible. Between the EU’s evolving AI Act and various regional data sovereignty mandates, DevOps teams must now treat compliance as a first-class citizen of their code.

Modern frameworks now embed compliance checks directly into the GitOps workflow. Every time a change is made to the infrastructure, a "digital twin" of the environment is spun up in a sandbox, and a suite of thousands of automated regulatory tests is run. If the change would violate a residency rule or an encryption requirement, the merge is blocked. This "self-governing" infrastructure ensures that the enterprise is "audit-ready" at every single commit, rather than scrambling for weeks before a semi-annual review.
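The merge-blocking behavior described above can be pictured as a set of small rule functions run against each proposed change. The sketch below is a simplified assumption of how such a gate might look; the rule names, the fields of the change record, and the allowed-region list are hypothetical, and a production setup would more likely use a dedicated policy engine.

```python
from typing import Callable, Dict, List, Optional

Change = Dict[str, object]
Rule = Callable[[Change], Optional[str]]

# Hypothetical set of regions where EU personal data may be stored.
EU_REGIONS = {"eu-central", "eu-west"}


def residency_rule(change: Change) -> Optional[str]:
    """EU personal data must stay in an approved EU region."""
    if change.get("data_class") == "eu-personal" and change.get("region") not in EU_REGIONS:
        return f"eu-personal data may not be placed in {change.get('region')!r}"
    return None


def encryption_rule(change: Change) -> Optional[str]:
    """All storage must be encrypted at rest."""
    if not change.get("encrypted_at_rest", False):
        return "storage must be encrypted at rest"
    return None


RULES: List[Rule] = [residency_rule, encryption_rule]


def evaluate(change: Change, rules: List[Rule]) -> List[str]:
    """Run every rule and collect the violation messages."""
    violations = []
    for rule in rules:
        message = rule(change)
        if message is not None:
            violations.append(message)
    return violations


def may_merge(change: Change, rules: List[Rule]) -> bool:
    """A change may merge only when no rule reports a violation."""
    return not evaluate(change, rules)


proposed = {"region": "us-east", "data_class": "eu-personal", "encrypted_at_rest": False}
problems = evaluate(proposed, RULES)
```

Collecting every violation, instead of stopping at the first, gives the author a complete list to fix in one pass and leaves a record that can serve as audit evidence.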

Summary: Building the 2026 Cloud Strategy

Success in the 2026 cloud and DevOps landscape requires a fundamental pivot from a focus on speed to a focus on governance and resilience. The tools that won the 2020s (basic CI/CD and manual container management) are now the baseline, not the advantage.

To stay competitive, IT leaders must:

Invest in Platform Engineering: Shift from custom, snowflake environments to standardized internal developer platforms.

Adopt AI-Led Security: Replace traditional scanning with preemptive, predictive threat modeling.

Plan for Geopatriation: Ensure that your architectural choices (like Kubernetes and standardized IaC) allow you to move workloads between global cloud and local sovereign providers with minimal friction.

Leverage AI Assistants: Use infra-assistants to solve the "talent gap" and manage the increasing complexity of multi-cloud environments.

The next three years will be defined by those who can harness the power of AI to manage their infrastructure while staying nimble enough to navigate the shifting geopolitical landscape of global data.

Frequently Asked Questions

Can AI completely replace the DevOps Engineer?

No, but it significantly changes the role. AI handles the repetitive tasks of configuration, monitoring, and basic troubleshooting. The DevOps engineer in 2026 has become a Platform Architect, focusing on setting the high-level policy, security constraints, and governance models that the AI then executes.

Is multi-cloud still relevant with the rise of Geopatriation?

Multi-cloud is more relevant than ever, but it has evolved into Hybrid-Sovereign Multi-cloud. Organizations no longer use multiple clouds just for "price shopping," but rather to meet specific legal requirements in different jurisdictions, using standardized platform tools like Red Hat OpenShift or Google Anthos to maintain consistency.

How do "Cold Starts" affect serverless adoption in 2026?

Major cloud providers have largely mitigated the cold start problem using SnapStart and Instant-Restore technologies, which resume functions from a snapshot of a pre-initialized state instead of initializing them from scratch on each cold start. While absolute zero-latency is still the domain of dedicated containers, the gap has closed sufficiently that 90% of web APIs can now run on serverless without a noticeable performance impact.