RAG and Vector Databases: How AI Uses Your Own Data (2026)

Learn how Retrieval-Augmented Generation (RAG) and vector databases reduce AI hallucinations by grounding models in your own private data and context.

Vishnu S • May 8, 2026

You have likely chatted with an AI assistant and realized it has no knowledge of your specific business operations. Large language models (LLMs) are great at general knowledge, but they operate in a vacuum regarding your internal reports and latest product updates.

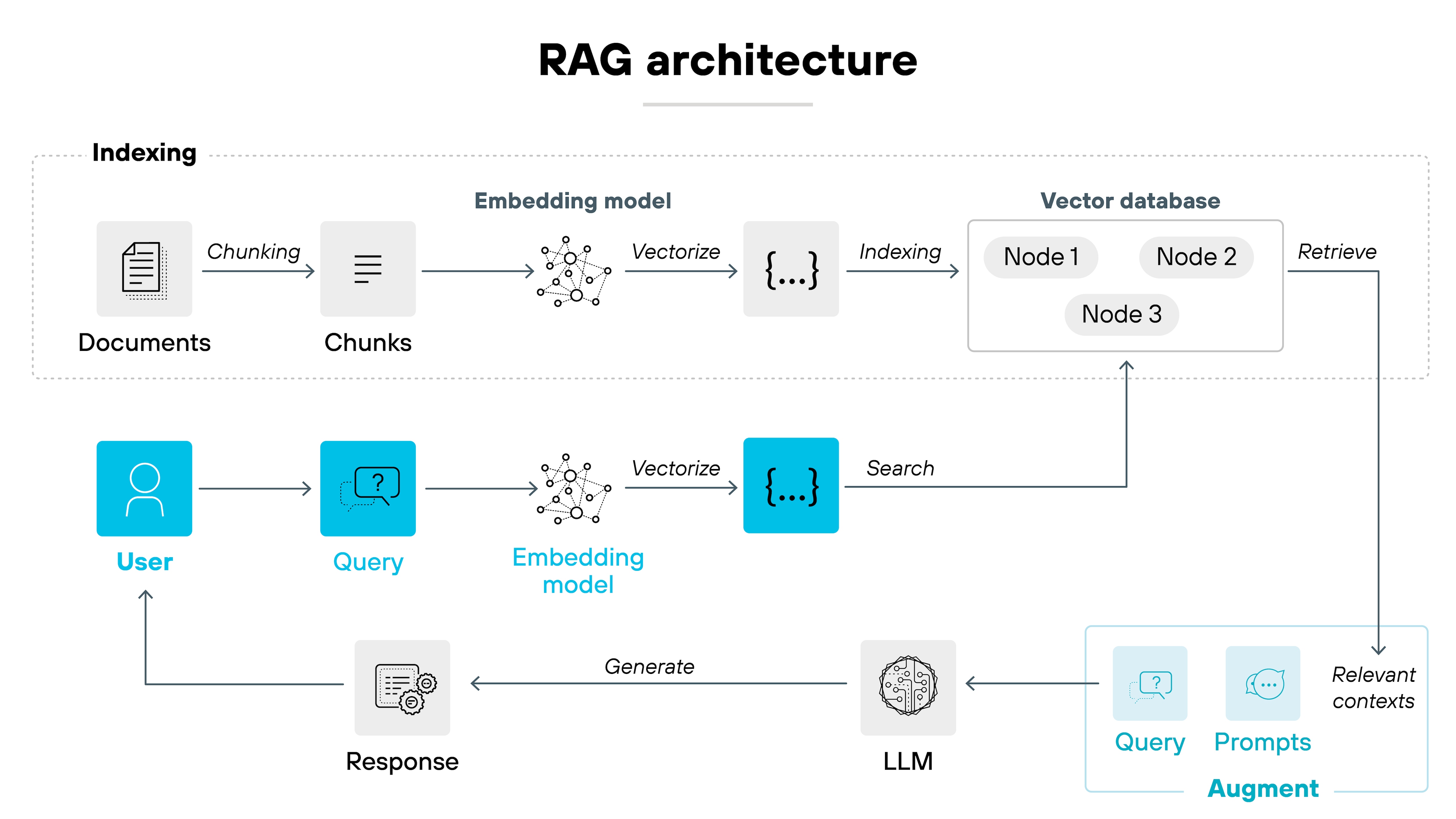

Retrieval-augmented generation (RAG) solves this by allowing AI to access current, private data before generating a response. This process reduces AI hallucinations by grounding answers in real-time evidence, turning a generic chatbot into a specialized subject-matter expert.

What is the AI memory cutoff?

Large language models are trained on massive datasets up to a specific date, meaning they are unaware of information created after their training window closed. Without a bridge to current data, these models will often "hallucinate" or fabricate answers when asked about recent events or private company files.

For a Python developer or data engineer, this creates a significant hurdle: you cannot simply ask a standard LLM to debug a proprietary internal library or summarize a meeting from yesterday. The model lacks the context, and its training is static. To make AI useful in a professional environment, you must provide it with a way to look up facts it hasn't learned.

How does RAG give AI a "library card"?

RAG connects an LLM to an external knowledge base to pull in relevant facts before answering a prompt. Instead of relying solely on its internal training, the system searches your documents for the specific information needed to answer a user's question accurately.

1. Search: The system scans your knowledge base for relevant documents.
2. Context: The most useful snippets are retrieved and added to your original prompt.
3. Generation: The AI reads the provided context and writes a response based on those facts.

This approach is powerful because it uses the AI's reasoning capabilities without forcing it to "memorize" your data. Research suggests that advanced RAG mechanisms can contribute to a 64% reduction in hallucination rates by providing the model with auditable, factual sources.
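The three steps above can be sketched in plain Python. This is a toy illustration, not a real retriever: the knowledge base text, the function names (`search`, `build_prompt`, `generate`), and the word-overlap scoring are all assumptions made for the example, and `generate` is a placeholder where an actual LLM call would go.

```python
import re

# Hypothetical in-memory knowledge base (illustrative text only).
KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days of receiving the returned item.",
    "The Model X router supports firmware updates through the admin dashboard.",
    "Support hours are Monday to Friday, 9am to 5pm.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Step 1 (Search): rank documents by how many words they share with the query."""
    query_tokens = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query: str, snippets: list[str]) -> str:
    """Step 2 (Context): prepend the retrieved snippets to the original question."""
    context = "\n".join(f"- {snippet}" for snippet in snippets)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


def generate(prompt: str) -> str:
    """Step 3 (Generation): placeholder for an LLM call; it only reports the prompt size."""
    return f"[LLM would answer here using a {len(prompt)}-character prompt]"


question = "How long do refunds take?"
snippets = search(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, snippets)
print(prompt)
print(generate(prompt))
```

Word overlap stands in for the search step only to keep the sketch dependency-free. A production system would typically replace it with embedding-based similarity search.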

Why are vector databases necessary for RAG?

Traditional keyword search looks for exact term matches, but a vector database identifies content based on conceptual meaning. By transforming text into high-dimensional "vectors" called embeddings (mathematical representations of meaning), the database can find relevant information even if the user's query doesn't match the exact wording of the documentation.

In a vector space, the word "car" is mathematically close to "vehicle" or "automobile." When a developer builds a RAG system, the vector database acts as the high-speed search engine that handles this semantic understanding. It ensures that when a customer asks for a "cozy gift," the system retrieves products related to comfort and warmth, rather than just products with the specific word "cozy" in the title.
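To make "mathematically close" concrete, here is a minimal sketch using cosine similarity, one common way to compare vectors. The three-dimensional vectors below are hand-picked for illustration and do not come from any real embedding model; real embeddings have hundreds or thousands of dimensions.

```python
import math

# Hand-picked toy vectors, purely illustrative (not output from a real model).
TOY_EMBEDDINGS = {
    "car": [0.90, 0.80, 0.10],
    "vehicle": [0.85, 0.75, 0.20],
    "automobile": [0.88, 0.82, 0.12],
    "banana": [0.10, 0.20, 0.95],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: near 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(word: str, embeddings: dict[str, list[float]]) -> list[str]:
    """Return the other words sorted from most to least similar to `word`."""
    target = embeddings[word]
    others = [w for w in embeddings if w != word]
    return sorted(
        others,
        key=lambda w: cosine_similarity(target, embeddings[w]),
        reverse=True,
    )


for other in nearest("car", TOY_EMBEDDINGS):
    score = cosine_similarity(TOY_EMBEDDINGS["car"], TOY_EMBEDDINGS[other])
    print(f"car vs {other}: {score:.3f}")
```

With these toy values, "vehicle" and "automobile" score far higher against "car" than "banana" does. A vector database performs the same kind of ranking, at scale, for every query.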

What does RAG look like in the real world?

A primary application for RAG is scaling customer support for e-commerce or technical platforms where manual lookups are too slow. Instead of support agents digging through PDFs, a RAG-powered assistant can instantly pull the exact troubleshooting steps from a manual and draft a response.

| Industry | How RAG works | Practical Benefit |
|---|---|---|
| Loans | Systems parse complex lending criteria, local regulations, and applicant credit data to assess eligibility. | Loan officers accelerate underwriting by instantly identifying missing documentation or high-risk indicators in applications. |
| Insurance | AI scans vast policy libraries and previous claims to verify coverage limits for specific incidents. | Claims adjusters cut processing time by 30% by automating the comparison of repair bids against policy fine print. |
| Healthcare | Symptom descriptions trigger searches through medical journals and patient history. | Doctors surface relevant case studies and contraindications in seconds. |
| Legal | Natural language queries scan thousands of contracts for specific clauses or risks. | Lawyers reduce manual discovery time by automating the initial document review. |

How do you implement RAG with Python?

As a Python developer, the most effective way to understand RAG is to see the code that transforms flat files into a searchable knowledge base. By using openai for embeddings and generation, and chromadb for vector storage, you can build a minimal indexing and retrieval pipeline in a short script.

The following implementation demonstrates how an insurance firm might index a specific policy document and query it for coverage exclusions.

```python
import os

import chromadb
from chromadb.utils import embedding_functions
from openai import OpenAI

# Read the API key from the environment instead of hard-coding it in source.
api_key = os.environ["OPENAI_API_KEY"]
client = OpenAI(api_key=api_key)

# Embed documents and queries with OpenAI's text-embedding-3-small model.
# Without this, Chroma falls back to its own default embedding model.
openai_ef = embedding_functions.OpenAIEmbeddingFunction(
    api_key=api_key,
    model_name="text-embedding-3-small",
)

# chromadb.Client() keeps data in memory only; it is lost when the process exits.
chroma_client = chromadb.Client()
collection = chroma_client.get_or_create_collection(
    name="insurance_policies",
    embedding_function=openai_ef,
)

# 1. Indexing: store the document (a single chunk here) with an ID and metadata
policy_text = "Standard Homeowners Policy: Coverage excludes flood damage but includes fire and wind."
doc_id = "policy_001"

collection.add(
    documents=[policy_text],
    ids=[doc_id],
    metadatas=[{"department": "claims"}],
)

# 2. Retrieval: find the most relevant stored text for the question
user_query = "Is flood damage covered under my homeowners policy?"
results = collection.query(
    query_texts=[user_query],
    n_results=1,
)
# Results are nested per query, so [0][0] is the top match for the first query.
retrieved_context = results["documents"][0][0]

# 3. Generation: pass the retrieved context to the LLM along with the question
prompt = f"Context: {retrieved_context}\n\nQuestion: {user_query}\nAnswer:"
response = client.chat.completions.create(
    model="gpt-4o",
    messages=[{"role": "user", "content": prompt}],
)

print(response.choices[0].message.content)
```

Storing each document, or each chunk of a longer document, under a unique ID gives you full control over what the AI "reads" before it responds. Because the knowledge base lives outside the model, your proprietary data remains the consistent source of truth even if your primary model is updated or replaced.
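The example above indexes one short sentence, so no chunking is needed. For longer documents, the segmentation step might look like the following sketch. The helper names (`chunk_text`, `make_records`), the word-based splitting, the chunk sizes, and the ID format are all assumptions chosen for illustration; many other chunking strategies exist.

```python
def chunk_text(text: str, chunk_size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into word-based chunks; consecutive chunks share `overlap` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once a chunk has reached the end of the text.
        if start + chunk_size >= len(words):
            break
    return chunks


def make_records(doc_id: str, text: str, **chunk_kwargs) -> dict:
    """Build parallel lists of IDs, chunk texts, and metadata for one document."""
    chunks = chunk_text(text, **chunk_kwargs)
    return {
        "ids": [f"{doc_id}_chunk_{i:03d}" for i in range(len(chunks))],
        "documents": chunks,
        "metadatas": [{"source": doc_id, "chunk_index": i} for i in range(len(chunks))],
    }


# Stand-in for a long policy document: 100 placeholder words.
sample = " ".join(f"word{i}" for i in range(100))
records = make_records("policy_001", sample, chunk_size=40, overlap=10)
for chunk_id, chunk in zip(records["ids"], records["documents"]):
    print(chunk_id, len(chunk.split()), "words")
```

The dictionary keys mirror the `ids`, `documents`, and `metadatas` arguments passed to `collection.add` earlier, so the records could be handed to that call directly. The overlap is a design choice: it reduces the chance that a sentence split across a chunk boundary becomes unretrievable.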

Why does this approach matter for your business?

RAG and vector databases make AI practical by ensuring it is grounded in your data and your specific operational world. This architecture allows companies to deploy AI that is more accurate and easier to keep current, without the high cost of retraining a custom model from scratch.

When your knowledge base changes, the AI's answers can change with it. You re-index the updated documents in your vector database, and the next time a user asks a question, the retrieval step pulls the newest version of the truth. For developers, this provides a scalable, maintainable way to build AI tools that users can actually trust.
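The update behavior can be illustrated with a small stand-in for a vector store. `ToyIndex` is a hypothetical class written for this sketch: it ranks by word overlap rather than embeddings, and its `upsert` method only mimics the insert-or-overwrite operation that stores such as Chroma expose (check your database's documentation for the exact call).

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class ToyIndex:
    """Hypothetical stand-in for a vector database: maps document IDs to text."""

    def __init__(self) -> None:
        self._docs: dict[str, str] = {}

    def upsert(self, doc_id: str, text: str) -> None:
        """Insert a new document, or overwrite the one already stored under doc_id."""
        self._docs[doc_id] = text

    def query(self, question: str) -> str:
        """Return the stored text that shares the most words with the question."""
        question_tokens = tokenize(question)
        return max(
            self._docs.values(),
            key=lambda text: len(question_tokens & tokenize(text)),
        )


index = ToyIndex()
index.upsert("policy_001", "Homeowners policy: flood damage is excluded.")
before = index.query("Is flood damage covered?")

# The policy changes, so the same ID is re-indexed with the new wording.
index.upsert("policy_001", "Homeowners policy: flood damage is covered up to the policy limit.")
after = index.query("Is flood damage covered?")

print("before:", before)
print("after: ", after)
```

No model is retrained at any point. The same question returns different context because the stored document changed, which is the property the paragraph above describes.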