Will Humans Lose the Ability to Think Deeply?

Deep thinking won’t disappear, but it won’t happen by default. Here’s how AI, feeds, and dopamine loops reshape focus, and practical ways to protect it in 2026.

Waquar Alam • May 11, 2026

Deep thinking isn’t vanishing; it’s drifting toward rarity. In an economy tuned for notifications and novelty, the people who can sustain uninterrupted concentration will make better decisions, design sturdier systems, and keep their sense of self intact. The question isn’t “Will we lose it?” but “Who will protect it—and how?”

What does “deep thinking” mean in a digital age?

Deep thinking today means holding a complex idea in mind for 45–90 minutes without switching tasks, then producing a novel synthesis—a design, a model, a clear argument—that survives scrutiny. It’s not the absence of tools; it’s the presence of intent, structure, and time to push past first-order answers.

Most knowledge work never reaches that depth because our environment is built for responsive micro-tasks. Slack pings, email counters, sprint boards, and dashboards reward quick action. You can feel productive skimming issues and generating fast takes, but you rarely touch the parts of a problem where value actually compounds.

As a solution architect, I see this on software teams: a mid-sprint Slack thread spawns five tangents—alerts, quick fixes, scope creep—while the actual root cause waits untouched. The pattern is consistent: more motion, less conceptual progress.

Is our attention actually shrinking—or just being sliced thinner?

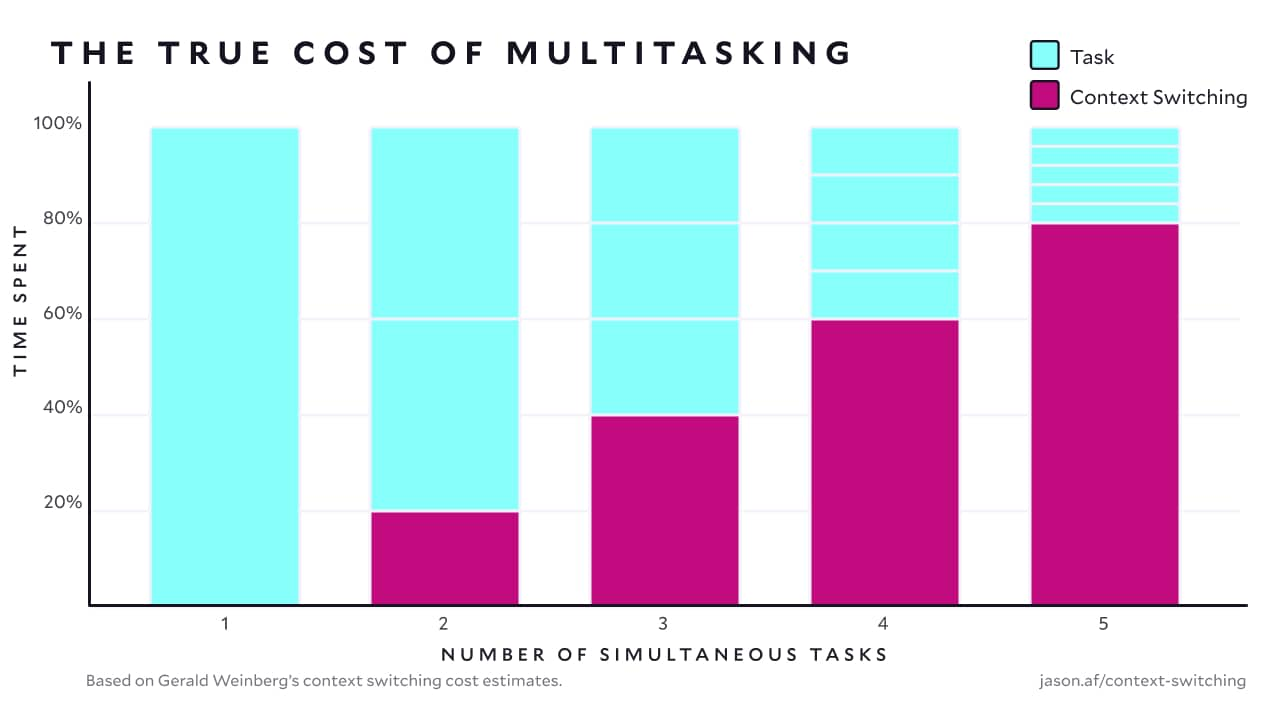

Evidence shows that frequent interruptions and context switching fragment work and increase stress, even when we move faster. A classic UCI study found that interrupted people compensate by working “with more speed and stress,” paying for the rush with higher frustration and time pressure (UCI, 2008). The cost isn’t just time; it’s the mental residue that lingers after every switch.

In a 2016 in‑situ study of online multitasking, UCI researchers tied shorter focus duration to personality traits and situational factors, showing how easily our attention state shifts under everyday conditions—shorter focus isn’t innate; it’s context-sensitive (UCI, 2016). And a theoretical framework from the same group maps “Focus” alongside boredom and rote work, reinforcing that focus is a state you can engineer (UCI, 2023).

If attention feels thinner, it’s because we keep cutting it. Every notification trains the brain to expect novelty on demand, making stillness feel like failure rather than the staging ground for insight.

How do dopamine loops and short‑form content shape thought?

Short‑form feeds optimize for variable rewards: a flick of the thumb, a fresh hit of novelty, and another micro‑surprise. That loop reinforces rapid scanning and instant appraisal. Over time, executive control—your ability to hold a goal and ignore noise—has to fight a stronger habit: “just one more.”

The danger isn’t entertainment; it’s displacement. Ten minutes of highlights before bed becomes 40 minutes, pushing out reading or reflection. The loop lowers your tolerance for delayed payoff—long arguments, complicated code, messy drafts—where deep thinking actually pays.

Still, there’s a humane upside: short‑form can introduce ideas, voices, and communities you would never meet otherwise. Used deliberately, it’s a discovery surface that feeds long‑form learning rather than replacing it.

Does AI make us mentally lazy—or smarter?

AI can either shrink or expand your thinking depending on how you use it. If you outsource problem framing and accept first answers, you’re practicing cognitive offloading of the hardest part—defining the question. That atrophies taste and judgment. If you use AI to draft, simulate, and get unstuck while you keep ownership of the frame, it multiplies your cognitive reach.

Real‑world contrast:

A junior developer pastes an error into a model and copies the fix. It works, but they don’t refine their mental model. The next incident repeats with a new symptom.

A senior engineer asks the model to propose two hypotheses, outlines a test plan, then instruments the system to validate. The tool accelerates exploration while the human strengthens causal reasoning.

The same holds for product strategy, legal research, or design. The line isn’t “use AI vs. don’t.” The line is whether you keep agency over goals and tradeoffs.

Where does cognitive overload actually come from?

Overload is not just “too much work.” It’s too many inputs without coherent priority. Notifications, meetings, tabs, and chat threads create simultaneous partial obligations. Your brain spins up and down, holding open loops while none of them reach a clean resolution.

A telling pattern on teams: three standing meetings, a reactive support channel, and a backlog that blends bugs with strategy. People shift contexts every 10–15 minutes. Output looks responsive but thin—lots of comments, few decisive changes to the product or process.

The antidote starts with ruthless sequence. One problem at a time, solved to a clear definition of done, beats five problems “in flight” that never land.

What practices rebuild attention without quitting tech?

The goal is to keep the good—the reach of networks and AI—while restoring the conditions for depth. Here’s a practical stack that works in modern teams.

Schedule two 90‑minute deep‑work blocks on your calendar, protected as if they were with a customer. Use them for one cognitively heavy task—design doc, root‑cause analysis, data modeling.

Batch notifications. Turn on Do Not Disturb for the block; check comms at set intervals (say, top of every hour outside deep work).

Use AI as a thinking scaffold, not an answer machine. Ask for outlines, counter‑arguments, or test cases. Keep ownership of the frame.

Offload memory, not meaning. Use a scratchpad (physical or digital) to park ideas and prevent tab sprawl.

Implement “finish Fridays” or “landing hours” where the sole goal is to close open loops.

Replace status meetings with written updates. Reading once, asynchronously, beats repeating the same talking points live.
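The first two practices can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the 9:00 to 17:00 workday, the two block start times, and the names `plan_day` and `BLOCK_LENGTH` are all assumptions chosen for the example. It lays out two 90-minute blocks and lists the top-of-hour check-ins that fall outside them.

```python
from datetime import datetime, timedelta

BLOCK_LENGTH = timedelta(minutes=90)  # block length taken from the practice above


def plan_day(day_start, day_end, block_starts):
    """Return (deep_blocks, checkins) for one workday.

    deep_blocks: list of (start, end) tuples, each BLOCK_LENGTH long.
    checkins: top-of-hour times between day_start and day_end that do
    not fall inside any deep-work block.
    """
    blocks = [(start, start + BLOCK_LENGTH) for start in block_starts]

    # Find the first top-of-hour at or after the start of the day.
    t = day_start.replace(minute=0, second=0, microsecond=0)
    if t < day_start:
        t += timedelta(hours=1)

    checkins = []
    while t <= day_end:
        # A check-in is allowed only when it is outside every block.
        if not any(start <= t < end for start, end in blocks):
            checkins.append(t)
        t += timedelta(hours=1)
    return blocks, checkins


# Hypothetical day: blocks at 09:30 and 14:00 (illustrative values only).
day = datetime(2026, 5, 11)
blocks, checkins = plan_day(
    day_start=day.replace(hour=9),
    day_end=day.replace(hour=17),
    block_starts=[day.replace(hour=9, minute=30), day.replace(hour=14)],
)

for start, end in blocks:
    print(f"Deep work: {start:%H:%M} to {end:%H:%M}")
print("Check comms at:", ", ".join(f"{t:%H:%M}" for t in checkins))
```

With these example values, the check-ins at 10:00, 14:00, and 15:00 drop out because they land inside a block. The point of the sketch is the rule, not the schedule: comms get fixed slots, and the blocks never share them.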

Shallow engagement vs. deep work (what actually changes)

| How it works | Early warning signs | What your brain learns | Output quality | Tool use that helps |
|---|---|---|---|---|
| Rapid context switching trains quick appraisal but weak retention | Restless checking, impulse to skim | Preference for novelty over depth; lower tolerance for ambiguity | Many artifacts; thin reasoning | Timers, DND, single‑task sprints |
| Deep work blocks train sustained attention and better chunking | Calm urgency; fewer switches | Stronger schemas; improved error detection through reflection | Fewer artifacts; higher‑leverage decisions | Outliner prompts, code tests, paper notes |

How should teams redesign work for depth without losing speed?

Teams don’t need to choose between agility and depth. They need to be explicit about when the group moves fast and when it moves slow on purpose.

Time‑box reaction. Keep a live support window (say, 60 minutes in the afternoon) and protect deep‑work mornings.

Write first, meet second. Draft the decision, circulate for comment, then hold a short meeting to ratify or revise.

Create “focus budgets.” Every sprint, earmark hours for one gnarly problem per person.

Gate pings. Route interrupts through a triage role, not the whole team.

Measure what matters. Reward shipped decisions, reduced rework, and long‑term defects avoided—not raw message volume.

The result is fewer last‑minute scrambles and more compounding clarity in architecture, policy, or design.

What are the real risks—and real benefits—of our tools?

Risks: dopamine loops crowd out long‑form thought; overreliance on AI weakens problem framing; context switching burns attention you cannot easily restore. These patterns threaten the slow cognition that drives original work.

Benefits: search and AI expand your surface area; communities widen perspective; automation frees time for hard thinking. Used with intent, the very tools that distract can amplify what makes you distinct.

The balance is behavioral, not theological. Don’t moralize your tech; instrument your day. Keep the frame and the sequence, then select tools that reinforce them.

A field protocol for protecting depth this week

Try this five‑day experiment and measure the difference in the quality of your output and your sense of agency.

Monday: Pick one problem worth 90 minutes. Block the time; declare DND; draft a tight problem statement.

Tuesday: Use AI to propose three solution paths; add risks; choose one; write a short plan.

Wednesday: Build or analyze for 90 minutes; log every switch you prevent; note one place where instant gratification tempted you.

Thursday: Share a written update; invite comments; run one focused review.

Friday: Close loops; document decisions; reflect 10 minutes on what made depth easier or harder.
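Wednesday’s log doesn’t need special software. As one possible sketch, assuming you record entries by hand during or right after the block, a small Python class is enough; `FocusLog`, its two entry kinds, and the sample notes are hypothetical and only illustrate the idea.

```python
from collections import Counter


class FocusLog:
    """Tiny in-memory log for the five-day experiment (illustrative only)."""

    # "prevented": a switch you noticed and declined.
    # "tempted": a moment where instant gratification pulled at you.
    KINDS = ("prevented", "tempted")

    def __init__(self):
        self.entries = []

    def record(self, day, kind, note=""):
        if kind not in self.KINDS:
            raise ValueError(f"kind must be one of {self.KINDS}")
        self.entries.append((day, kind, note))

    def summary(self):
        """Count entries per (day, kind) pair."""
        return Counter((day, kind) for day, kind, _ in self.entries)


# Made-up sample entries for a Wednesday block.
log = FocusLog()
log.record("Wed", "prevented", "left the chat tab closed")
log.record("Wed", "prevented", "ignored an email badge")
log.record("Wed", "tempted", "wanted to check a feed mid-analysis")

for (day, kind), count in sorted(log.summary().items()):
    print(day, kind, count)
```

The counts give Friday’s ten-minute reflection something concrete to look at, which is easier than reconstructing the week from memory.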

Frequently asked questions

Is the “damage” to attention permanent?

Attention is plastic across the lifespan. Habits that reward staying power—long reads, deliberate practice, single‑task blocks—restore your capacity. The key is consistency over intensity; two protected hours every workday beat a once‑a‑week marathon.

How much deep work do I actually need?

For most knowledge workers, 8–12 hours a week of real depth moves the needle. The number matters less than the sequence: one problem framed, advanced, and landed cleanly beats scattered motion across five.
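A quick back-of-the-envelope check shows how daily habits map onto that weekly range. The sketch below assumes a five-day workweek; the function names are hypothetical, and the scenarios simply reuse figures mentioned elsewhere in this article (90-minute blocks, two protected hours a day).

```python
TARGET_RANGE = (8, 12)  # weekly hours of depth cited above


def weekly_depth_hours(minutes_per_block, blocks_per_day, days_per_week=5):
    """Convert a daily deep-work habit into hours per week."""
    return minutes_per_block * blocks_per_day * days_per_week / 60


def within_target(hours, target=TARGET_RANGE):
    low, high = target
    return low <= hours <= high


scenarios = {
    "one 90-minute block per day": weekly_depth_hours(90, 1),
    "two protected hours per day": weekly_depth_hours(120, 1),
    "two 90-minute blocks per day": weekly_depth_hours(90, 2),
}

for name, hours in scenarios.items():
    status = "within" if within_target(hours) else "outside"
    print(f"{name}: {hours:.1f} h/week ({status} the 8-12 h range)")
```

Under these assumptions, one 90-minute block a day comes to 7.5 hours a week, two protected hours a day comes to 10, and two 90-minute blocks a day comes to 15. The last is a generous ceiling rather than a minimum, so missing a block now and then still leaves you inside the range.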

What if my job is inherently reactive?

Create containment. Cluster reactive work into a predictable window and guard at least one morning for depth. If you lead the team, add a rotating triage role so others can sustain focus without guilt or missed alerts.
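A rotating triage role can be as simple as a round-robin list. The following is a minimal sketch under the assumption of a weekly rotation; the team names and the `triage_rotation` helper are made up for illustration.

```python
from itertools import cycle, islice


def triage_rotation(team, weeks):
    """Assign one triage owner per week, cycling through the team in order."""
    if not team:
        raise ValueError("team must have at least one member")
    return list(islice(cycle(team), weeks))


# Hypothetical three-person team over five weeks.
team = ["Ana", "Ben", "Chen"]
schedule = triage_rotation(team, weeks=5)

for week, owner in enumerate(schedule, start=1):
    print(f"Week {week}: {owner} handles interrupts")
```

Publishing the schedule ahead of time matters more than the mechanism: everyone knows who absorbs interrupts this week, and everyone else knows they are allowed not to.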

The bet we make with our attention

We won’t lose the ability to think deeply, but we won’t keep it by default. Those who treat attention as an asset (structured time, clear frames, tool discipline) will keep the skill and the serenity that come with it. Everyone else will scroll.

— Waquar Alam, Solution Architect, Experience.com